KishinNext opened a new issue, #27306:

URL: https://github.com/apache/airflow/issues/27306

### Official Helm Chart version

1.7.0 (latest released)

### Apache Airflow version

2.4.1

### Kubernetes Version

v4.5.7

### Helm Chart configuration

The latest Helm chart for Airflow

### Docker Image customisations

None

### What happened

I'm using the latest Airflow Helm chart on an EKS cluster created with the configuration below, but the install fails with this error.

### Error

```

install.go:192: [debug] Original chart version: ""
install.go:209: [debug] CHART PATH: /home/ec2-user/.cache/helm/repository/airflow-1.7.0.tgz
client.go:310: [debug] Starting delete for "airflow-broker-url" Secret
client.go:339: [debug] secrets "airflow-broker-url" not found
client.go:128: [debug] creating 1 resource(s)
client.go:310: [debug] Starting delete for "airflow-fernet-key" Secret
client.go:339: [debug] secrets "airflow-fernet-key" not found
client.go:128: [debug] creating 1 resource(s)
client.go:310: [debug] Starting delete for "airflow-redis-password" Secret
client.go:339: [debug] secrets "airflow-redis-password" not found
client.go:128: [debug] creating 1 resource(s)
client.go:128: [debug] creating 30 resource(s)
client.go:310: [debug] Starting delete for "airflow-run-airflow-migrations" Job
client.go:339: [debug] jobs.batch "airflow-run-airflow-migrations" not found
client.go:128: [debug] creating 1 resource(s)
client.go:540: [debug] Watching for changes to Job airflow-run-airflow-migrations with timeout of 10m0s
client.go:568: [debug] Add/Modify event for airflow-run-airflow-migrations: ADDED
client.go:607: [debug] airflow-run-airflow-migrations: Jobs active: 0, jobs failed: 0, jobs succeeded: 0
client.go:568: [debug] Add/Modify event for airflow-run-airflow-migrations: MODIFIED
client.go:607: [debug] airflow-run-airflow-migrations: Jobs active: 1, jobs failed: 0, jobs succeeded: 0
Error: INSTALLATION FAILED: failed post-install: timed out waiting for the condition
helm.go:84: [debug] failed post-install: timed out waiting for the condition
INSTALLATION FAILED
main.newInstallCmd.func2
	helm.sh/helm/v3/cmd/helm/install.go:141
github.com/spf13/cobra.(*Command).execute
	github.com/spf13/[email protected]/command.go:872
github.com/spf13/cobra.(*Command).ExecuteC
	github.com/spf13/[email protected]/command.go:990
github.com/spf13/cobra.(*Command).Execute
	github.com/spf13/[email protected]/command.go:918
main.main
	helm.sh/helm/v3/cmd/helm/helm.go:83
runtime.main
	runtime/proc.go:250
runtime.goexit
	runtime/asm_amd64.s:1571

```

### PostgreSQL describe pod

```

Name:             airflow-postgresql-0
Namespace:        airflow
Priority:         0
Service Account:  default
Node:             <none>
Labels:           app.kubernetes.io/component=primary
                  app.kubernetes.io/instance=airflow
                  app.kubernetes.io/managed-by=Helm
                  app.kubernetes.io/name=postgresql
                  controller-revision-hash=airflow-postgresql-d9b49657b
                  helm.sh/chart=postgresql-10.5.3
                  role=primary
                  statefulset.kubernetes.io/pod-name=airflow-postgresql-0
Annotations:      kubernetes.io/psp: eks.privileged
Status:           Pending
IP:
IPs:              <none>
Controlled By:    StatefulSet/airflow-postgresql
Containers:
  airflow-postgresql:
    Image:      docker.io/bitnami/postgresql:11.12.0-debian-10-r44
    Port:       5432/TCP
    Host Port:  0/TCP
    Requests:
      cpu:      250m
      memory:   256Mi
    Liveness:   exec [/bin/sh -c exec pg_isready -U "postgres" -h 127.0.0.1 -p 5432] delay=30s timeout=5s period=10s #success=1 #failure=6
    Readiness:  exec [/bin/sh -c -e exec pg_isready -U "postgres" -h 127.0.0.1 -p 5432
[ -f /opt/bitnami/postgresql/tmp/.initialized ] || [ -f /bitnami/postgresql/.initialized ]
] delay=5s timeout=5s period=10s #success=1 #failure=6
    Environment:
      BITNAMI_DEBUG:                        false
      POSTGRESQL_PORT_NUMBER:               5432
      POSTGRESQL_VOLUME_DIR:                /bitnami/postgresql
      PGDATA:                               /bitnami/postgresql/data
      POSTGRES_USER:                        postgres
      POSTGRES_PASSWORD:                    <set to the key 'postgresql-password' in secret 'airflow-postgresql'>  Optional: false
      POSTGRESQL_ENABLE_LDAP:               no
      POSTGRESQL_ENABLE_TLS:                no
      POSTGRESQL_LOG_HOSTNAME:              false
      POSTGRESQL_LOG_CONNECTIONS:           false
      POSTGRESQL_LOG_DISCONNECTIONS:        false
      POSTGRESQL_PGAUDIT_LOG_CATALOG:       off
      POSTGRESQL_CLIENT_MIN_MESSAGES:       error
      POSTGRESQL_SHARED_PRELOAD_LIBRARIES:  pgaudit
    Mounts:
      /bitnami/postgresql from data (rw)
      /dev/shm from dshm (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-58lzq (ro)
Volumes:
  data:
    Type:       PersistentVolumeClaim (a reference to a PersistentVolumeClaim in the same namespace)
    ClaimName:  data-airflow-postgresql-0
    ReadOnly:   false
  dshm:
    Type:       EmptyDir (a temporary directory that shares a pod's lifetime)
    Medium:     Memory
    SizeLimit:  <unset>
  kube-api-access-58lzq:
    Type:                    Projected (a volume that contains injected data from multiple sources)
    TokenExpirationSeconds:  3607
    ConfigMapName:           kube-root-ca.crt
    ConfigMapOptional:       <nil>
    DownwardAPI:             true
QoS Class:                   Burstable
Node-Selectors:              <none>
Tolerations:                 node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
                             node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
Events:                      <none>

```

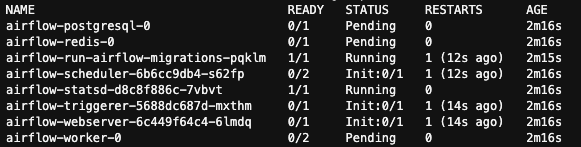

The pods never run
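
The `describe` output above is long, so here is a minimal sketch for pulling out the fields that relate to scheduling (status, assigned node, events, and any PVC claim names). The function name `summarize_pod` and the shortened sample text are illustrative assumptions, not part of Airflow, Helm, or kubectl; the input is expected to be the plain text printed by `kubectl describe pod`.

```python
import re


def summarize_pod(describe_text: str) -> dict:
    """Extract scheduling-related fields from `kubectl describe pod` text.

    Hypothetical helper for illustration only; it does plain text matching
    and does not talk to the cluster.
    """
    summary = {}
    for key in ("Status", "Node", "Events"):
        # Anchor at line start so "Node:" does not match "Node-Selectors:".
        # [ \t]* (not \s*) keeps an empty value from swallowing the next line.
        match = re.search(rf"^{key}:[ \t]*(.*)$", describe_text, flags=re.MULTILINE)
        summary[key] = match.group(1).strip() if match else None
    # A pod can reference several PersistentVolumeClaims; collect them all.
    summary["Claims"] = re.findall(
        r"^[ \t]*ClaimName:[ \t]*(\S+)", describe_text, flags=re.MULTILINE
    )
    return summary


if __name__ == "__main__":
    # Shortened excerpt of the output pasted in this issue.
    sample = (
        "Name: airflow-postgresql-0\n"
        "Node: <none>\n"
        "Status: Pending\n"
        "Volumes:\n"
        "  data:\n"
        "    ClaimName: data-airflow-postgresql-0\n"
        "Node-Selectors: <none>\n"
        "Events: <none>\n"
    )
    print(summarize_pod(sample))
```

For the pod in this report, the summary shows a `Pending` status, no assigned node, no events, and a single claim named `data-airflow-postgresql-0`.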

### What you think should happen instead

No idea. In my local environment (Kubernetes on Docker) the Helm chart works fine.

### How to reproduce

### Command used

```

helm install airflow apache-airflow/airflow --namespace airflow --debug --timeout 10m0s

```

### Cluster Config

```

apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: airflow
  region: us-east-1
  version: "1.23"

managedNodeGroups:
  - name: workers
    instanceType: t3.medium
    privateNetworking: true
    minSize: 1
    maxSize: 3
    desiredCapacity: 3
    volumeSize: 20
    ssh:
      allow: true
      publicKeyName: airflow-workstation
    labels: { role: worker }
    tags:
      nodegroup-role: worker
    iam:
      withAddonPolicies:
        ebs: true
        imageBuilder: true
        efs: true
        albIngress: true
        autoScaler: true
        cloudWatch: true
        externalDNS: true

```

### Anything else

_No response_

### Are you willing to submit PR?

- [ ] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)
