pmercatoris opened a new issue, #29339: URL: https://github.com/apache/airflow/issues/29339

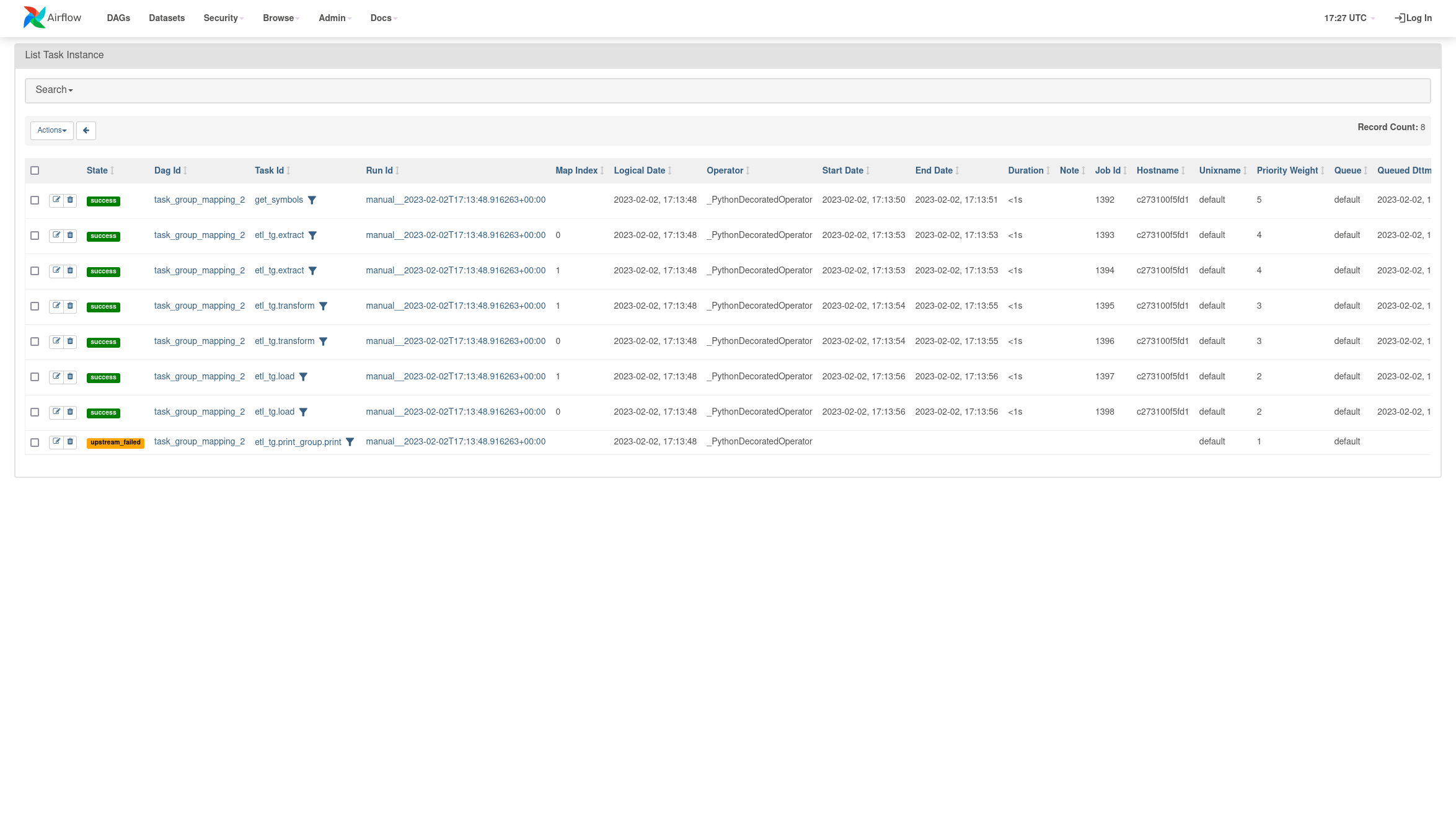

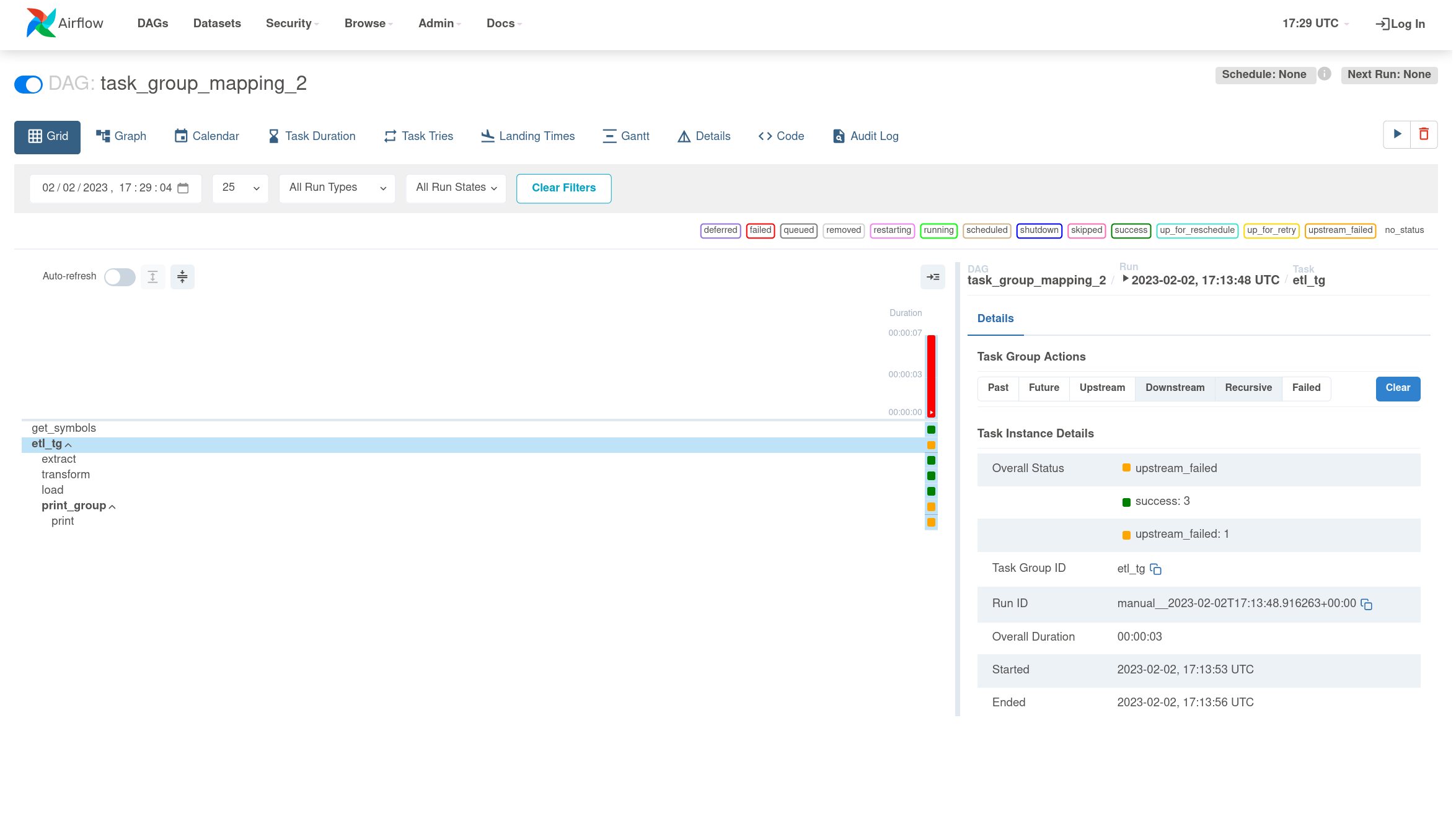

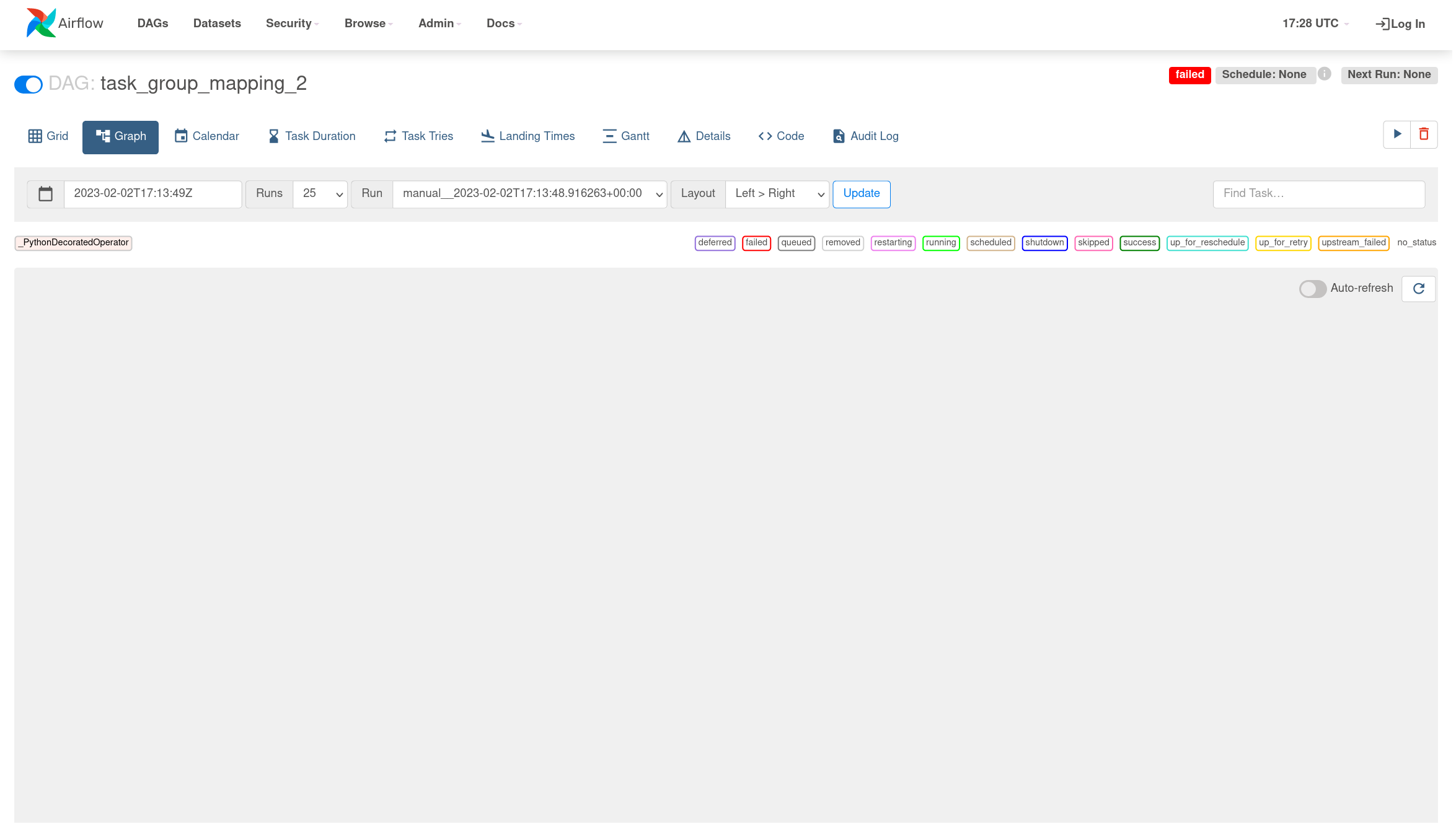

### Apache Airflow version

2.5.1

### What happened

I am trying to map a task group inside another task group that is itself already mapped. When the DAG is launched, all tasks of the containing task group finish successfully, but the following occurs:

- The contained task group is not mapped and has a state of `upstream failed`.
- The graph UI doesn't show after launching the DAG, as in https://github.com/apache/airflow/issues/29287

### What you think should happen instead

I would expect the `print_group` task group and its `print` task to start as soon as one of the `load` tasks finishes.

### How to reproduce

I am currently using the docker-compose setup for version 2.5.1, with `FROM apache/airflow:2.5.1-python3.10`.

```python
import pendulum
from airflow.decorators import dag, task, task_group


@task
def get_symbols():
    res = [('A', 1, 111), ('B', 2, 222)]
    return res


@task
def print(symbol_info, data_interval_end=None):
    # Do some work...
    print(symbol_info)
    return symbol_info


@task_group()
def print_group(symbol):
    return print(symbol_info=symbol)


@task
def extract(symbol_info, data_interval_end=None):
    # Do some work...
    return symbol_info


@task
def transform(symbol_info, data_interval_end=None):
    # Do some work...
    return symbol_info


@task
def load(symbol_info, data_interval_end=None):
    # Do some work...
    return 2*[symbol_info]


@task_group
def etl_tg(symbol):
    raw_symbols_data = extract(symbol_info=symbol)
    clean_symbols_data = transform(symbol_info=raw_symbols_data)
    loaded_symbols = load(symbol_info=clean_symbols_data)
    return print_group.expand(symbol=loaded_symbols)


@dag(
    dag_id=f"task_group_mapping_2",
    tags=["sandbox"],
    schedule=None,
    start_date=pendulum.datetime(2023, 1, 1, tz="UTC"),
    catchup=False,
    max_active_runs=1,
)
def etl_dag():
    # DAG
    symbols = get_symbols()
    etl_tg.expand(symbol=symbols)


etl_dag()
```

### Operating System

Ubuntu 20.04.5 LTS

### Versions of Apache Airflow Providers

_No response_

### Deployment

Docker-Compose

### Deployment details

_No response_

### Anything else

_No response_

### Are you willing to submit PR?

- [ ] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)