utkarsharma2 commented on PR #31142:

URL: https://github.com/apache/airflow/pull/31142#issuecomment-1539501630

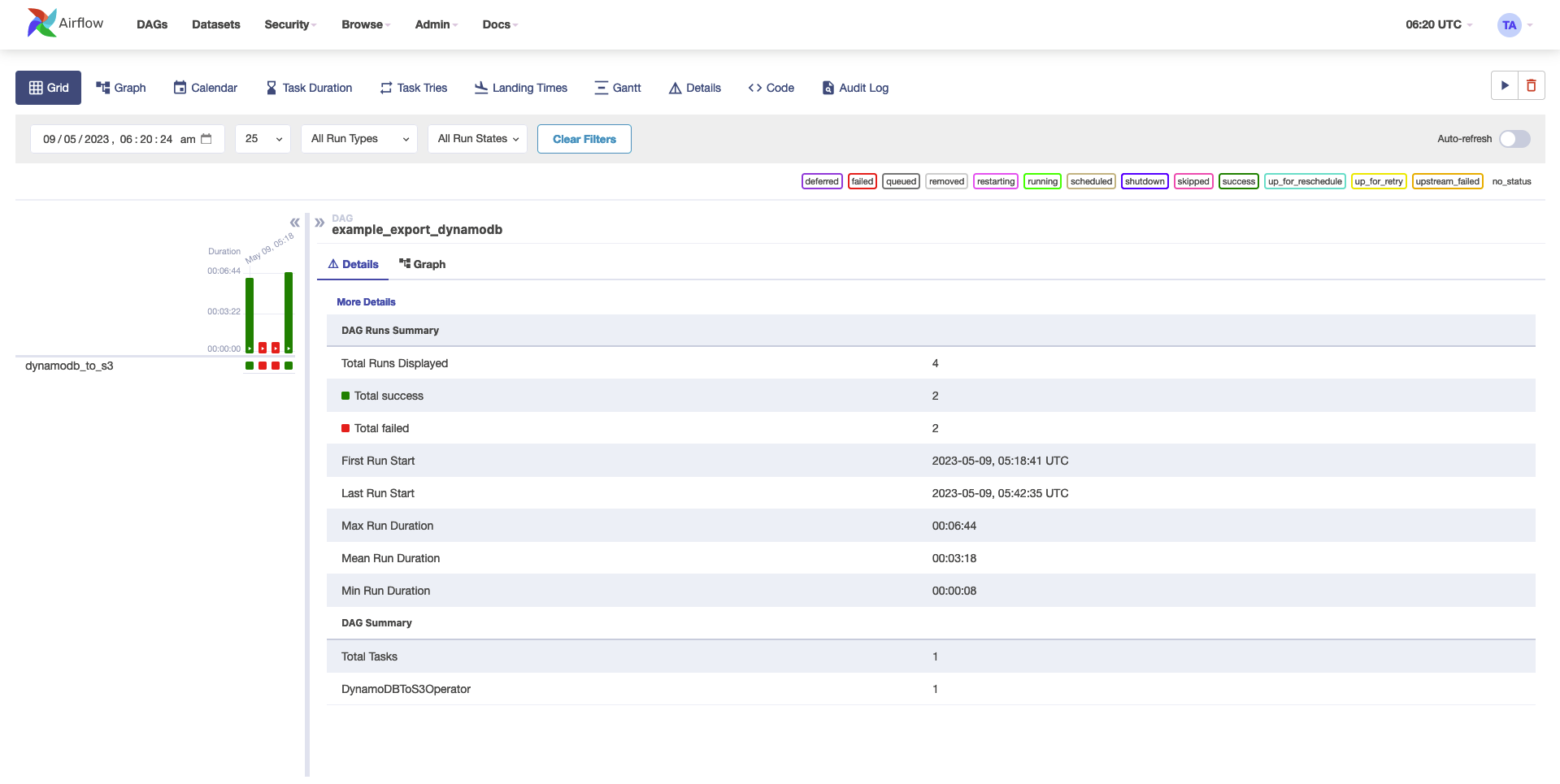

I have tested this locally with the DAG below, and it works for me:

```
from datetime import datetime

from airflow import DAG
from airflow.providers.amazon.aws.transfers.dynamodb_to_s3 import DynamoDBToS3Operator

with DAG(
    dag_id='example_export_dynamodb',
    schedule_interval=None,
    start_date=datetime(2021, 1, 1),
    tags=['example'],
    catchup=False,
) as dag:
    dynamodb_to_s3_operator = DynamoDBToS3Operator(
        task_id="dynamodb_to_s3",
        dynamodb_table_name="test",
        s3_bucket_name="tmp9",
        file_size=4000,
        export_time=datetime.now(),
        aws_conn_id="aws_default",
        s3_key_prefix="test1"
    )
```
