pingzh commented on pull request #7141: URL: https://github.com/apache/airflow/pull/7141#issuecomment-619476465

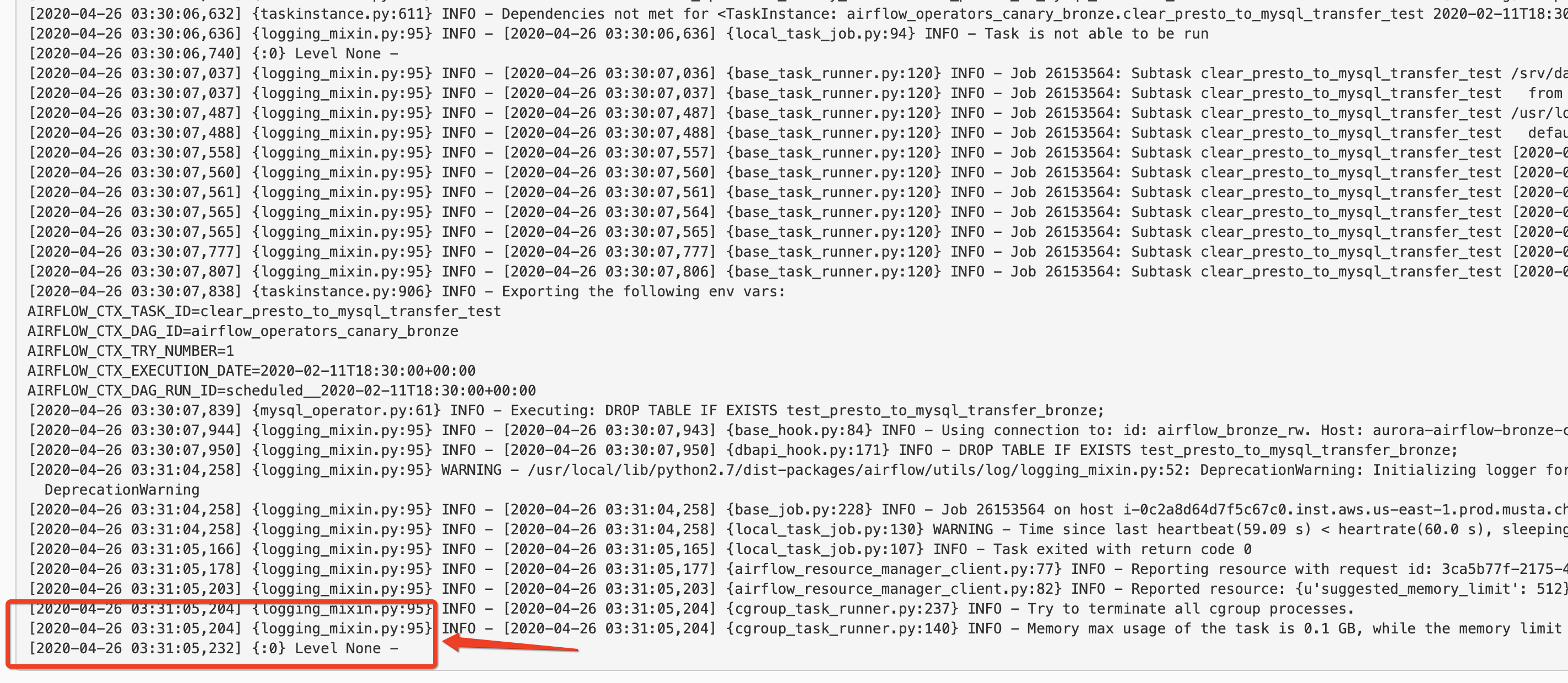

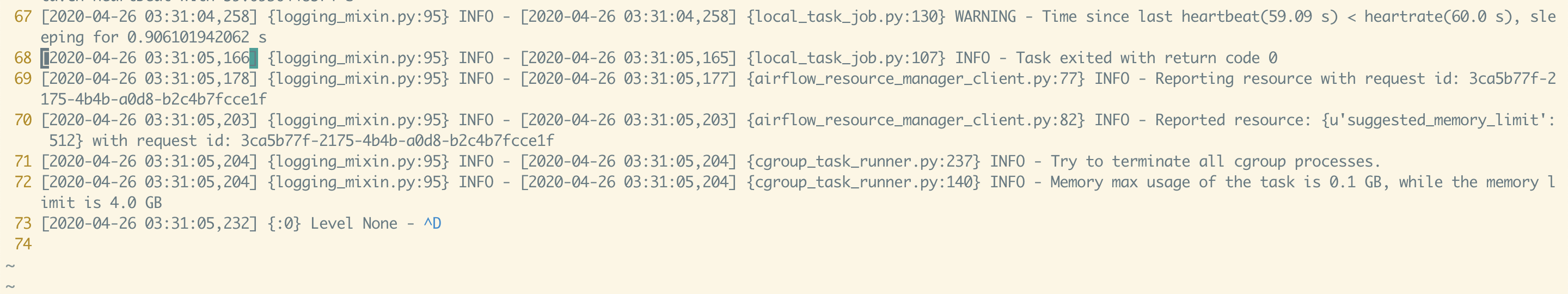

I replicated the change in our internal staging environment: the webserver does not stop fetching the log until it times out. As you can see, the last line is

Our setting is `END_OF_LOG_MARK = u'\u0004\n'`

```
[elasticsearch]
# Elasticsearch host
host =
# Format of the log_id, which is used to query for a given tasks logs
log_id_template = {{dag_id}}-{{task_id}}-{{execution_date}}-{{try_number}}
# Used to mark the end of a log stream for a task
end_of_log_mark = end_of_log
# Qualified URL for an elasticsearch frontend (like Kibana) with a template argument for log_id
# Code will construct log_id using the log_id template from the argument above.
# NOTE: The code will prefix the https:// automatically, don't include that here.
frontend =
# Write the task logs to the stdout of the worker, rather than the default files
write_stdout = False
# Instead of the default log formatter, write the log lines as JSON
json_format = False
# Log fields to also attach to the json output, if enabled
json_fields = asctime, filename, lineno, levelname, message
```

This is the log from the log file:

---

One thing I also noticed is that in your code, `ELASTICSEARCH_WRITE_STDOUT: str = conf.get('elasticsearch', 'WRITE_STDOUT')` always evaluates as true, since `conf.get` returns the value as a string. This is fixed in this PR: https://github.com/apache/airflow/pull/7199/
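
To illustrate the `conf.get` point above, here is a minimal sketch that uses Python's standard `configparser` as a stand-in for Airflow's configuration object. That substitution is an assumption made for illustration; this is not the code from either PR. It only shows why a value read as a plain string is truthy even when the config file says `False`.

```python
from configparser import ConfigParser

# Stand-in for the [elasticsearch] section quoted above (assumption: only
# the write_stdout option matters for this illustration).
parser = ConfigParser()
parser.read_string(
    """
[elasticsearch]
write_stdout = False
"""
)

# get() returns the raw string, and any non-empty string is truthy.
raw = parser.get("elasticsearch", "write_stdout")

# getboolean() parses the text into an actual bool.
parsed = parser.getboolean("elasticsearch", "write_stdout")

print(repr(raw), bool(raw))  # 'False' True
print(parsed)                # False
```

Reading the option with a boolean-aware getter avoids the problem, since the check then depends on the parsed value and not on whether the string is empty.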