[

https://issues.apache.org/jira/browse/AIRFLOW-6544?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17092486#comment-17092486

]

ASF GitHub Bot commented on AIRFLOW-6544:

-----------------------------------------

larryzhu2018 commented on pull request #7141:

URL: https://github.com/apache/airflow/pull/7141#issuecomment-619482093

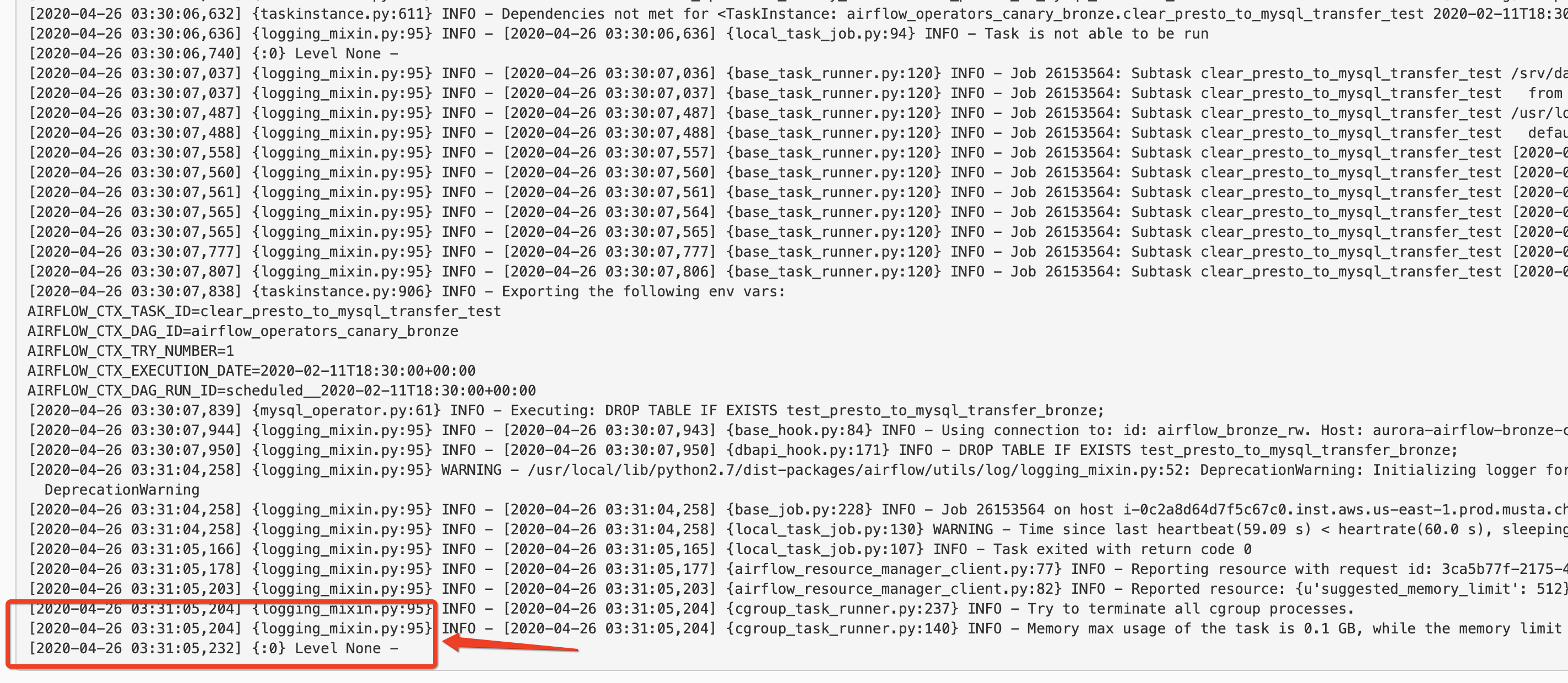

> I replicated the change in our internal staging env, the webserver does not stop fetching the log until it timed out. As you can see the last line is

>

>

> Our setting is `END_OF_LOG_MARK = u'\u0004\n'`

>

The logging pipeline in general does not work well with whitespace. Can you
please change this to "end_of_log_for_airflow_task_instance"? Also, as I
mentioned before, you will need to turn on the JSON format for the
Elasticsearch scenarios to work well, because otherwise parsing the dag_id,
task_id, etc. would be harder in Elasticsearch. Please see the ingestion
pipeline that I shared earlier.
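To illustrate the whitespace concern, here is a minimal sketch. It assumes a hypothetical pipeline stage that trims surrounding whitespace from each line, and a hypothetical reader that compares the stored message to the configured mark exactly. Neither function is Airflow or Filebeat code; both are stand-ins for illustration only.

```python
def pipeline_normalize(line: str) -> str:
    # Hypothetical shipper behaviour (an assumption): trim surrounding whitespace.
    return line.strip()


def is_end_of_log(stored_message: str, mark: str) -> bool:
    # Hypothetical reader check (an assumption): exact match against the mark.
    return stored_message == mark


def mark_survives(mark: str) -> bool:
    # True if the mark is still recognisable after passing through the pipeline.
    return is_end_of_log(pipeline_normalize(mark), mark)


for mark in ("\u0004\n", "end_of_log_for_airflow_task_instance"):
    print(repr(mark), mark_survives(mark))
```

Under these assumptions the control-character mark loses its trailing newline and no longer matches, while the plain-text mark passes through unchanged.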

Here are the configurations I use for enabling logging for Elasticsearch:

config:
  AIRFLOW__CORE__REMOTE_LOGGING: "True"
  AIRFLOW__ELASTICSEARCH__HOST: "dev-iad-cluster-ingest.controltower:9200"
  AIRFLOW__ELASTICSEARCH__LOG_ID_TEMPLATE: "{dag_id}-{task_id}-{execution_date}-{try_number}"
  AIRFLOW__ELASTICSEARCH__END_OF_LOG_MARK: "end_of_log_for_airflow_task_instance"
  AIRFLOW__ELASTICSEARCH__WRITE_STDOUT: "True"
  AIRFLOW__ELASTICSEARCH__JSON_FORMAT: "True"
  AIRFLOW__ELASTICSEARCH__JSON_FIELDS: "asctime, filename, lineno, levelname, message"
  AIRFLOW__ELASTICSEARCH__INDEX: "filebeat-*"
  AIRFLOW__LOGGING__COLORED_CONSOLE_LOG: "False"
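The variable names above follow Airflow's `AIRFLOW__{SECTION}__{KEY}` convention for overriding configuration through the environment. As a rough sketch of how such a name maps onto a section and key, the helper below (a hypothetical function written for this note, not part of Airflow) splits a name on the double underscores:

```python
from typing import Optional, Tuple


def parse_airflow_env(name: str) -> Optional[Tuple[str, str]]:
    """Split AIRFLOW__SECTION__KEY into (section, key); hypothetical helper."""
    prefix = "AIRFLOW__"
    if not name.startswith(prefix):
        return None
    section, sep, key = name[len(prefix):].partition("__")
    if not sep or not section or not key:
        return None
    return section.lower(), key.lower()


# A few of the settings listed above, copied as plain strings.
settings = {
    "AIRFLOW__CORE__REMOTE_LOGGING": "True",
    "AIRFLOW__ELASTICSEARCH__JSON_FORMAT": "True",
    "AIRFLOW__ELASTICSEARCH__INDEX": "filebeat-*",
}

for env_name, value in settings.items():
    print(parse_airflow_env(env_name), "=", value)
```

So `AIRFLOW__ELASTICSEARCH__JSON_FORMAT` corresponds to `json_format` under `[elasticsearch]` in the quoted configuration file.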

> ```

>

> [elasticsearch]

> # Elasticsearch host

> host =

> # Format of the log_id, which is used to query for a given tasks logs

> log_id_template = {{dag_id}}-{{task_id}}-{{execution_date}}-{{try_number}}

> # Used to mark the end of a log stream for a task

> end_of_log_mark = end_of_log

> # Qualified URL for an elasticsearch frontend (like Kibana) with a template argument for log_id
> # Code will construct log_id using the log_id template from the argument above.
> # NOTE: The code will prefix the https:// automatically, don't include that here.
> frontend =
> # Write the task logs to the stdout of the worker, rather than the default files

> write_stdout = False

> # Instead of the default log formatter, write the log lines as JSON

> json_format = False

> # Log fields to also attach to the json output, if enabled

> json_fields = asctime, filename, lineno, levelname, message

> ```

>

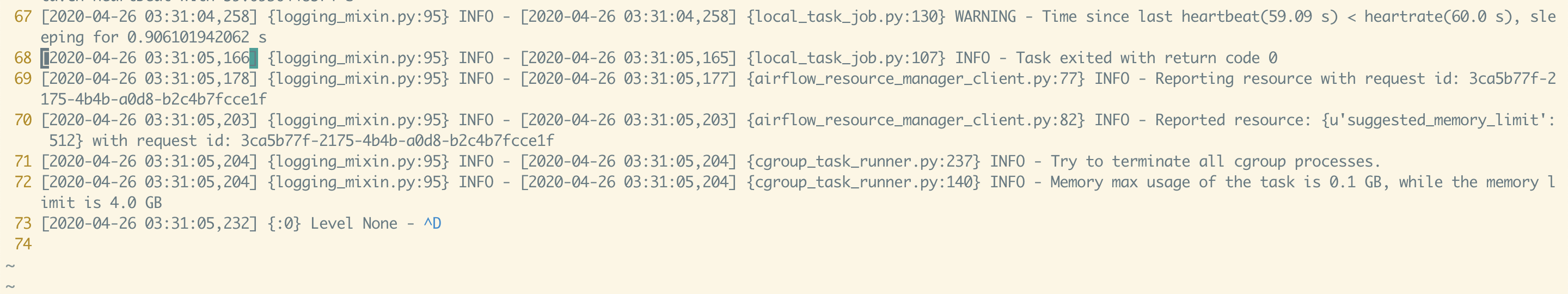

> this is the log from the log file:

>

>

> One thing I also noticed is that in your code, `ELASTICSEARCH_WRITE_STDOUT: str = conf.get('elasticsearch', 'WRITE_STDOUT')` is always `true`, since it is using `conf.get`. This is fixed in this PR: #7199

Thanks. I did not change this. It does not impact my scenarios, as I deploy
Airflow in Kubernetes and need write_stdout to always be true.
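For readers following the quoted observation about `conf.get`, here is a small sketch of why a string lookup behaves as "always true". It uses Python's standard `configparser` as a stand-in for Airflow's `conf` object (an assumption made for illustration; Airflow's `conf` also offers a `getboolean` method).

```python
import configparser

parser = configparser.ConfigParser()
parser.read_string("[elasticsearch]\nwrite_stdout = False\n")

# get() returns the raw string; any non-empty string is truthy in Python.
as_string = parser.get("elasticsearch", "write_stdout")

# getboolean() parses the text into an actual bool.
as_bool = parser.getboolean("elasticsearch", "write_stdout")

print(repr(as_string), bool(as_string))
print(as_bool)
```

With `write_stdout = False` in the file, the string lookup still evaluates as truthy, whereas the boolean lookup yields `False`.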

----------------------------------------------------------------


> Log_id is not missing when writing logs to elastic search

> ---------------------------------------------------------

>

> Key: AIRFLOW-6544

> URL: https://issues.apache.org/jira/browse/AIRFLOW-6544

> Project: Apache Airflow

> Issue Type: Bug

> Components: logging

> Affects Versions: 1.10.7

> Reporter: Larry Zhu

> Assignee: Larry Zhu

> Priority: Major

> Labels: pull-request-available

> Fix For: 2.0.0

>

> Original Estimate: 1h

> Remaining Estimate: 1h

>

> The “end of log” marker does not include the aforementioned log_id. As a
> result, airflow-web does not know when to stop tailing the logs.
> Also, it would be better to include an Elasticsearch configuration for the
> index name so that the search is more efficient in big clusters with a lot
> of indices.
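The problem described in the issue can be sketched as follows. This is a simplified model, not Airflow's handler: it assumes the web side selects records by `log_id` and treats the stream as finished when the last matching record equals the end-of-log mark. The record layout, the helper names, and the sample dag/task values are all hypothetical.

```python
LOG_ID_TEMPLATE = "{dag_id}-{task_id}-{execution_date}-{try_number}"
END_OF_LOG_MARK = "end_of_log_for_airflow_task_instance"


def make_record(message, log_id=None):
    # Hypothetical stored document; log_id is attached only when given.
    record = {"message": message}
    if log_id is not None:
        record["log_id"] = log_id
    return record


def tail_finished(records, log_id, mark):
    # Only records carrying the requested log_id are visible to the reader.
    matching = [r for r in records if r.get("log_id") == log_id]
    return bool(matching) and matching[-1]["message"] == mark


log_id = LOG_ID_TEMPLATE.format(
    dag_id="example_dag",          # sample values, not from the issue
    task_id="example_task",
    execution_date="2020-04-25T00:00:00+00:00",
    try_number=1,
)

without_id = [make_record("task output", log_id), make_record(END_OF_LOG_MARK)]
with_id = [make_record("task output", log_id), make_record(END_OF_LOG_MARK, log_id)]

print(tail_finished(without_id, log_id, END_OF_LOG_MARK))
print(tail_finished(with_id, log_id, END_OF_LOG_MARK))
```

In this model, a marker written without a `log_id` is never returned by the query, so the reader keeps tailing until it times out.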
