zhanguohao opened a new issue #4377:

URL: https://github.com/apache/incubator-dolphinscheduler/issues/4377

**For better global communication, please describe it in English. If you feel the description in English is not clear, you can append a description in Chinese (Mandarin only), thanks!**

**Describe the question**

- Fault-tolerance design when a Worker loses its zk connection or zk crashes

- Consistency of the task status

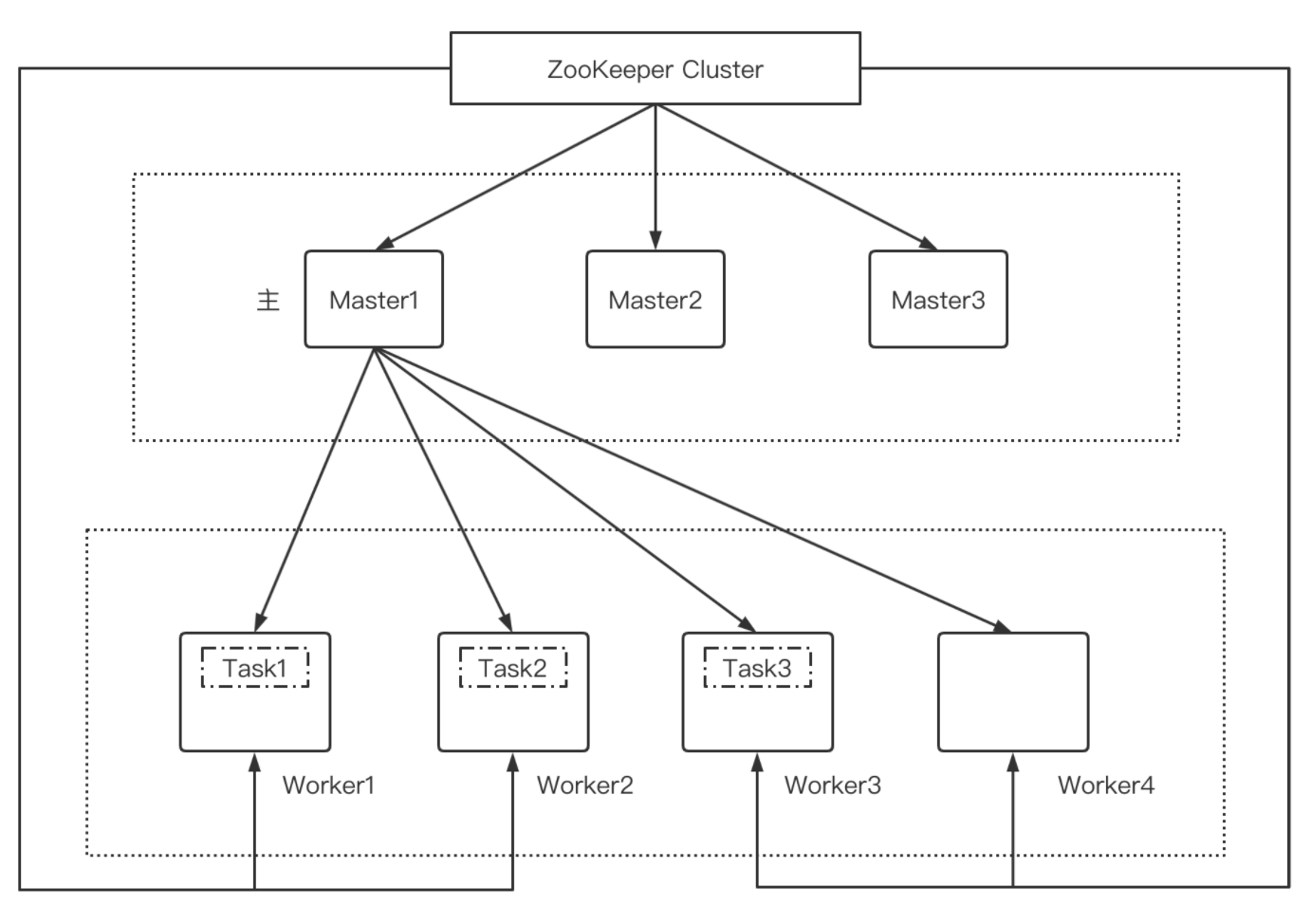

The task belongs to a processInstance with a single node: the master hosts the processInstance and the worker hosts the task.

(The following picture does not show the processInstance on the master.)

**Which version of DolphinScheduler:**

- [1.3.3]

- [1.3.4]

**Additional context**

There are several situations to consider:

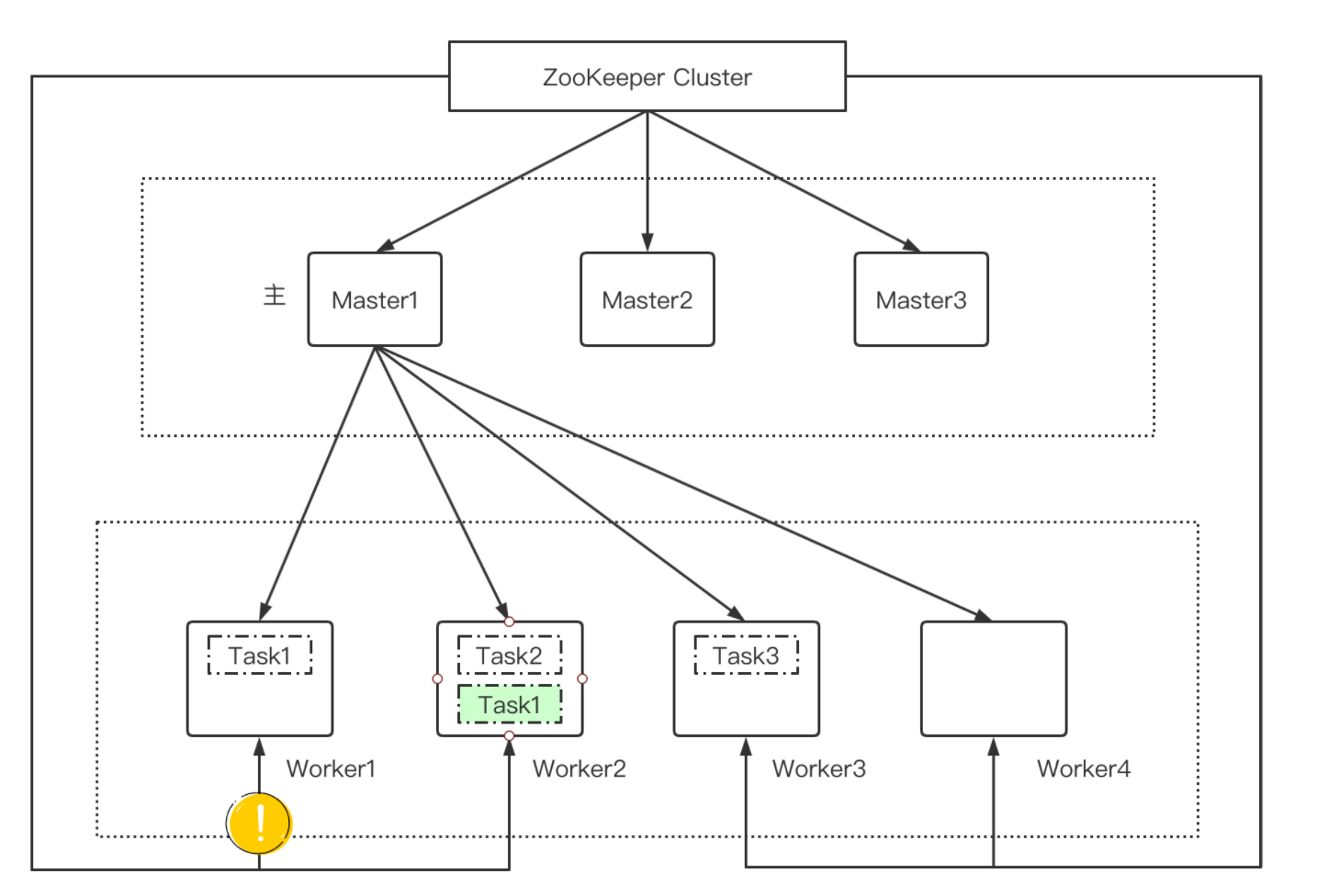

## 1. Repeated task execution on workers

If a worker loses its zk connection, the master starts failover and master1 starts a new copy of task1 on another worker, so task1 is executed twice.

Worker1 and worker2 then both execute task1. If worker1 recovers its connection, its execution thread is still busy with the old copy of task1.

Possible solution:

- When a worker loses its zk connection, clean up the local execution context and stop all task threads.

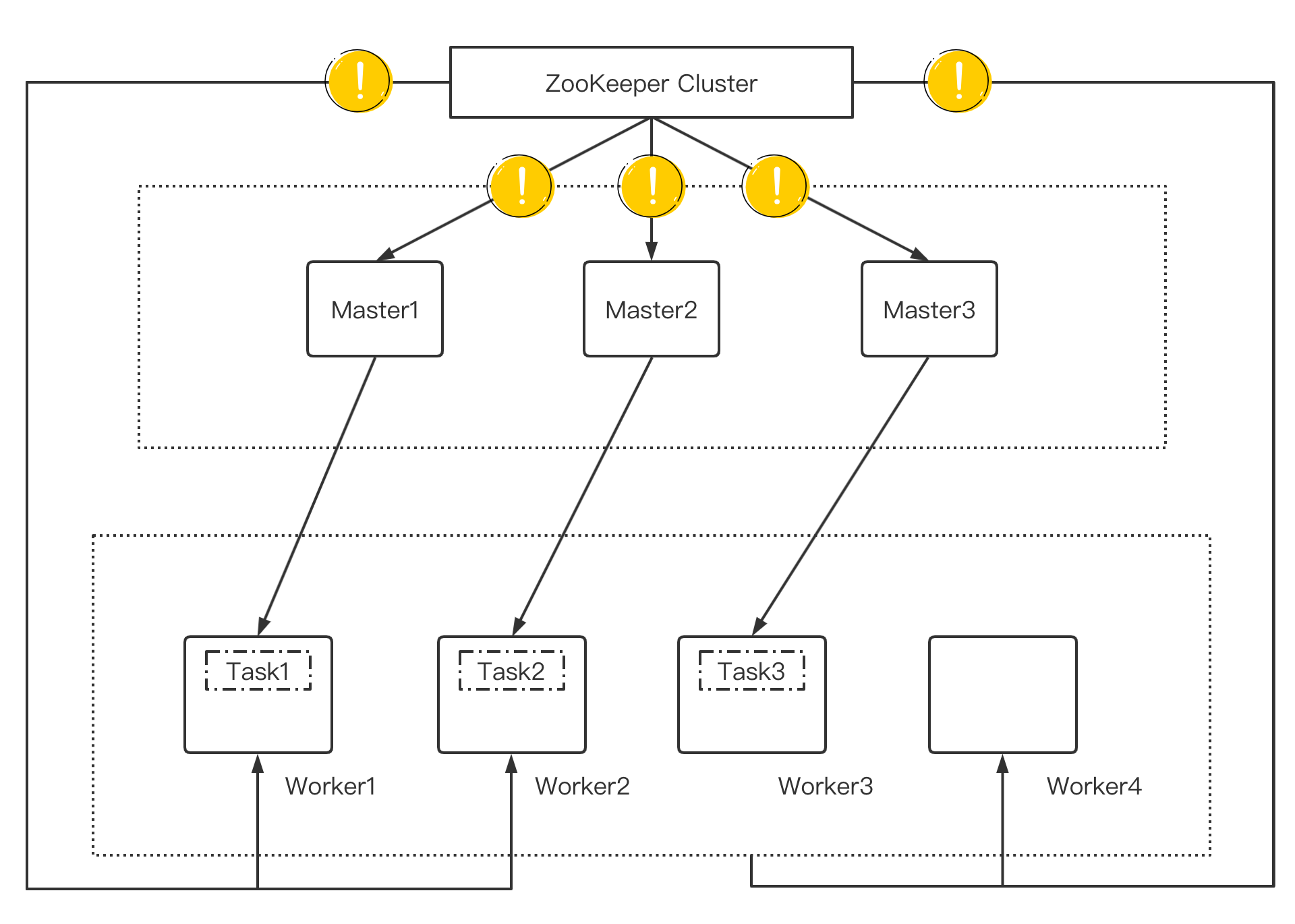
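A minimal sketch of the possible solution above, assuming the worker receives zk connection-state callbacks. The `ZkState` enum is a hypothetical stand-in named after some of Curator's `ConnectionState` values, and `WorkerExecutionContext`, `onStateChanged`, and the task-id map are illustrative names, not DolphinScheduler classes. On `LOST`, every running task is cancelled with interruption and the local context is cleared; `SUSPENDED` is deliberately ignored here because the session may still recover.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical connection states, named after some of Curator's ConnectionState values.
enum ZkState { CONNECTED, SUSPENDED, LOST, RECONNECTED }

// Hypothetical worker-side execution context: taskInstanceId -> running task.
class WorkerExecutionContext {
    private final ExecutorService pool = Executors.newFixedThreadPool(4);
    private final Map<Integer, Future<?>> running = new ConcurrentHashMap<>();

    void submit(int taskInstanceId, Runnable task) {
        running.put(taskInstanceId, pool.submit(task));
    }

    // Normal completion path: forget tasks that finished on their own.
    void onTaskFinished(int taskInstanceId) {
        running.remove(taskInstanceId);
    }

    int runningCount() {
        return running.size();
    }

    // Called on every zk connection-state change.
    void onStateChanged(ZkState state) {
        if (state != ZkState.LOST) {
            return; // SUSPENDED may still recover before the session expires
        }
        // The master is expected to fail these tasks over to another worker,
        // so stop the local copies to avoid executing the same task twice.
        for (Future<?> f : running.values()) {
            f.cancel(true); // interrupts the thread if the task is running
        }
        running.clear();
    }

    void shutdown() {
        pool.shutdownNow();
    }
}
```

Note that `Future.cancel(true)` only interrupts the thread. A task that ignores interrupts (for example one waiting on a child process) keeps running, so a real worker would also need to kill the underlying process.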

## 2. zk crash or zk network error (e.g. a switch failure)

**The worker keeps executing the task.**

If zk recovers, the possible states are:

- Tasks in TaskPriorityQueue may already have been dispatched while another master starts failover, so these tasks may be executed twice.

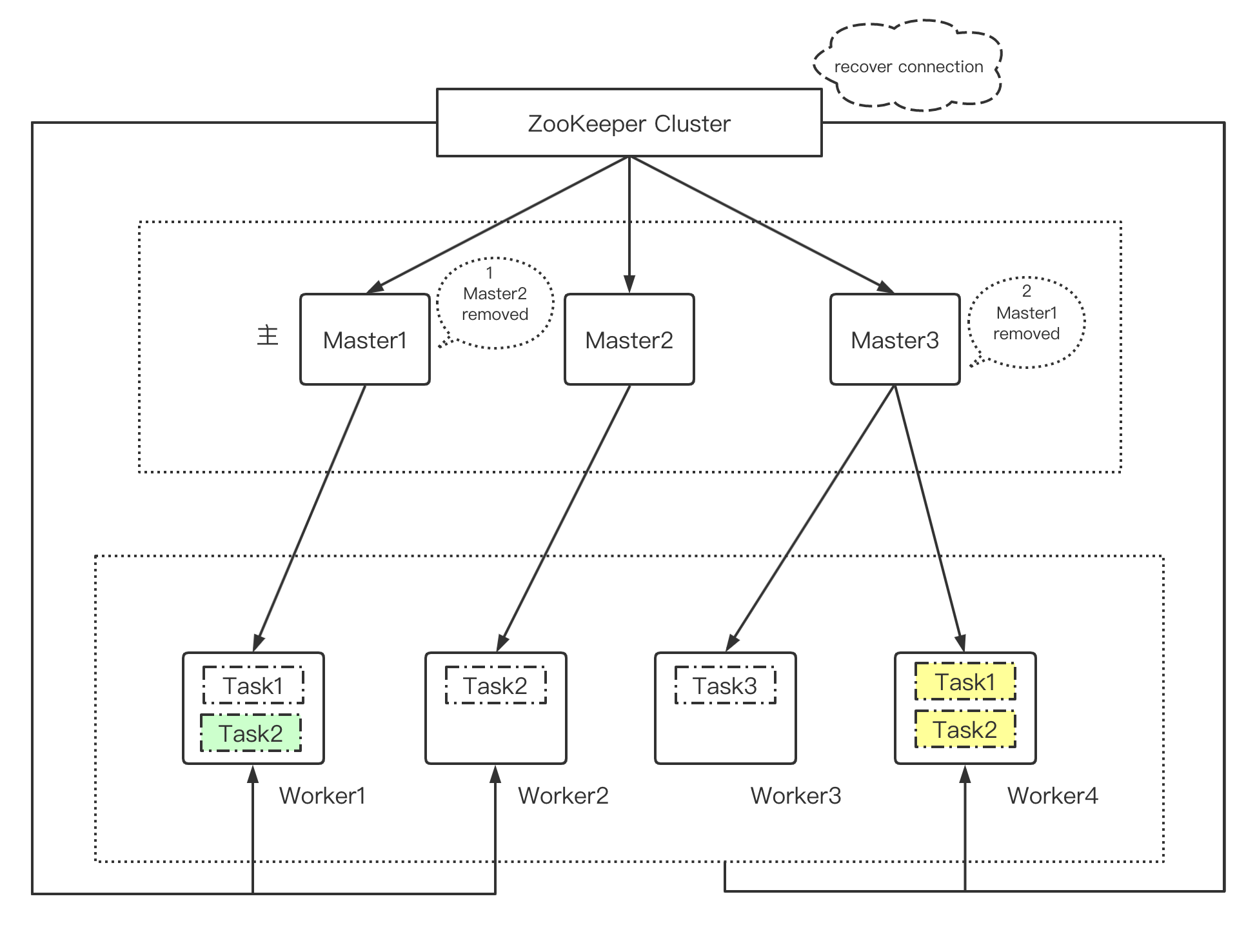

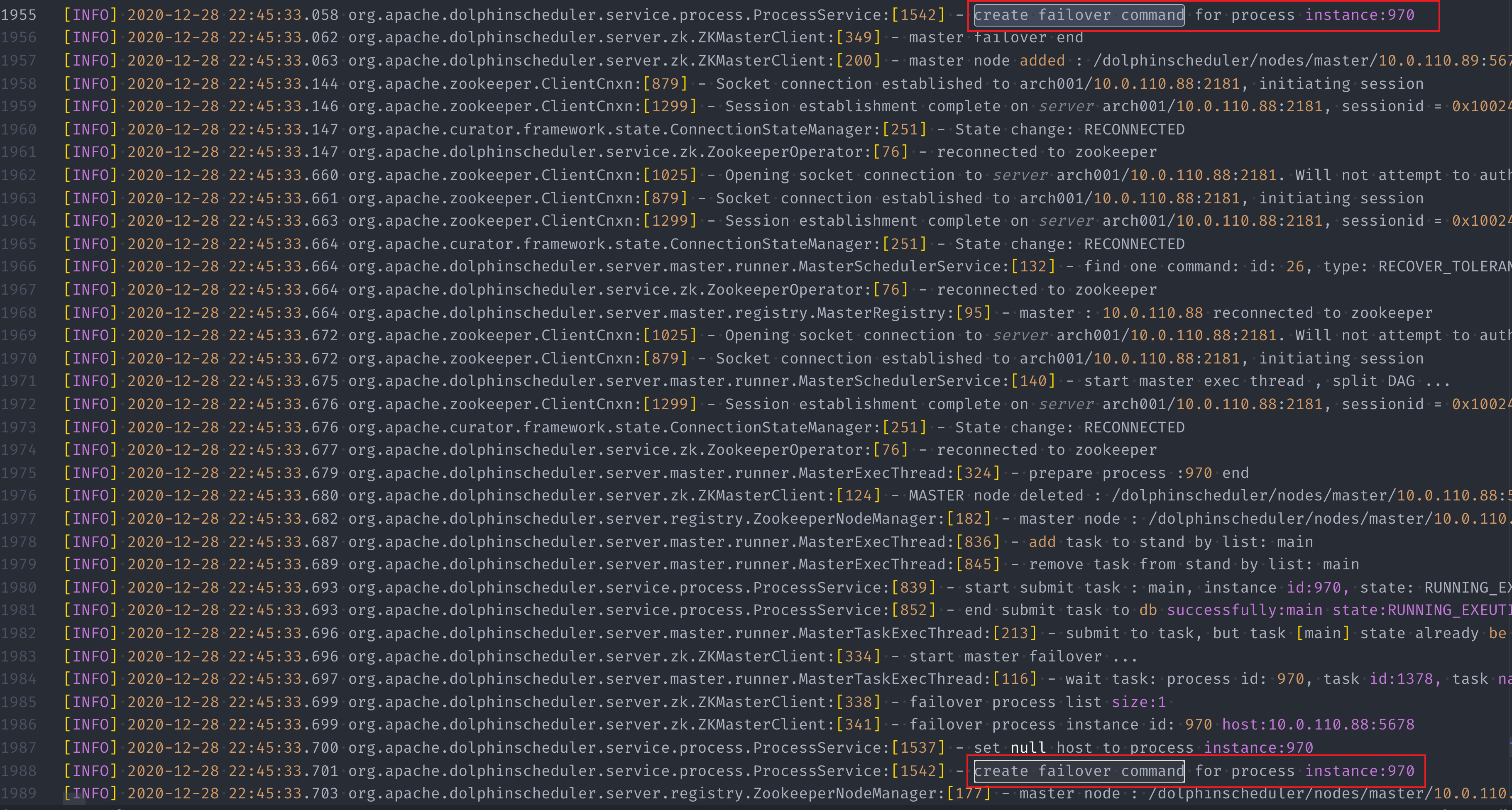
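One way to keep a queued task from being both dispatched and failed over is to route both paths through the same atomic state transition. This sketch assumes a conditional update on the task row; `TaskStateStore` simulates it in memory with `ConcurrentHashMap.replace(key, expected, next)`, and the `TaskState` values and class names are hypothetical, not DolphinScheduler's `ExecutionStatus`. With several masters, the check would have to live in the shared database rather than in one JVM.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical task states (not DolphinScheduler's ExecutionStatus).
enum TaskState { SUBMITTED, DISPATCHED, NEED_FAILOVER }

// In-memory stand-in for a task table supporting a conditional update,
// e.g. "UPDATE ... SET state = ? WHERE id = ? AND state = ?".
class TaskStateStore {
    private final Map<Integer, TaskState> states = new ConcurrentHashMap<>();

    void insert(int taskId) {
        states.put(taskId, TaskState.SUBMITTED);
    }

    // Atomic compare-and-set: succeeds only if the current state is `expected`.
    boolean transition(int taskId, TaskState expected, TaskState next) {
        return states.replace(taskId, expected, next);
    }

    TaskState get(int taskId) {
        return states.get(taskId);
    }
}

class DispatchGuard {
    private final TaskStateStore store;

    DispatchGuard(TaskStateStore store) {
        this.store = store;
    }

    // Dispatcher path: only dispatch a queued task that is still SUBMITTED.
    boolean tryDispatch(int taskId) {
        return store.transition(taskId, TaskState.SUBMITTED, TaskState.DISPATCHED);
    }

    // Failover path: at most one caller can claim a task for failover;
    // a queued task claimed here can no longer be dispatched.
    boolean tryClaimFailover(int taskId) {
        return store.transition(taskId, TaskState.SUBMITTED, TaskState.NEED_FAILOVER)
            || store.transition(taskId, TaskState.DISPATCHED, TaskState.NEED_FAILOVER);
    }
}
```

Whichever path wins the transition proceeds and the other sees `false` and skips. This alone does not stop a worker that is still running an already dispatched task; that is the worker-side problem from section 1.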

- Master1 receives the removal event for Master2 and starts failing over Master2 (creating a new failover command), and Master1 starts task2. A few milliseconds later, Master1 receives the removal event for Master1 itself, which creates another command for task2 (another new failover command), so we end up with multiple copies of the task.
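A sketch of guards against the duplicate failover commands described above, assuming each master tracks its own zk connection state. All names (`MasterFailoverHandler`, `onMasterRemoved`, and so on) are hypothetical. The handler ignores removal of its own node, ignores events that arrive while it is disconnected, and handles each removed host at most once; creating a command is only counted, as a stand-in for inserting a real failover command. It deduplicates within one master only, so coordination between masters (for example a distributed lock) is still assumed.

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical handler for "master node removed" events on one master.
class MasterFailoverHandler {
    private final String selfHost;
    private volatile boolean connected = true; // updated by the zk connection listener
    private final Set<String> handledHosts = ConcurrentHashMap.newKeySet();
    private final AtomicInteger failoverCommands = new AtomicInteger();

    MasterFailoverHandler(String selfHost) {
        this.selfHost = selfHost;
    }

    void onConnectionStateChanged(boolean isConnected) {
        connected = isConnected;
    }

    // A host that re-registers may need failover again if it dies later.
    void onMasterAdded(String host) {
        handledHosts.remove(host);
    }

    // Returns true only if this event created a new failover command.
    boolean onMasterRemoved(String removedHost) {
        if (removedHost.equals(selfHost)) {
            // Our own node disappeared: stop local work rather than
            // creating failover commands for tasks we just started.
            return false;
        }
        if (!connected) {
            return false; // our view of the cluster is stale while disconnected
        }
        if (!handledHosts.add(removedHost)) {
            return false; // duplicate removal event for the same host
        }
        failoverCommands.incrementAndGet(); // stand-in for inserting a failover command
        return true;
    }

    int commandCount() {
        return failoverCommands.get();
    }
}
```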

## 3. Clearing master threads and TaskPriorityQueue

When the master loses its zk connection, should it clean up its execution context (MasterExecThread, MasterTaskExecThread, TaskPriorityQueue)?
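If the answer is yes, the cleanup could look roughly like this sketch. `QueuedTask` and `MasterExecutionContext` are hypothetical stand-ins for a TaskPriorityQueue entry and for the pool running MasterExecThread/MasterTaskExecThread; the queue is a plain `java.util.concurrent.PriorityBlockingQueue`. On connection loss, the exec threads are interrupted with `shutdownNow()` and the queue is emptied with `drainTo`, returning the drained tasks so they can be logged or reconciled.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.PriorityBlockingQueue;

// Hypothetical stand-in for an entry in TaskPriorityQueue.
class QueuedTask implements Comparable<QueuedTask> {
    final int taskInstanceId;
    final int priority; // lower value = dispatched first

    QueuedTask(int taskInstanceId, int priority) {
        this.taskInstanceId = taskInstanceId;
        this.priority = priority;
    }

    @Override
    public int compareTo(QueuedTask other) {
        return Integer.compare(priority, other.priority);
    }
}

// Hypothetical master-side context: exec threads plus the pending-task queue.
class MasterExecutionContext {
    final PriorityBlockingQueue<QueuedTask> taskQueue = new PriorityBlockingQueue<>();
    private volatile ExecutorService execThreads = Executors.newCachedThreadPool();

    void runProcess(Runnable processLoop) {
        execThreads.submit(processLoop);
    }

    // On zk LOST: interrupt exec threads and empty the queue so that nothing
    // left over is dispatched after another master has taken over.
    List<QueuedTask> onConnectionLost() {
        execThreads.shutdownNow(); // interrupts running exec threads
        List<QueuedTask> drained = new ArrayList<>();
        taskQueue.drainTo(drained);
        return drained; // e.g. for logging or reconciling with the database
    }

    // A shut-down ExecutorService cannot be restarted, so start a fresh pool.
    void onReconnected() {
        execThreads = Executors.newCachedThreadPool();
    }
}
```

Whether the drained tasks should be resubmitted after reconnecting, or left to the master that took over, is exactly the open question in this section.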
