baijun opened a new issue #4461: URL: https://github.com/apache/incubator-dolphinscheduler/issues/4461

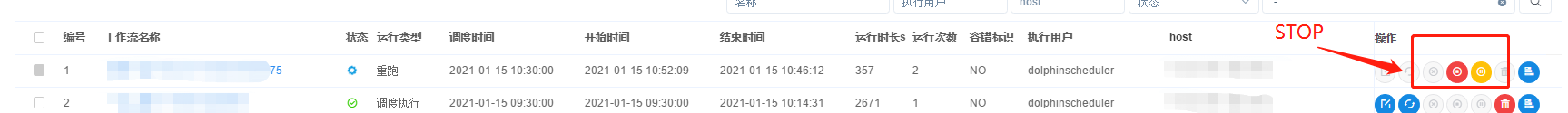

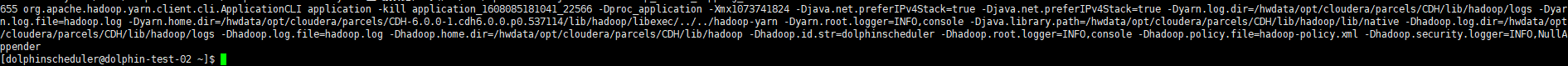

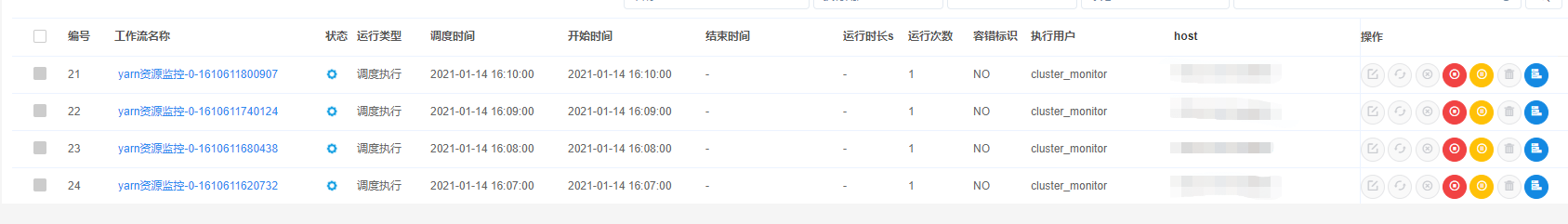

1. A workflow instance contains Spark tasks that are submitted to YARN in cluster mode.
2. Stop the workflow instance from the DS UI.
3. DS then issues a kill command on the worker host to terminate the submitted application.
4. Running `jps` on the worker node shows the `application -kill` command still running.
5. From this point on, the worker cannot respond to any other requests. It hangs, and every task in DS stays in the waiting-to-run state.
6. After the application is killed manually, tasks run normally again.
7. Version: 1.3.4
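Steps 4 and 5 suggest the worker blocks while waiting for the kill command to finish. One possible mitigation, offered here as an assumption and not as DolphinScheduler's actual code, is to give that command a deadline and forcibly destroy it if it hangs. The Java sketch below assumes the kill is issued through the standard YARN CLI form `yarn application -kill <applicationId>`. The class and method names (`YarnKillWithTimeout`, `runWithTimeout`, `yarnKillCommand`) are hypothetical.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.TimeUnit;

public class YarnKillWithTimeout {

    /** Outcome of running an external command with a deadline. */
    public static final class Result {
        public final boolean timedOut;
        public final int exitCode; // -1 when the process was destroyed after a timeout

        Result(boolean timedOut, int exitCode) {
            this.timedOut = timedOut;
            this.exitCode = exitCode;
        }
    }

    /** Builds the YARN CLI command that kills one application (assumed invocation). */
    public static List<String> yarnKillCommand(String applicationId) {
        List<String> cmd = new ArrayList<>();
        cmd.add("yarn");
        cmd.add("application");
        cmd.add("-kill");
        cmd.add(applicationId);
        return cmd;
    }

    /**
     * Runs a command but never waits longer than timeoutMs, so the calling
     * thread (for example a worker's kill handler) cannot block indefinitely.
     */
    public static Result runWithTimeout(List<String> command, long timeoutMs)
            throws IOException, InterruptedException {
        ProcessBuilder pb = new ProcessBuilder(command);
        pb.redirectErrorStream(true);
        // Discard output so a full pipe buffer can never stall the child process.
        pb.redirectOutput(ProcessBuilder.Redirect.DISCARD);
        Process p = pb.start();
        if (p.waitFor(timeoutMs, TimeUnit.MILLISECONDS)) {
            return new Result(false, p.exitValue());
        }
        // The command hung: kill its descendants and itself instead of blocking.
        p.descendants().forEach(ProcessHandle::destroyForcibly);
        p.destroyForcibly();
        p.waitFor(1, TimeUnit.SECONDS);
        return new Result(true, -1);
    }

    public static void main(String[] args) throws Exception {
        if (args.length != 1) {
            System.out.println("usage: YarnKillWithTimeout <applicationId>");
            return;
        }
        // 30-second deadline is an arbitrary illustrative value.
        Result r = runWithTimeout(yarnKillCommand(args[0]), 30_000);
        System.out.println(r.timedOut ? "kill command timed out" : "exit code " + r.exitCode);
    }
}
```

The design choice is simply that a hung external command should cost the worker at most one bounded wait rather than its ability to serve other requests. Whether the YARN application itself was actually killed still needs to be checked separately after a timeout.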