haorengoodman commented on issue #12308: URL: https://github.com/apache/dolphinscheduler/issues/12308#issuecomment-1275441726

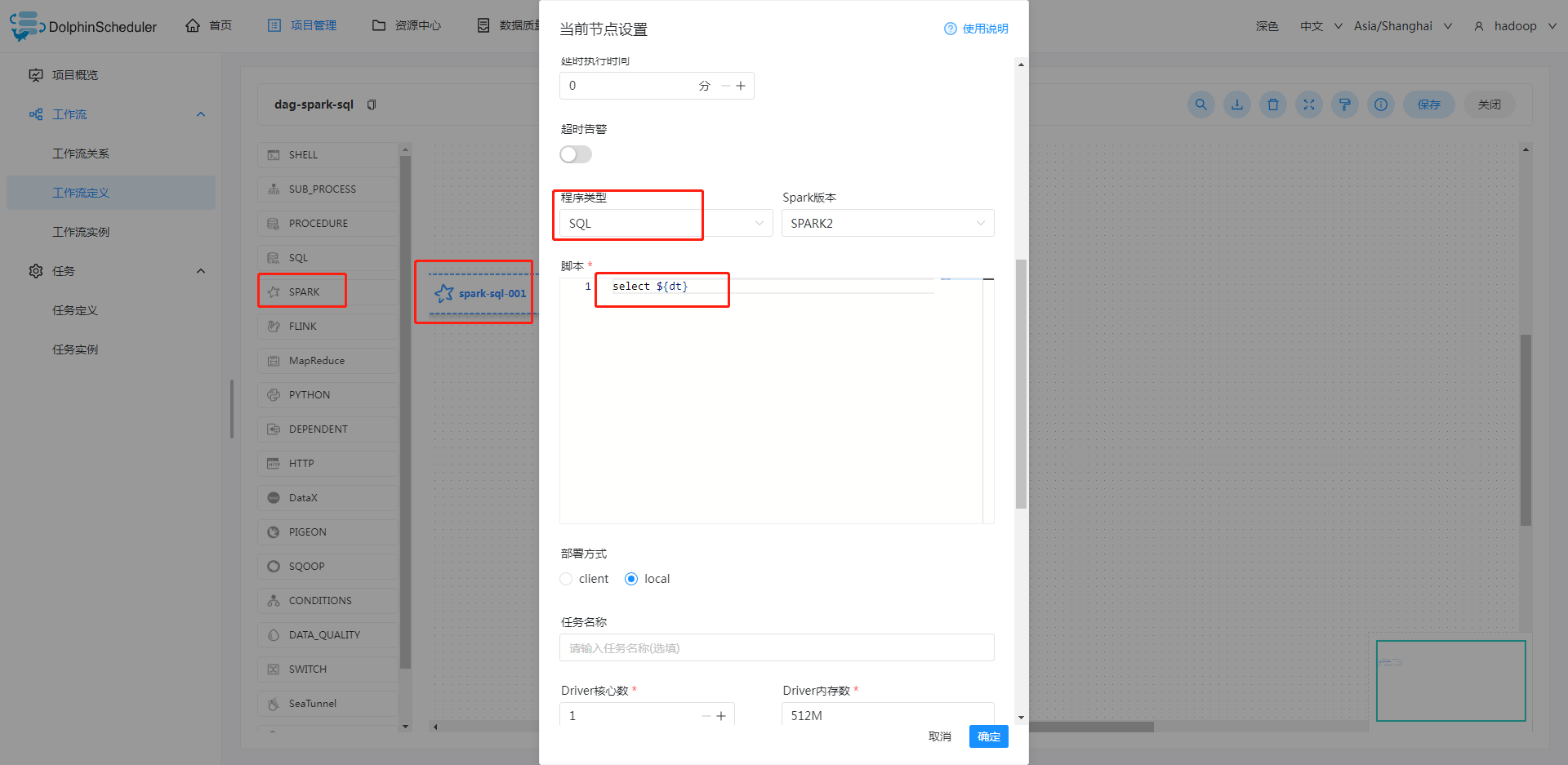

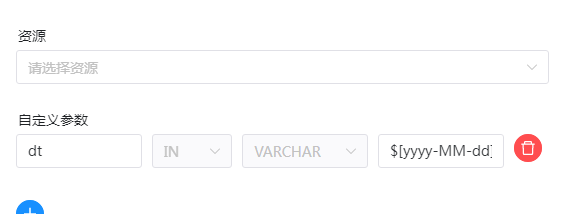

[LOG-PATH]: /opt/dolphin/install/worker-server/logs/20221012/7172773527040_35-121-137.log, [HOST]: Host{address='10.20.20.12:1234', ip='10.20.20.12', port=1234} [INFO] 2022-10-12 00:42:23.980 +0000 - spark task params {"localParams":[{"prop":"dt","direct":"IN","type":"VARCHAR","value":"$[yyyy-MM-dd]"}],"rawScript":"select ${dt}","resourceList":[],"programType":"SQL","mainClass":"","deployMode":"local","sparkVersion":"SPARK2","driverCores":1,"driverMemory":"512M","numExecutors":1,"executorMemory":"1G","executorCores":1} [INFO] 2022-10-12 00:42:23.990 +0000 - raw script : select ${dt} [INFO] 2022-10-12 00:42:23.990 +0000 - task execute path : /tmp/dolphinscheduler/exec/process/7170590636544/7172773527040_35/121/137 [INFO] 2022-10-12 00:42:24.016 +0000 - spark task command: ${SPARK_HOME2}/bin/spark-sql --master local --driver-cores 1 --driver-memory 512M --num-executors 1 --executor-cores 1 --executor-memory 1G -f /tmp/dolphinscheduler/exec/process/7170590636544/7172773527040_35/121/137/121_137_node.sql [INFO] 2022-10-12 00:42:24.017 +0000 - tenantCode user:hadoop, task dir:121_137 [INFO] 2022-10-12 00:42:24.017 +0000 - create command file:/tmp/dolphinscheduler/exec/process/7170590636544/7172773527040_35/121/137/121_137.command [INFO] 2022-10-12 00:42:24.017 +0000 - command : #!/bin/bash BASEDIR=$(cd `dirname $0`; pwd) cd $BASEDIR source /opt/dolphin/install/worker-server/conf/dolphinscheduler_env.sh ${SPARK_HOME2}/bin/spark-sql --master local --driver-cores 1 --driver-memory 512M --num-executors 1 --executor-cores 1 --executor-memory 1G -f /tmp/dolphinscheduler/exec/process/7170590636544/7172773527040_35/121/137/121_137_node.sql [INFO] 2022-10-12 00:42:24.061 +0000 - task run command: sudo -u hadoop sh /tmp/dolphinscheduler/exec/process/7170590636544/7172773527040_35/121/137/121_137.command [INFO] 2022-10-12 00:42:24.071 +0000 - process start, process id is: 689910 [INFO] 2022-10-12 00:42:26.063 +0000 - -> 2022-10-12 08:42:25 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable 2022-10-12 08:42:25 INFO conf.HiveConf: Found configuration file file:/opt/usdp-srv/srv/udp/2.0.0.0/spark/conf/hive-site.xml 2022-10-12 08:42:25 WARN conf.HiveConf: HiveConf of name hive.enforce.bucketing does not exist 2022-10-12 08:42:25 INFO internal.SharedState: loading hive config file: file:/opt/usdp-srv/srv/udp/2.0.0.0/spark/conf/hive-site.xml 2022-10-12 08:42:25 INFO internal.SharedState: spark.sql.warehouse.dir is not set, but hive.metastore.warehouse.dir is set. Setting spark.sql.warehouse.dir to the value of hive.metastore.warehouse.dir ('/cluster1/user/hive/warehouse/'). 2022-10-12 08:42:25 INFO internal.SharedState: Warehouse path is '/cluster1/user/hive/warehouse/'. 2022-10-12 08:42:25 INFO session.SessionState: Created HDFS directory: /cluster1/tmp/hive/hadoop/ab87d9d4-2d69-4865-abc8-22ff8fdf31a4 2022-10-12 08:42:26 INFO session.SessionState: Created local directory: /tmp/hadoop/ab87d9d4-2d69-4865-abc8-22ff8fdf31a4 2022-10-12 08:42:26 INFO session.SessionState: Created HDFS directory: /cluster1/tmp/hive/hadoop/ab87d9d4-2d69-4865-abc8-22ff8fdf31a4/_tmp_space.db [INFO] 2022-10-12 00:42:27.064 +0000 - -> 2022-10-12 08:42:26 INFO spark.SparkContext: Running Spark version 3.0.1 2022-10-12 08:42:26 INFO resource.ResourceUtils: ============================================================== 2022-10-12 08:42:26 INFO resource.ResourceUtils: Resources for spark.driver: 2022-10-12 08:42:26 INFO resource.ResourceUtils: ============================================================== 2022-10-12 08:42:26 INFO spark.SparkContext: Submitted application: SparkSQL::10.20.20.12 2022-10-12 08:42:26 INFO spark.SecurityManager: Changing view acls to: hadoop 2022-10-12 08:42:26 INFO spark.SecurityManager: Changing modify acls to: hadoop 2022-10-12 08:42:26 INFO spark.SecurityManager: Changing view acls groups to: 2022-10-12 08:42:26 INFO spark.SecurityManager: Changing modify acls groups to: 2022-10-12 08:42:26 INFO spark.SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(hadoop); groups with view permissions: Set(); users with modify permissions: Set(hadoop); groups with modify permissions: Set() 2022-10-12 08:42:26 INFO util.Utils: Successfully started service 'sparkDriver' on port 36912. 2022-10-12 08:42:26 INFO spark.SparkEnv: Registering MapOutputTracker 2022-10-12 08:42:26 INFO spark.SparkEnv: Registering BlockManagerMaster 2022-10-12 08:42:26 INFO storage.BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information 2022-10-12 08:42:26 INFO storage.BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up 2022-10-12 08:42:26 INFO spark.SparkEnv: Registering BlockManagerMasterHeartbeat 2022-10-12 08:42:26 INFO storage.DiskBlockManager: Created local directory at /home/data/udp/2.0.0.0/spark/blockmgr-d9518483-40a9-44c7-8af8-fba2c519508e 2022-10-12 08:42:26 INFO memory.MemoryStore: MemoryStore started with capacity 93.3 MiB 2022-10-12 08:42:26 INFO spark.SparkEnv: Registering OutputCommitCoordinator 2022-10-12 08:42:26 INFO util.log: Logging initialized @2161ms to org.sparkproject.jetty.util.log.Slf4jLog 2022-10-12 08:42:26 INFO server.Server: jetty-9.4.z-SNAPSHOT; built: 2019-04-29T20:42:08.989Z; git: e1bc35120a6617ee3df052294e433f3a25ce7097; jvm 1.8.0_202-b08 2022-10-12 08:42:26 INFO server.Server: Started @2227ms 2022-10-12 08:42:26 INFO server.AbstractConnector: Started ServerConnector@64f1fd08{HTTP/1.1,[http/1.1]}{0.0.0.0:4040} 2022-10-12 08:42:26 INFO util.Utils: Successfully started service 'SparkUI' on port 4040. 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@10358c32{/jobs,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@5f117b3d{/jobs/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@71b6d77f{/jobs/job,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@78226c36{/jobs/job/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@11f406f8{/stages,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@622fdb81{/stages/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@2ec3633f{/stages/stage,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@20576557{/stages/stage/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@45c2e0a6{/stages/pool,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@a7ad6e5{/stages/pool/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@690bc15e{/storage,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@475646d4{/storage/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@3db972d2{/storage/rdd,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@687e4c93{/storage/rdd/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@6ec7bce0{/environment,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@3727f0ee{/environment/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@fb713e7{/executors,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@13741d5a{/executors/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@5c7668ba{/executors/threadDump,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@158f4cfe{/executors/threadDump/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@5aaaa446{/static,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@3d37203b{/,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@1894593a{/api,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@5527b211{/jobs/job/kill,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@78010562{/stages/stage/kill,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO ui.SparkUI: Bound SparkUI to 0.0.0.0, and started at http://h12:4040 2022-10-12 08:42:26 INFO executor.Executor: Starting executor ID driver on host h12 2022-10-12 08:42:26 INFO util.Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 58572. 2022-10-12 08:42:26 INFO netty.NettyBlockTransferService: Server created on h12:58572 2022-10-12 08:42:26 INFO storage.BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy 2022-10-12 08:42:26 INFO storage.BlockManagerMaster: Registering BlockManager BlockManagerId(driver, h12, 58572, None) 2022-10-12 08:42:26 INFO storage.BlockManagerMasterEndpoint: Registering block manager h12:58572 with 93.3 MiB RAM, BlockManagerId(driver, h12, 58572, None) 2022-10-12 08:42:26 INFO storage.BlockManagerMaster: Registered BlockManager BlockManagerId(driver, h12, 58572, None) 2022-10-12 08:42:26 INFO storage.BlockManager: Initialized BlockManager: BlockManagerId(driver, h12, 58572, None) 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@53f4c1e6{/metrics/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO history.SingleEventLogFileWriter: Logging events to hdfs://cluster1/cluster1/spark-logs/local-1665535346579.lz4.inprogress 2022-10-12 08:42:26 INFO internal.SharedState: loading hive config file: file:/opt/usdp-srv/srv/udp/2.0.0.0/spark/conf/hive-site.xml 2022-10-12 08:42:26 INFO internal.SharedState: spark.sql.warehouse.dir is not set, but hive.metastore.warehouse.dir is set. Setting spark.sql.warehouse.dir to the value of hive.metastore.warehouse.dir ('/cluster1/user/hive/warehouse/'). 2022-10-12 08:42:26 INFO internal.SharedState: Warehouse path is '/cluster1/user/hive/warehouse/'. 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@135a8c6f{/SQL,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@280d4882{/SQL/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@56b859a6{/SQL/execution,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@42aae04d{/SQL/execution/json,null,AVAILABLE,@Spark} 2022-10-12 08:42:26 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@516462cc{/static/sql,null,AVAILABLE,@Spark} [INFO] 2022-10-12 00:42:28.065 +0000 - -> 2022-10-12 08:42:27 INFO hive.HiveUtils: Initializing HiveMetastoreConnection version 2.3.7 using Spark classes. 2022-10-12 08:42:27 INFO client.HiveClientImpl: Warehouse location for Hive client (version 2.3.7) is /cluster1/user/hive/warehouse/ 2022-10-12 08:42:27 INFO hive.metastore: Trying to connect to metastore with URI thrift://h10:9083 2022-10-12 08:42:27 INFO hive.metastore: Opened a connection to metastore, current connections: 1 2022-10-12 08:42:27 INFO hive.metastore: Connected to metastore. Error in query: no viable alternative at input 'select \n'(line 2, pos 0) == SQL == select ^^^ 2022-10-12 08:42:27 INFO server.AbstractConnector: Stopped Spark@64f1fd08{HTTP/1.1,[http/1.1]}{0.0.0.0:4040} 2022-10-12 08:42:27 INFO ui.SparkUI: Stopped Spark web UI at http://h12:4040 [INFO] 2022-10-12 00:42:28.296 +0000 - process has exited, execute path:/tmp/dolphinscheduler/exec/process/7170590636544/7172773527040_35/121/137, processId:689910 ,exitStatusCode:1 ,processWaitForStatus:true ,processExitValue:1 [INFO] 2022-10-12 00:42:29.066 +0000 - -> 2022-10-12 08:42:28 INFO spark.MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped! 2022-10-12 08:42:28 INFO memory.MemoryStore: MemoryStore cleared 2022-10-12 08:42:28 INFO storage.BlockManager: BlockManager stopped 2022-10-12 08:42:28 INFO storage.BlockManagerMaster: BlockManagerMaster stopped 2022-10-12 08:42:28 INFO scheduler.OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped! 2022-10-12 08:42:28 INFO spark.SparkContext: Successfully stopped SparkContext 2022-10-12 08:42:28 INFO util.ShutdownHookManager: Shutdown hook called 2022-10-12 08:42:28 INFO util.ShutdownHookManager: Deleting directory /tmp/spark-69e6cf7a-7ef6-45ed-b2ff-3ce5ff5a3d89 2022-10-12 08:42:28 INFO util.ShutdownHookManager: Deleting directory /home/data/udp/2.0.0.0/spark/spark-c2a0103c-3876-445e-a12a-06798cb962da [INFO] 2022-10-12 00:42:29.067 +0000 - FINALIZE_SESSION -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: [email protected] For queries about this service, please contact Infrastructure at: [email protected]