alextinng opened a new issue, #13386: URL: https://github.com/apache/dolphinscheduler/issues/13386

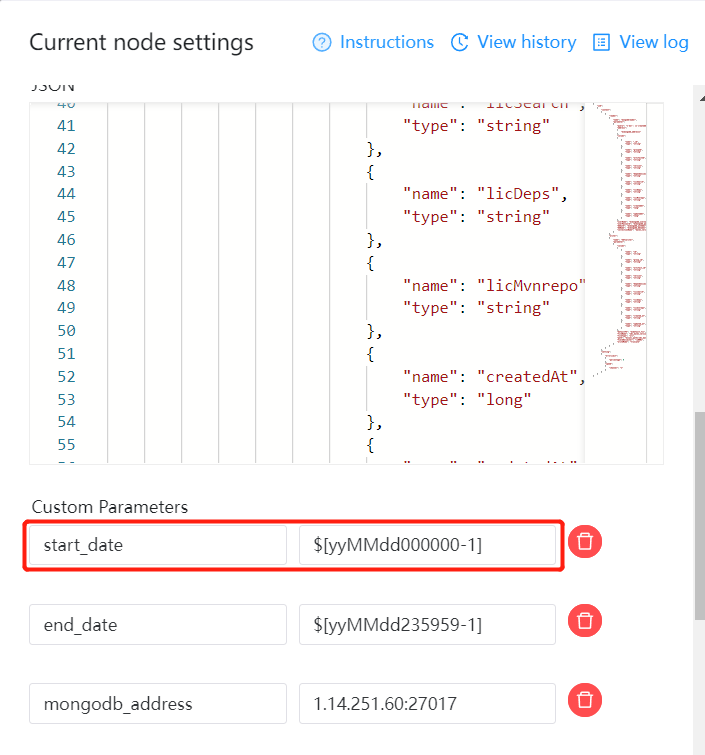

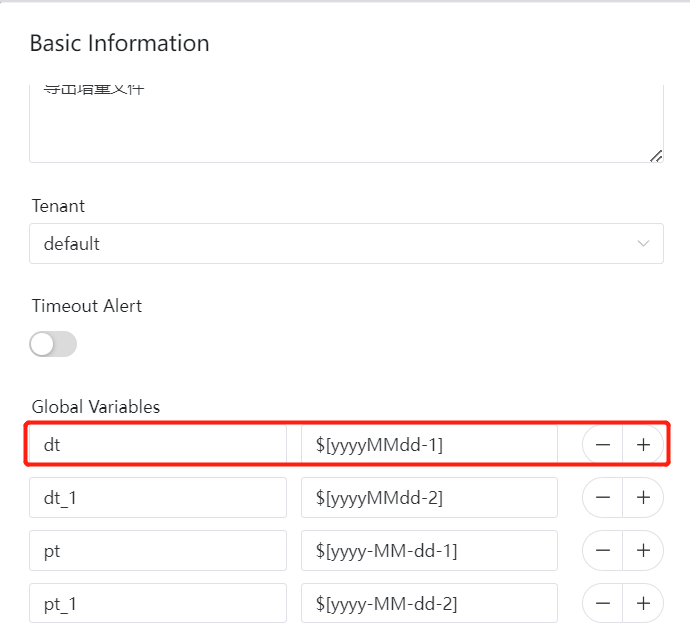

### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/dolphinscheduler/issues?q=is%3Aissue) and found no similar issues.

### What happened

I built a workflow and tried to run complement data on it, and then found a wrong date value in the task log.

I defined a global variable `dt` whose value is `$[yyyyMMdd-1]`; after substitution, `dt` is `20230106`. I also defined a variable `start_date` whose value is `$[yyMMdd000000-1]`; after substitution, `start_date` is `230105000000`, one day earlier than `dt`.

### What you expected to happen

`dt` and `start_date` should resolve to the same date, since both use the same `-1` day offset.

### How to reproduce

1. Drag a DataX task into the workflow.
2. Define a custom variable on the task.
3. Define a global variable.
4. Set the schedule date to three days ago and run complement data.

### Anything else

Task log:

1. When it is not a complement-data run, `dt` and `start_date` resolve to the same date (`20230111` and `230111000000`):

```
[INFO] 2023-01-12 03:18:32.712 +0000 - datax task params {"localParams":[{"prop":"start_date","value":"$[yyMMdd000000-1]","direct":"IN","type":"VARCHAR"},{"prop":"end_date","value":"$[yyMMdd235959-1]","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_address","value":"1.14.251.60:27017","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_username","value":"datax","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_password","value":"Seczone2022","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_authdb","value":"seczone","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_dbname","value":"seczone","direct":"IN","type":"VARCHAR"}],"resourceList":[],"customConfig":1,"json":"{\r\n \"job\":\r\n {\r\n \"content\":\r\n [\r\n {\r\n \"reader\":\r\n {\r\n \"name\": \"mongodbreader\",\r\n \"parameter\":\r\n {\r\n \"query\": \"{'$or': [{'createdAt': {'$gte': ${start_date}, '$lte': ${end_date}}},{'updatedAt': {'$gte': ${start_date}, '$lte': ${end_date}}}]}\",\r\n \"address\":\r\n [\r\n \"${mongodb_address}\"\r\n ],\r\n \"column\":\r\n [\r\n {\r\n \"name\": \"_id\",\r\n \"type\": \"string\"\r\n }\r\n ],\r\n \"userName\": \"${mongodb_username}\",\r\n \"userPassword\": \"${mongodb_password}\",\r\n \"dbAuth\": \"${mongodb_authdb}\",\r\n \"dbName\": \"${mongodb_dbname}\",\r\n \"collectionName\": \"maven_version\"\r\n }\r\n },\r\n \"writer\":\r\n {\r\n \"name\": \"hdfswriter\",\r\n \"parameter\":\r\n {\r\n \"column\":\r\n [\r\n {\r\n \"name\": \"id\",\r\n \"type\": \"string\"\r\n }\r\n ],\r\n \"defaultFS\": \"${default_fs}\",\r\n \"fileName\": \"xxx\",\r\n \"fileType\": \"orc\",\r\n \"path\": \"${sync_path}/xxx/dt=${dt}\",\r\n \"fieldDelimiter\": \"\\u0001\",\r\n \"writeMode\": \"truncate\"\r\n }\r\n }\r\n }\r\n ],\r\n \"setting\":\r\n {\r\n \"errorLimit\":\r\n {\r\n \"percentage\": 0\r\n },\r\n \"speed\":\r\n {\r\n \"channel\": \"1\"\r\n }\r\n }\r\n }\r\n}","xms":1,"xmx":1}
[INFO] 2023-01-12 03:18:32.714 +0000 - tenantCode user:root, task dir:129_323
[INFO] 2023-01-12 03:18:32.714 +0000 - create command file:/tmp/dolphinscheduler/exec/process/8135734005248/8166473070208_9/129/323/129_323.command
[INFO] 2023-01-12 03:18:32.714 +0000 - command : #!/bin/bash
BASEDIR=$(cd `dirname $0`; pwd)
cd $BASEDIR
export JAVA_HOME=/usr/lib/java-1.8.0
export HADOOP_HOME=/opt/hadoop
export HIVE_HOME=/opt/hive
export DATAX_HOME=/opt/datax
export SPARK_HOME=/opt/spark2
export YARN_CONF_DIR=/etc/hadoop/conf
export PATH=$PATH:$HADOOP_HOME/bin:$SPARK_HOME/bin:$JAVA_HOME/bin:$HIVE_HOME/bin:$DATAX_HOME/bin
/tmp/dolphinscheduler/exec/process/8135734005248/8166473070208_9/129/323/129_323_node.sh
[INFO] 2023-01-12 03:18:32.716 +0000 - task run command: sudo -u root sh /tmp/dolphinscheduler/exec/process/8135734005248/8166473070208_9/129/323/129_323.command
[INFO] 2023-01-12 03:18:32.716 +0000 - process start, process id is: 8672
[INFO] 2023-01-12 03:18:33.716 +0000 - -> DataX (DATAX-OPENSOURCE-3.0), From Alibaba !
Copyright (C) 2010-2017, Alibaba Group. All Rights Reserved.
2023-01-12 11:18:33.338 [main] INFO VMInfo - VMInfo# operatingSystem class => sun.management.OperatingSystemImpl
2023-01-12 11:18:33.346 [main] INFO Engine - the machine info =>
  osInfo: Oracle Corporation 1.8 25.102-b14
  jvmInfo: Linux amd64 3.10.0-514.el7.x86_64
  cpu num: 16
  totalPhysicalMemory: -0.00G
  freePhysicalMemory: -0.00G
  maxFileDescriptorCount: -1
  currentOpenFileDescriptorCount: -1
  GC Names [PS MarkSweep, PS Scavenge]
  MEMORY_NAME | allocation_size | init_size
  PS Eden Space | 256.00MB | 256.00MB
  Code Cache | 240.00MB | 2.44MB
  Compressed Class Space | 1,024.00MB | 0.00MB
  PS Survivor Space | 42.50MB | 42.50MB
  PS Old Gen | 683.00MB | 683.00MB
  Metaspace | -0.00MB | 0.00MB
2023-01-12 11:18:33.381 [main] INFO Engine - { "content":[ { "reader":{ "name":"mongodbreader", "parameter":{ "address":[ "localhost:3306" ], "collectionName":"maven_version", "column":[ { "name":"_id", "type":"string" } ], "dbAuth":"xx", "dbName":"xx", "query":"{'$or': [{'createdAt': {'$gte': 230111000000, '$lte': 230111235959}},{'updatedAt': {'$gte': 230111000000, '$lte': 230111235959}}]}", "userName":"xxx", "userPassword":"***********" } }, "writer":{ "name":"hdfswriter", "parameter":{ "column":[ { "name":"id", "type":"string" } ], "defaultFS":"", "fieldDelimiter":"\u0001", "fileName":"xxxxx", "fileType":"orc", "path":"/path/dt=20230111", "writeMode":"truncate" } } } ], "setting":{ "errorLimit":{ "percentage":0 }, "speed":{ "channel":"1" } } }
```

2. When it is a complement-data run, `dt` and `start_date` differ (`20230106` vs. `230105000000`):

```
[INFO] 2023-01-12 03:17:33.896 +0000 - datax task params {"localParams":[{"prop":"start_date","value":"$[yyMMdd000000-1]","direct":"IN","type":"VARCHAR"},{"prop":"end_date","value":"$[yyMMdd235959-1]","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_address","value":"1.14.251.60:27017","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_username","value":"datax","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_password","value":"Seczone2022","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_authdb","value":"seczone","direct":"IN","type":"VARCHAR"},{"prop":"mongodb_dbname","value":"seczone","direct":"IN","type":"VARCHAR"}],"resourceList":[],"customConfig":1,"json":"{\r\n \"job\":\r\n {\r\n \"content\":\r\n [\r\n {\r\n \"reader\":\r\n {\r\n \"name\": \"mongodbreader\",\r\n \"parameter\":\r\n {\r\n \"query\": \"{'$or': [{'createdAt': {'$gte': ${start_date}, '$lte': ${end_date}}},{'updatedAt': {'$gte': ${start_date}, '$lte': ${end_date}}}]}\",\r\n \"address\":\r\n [\r\n \"${mongodb_address}\"\r\n ],\r\n \"column\":\r\n [\r\n {\r\n \"name\": \"_id\",\r\n \"type\": \"string\"\r\n } ],\r\n \"userName\": \"${mongodb_username}\",\r\n \"userPassword\": \"${mongodb_password}\",\r\n \"dbAuth\": \"${mongodb_authdb}\",\r\n \"dbName\": \"${mongodb_dbname}\",\r\n \"collectionName\": \"maven_version\"\r\n }\r\n },\r\n \"writer\":\r\n {\r\n \"name\": \"hdfswriter\",\r\n \"parameter\":\r\n {\r\n \"column\":\r\n [\r\n {\r\n \"name\": \"id\",\r\n \"type\": \"string\"\r\n } ],\r\n \"defaultFS\": \"${default_fs}\",\r\n \"fileName\": \"ods_maven_version\",\r\n \"fileType\": \"orc\",\r\n \"path\": \"${sync_path}/xxx/dt=${dt}\",\r\n \"fieldDelimiter\": \"\\u0001\",\r\n \"writeMode\": \"truncate\"\r\n }\r\n }\r\n }\r\n ],\r\n \"setting\":\r\n {\r\n \"errorLimit\":\r\n {\r\n \"percentage\": 0\r\n },\r\n \"speed\":\r\n {\r\n \"channel\": \"1\"\r\n } \r\n }\r\n }\r\n}","xms":1,"xmx":1}
[INFO] 2023-01-12 03:17:33.906 +0000 - tenantCode user:root, task dir:128_322
[INFO] 2023-01-12 03:17:33.906 +0000 - create command file:/tmp/dolphinscheduler/exec/process/8135734005248/8166473070208_9/128/322/128_322.command
[INFO] 2023-01-12 03:17:33.906 +0000 - command : #!/bin/bash
BASEDIR=$(cd `dirname $0`; pwd)
cd $BASEDIR
export JAVA_HOME=/usr/lib/java-1.8.0
export HADOOP_HOME=/opt/hadoop
export HIVE_HOME=/opt/hive
export DATAX_HOME=/opt/datax
export SPARK_HOME=/opt/spark2
export YARN_CONF_DIR=/etc/hadoop/conf
export PATH=$PATH:$HADOOP_HOME/bin:$SPARK_HOME/bin:$JAVA_HOME/bin:$HIVE_HOME/bin:$DATAX_HOME/bin
/tmp/dolphinscheduler/exec/process/8135734005248/8166473070208_9/128/322/128_322_node.sh
[INFO] 2023-01-12 03:17:33.908 +0000 - task run command: sudo -u root sh /tmp/dolphinscheduler/exec/process/8135734005248/8166473070208_9/128/322/128_322.command
[INFO] 2023-01-12 03:17:33.909 +0000 - process start, process id is: 8574
[INFO] 2023-01-12 03:17:34.909 +0000 - -> DataX (DATAX-OPENSOURCE-3.0), From Alibaba !
Copyright (C) 2010-2017, Alibaba Group. All Rights Reserved.
2023-01-12 11:17:34.303 [main] INFO VMInfo - VMInfo# operatingSystem class => sun.management.OperatingSystemImpl
2023-01-12 11:17:34.308 [main] INFO Engine - the machine info =>
  osInfo: Oracle Corporation 1.8 25.102-b14
  jvmInfo: Linux amd64 3.10.0-514.el7.x86_64
  cpu num: 16
  totalPhysicalMemory: -0.00G
  freePhysicalMemory: -0.00G
  maxFileDescriptorCount: -1
  currentOpenFileDescriptorCount: -1
  GC Names [PS MarkSweep, PS Scavenge]
  MEMORY_NAME | allocation_size | init_size
  PS Eden Space | 256.00MB | 256.00MB
  Code Cache | 240.00MB | 2.44MB
  Compressed Class Space | 1,024.00MB | 0.00MB
  PS Survivor Space | 42.50MB | 42.50MB
  PS Old Gen | 683.00MB | 683.00MB
  Metaspace | -0.00MB | 0.00MB
2023-01-12 11:17:34.325 [main] INFO Engine - { "content":[ { "reader":{ "name":"mongodbreader", "parameter":{ "address":[ "localhost:3306" ], "collectionName":"maven_version", "column":[ { "name":"_id", "type":"string" } ], "dbAuth":"xxx", "dbName":"xxx", "query":"{'$or': [{'createdAt': {'$gte': 230105000000, '$lte': 230105235959}},{'updatedAt': {'$gte': 230105000000, '$lte': 230105235959}}]}", "userName":"datax", "userPassword":"***********" } }, "writer":{ "name":"hdfswriter", "parameter":{ "column":[ { "name":"id", "type":"string" } ], "defaultFS":"xxxxx", "fieldDelimiter":"\u0001", "fileName":"xxx", "fileType":"orc", "path":"/path/xxx/dt=20230106", "writeMode":"truncate" } } } ], "setting":{ "errorLimit":{ "percentage":0 }, "speed":{ "channel":"1" } } }
2023-01-12 11:17:34.337 [main] WARN Engine - prioriy set to 0, because NumberFormatException, the value is: null
2023-01-12 11:17:34.339 [main] INFO PerfTrace - PerfTrace traceId=job_-1, isEnable=false, priority=0
2023-01-12 11:17:34.339 [main] INFO JobContainer - DataX jobContainer starts job.
2023-01-12 11:17:34.341 [main] INFO JobContainer - Set jobId = 0
2023-01-12 11:17:34.431 [job-0] INFO cluster - Cluster created with settings {hosts=[1.14.251.60:27017], mode=MULTIPLE, requiredClusterType=UNKNOWN, serverSelectionTimeout='30000 ms', maxWaitQueueSize=500}
2023-01-12 11:17:34.432 [job-0] INFO cluster - Adding discovered server 1.14.251.60:27017 to client view of cluster
2023-01-12 11:17:34.754 [cluster-ClusterId{value='63bf7bce19668f2189eb550b', description='null'}-1.14.251.60:27017] INFO connection - Opened connection [connectionId{localValue:1, serverValue:1062082}] to 1.14.251.60:27017
2023-01-12 11:17:34.780 [cluster-ClusterId{value='63bf7bce19668f2189eb550b', description='null'}-1.14.251.60:27017] INFO cluster - Monitor thread successfully connected to server with description ServerDescription{address=1.14.251.60:27017, type=STANDALONE, state=CONNECTED, ok=true, version=ServerVersion{versionList=[4, 0, 28]}, minWireVersion=0, maxWireVersion=7, maxDocumentSize=16777216, roundTripTimeNanos=25608745}
2023-01-12 11:17:34.781 [cluster-ClusterId{value='63bf7bce19668f2189eb550b', description='null'}-1.14.251.60:27017] INFO cluster - Discovered cluster type of STANDALONE
Jan 12, 2023 11:17:34 AM org.apache.hadoop.util.NativeCodeLoader <clinit>
WARNING: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
[INFO] 2023-01-12 03:17:35.910 +0000 - -> 2023-01-12 11:17:35.249 [job-0] INFO JobContainer - jobContainer starts to do prepare ...
2023-01-12 11:17:35.250 [job-0] INFO JobContainer - DataX Reader.Job [mongodbreader] do prepare work .
2023-01-12 11:17:35.250 [job-0] INFO JobContainer - DataX Writer.Job [hdfswriter] do prepare work .
2023-01-12 11:17:35.319 [job-0] INFO JobContainer - jobContainer starts to do split ...
2023-01-12 11:17:35.320 [job-0] INFO JobContainer - Job set Channel-Number to 1 channels.
2023-01-12 11:17:35.551 [job-0] INFO connection - Opened connection [connectionId{localValue:2, serverValue:1062085}] to 1.14.251.60:27017
2023-01-12 11:17:35.593 [job-0] INFO JobContainer - DataX Reader.Job [mongodbreader] splits to [1] tasks.
2023-01-12 11:17:35.593 [job-0] INFO HdfsWriter$Job - begin do split...
2023-01-12 11:17:35.596 [job-0] INFO HdfsWriter$Job - splited write file name:[hdfs://10.0.1.182:8020/user/sca/sca/sync/ods_maven_version/dt=20230106__51ebcc70_63b1_4040_a760_6a0d51fdaa06/ods_maven_version__c8fcfbd3_6c8a_4cd8_9dc2_9bd3ca308df6]
2023-01-12 11:17:35.596 [job-0] INFO HdfsWriter$Job - end do split.
2023-01-12 11:17:35.596 [job-0] INFO JobContainer - DataX Writer.Job [hdfswriter] splits to [1] tasks.
2023-01-12 11:17:35.610 [job-0] INFO JobContainer - jobContainer starts to do schedule ...
2023-01-12 11:17:35.614 [job-0] INFO JobContainer - Scheduler starts [1] taskGroups.
2023-01-12 11:17:35.615 [job-0] INFO JobContainer - Running by standalone Mode.
2023-01-12 11:17:35.620 [taskGroup-0] INFO TaskGroupContainer - taskGroupId=[0] start [1] channels for [1] tasks.
2023-01-12 11:17:35.623 [taskGroup-0] INFO Channel - Channel set byte_speed_limit to -1, No bps activated.
2023-01-12 11:17:35.623 [taskGroup-0] INFO Channel - Channel set record_speed_limit to -1, No tps activated.
2023-01-12 11:17:35.630 [taskGroup-0] INFO TaskGroupContainer - taskGroup[0] taskId[0] attemptCount[1] is started
2023-01-12 11:17:35.631 [0-0-0-reader] INFO cluster - Cluster created with settings {hosts=[1.14.251.60:27017], mode=MULTIPLE, requiredClusterType=UNKNOWN, serverSelectionTimeout='30000 ms', maxWaitQueueSize=500}
2023-01-12 11:17:35.631 [0-0-0-reader] INFO cluster - Adding discovered server 1.14.251.60:27017 to client view of cluster
2023-01-12 11:17:35.640 [0-0-0-reader] INFO cluster - No server chosen by ReadPreferenceServerSelector{readPreference=primary} from cluster description ClusterDescription{type=UNKNOWN, connectionMode=MULTIPLE, all=[ServerDescription{address=1.14.251.60:27017, type=UNKNOWN, state=CONNECTING}]}. Waiting for 30000 ms before timing out
2023-01-12 11:17:35.652 [0-0-0-writer] INFO HdfsWriter$Task - begin do write...
2023-01-12 11:17:35.653 [0-0-0-writer] INFO HdfsWriter$Task - write to file : [hdfs://10.0.1.182:8020/user/sca/sca/sync/ods_maven_version/dt=20230106__51ebcc70_63b1_4040_a760_6a0d51fdaa06/ods_maven_version__c8fcfbd3_6c8a_4cd8_9dc2_9bd3ca308df6]
2023-01-12 11:17:35.869 [cluster-ClusterId{value='63bf7bcf19668f2189eb550c', description='null'}-1.14.251.60:27017] INFO connection - Opened connection [connectionId{localValue:3, serverValue:1062086}] to 1.14.251.60:27017
2023-01-12 11:17:35.900 [cluster-ClusterId{value='63bf7bcf19668f2189eb550c', description='null'}-1.14.251.60:27017] INFO cluster - Monitor thread successfully connected to server with description ServerDescription{address=1.14.251.60:27017, type=STANDALONE, state=CONNECTED, ok=true, version=ServerVersion{versionList=[4, 0, 28]}, minWireVersion=0, maxWireVersion=7, maxDocumentSize=16777216, roundTripTimeNanos=30634535}
2023-01-12 11:17:35.900 [cluster-ClusterId{value='63bf7bcf19668f2189eb550c', description='null'}-1.14.251.60:27017] INFO cluster - Discovered cluster type of STANDALONE
[INFO] 2023-01-12 03:17:36.911 +0000 - -> 2023-01-12 11:17:36.122 [0-0-0-reader] INFO connection - Opened connection [connectionId{localValue:4, serverValue:1062089}] to 1.14.251.60:27017
[INFO] 2023-01-12 03:17:45.912 +0000 - -> 2023-01-12 11:17:45.628 [job-0] INFO StandAloneJobContainerCommunicator - Total 0 records, 0 bytes | Speed 0B/s, 0 records/s | Error 0 records, 0 bytes | All Task WaitWriterTime 0.000s | All Task WaitReaderTime 0.000s | Percentage 0.00%
[INFO] 2023-01-12 03:17:55.913 +0000 - -> 2023-01-12 11:17:55.629 [job-0] INFO StandAloneJobContainerCommunicator - Total 9344 records, 6061890 bytes | Speed 591.98KB/s, 934 records/s | Error 0 records, 0 bytes | All Task WaitWriterTime 0.139s | All Task WaitReaderTime 8.062s | Percentage 0.00%
[INFO] 2023-01-12 03:18:04.683 +0000 - process has exited, execute path:/tmp/dolphinscheduler/exec/process/8135734005248/8166473070208_9/128/322, processId:8574 ,exitStatusCode:137 ,processWaitForStatus:true ,processExitValue:137
[INFO] 2023-01-12 03:18:04.914 +0000 - FINALIZE_SESSION
```

### Version

3.0.x

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)
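For reference, the expectation under "What you expected to happen" can be sketched in Java: every time placeholder in one task instance is resolved against a single base date. This is a hypothetical model, not DolphinScheduler's implementation; the class `TimePlaceholderSketch`, its `resolve` method, and the simplified `$[pattern±days]` grammar (whole-day offsets only, `java.time` pattern letters) are assumptions made for illustration. With the base date of the non-complement run (2023-01-12), it yields the same values that appear in the first log.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical sketch, NOT DolphinScheduler's code: resolves a simplified
// $[<pattern><+|-><days>] expression against one shared base time.
public class TimePlaceholderSketch {

    // group(1) = java.time format pattern, group(2) = signed whole-day offset.
    private static final Pattern EXPR = Pattern.compile("\\$\\[(.+?)([+-]\\d+)\\]");

    public static String resolve(String expr, LocalDateTime base) {
        Matcher m = EXPR.matcher(expr);
        if (!m.matches()) {
            throw new IllegalArgumentException("unsupported expression: " + expr);
        }
        String pattern = m.group(1);
        long offsetDays = Long.parseLong(m.group(2)); // accepts "+7" as well as "-1"
        // Non-letter characters, such as the digits in "yyMMdd000000",
        // are copied literally into the output by DateTimeFormatter.
        return DateTimeFormatter.ofPattern(pattern).format(base.plusDays(offsetDays));
    }

    public static void main(String[] args) {
        // Base date of the non-complement run shown in the first task log.
        LocalDateTime base = LocalDateTime.of(2023, 1, 12, 0, 0);
        System.out.println("dt         = " + resolve("$[yyyyMMdd-1]", base));
        System.out.println("start_date = " + resolve("$[yyMMdd000000-1]", base));
        System.out.println("end_date   = " + resolve("$[yyMMdd235959-1]", base));
    }
}
```

Under this model, `dt` and `start_date` can only differ by a day, as they do in the complement run (`20230106` vs. `230105000000`), if they were resolved against two different base dates.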