ans76 opened a new issue #7681: URL: https://github.com/apache/incubator-doris/issues/7681

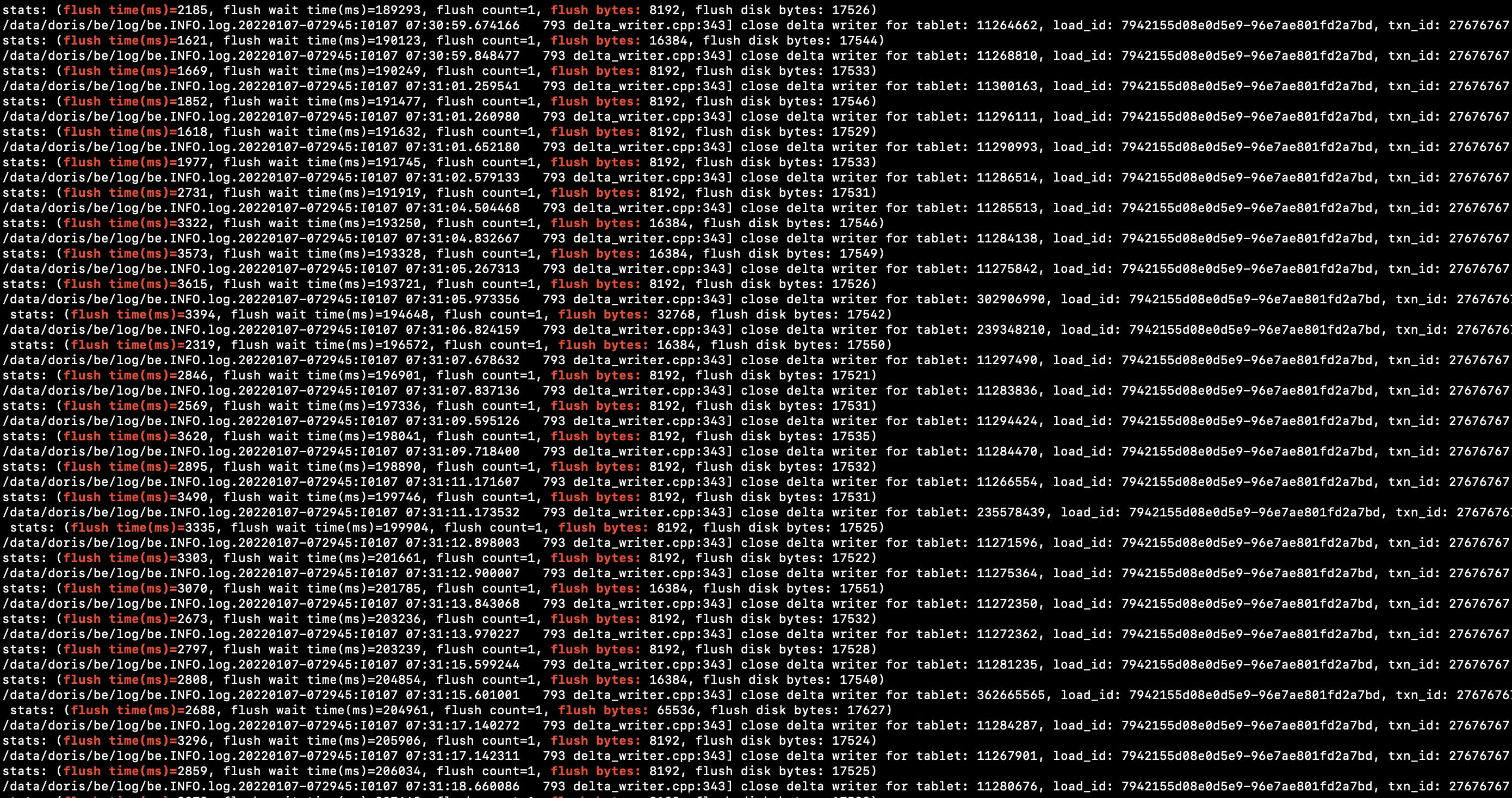

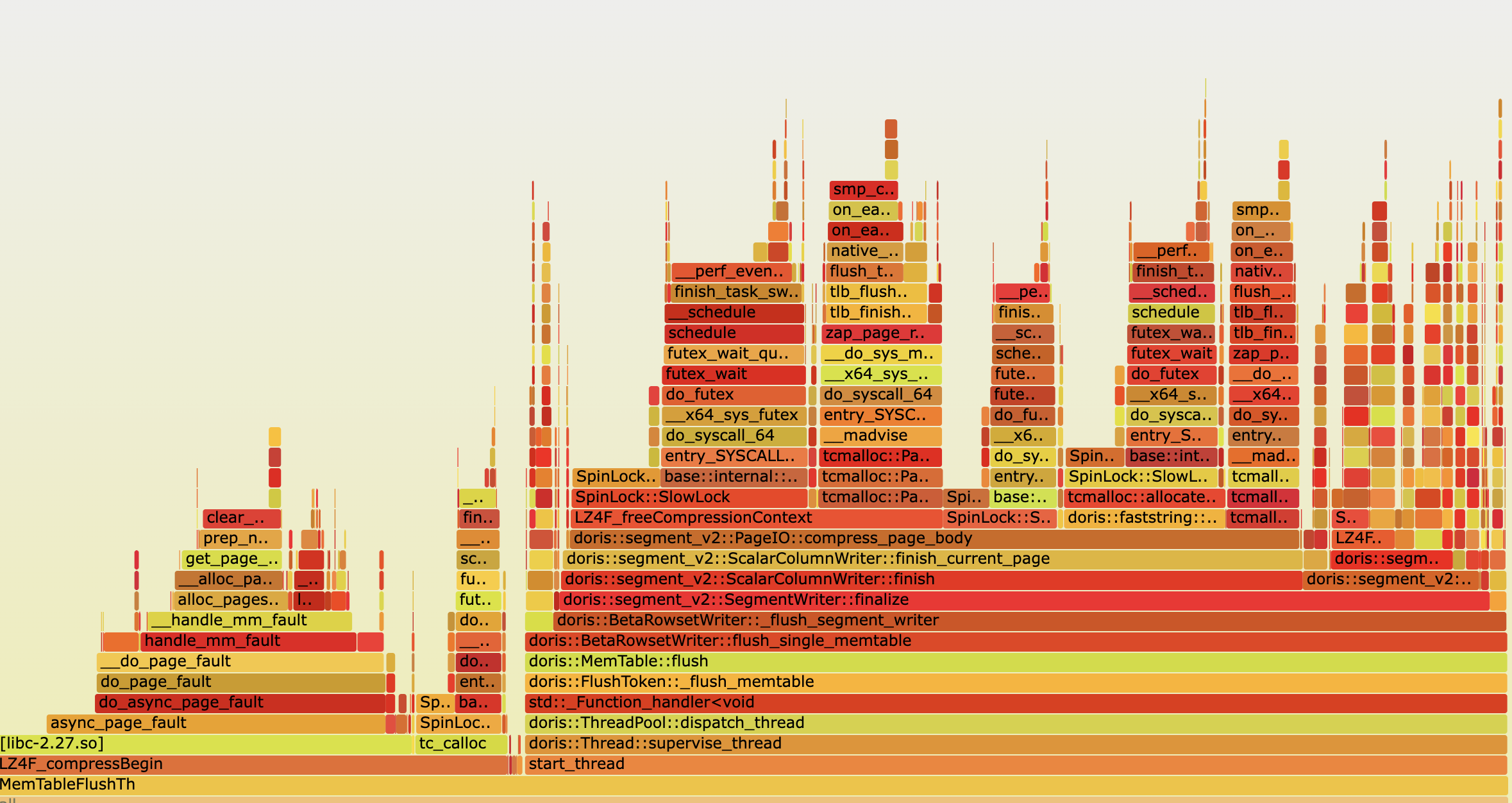

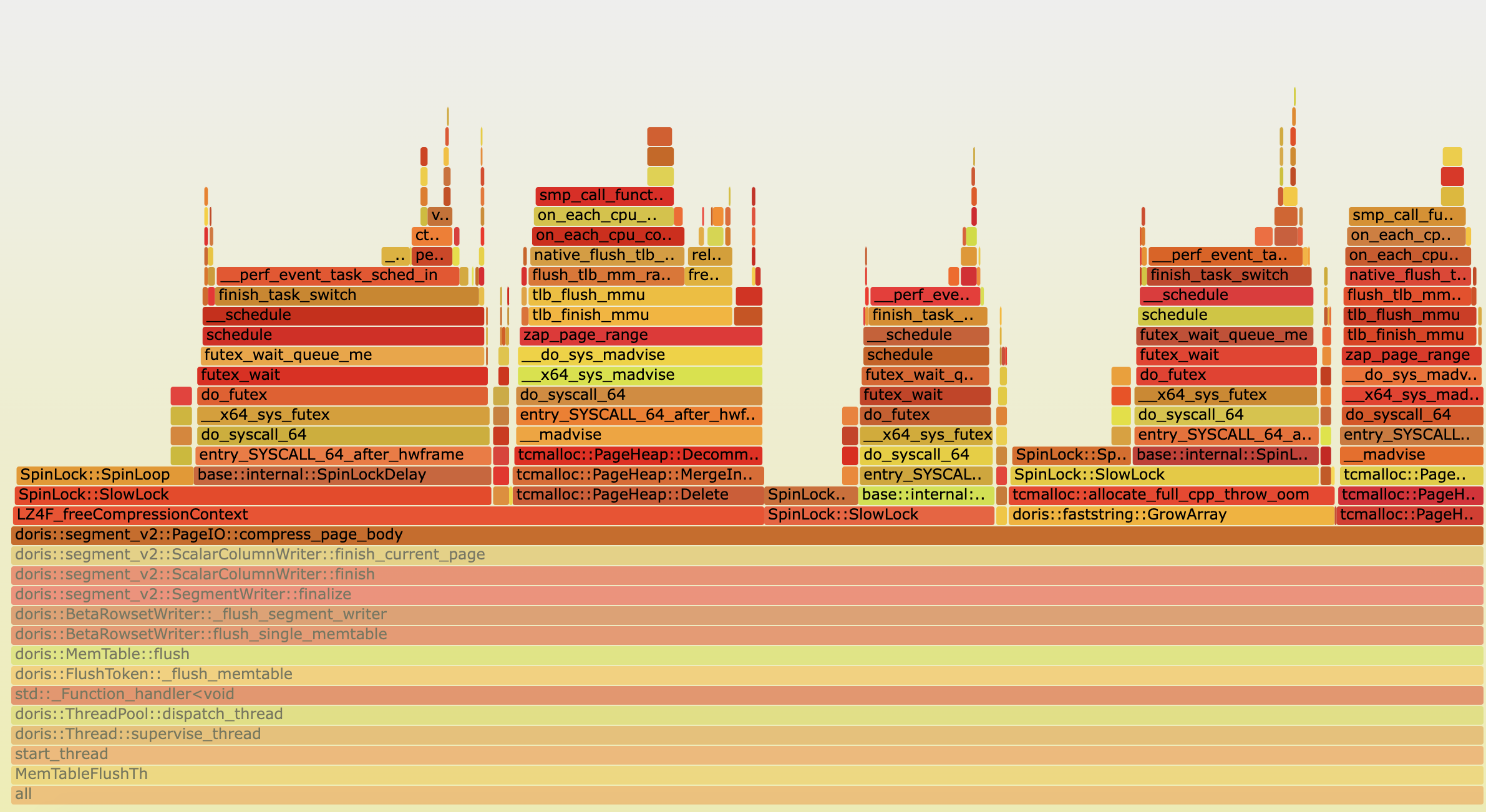

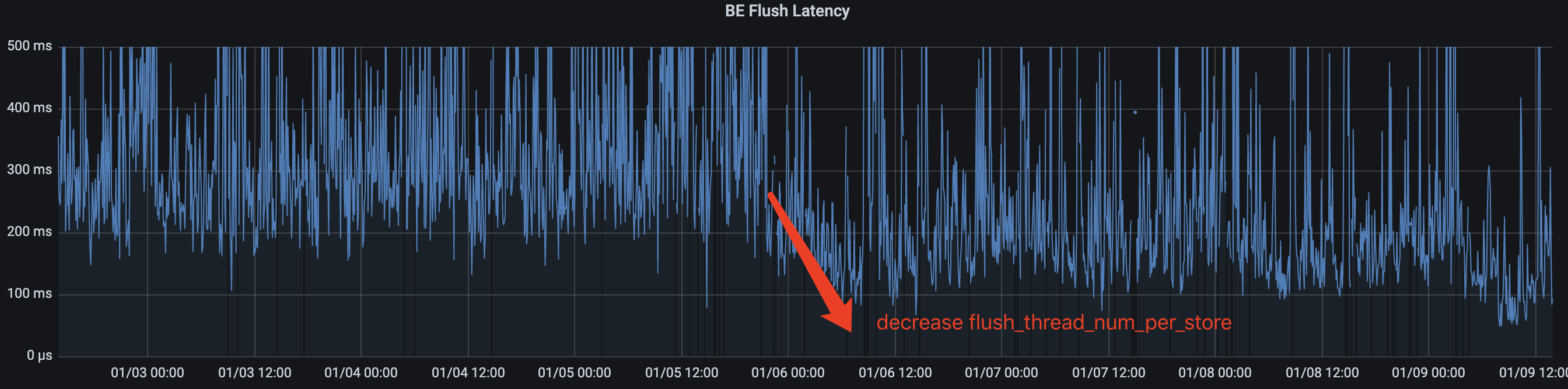

### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/incubator-doris/issues?q=is%3Aissue) and found no similar issues.

### Version

palo 0.14.7

### What's Wrong?

We're seeing memtable flushes that are too slow (not tied to any specific load task), which leaves many tablet write RPC requests queued. Normally, increasing `flush_thread_num_per_store` tells the engine to spend more resources for better flush throughput, but that does not work here: the flush time also increases, which is intriguing.

Digging a little deeper, we found that more time is spent in `LZ4F_freeCompressionContext` during flush. A few threads appear to hang on spinlock acquisition for a long time (even hours):

    Thread 32 (Thread 0x7f5de1be5700 (LWP 549)):
    #0 0x00000000017bbb44 in sys_futex (v3=0, a2=0x0, t=0x7f5de1bdcf60, v=<optimized out>, o=128, a=0x43a4408 <tcmalloc::Static::pageheap_lock_>) at /app/be/src/gutil/linux_syscall_support.h:2419
    #1 base::internal::SpinLockDelay (w=0x43a4408 <tcmalloc::Static::pageheap_lock_>, value=2, loop=<optimized out>) at /app/be/src/gutil/spinlock_linux-inl.h:80
    #2 0x0000000001d4ff5d in SpinLock::SlowLock (this=this@entry=0x43a4408 <tcmalloc::Static::pageheap_lock_>) at src/base/spinlock.cc:118
    #3 0x0000000002973708 in SpinLock::Lock (this=<optimized out>) at src/base/spinlock.h:71
    #4 SpinLockHolder::SpinLockHolder (l=<optimized out>, this=<synthetic pointer>) at src/base/spinlock.h:133
    #5 (anonymous namespace)::do_free_pages (span=0x1515f062b0, ptr=<optimized out>) at src/tcmalloc.cc:1403
    #6 0x0000000001ba1eae in LZ4F_freeCompressionContext (LZ4F_compressionContext=0x12336df6b0) at lz4frame.c:398
    #7 0x00000000012470ac in doris::Lz4fBlockCompression::compress (this=<optimized out>, inputs=..., output=0x7f5de1bdd120) at /app/be/src/util/block_compression.cpp:106
    #8 0x0000000001064bd6 in doris::segment_v2::PageIO::compress_page_body (codec=0x3791408 <doris::Lz4fBlockCompression::instance()::s_instance>, min_space_saving=0.10000000000000001, body=std::vector of length 1, capacity 1 = {...}, compressed_body=compressed_body@entry=0x7f5de1bdd220) at /app/be/src/olap/rowset/segment_v2/page_io.cpp:47
    #9 0x0000000001829bd8 in doris::segment_v2::PageIO::compress_and_write_page (result=0x7f5de1bdd210, footer=..., body=std::vector of length 1, capacity 1 = {...}, wblock=0x20b50a840, min_space_saving=<optimized out>, codec=<optimized out>) at /app/be/src/olap/rowset/segment_v2/page_io.h:97
    #10 doris::segment_v2::ScalarColumnWriter::write_data (this=0x1dc238c60) at /app/be/src/olap/rowset/segment_v2/column_writer.cpp:313
    #11 0x00000000017fe2ef in doris::segment_v2::SegmentWriter::_write_data (this=0x6e0238a80) at /app/be/src/olap/rowset/segment_v2/segment_writer.cpp:153
    #12 doris::segment_v2::SegmentWriter::finalize (this=0x6e0238a80, segment_file_size=segment_file_size@entry=0x7f5de1bdd3b8, index_size=index_size@entry=0x7f5de1bdd3c0) at /app/be/src/olap/rowset/segment_v2/segment_writer.cpp:136
    #13 0x00000000010b2005 in doris::BetaRowsetWriter::_flush_segment_writer (this=0xd2491cc80, writer=0x7f5de1bdd430) at /app/be/src/olap/rowset/beta_rowset_writer.cpp:248
    #14 0x00000000010b2e23 in doris::BetaRowsetWriter::flush_single_memtable (this=0xd2491cc80, memtable=<optimized out>, flush_size=0x122ca863f8) at /app/be/src/olap/rowset/beta_rowset_writer.cpp:169
    #15 0x00000000010580a4 in doris::MemTable::flush (this=0x122ca86300) at /app/be/src/olap/memtable.cpp:124
    #16 0x0000000000fe0fe9 in doris::FlushToken::_flush_memtable (this=0x838ffef90, memtable=std::shared_ptr<doris::MemTable> (use count 2, weak count 0) = {...}) at /app/be/src/olap/memtable_flush_executor.cpp:65
    #17 0x0000000000fe193f in std::__invoke_impl<void, void (doris::FlushToken::*&)(std::shared_ptr<doris::MemTable>), doris::FlushToken*&, std::shared_ptr<doris::MemTable>&> (__t=<optimized out>, __f=<optimized out>) at /usr/include/c++/7.3.0/bits/invoke.h:73
    #18 std::__invoke<void (doris::FlushToken::*&)(std::shared_ptr<doris::MemTable>), doris::FlushToken*&, std::shared_ptr<doris::MemTable>&> (__fn=<optimized out>) at /usr/include/c++/7.3.0/bits/invoke.h:95
    #19 std::_Bind<void (doris::FlushToken::*(doris::FlushToken*, std::shared_ptr<doris::MemTable>))(std::shared_ptr<doris::MemTable>)>::__call<void, , 0ul, 1ul>(std::tuple<>&&, std::_Index_tuple<0ul, 1ul>) (__args=..., this=<optimized out>) at /usr/include/c++/7.3.0/functional:467
    #20 std::_Bind<void (doris::FlushToken::*(doris::FlushToken*, std::shared_ptr<doris::MemTable>))(std::shared_ptr<doris::MemTable>)>::operator()<, void>() (this=<optimized out>) at /usr/include/c++/7.3.0/functional:551
    #21 std::_Function_handler<void (), std::_Bind<void (doris::FlushToken::*(doris::FlushToken*, std::shared_ptr<doris::MemTable>))(std::shared_ptr<doris::MemTable>)> >::_M_invoke(std::_Any_data const&) (__functor=...) at /usr/include/c++/7.3.0/bits/std_function.h:316
    #22 0x00000000012e84f2 in std::function<void ()>::operator()() const (this=0xfadc9d8d8) at /usr/include/c++/7.3.0/bits/std_function.h:706
    #23 doris::FunctionRunnable::run (this=0xfadc9d8d0) at /app/be/src/util/threadpool.cpp:42
    #24 doris::ThreadPool::dispatch_thread (this=0x5383c20) at /app/be/src/util/threadpool.cpp:548
    #25 0x00000000012e0358 in std::function<void ()>::operator()() const (this=0x53726a8) at /usr/include/c++/7.3.0/bits/std_function.h:706
    #26 doris::Thread::supervise_thread (arg=0x5372690) at /app/be/src/util/thread.cpp:385
    #27 0x00007f5defee26db in start_thread (arg=0x7f5de1be5700) at pthread_create.c:463
    #28 0x00007f5df021b71f in clone () at ../sysdeps/unix/sysv/linux/x86_64/clone.S:95

### What You Expected?

none

### How to Reproduce?

_No response_

### Anything Else?

_No response_

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.
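
One possible reading of frames #5 to #7 in the trace above is that `Lz4fBlockCompression::compress` creates and frees a compression context on every call, so each compressed page ends in a large free that needs tcmalloc's `pageheap_lock_`. If that reading is right, adding flush threads would add contention on that lock rather than throughput. The sketch below only illustrates the difference in allocation counts between a per-call context and a per-thread reused one. It is not Doris code and does not link against LZ4: `FakeContext`, `compress_with_fresh_context`, `compress_with_reused_context`, and `contexts_created` are hypothetical names, and the 64 KiB buffer size is an arbitrary assumption.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <memory>
#include <thread>
#include <vector>

// Hypothetical stand-in for an LZ4F compression context. It only counts how
// often a context is created, so both strategies can be compared without
// linking against liblz4.
struct FakeContext {
    static std::atomic<std::size_t> created;
    std::vector<char> scratch;  // models the context's large internal buffers
    FakeContext() : scratch(64 * 1024) { created.fetch_add(1); }
};
std::atomic<std::size_t> FakeContext::created{0};

// Assumed current pattern: one context per page, so every page pays for a
// large allocation and a large free.
void compress_with_fresh_context(std::size_t pages) {
    for (std::size_t i = 0; i < pages; ++i) {
        auto ctx = std::make_unique<FakeContext>();
        ctx->scratch[0] = static_cast<char>(i);  // pretend to compress a page
    }  // ctx is destroyed here, once per page
}

// Alternative: each thread creates one context lazily and reuses it.
void compress_with_reused_context(std::size_t pages) {
    thread_local std::unique_ptr<FakeContext> ctx;
    if (!ctx) ctx = std::make_unique<FakeContext>();
    assert(ctx != nullptr);
    for (std::size_t i = 0; i < pages; ++i) {
        ctx->scratch[0] = static_cast<char>(i);  // pretend to compress a page
    }
}

// Runs fn(pages) on `threads` worker threads and returns how many contexts
// were created in total.
template <typename Fn>
std::size_t contexts_created(Fn fn, std::size_t threads, std::size_t pages) {
    FakeContext::created.store(0);
    std::vector<std::thread> workers;
    for (std::size_t t = 0; t < threads; ++t) workers.emplace_back(fn, pages);
    for (auto& w : workers) w.join();
    return FakeContext::created.load();
}
```

With 4 threads and 100 pages each, the per-call variant creates one context per page while the reused variant creates one per thread. Whether reuse is safe for the real `LZ4F_createCompressionContext` / `LZ4F_freeCompressionContext` pair inside Doris, and whether it removes the stall reported here, would still need to be checked against the actual code and a profile.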