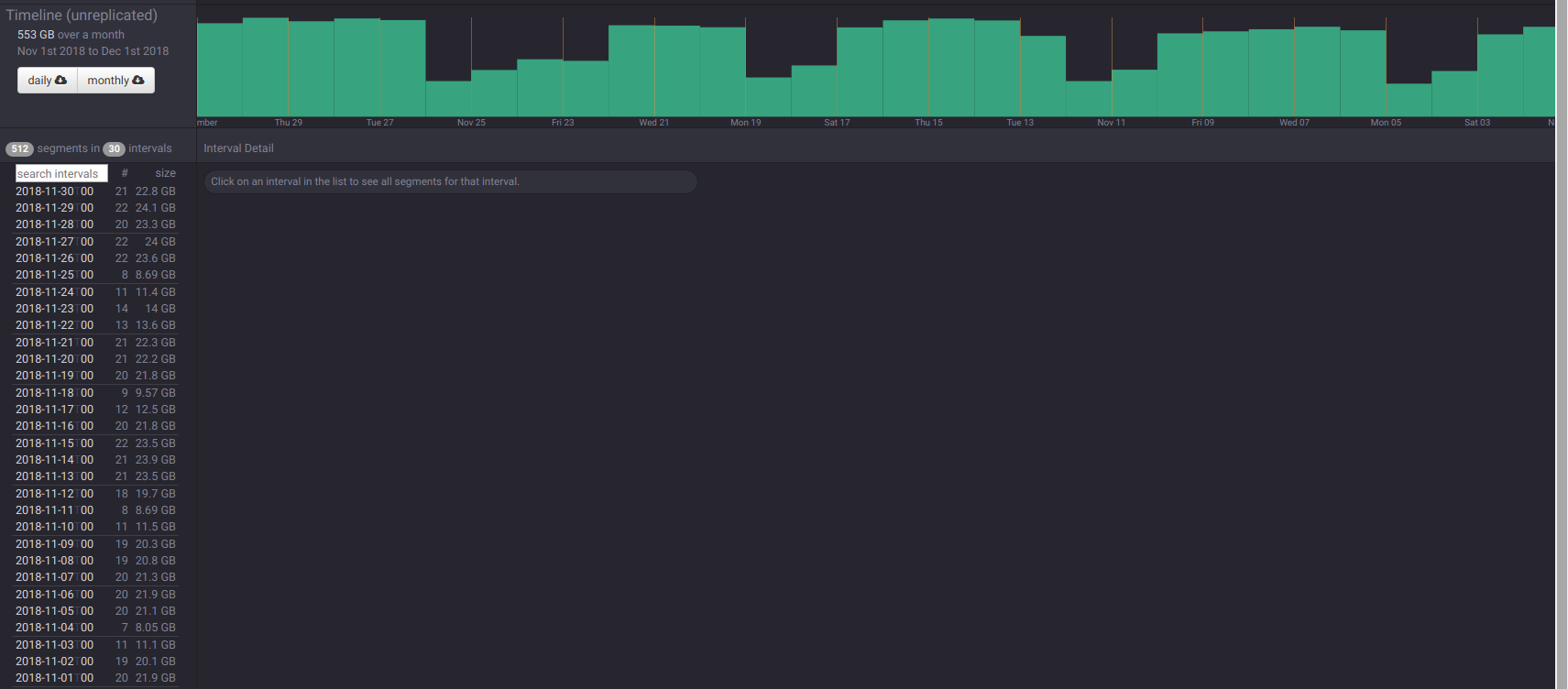

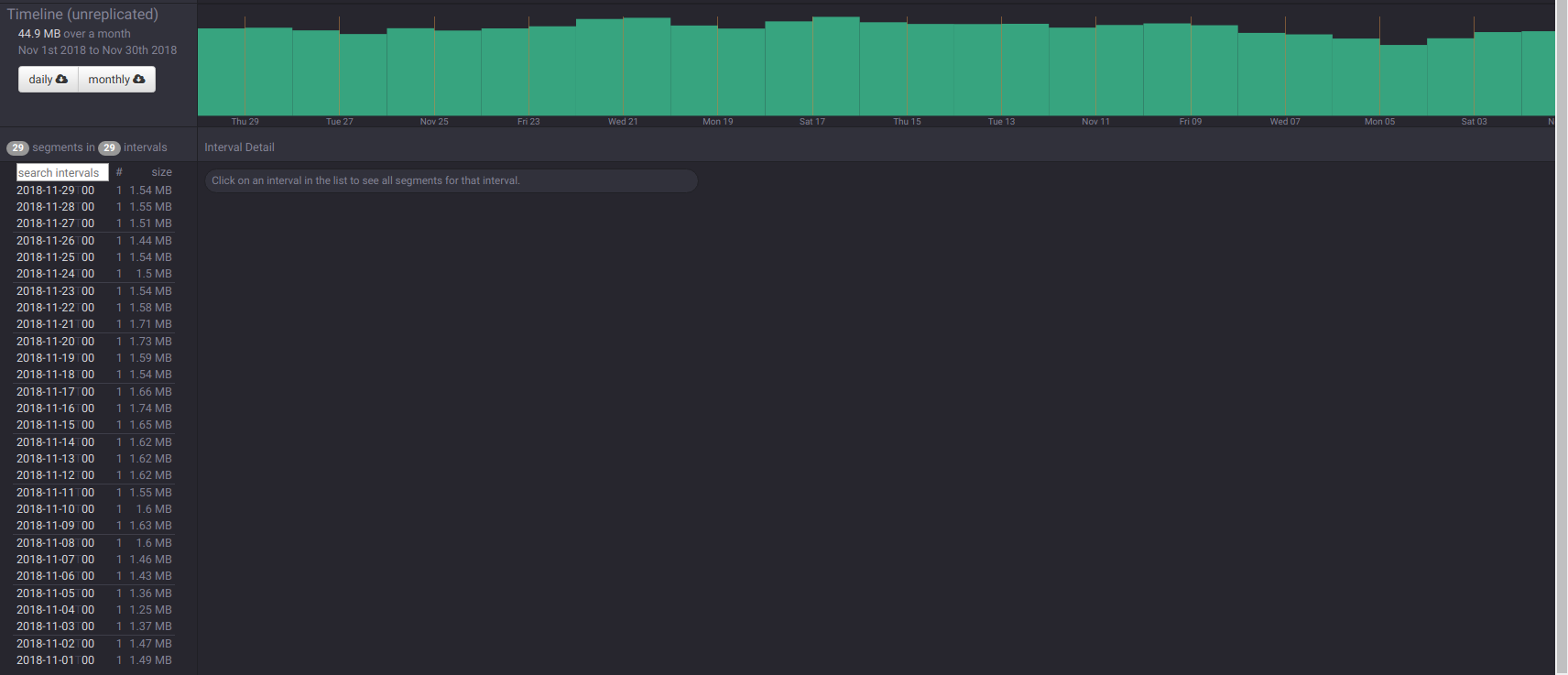

mihai-cazacu-adswizz opened a new issue #7119: [materialized view] The last day of month (and the last 24h) are not ingested by the Supervisor URL: https://github.com/apache/incubator-druid/issues/7119 Based on what @zhangxinyu1 said: > The timeline of derivativeDataSource is expected to be the same with baseDataSource's. Your can get the timeline of a dataSource from coordinator UI. and inspecting the timeline, the derivative data source has one day missing (the last day of the month). The base data source:  and the derivative one:  I have observed the same behavior on every view that I've created, no matter of the base data source and its type (`kafka` or `index_hadoop`). Here you can find a `kafka` ingestion spec: ``` { "type": "kafka", "dataSchema": { "dataSource": "some-data-source", "parser": { "type": "string", "parseSpec": { "format": "json", "timestampSpec": { "column": "timestamp", "format": "auto" }, "dimensionsSpec": { "dimensions": [], "dimensionExclusions": [ ... ], "spatialDimensions": [] } } }, "metricsSpec": [ ... 
], "granularitySpec": { "type": "uniform", "segmentGranularity": "HOUR", "queryGranularity": "HOUR", "rollup": true, "intervals": null }, "transformSpec": { "filter": null, "transforms": [] } }, "tuningConfig": { "type": "kafka", "maxRowsInMemory": 40000, "maxRowsPerSegment": 5000000, "intermediatePersistPeriod": "PT10M", "basePersistDirectory": "/mnt/druid/tmp/1550607663100-0", "maxPendingPersists": 0, "indexSpec": { "bitmap": { "type": "concise" }, "dimensionCompression": "lz4", "metricCompression": "lz4", "longEncoding": "longs" }, "buildV9Directly": true, "reportParseExceptions": false, "handoffConditionTimeout": 0, "resetOffsetAutomatically": true, "segmentWriteOutMediumFactory": null, "workerThreads": null, "chatThreads": null, "chatRetries": 20, "httpTimeout": "PT60S", "shutdownTimeout": "PT180S", "offsetFetchPeriod": "PT30S" }, "ioConfig": { "topic": "some-topic", "replicas": 1, "taskCount": 12, "taskDuration": "PT3600S", "consumerProperties": { "bootstrap.servers": "kafka-broker.site.com:9092", "group.id": "someGroupId", "auto.offset.reset": "latest", "max.partition.fetch.bytes": "4000000" }, "startDelay": "PT0S", "period": "PT30S", "useEarliestOffset": false, "completionTimeout": "PT1800S", "lateMessageRejectionPeriod": null, "earlyMessageRejectionPeriod": null, "skipOffsetGaps": false }, "context": null } ``` and a `index_hadoop` one: ``` { "type": "index_hadoop", "spec": { "dataSchema": { "dataSource": "some-data-source", "metricsSpec": [ ... ], "granularitySpec": { "type": "uniform", "segmentGranularity": "day", "queryGranularity": "hour", "intervals": [ "$INTERVALS" ] }, "parser": { "parseSpec": { "format": "json", "timestampSpec": { "column": "timestamp", "format": "auto" }, "dimensionsSpec": { "dimensions": [], "dimensionExclusions": [ ... 
], "spatialDimensions": [] } } } }, "ioConfig": { "type": "hadoop", "inputSpec": { "type": "static", "paths": "s3://some/path/" } }, "tuningConfig": { "type": "hadoop", "partitionsSpec": { "type": "hashed", "targetPartitionSize": 10000000 }, "maxRowsInMemory": 75000, "forceExtendableShardSpecs": true, "jobProperties": { "mapreduce.job.classloader": "true", "fs.s3.impl": "org.apache.hadoop.fs.s3native.NativeS3FileSystem", "fs.s3n.impl": "org.apache.hadoop.fs.s3native.NativeS3FileSystem", "io.compression.codecs": "org.apache.hadoop.io.compress.GzipCodec,org.apache.hadoop.io.compress.DefaultCodec,org.apache.hadoop.io.compress.BZip2Codec,org.apache.hadoop.io.compress.SnappyCodec", "mapreduce.reduce.shuffle.memory.limit.percent": "0.15", "mapreduce.reduce.shuffle.input.buffer.percent": "0.5" } } }, "hadoopDependencyCoordinates": [ "org.apache.hadoop:hadoop-client:2.7.3" ] } ```
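The check described in this issue, comparing the derivative data source's timeline against the base one, can also be scripted. Below is a minimal Python sketch. It assumes the segment intervals of both data sources have already been copied out as ISO-8601 `start/end` strings (for example, from the coordinator UI). The helper names and the sample intervals are hypothetical and serve only to illustrate the comparison. The sketch works at hour granularity, so a partially missing day, such as a missing last 24 hours, is still reported.

```python
from __future__ import annotations

from datetime import date, datetime, timedelta

HOUR = timedelta(hours=1)


def parse_instant(text: str) -> datetime:
    """Parse an ISO-8601 instant such as '2019-01-31T00:00:00.000Z' as UTC."""
    # datetime.fromisoformat() before Python 3.11 rejects a trailing "Z",
    # so rewrite it as an explicit UTC offset.
    return datetime.fromisoformat(text.replace("Z", "+00:00"))


def covered_hours(intervals: list[str]) -> set[datetime]:
    """Hypothetical helper: every UTC hour touched by 'start/end' intervals."""
    hours = set()
    for interval in intervals:
        start_text, end_text = interval.split("/")
        start, end = parse_instant(start_text), parse_instant(end_text)
        hour = start.replace(minute=0, second=0, microsecond=0)
        while hour < end:  # intervals are half-open: [start, end)
            hours.add(hour)
            hour += HOUR
    return hours


def days_missing_from_derivative(base: list[str], derivative: list[str]) -> list[date]:
    """Hypothetical helper: UTC days containing at least one hour that is in
    the base timeline but not in the derivative timeline."""
    missing = covered_hours(base) - covered_hours(derivative)
    return sorted({hour.date() for hour in missing})


# Made-up timelines for illustration: the derivative skips the last day of January.
base_timeline = [
    "2019-01-30T00:00:00.000Z/2019-01-31T00:00:00.000Z",
    "2019-01-31T00:00:00.000Z/2019-02-01T00:00:00.000Z",
    "2019-02-01T00:00:00.000Z/2019-02-02T00:00:00.000Z",
]
derivative_timeline = [
    "2019-01-30T00:00:00.000Z/2019-01-31T00:00:00.000Z",
    "2019-02-01T00:00:00.000Z/2019-02-02T00:00:00.000Z",
]
print(days_missing_from_derivative(base_timeline, derivative_timeline))
# [datetime.date(2019, 1, 31)]
```

Comparing covered hours instead of raw interval strings lets the check work even when the two data sources use different segment granularities, for example an hourly base and a daily derivative.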
