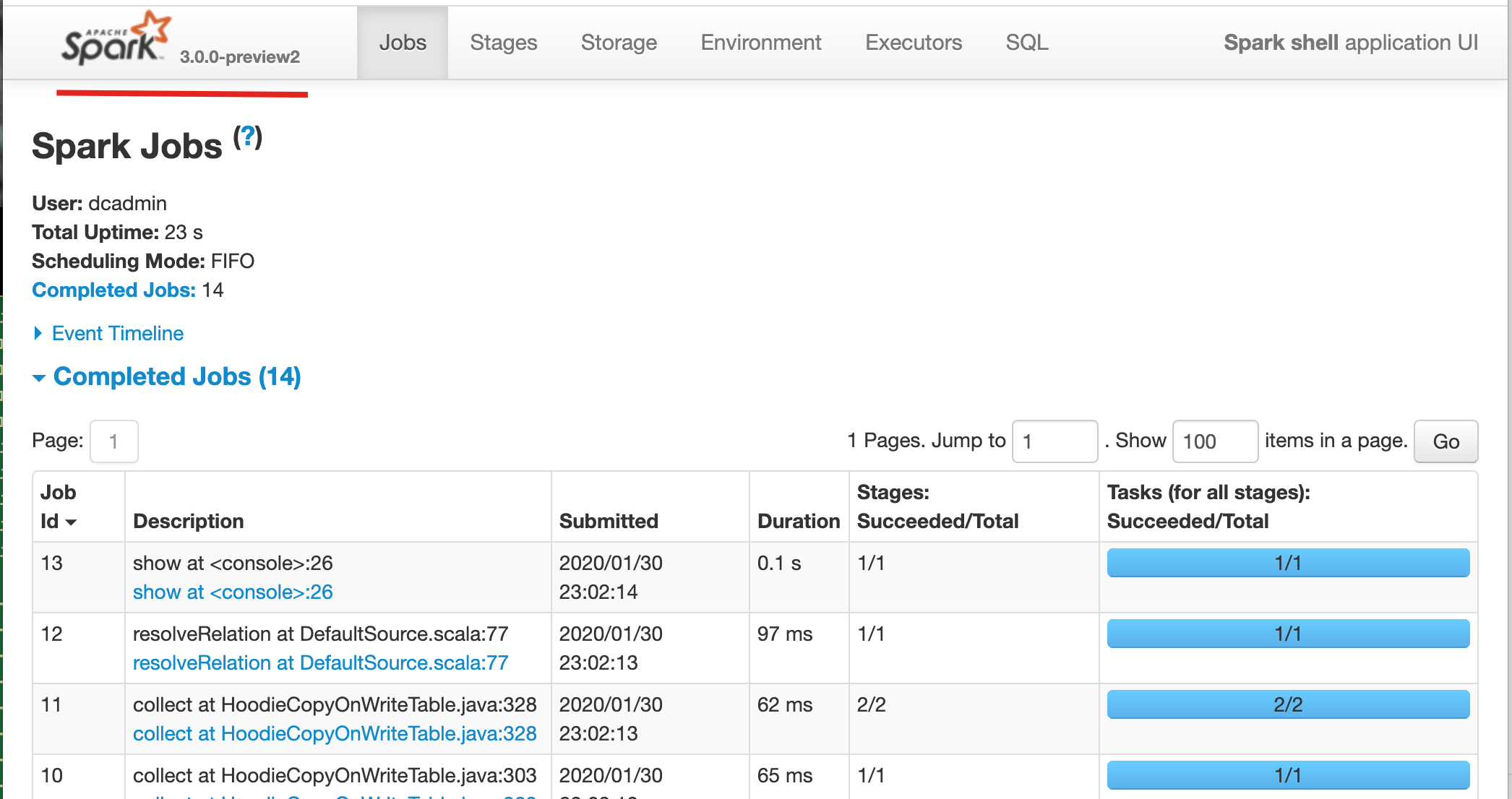

lamber-ken opened a new pull request #1293: [HUDI-585] Optimize the steps of building with scala-2.12
URL: https://github.com/apache/incubator-hudi/pull/1293

## What is the purpose of the pull request

On the master branch, building the Scala 2.12 version requires running `dev/change-scala-version.sh` first. This PR aims to simplify those steps.

## Brief change log

- *Remove dev/change-scala-version.sh script*

## Verify this pull request

**Test with `scala-2.11`**

```
mvn clean package -DskipTests -DskipITs
mvn clean package -DskipTests -DskipITs -Pscala-2.11
```

```
rm -rf /tmp/hudi_mor_table

export SPARK_HOME=/work/BigData/install/spark/spark-2.4.4-bin-hadoop2.7-2.11
${SPARK_HOME}/bin/spark-shell \
  --packages org.apache.spark:spark-avro_2.11:2.4.4 \
  --jars `ls packaging/hudi-spark-bundle/target/hudi-spark-bundle_*.*-*.*.*-SNAPSHOT.jar` \
  --conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer'

val basePath = "file:///tmp/hudi_mor_table"

var datas = List("""{ "name": "kenken", "ts": 1574297893836, "age": 12, "location": "latitude"}""")
val df = spark.read.json(spark.sparkContext.parallelize(datas, 2))

df.write.format("org.apache.hudi").
  option("hoodie.insert.shuffle.parallelism", "10").
  option("hoodie.upsert.shuffle.parallelism", "10").
  option("hoodie.delete.shuffle.parallelism", "10").
  option("hoodie.bulkinsert.shuffle.parallelism", "10").
  option("hoodie.datasource.write.recordkey.field", "name").
  option("hoodie.datasource.write.partitionpath.field", "location").
  option("hoodie.datasource.write.precombine.field", "ts").
  option("hoodie.table.name", "tableName").
  mode("Overwrite").
  save(basePath)

spark.read.format("org.apache.hudi").load(basePath + "/*/").show()
```

**Test with `scala-2.12`**

```
mvn clean package -DskipTests -DskipITs -Pscala-2.12
```

```
rm -rf /tmp/hudi_mor_table

export SPARK_HOME=/work/BigData/install/spark/spark-3.0.0-preview2-bin-hadoop2.7
${SPARK_HOME}/bin/spark-shell \
  --packages org.apache.spark:spark-avro_2.12:3.0.0-preview2 \
  --jars `ls packaging/hudi-spark-bundle/target/hudi-spark-bundle_*.*-*.*.*-SNAPSHOT.jar` \
  --conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer'

val basePath = "file:///tmp/hudi_mor_table"

var datas = List("""{ "name": "kenken", "ts": 1574297893836, "age": 12, "location": "latitude"}""")
val df = spark.read.json(spark.sparkContext.parallelize(datas, 2))

df.write.format("org.apache.hudi").
  option("hoodie.insert.shuffle.parallelism", "10").
  option("hoodie.upsert.shuffle.parallelism", "10").
  option("hoodie.delete.shuffle.parallelism", "10").
  option("hoodie.bulkinsert.shuffle.parallelism", "10").
  option("hoodie.datasource.write.recordkey.field", "name").
  option("hoodie.datasource.write.partitionpath.field", "location").
  option("hoodie.datasource.write.precombine.field", "ts").
  option("hoodie.table.name", "tableName").
  mode("Overwrite").
  save(basePath)

spark.read.format("org.apache.hudi").load(basePath + "/*/").show()
```

## Committer checklist

- [X] Has a corresponding JIRA in PR title & commit
- [X] Commit message is descriptive of the change
- [ ] CI is green
- [ ] Necessary doc changes done or have another open PR
- [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.

----------------------------------------------------------------
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
[email protected]

With regards,
Apache Git Services