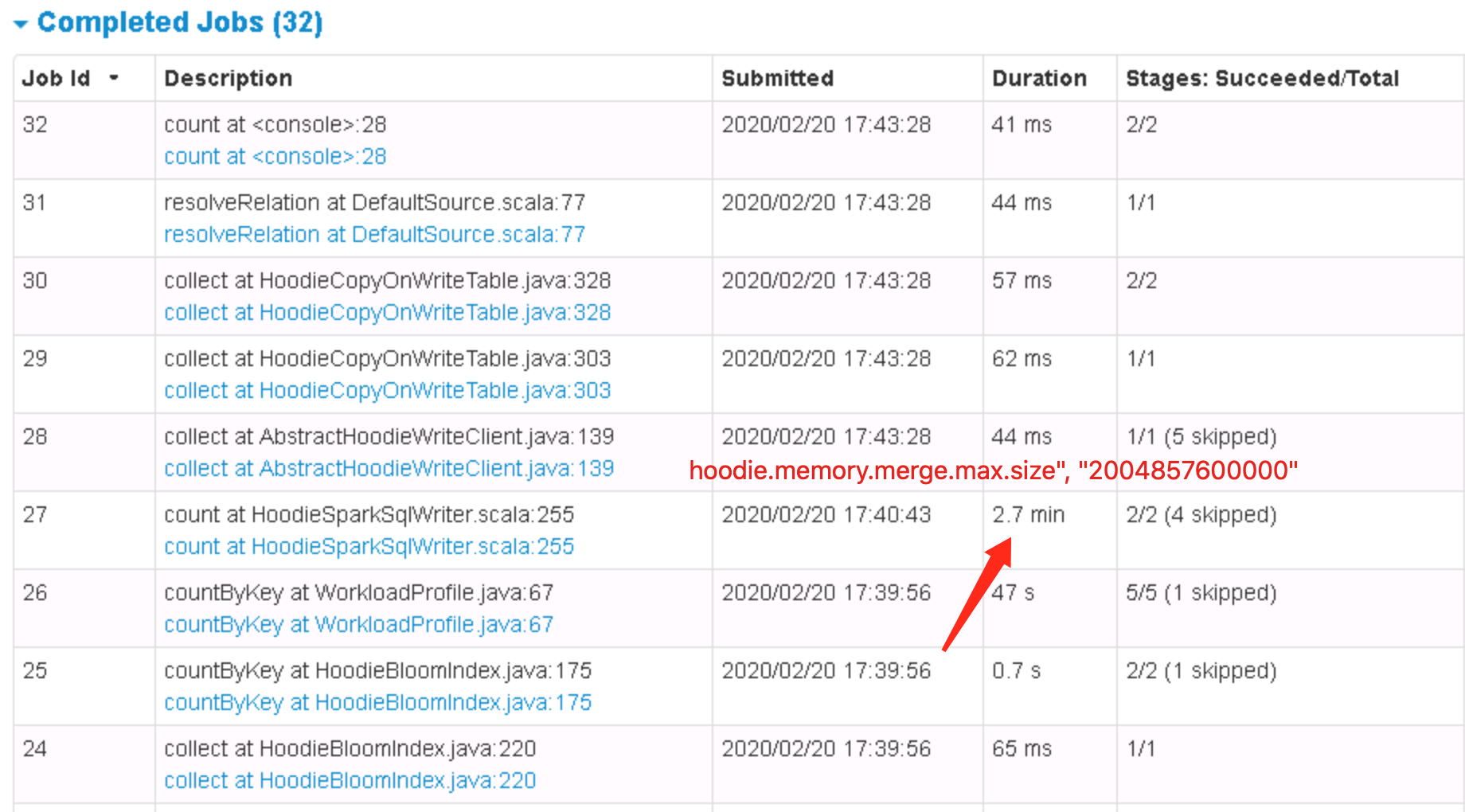

lamber-ken commented on issue #1328: Hudi upsert hangs
URL: https://github.com/apache/incubator-hudi/issues/1328#issuecomment-588969473

Hi @vinothchandar, I followed your steps.

**Analysis**:

Upsert (4000000 entries)

```
WARN HoodieMergeHandle:
Number of entries in MemoryBasedMap => 150875
Total size in bytes of MemoryBasedMap => 83886580
Number of entries in DiskBasedMap => 3849125
Size of file spilled to disk => 1443046132
```

Hang stack trace (`DiskBasedMap#get`)

```
"pool-21-thread-2" Id=696 cpuUsage=98% RUNNABLE
    at java.util.zip.ZipFile.getEntry(Native Method)
    at java.util.zip.ZipFile.getEntry(ZipFile.java:310)
    -  locked java.util.jar.JarFile@1fc27ed4
    at java.util.jar.JarFile.getEntry(JarFile.java:240)
    at java.util.jar.JarFile.getJarEntry(JarFile.java:223)
    at sun.misc.URLClassPath$JarLoader.getResource(URLClassPath.java:1005)
    at sun.misc.URLClassPath.getResource(URLClassPath.java:212)
    at java.net.URLClassLoader$1.run(URLClassLoader.java:365)
    at java.net.URLClassLoader$1.run(URLClassLoader.java:362)
    at java.security.AccessController.doPrivileged(Native Method)
    at java.net.URLClassLoader.findClass(URLClassLoader.java:361)
    at java.lang.ClassLoader.loadClass(ClassLoader.java:424)
    -  locked java.lang.Object@28f65251
    at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:331)
    at java.lang.ClassLoader.loadClass(ClassLoader.java:411)
    -  locked scala.reflect.internal.util.ScalaClassLoader$URLClassLoader@a353dff
    at java.lang.ClassLoader.loadClass(ClassLoader.java:411)
    -  locked com.esotericsoftware.reflectasm.AccessClassLoader@2c7122e2
    at com.esotericsoftware.reflectasm.AccessClassLoader.loadClass(AccessClassLoader.java:92)
    at java.lang.ClassLoader.loadClass(ClassLoader.java:357)
    at com.esotericsoftware.reflectasm.ConstructorAccess.get(ConstructorAccess.java:59)
    -  locked com.esotericsoftware.reflectasm.AccessClassLoader@2c7122e2
    at org.apache.hudi.common.util.SerializationUtils$KryoInstantiator$KryoBase.lambda$newInstantiator$0(SerializationUtils.java:151)
    at org.apache.hudi.common.util.SerializationUtils$KryoInstantiator$KryoBase$$Lambda$265/1458915834.newInstance(Unknown Source)
    at com.esotericsoftware.kryo.Kryo.newInstance(Kryo.java:1139)
    at com.esotericsoftware.kryo.serializers.FieldSerializer.create(FieldSerializer.java:562)
    at com.esotericsoftware.kryo.serializers.FieldSerializer.read(FieldSerializer.java:538)
    at com.esotericsoftware.kryo.Kryo.readObject(Kryo.java:731)
    at com.esotericsoftware.kryo.serializers.ObjectField.read(ObjectField.java:125)
    at com.esotericsoftware.kryo.serializers.FieldSerializer.read(FieldSerializer.java:543)
    at com.esotericsoftware.kryo.Kryo.readClassAndObject(Kryo.java:813)
    at org.apache.hudi.common.util.SerializationUtils$KryoSerializerInstance.deserialize(SerializationUtils.java:112)
    at org.apache.hudi.common.util.SerializationUtils.deserialize(SerializationUtils.java:86)
    at org.apache.hudi.common.util.collection.DiskBasedMap.get(DiskBasedMap.java:217)
    at org.apache.hudi.common.util.collection.DiskBasedMap.get(DiskBasedMap.java:211)
    at org.apache.hudi.common.util.collection.DiskBasedMap.get(DiskBasedMap.java:207)
    at org.apache.hudi.common.util.collection.ExternalSpillableMap.get(ExternalSpillableMap.java:173)
    at org.apache.hudi.common.util.collection.ExternalSpillableMap.get(ExternalSpillableMap.java:55)
    at org.apache.hudi.io.HoodieMergeHandle.write(HoodieMergeHandle.java:280)
    at org.apache.hudi.table.HoodieCopyOnWriteTable$UpdateHandler.consumeOneRecord(HoodieCopyOnWriteTable.java:434)
    at org.apache.hudi.table.HoodieCopyOnWriteTable$UpdateHandler.consumeOneRecord(HoodieCopyOnWriteTable.java:424)
    at org.apache.hudi.common.util.queue.BoundedInMemoryQueueConsumer.consume(BoundedInMemoryQueueConsumer.java:37)
    at org.apache.hudi.common.util.queue.BoundedInMemoryExecutor.lambda$null$2(BoundedInMemoryExecutor.java:121)
    at org.apache.hudi.common.util.queue.BoundedInMemoryExecutor$$Lambda$76/1412692041.call(Unknown Source)
    at java.util.concurrent.FutureTask.run(FutureTask.java:266)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)
    at java.lang.Thread.run(Thread.java:745)
```

Average time of `DiskBasedMap#get`

```
$ monitor *DiskBasedMap get -c 12
Affect(class-cnt:1 , method-cnt:4) cost in 221 ms.
 timestamp            class         method  total  success  fail  avg-rt(ms)  fail-rate
----------------------------------------------------------------------------------------
 2020-02-20 18:13:36  DiskBasedMap  get     5814   5814     0     6.12        0.00%

 timestamp            class         method  total  success  fail  avg-rt(ms)  fail-rate
----------------------------------------------------------------------------------------
 2020-02-20 18:13:48  DiskBasedMap  get     9117   9117     0     3.89        0.00%

 timestamp            class         method  total  success  fail  avg-rt(ms)  fail-rate
----------------------------------------------------------------------------------------
 2020-02-20 18:14:16  DiskBasedMap  get     8490   8490     0     4.10        0.00%
```

So, when writing data to the parquet file, the lookups against the disk-based map need about 3849125 (entries) * 4 ms (avg) = 15396 s, which is roughly 4.3 hours. It takes a long time.

In addition, after adding `option("hoodie.memory.merge.max.size", "2004857600000")`, the upsert needs only about 2.7 min.
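
For anyone who wants to redo the estimate with their own numbers, here is a minimal sketch of the arithmetic. It is not part of Hudi: the helper name `estimate_lookup_seconds` is hypothetical, and the figures are taken from the log and monitor output quoted in this comment. It also assumes the lookups run sequentially at a constant average latency, which is only a rough approximation.

```python
# Rough estimate of the time spent in DiskBasedMap#get during the merge.
# Assumption: lookups are sequential and take a constant average time.

def estimate_lookup_seconds(entries: int, avg_ms_per_get: float) -> float:
    """Return the total lookup time in seconds (hypothetical helper)."""
    return entries * avg_ms_per_get / 1000.0


memory_entries = 150_875    # "Number of entries in MemoryBasedMap"
disk_entries = 3_849_125    # "Number of entries in DiskBasedMap"

# Share of the 4,000,000 upserted records that spilled to disk.
spilled_fraction = disk_entries / (memory_entries + disk_entries)

# Use the rounded 4 ms average from the monitor samples (6.12, 3.89, 4.10).
total_seconds = estimate_lookup_seconds(disk_entries, 4.0)

print(f"{spilled_fraction:.1%} of entries spilled to disk")
print(f"about {total_seconds / 3600:.1f} hours of disk lookups")
```

Since almost all entries end up in the disk-based map, the per-lookup cost dominates the run, which fits with the large speedup seen once `hoodie.memory.merge.max.size` is raised enough to keep the map in memory.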

----------------------------------------------------------------
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
[email protected]

With regards,
Apache Git Services