Aload opened a new issue, #5346:

URL: https://github.com/apache/hudi/issues/5346

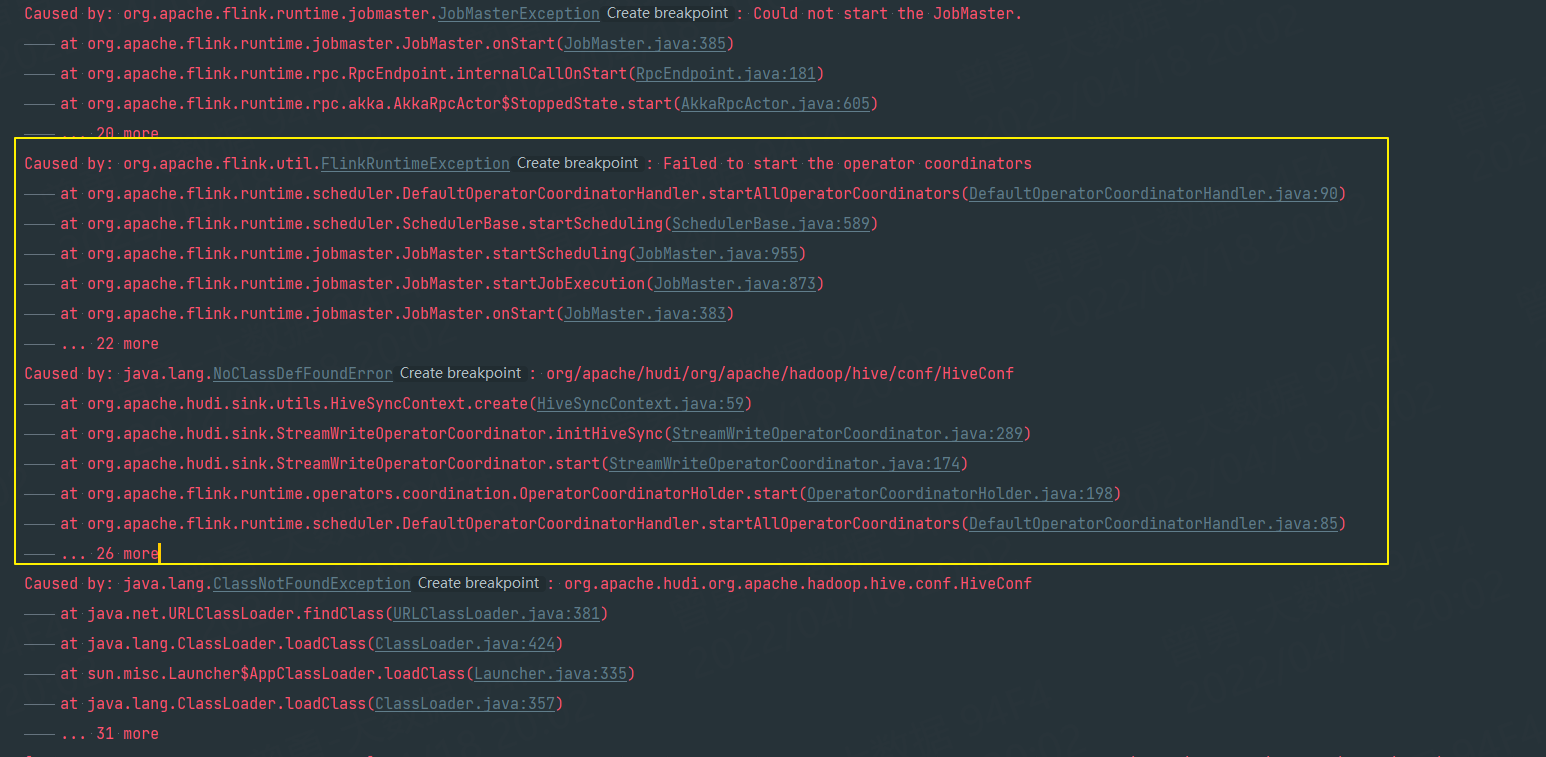

Hi, when I use Flink to consume Kafka and write to Hudi with Hive synchronization configured, the same problem occurs during startup.

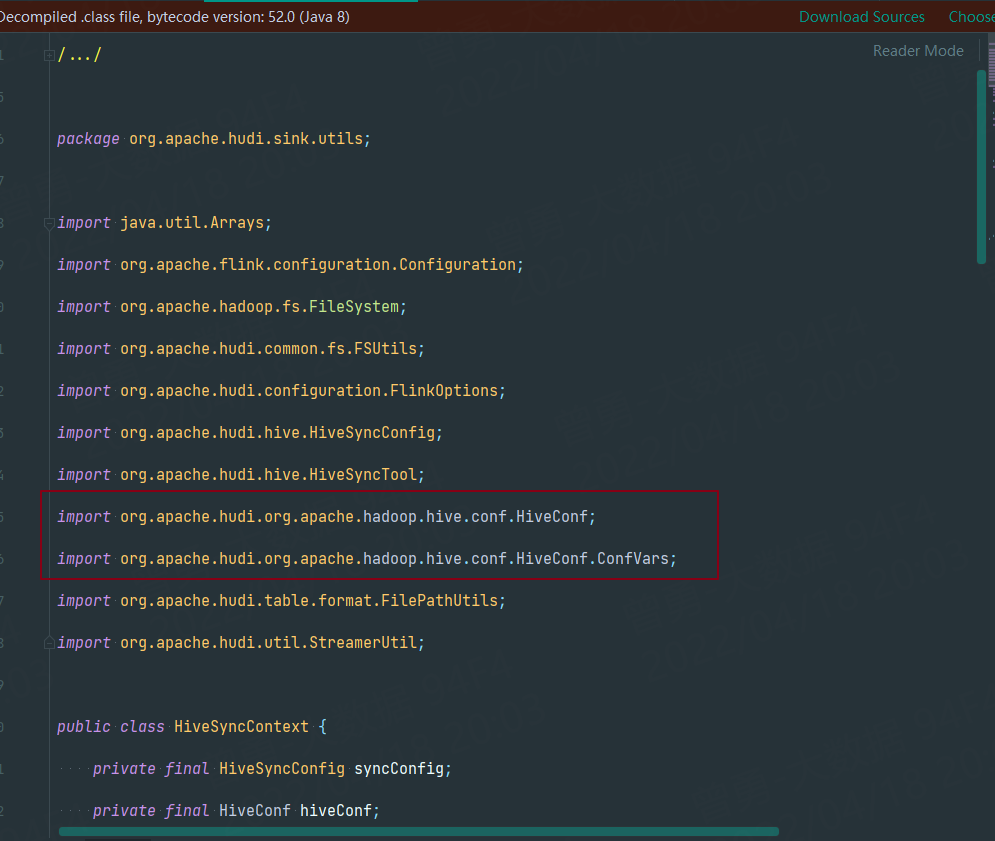

When I looked through the `HiveSyncContext.java` source code, I noticed that the packages it imports looked very strange. Everything works normally when I don't enable Hive sync.
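If the concern is which jar the Hive-related classes used during Hive sync are actually loaded from (for example, duplicate or relocated copies on the job's classpath), a small JVM check can print the origin of a class. This is a hypothetical diagnostic helper, not part of Hudi or Flink; the class names in `main` are only examples (`HiveConf` from hive-common, `HiveSyncTool` from hudi-hive-sync) and should be replaced with the ones that look suspicious.

```scala
// Hypothetical helper: reports where the JVM would load a class from.
object ClassOrigin {
  sealed trait Origin
  case object NotFound extends Origin                      // class could not be found or linked
  case object Bootstrap extends Origin                     // loaded by the bootstrap loader (no code source)
  final case class Found(location: String) extends Origin  // URL of the jar or directory

  def locate(className: String): Origin =
    try {
      // initialize = false: load the class without running its static initializers
      val cls = Class.forName(className, false, getClass.getClassLoader)
      val location = Option(cls.getProtectionDomain.getCodeSource).flatMap(cs => Option(cs.getLocation))
      location match {
        case Some(url) => Found(url.toString)
        case None      => Bootstrap
      }
    } catch {
      case _: ClassNotFoundException | _: LinkageError => NotFound
    }

  def main(args: Array[String]): Unit = {
    // Example class names only; pass other fully qualified names as arguments instead.
    val names: Seq[String] =
      if (args.nonEmpty) args.toSeq
      else Seq("org.apache.hadoop.hive.conf.HiveConf", "org.apache.hudi.hive.HiveSyncTool")
    names.foreach(n => println(s"$n -> ${locate(n)}"))
  }
}
```

Run it with the same classpath as the Flink job; two different locations for related classes would point to a classpath conflict rather than a configuration problem.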

**Versions: Hudi 0.10.1, Flink 1.13.1, Scala 2.12.10**

Example configuration:

```scala
import org.apache.flink.table.types.logical.RowType
import org.apache.hudi.common.model.{HoodieTableType, WriteOperationType}
import org.apache.hudi.configuration.FlinkOptions

// Inside the job class. SinkSql is a project-specific DDL builder (not part of Hudi); its code is not shown.
private val sinkSql: String = SinkSql.apply(sinkTableName, dataType.getLogicalType.asInstanceOf[RowType])
  .option(FlinkOptions.PATH, hoodiePath.concat(sinkDbName).concat("/").concat(sinkTableName).concat("/"))
  .option(FlinkOptions.PRECOMBINE_FIELD, "receiveTime")
  .option(FlinkOptions.RECORD_KEY_FIELD, "tenantId,pointId,sn,collectTime")
  .option(FlinkOptions.READ_AS_STREAMING, true)
  .option(FlinkOptions.READ_START_COMMIT, "earliest")
  .option(FlinkOptions.OPERATION, WriteOperationType.INSERT)
  .option(FlinkOptions.WRITE_BULK_INSERT_SHUFFLE_BY_PARTITION, true)
  .option(FlinkOptions.TABLE_TYPE, HoodieTableType.COPY_ON_WRITE)
  .option(FlinkOptions.BUCKET_ASSIGN_TASKS, 10)
  .option(FlinkOptions.COMPACTION_ASYNC_ENABLED, true)
  .option(FlinkOptions.COMPACTION_DELTA_COMMITS, 1)
  .option(FlinkOptions.READ_STREAMING_CHECK_INTERVAL, 10)
  .option(FlinkOptions.INSERT_CLUSTER, true)
  .option(FlinkOptions.RETRY_TIMES, 5)
  .option(FlinkOptions.WRITE_TASKS, 10)
  // Hive sync settings; the job starts normally when these are left out
  .option(FlinkOptions.HIVE_SYNC_ENABLED, true)
  .option(FlinkOptions.HIVE_SYNC_AUTO_CREATE_DB, true)
  .option(FlinkOptions.HIVE_SYNC_DB, "ods")
  .option(FlinkOptions.HIVE_SYNC_TABLE, sinkTableName)
  .option(FlinkOptions.HIVE_SYNC_MODE, "hms")
  .option(FlinkOptions.HIVE_SYNC_METASTORE_URIS, "thrift://dev32:9083")
  .option(FlinkOptions.HIVE_SYNC_JDBC_URL, "jdbc:hive2://dev32:10000")
  .option(FlinkOptions.HIVE_STYLE_PARTITIONING, true)
  .option(FlinkOptions.HIVE_SYNC_SUPPORT_TIMESTAMP, true)
  .partitionField("tenantId", "fmy", "fmm", "fmd")
  .option(FlinkOptions.INDEX_GLOBAL_ENABLED, true)
  .end
```
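To confirm that the startup failure depends only on the Hive sync settings, one approach is to generate the same table definition with and without the `hive_sync.*` options and run each. The sketch below is hypothetical: `HudiDdl` is a stand-in for the reporter's `SinkSql` helper (whose code is not shown), `build` plays the role of its `.end`, and the table name, columns and path are placeholders. The string keys are assumed to be the keys behind the `FlinkOptions` constants in the configuration above (for example `hive_sync.enable` for `HIVE_SYNC_ENABLED`).

```scala
// Hypothetical stand-in for the SinkSql helper: renders a Flink SQL CREATE TABLE for the Hudi connector.
final case class HudiDdl(
    table: String,
    columns: Seq[(String, String)],              // (column name, Flink SQL type)
    partitionFields: Seq[String] = Nil,
    options: Map[String, String] = Map.empty
) {
  def option(key: String, value: Any): HudiDdl =
    copy(options = options + (key -> value.toString))

  def partitionField(fields: String*): HudiDdl = copy(partitionFields = fields)

  def build: String = {
    val cols = columns.map { case (n, t) => s"  `$n` $t" }.mkString(",\n")
    val partitioned =
      if (partitionFields.isEmpty) ""
      else partitionFields.map(f => s"`$f`").mkString("PARTITIONED BY (", ", ", ")\n")
    // 'connector' = 'hudi' comes first; the remaining options are emitted in key order.
    val opts = (("connector" -> "hudi") +: options.toSeq.sortBy(_._1))
      .map { case (k, v) => s"  '$k' = '$v'" }
      .mkString(",\n")
    s"CREATE TABLE `$table` (\n$cols\n)\n${partitioned}WITH (\n$opts\n)"
  }
}

object HiveSyncToggle {
  // Same table with or without the Hive sync options; all names and paths are placeholders.
  def ddl(hiveSync: Boolean): String = {
    val base = HudiDdl("sink_table", Seq("tenantId" -> "STRING", "receiveTime" -> "TIMESTAMP(3)"))
      .option("path", "hdfs:///tmp/hudi/ods/sink_table/")
      .option("table.type", "COPY_ON_WRITE")
      .partitionField("tenantId")
    if (!hiveSync) base.build
    else base
      .option("hive_sync.enable", true)
      .option("hive_sync.mode", "hms")
      .option("hive_sync.metastore.uris", "thrift://dev32:9083")
      .option("hive_sync.db", "ods")
      .option("hive_sync.table", "sink_table")
      .build
  }

  def main(args: Array[String]): Unit = {
    println(ddl(hiveSync = false))
    println(ddl(hiveSync = true))
  }
}
```

Apart from the `hive_sync.*` lines the two statements are identical, so if only the second one fails at startup, the problem is isolated to the Hive sync path.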

**Environment Description**

* Hudi version: 0.10.1
* Flink version: 1.13.1
* Hive version: 2.3.7
* Hadoop version: 2.7.3
* Storage (HDFS/S3/GCS...): HDFS
* Running on Docker? (yes/no): no
