eric9204 opened a new issue, #5634: URL: https://github.com/apache/hudi/issues/5634

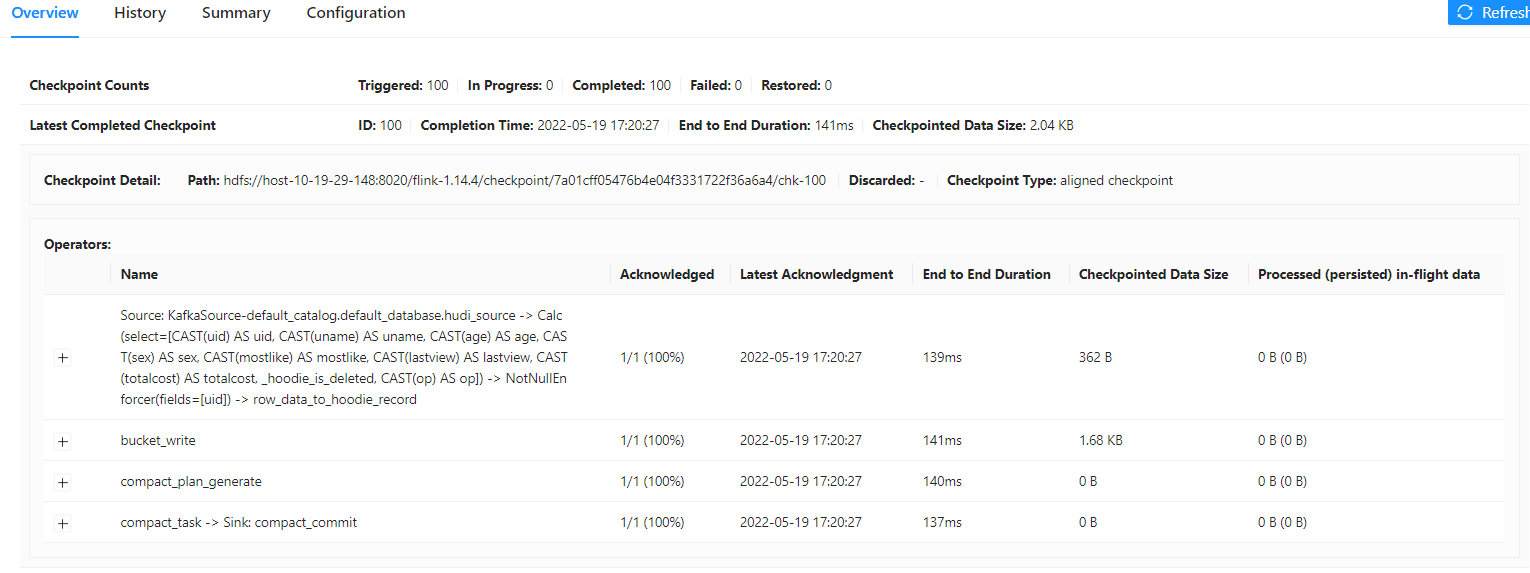

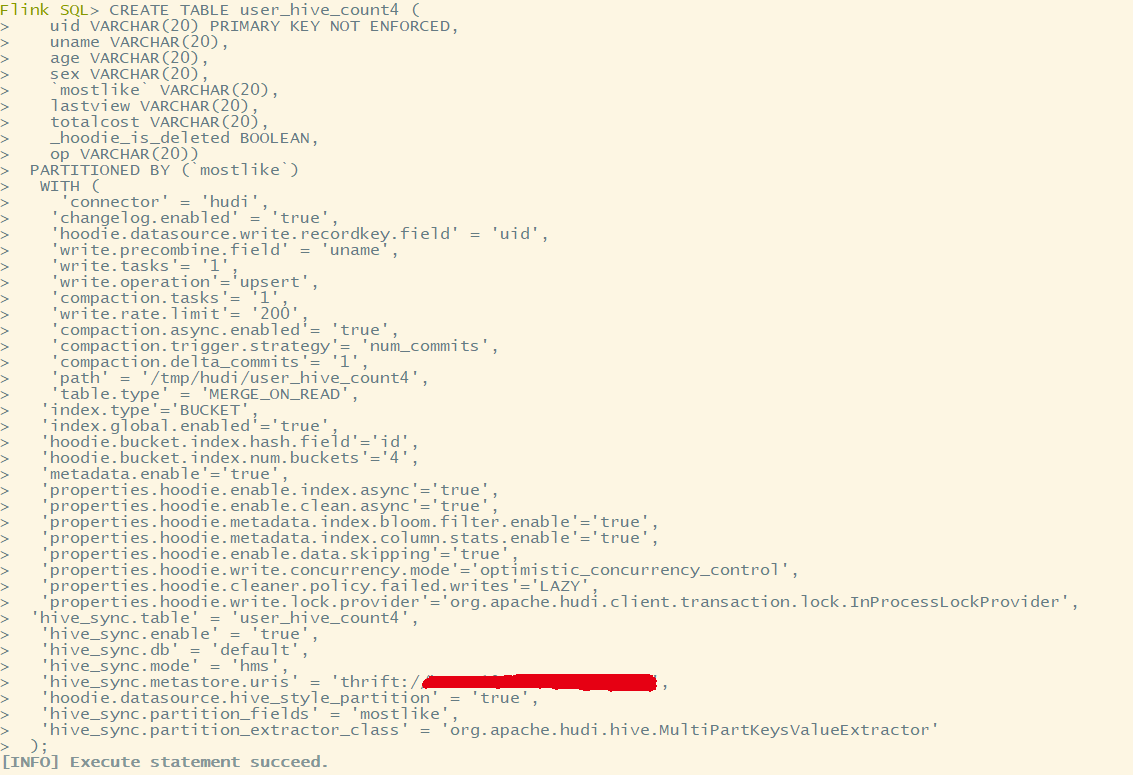

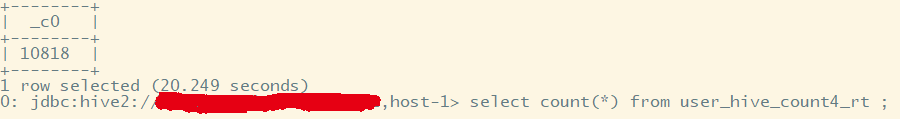

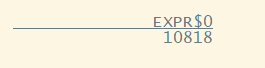

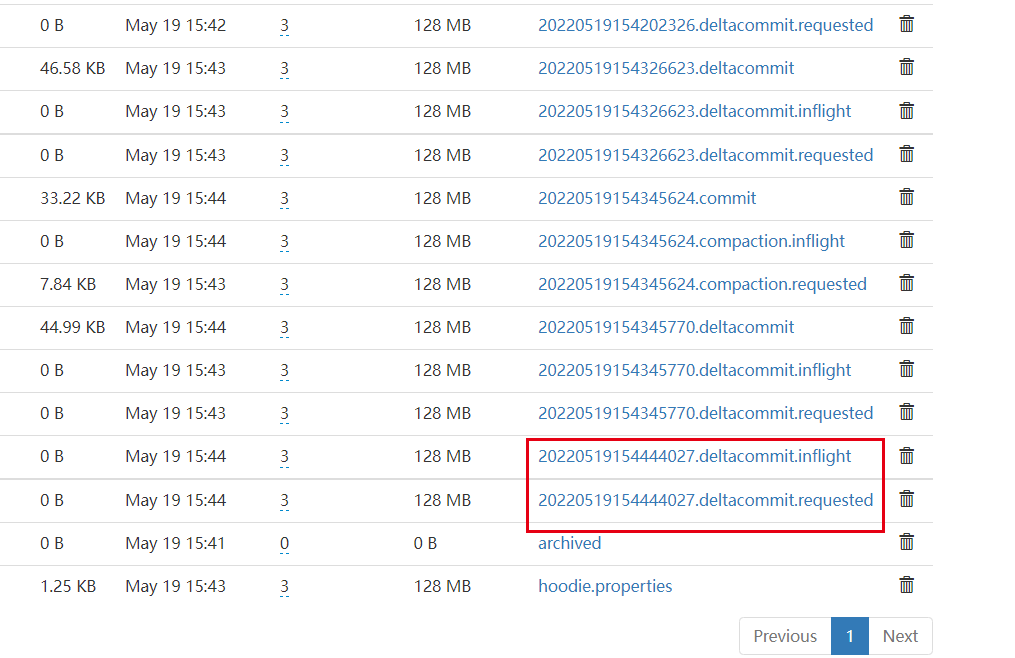

**_Tips before filing an issue_**

- Have you gone through our [FAQs](https://hudi.apache.org/learn/faq/)? no
- Join the mailing list to engage in conversations and get faster support at [email protected].
- If you have triaged this as a bug, then file an [issue](https://issues.apache.org/jira/projects/HUDI/issues) directly.

**Describe the problem you faced**

Problem 1: A Flink SQL job pulls data from Kafka and writes it to Hudi. The Kafka topic contains 11,000 records: 10,000 are inserts and the other 1,000 are updates. The global index config is enabled and the write operation is upsert. A `select count` on the Hudi target table returns 10,818 records, more than the 10,000 that would be expected if every update replaced an existing record. It seems the upsert operation did not work.

Problem 2: The last deltacommit has still not been committed.

**Environment Description**

* Hudi version : 0.11.0
* Spark version : --
* Hive version : 3.1.2
* Hadoop version : 3.3.0
* Storage (HDFS/S3/GCS..) : HDFS
* Running on Docker? (yes/no) : no

**Additional context**

Flink 1.14.4 and Flink 1.13.5
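To make the expected count in problem 1 concrete, here is a minimal Python sketch of upsert semantics. It is not Hudi code and not a diagnosis of this issue: the record schema, the partition names `p0` and `p1`, and the `upsert_count` helper are all hypothetical. It only shows that 10,000 inserts plus 1,000 updates on existing keys should leave 10,000 rows when the record key alone identifies a row. It also shows one way a larger count could arise, under the assumption that updates arrive in a different partition and are matched per partition.

```python
from typing import Dict, Iterable, Tuple

# (record_key, partition, value): a hypothetical schema, for illustration only.
Record = Tuple[str, str, int]


def upsert_count(records: Iterable[Record], global_index: bool) -> int:
    """Return the number of rows left after applying simplified upsert semantics.

    With global_index=True the record key alone identifies a row. Otherwise
    the (partition, key) pair does, so an update that arrives in a different
    partition is stored as a new row instead of replacing the old one.
    """
    table: Dict[object, Record] = {}
    for key, partition, value in records:
        index_key = key if global_index else (partition, key)
        table[index_key] = (key, partition, value)  # insert or overwrite
    return len(table)


# 10,000 inserts with distinct keys, all in partition "p0" (made-up data).
inserts = [(f"id-{i}", "p0", i) for i in range(10_000)]
# 1,000 updates that reuse existing keys; assumed to arrive in partition "p1".
updates = [(f"id-{i}", "p1", -i) for i in range(1_000)]

global_count = upsert_count(inserts + updates, global_index=True)
partitioned_count = upsert_count(inserts + updates, global_index=False)
print(global_count, partitioned_count)
```

In this model the key-only lookup leaves 10,000 rows, while the per-partition lookup leaves 11,000. The reported 10,818 lies between the two, which would be consistent with only some updates failing to match their original record, but the sketch cannot tell whether that is what happened here.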