eric9204 opened a new issue, #5671: URL: https://github.com/apache/hudi/issues/5671

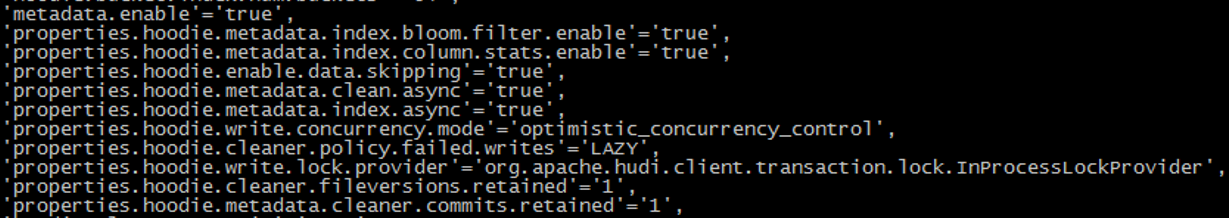

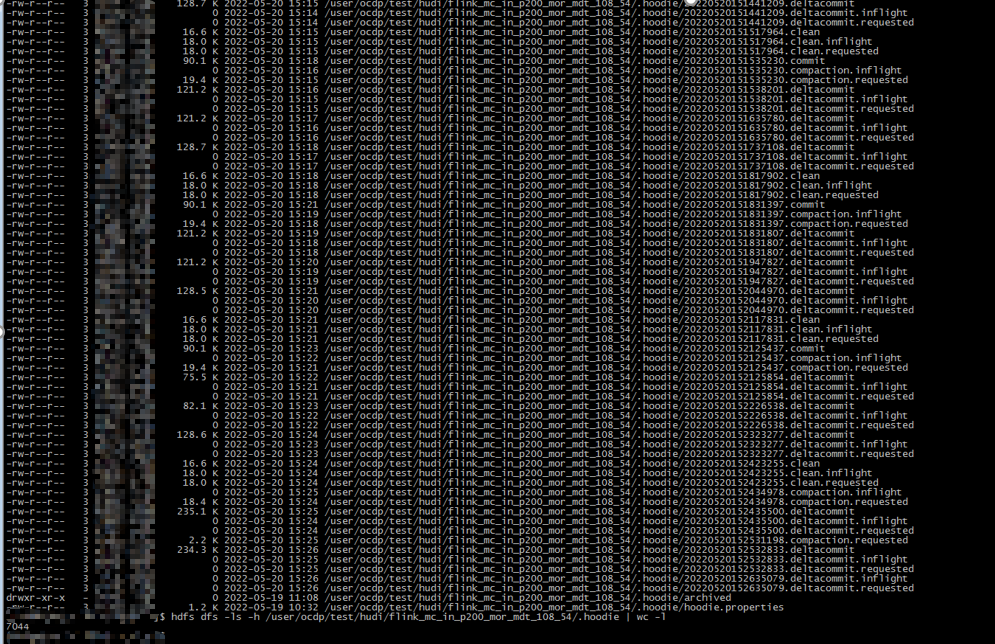

**Describe the problem you faced**

A Flink SQL job consumes data from Kafka and writes to Hudi, with the metadata table enabled in the SQL statement. Archiving is never triggered, so the `.hoodie` directory has accumulated 7044 files.

**To Reproduce**

Steps to reproduce the behavior:

1. Set the following parameters:

**Expected behavior**

The files in the `.hoodie` directory should be archived.

**Environment Description**

* Hudi version : hudi-0.11.0
* Spark version : --
* Hive version : --
* Hadoop version : 3.1.0
* Storage (HDFS/S3/GCS..) : hdfs
* Running on Docker? (yes/no) : no

**Additional context**

flink-1.13.5

**Stacktrace**

```Add the stacktrace of the error.```

**There are 7044 files in the `.hoodie` directory**
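To see which kinds of timeline files make up the 7044 entries, the `.hoodie` listing can be tallied by action and state. The following is a minimal diagnostic sketch, not part of Hudi. It assumes timeline files follow Hudi's `<instant time>.<action>[.requested|.inflight]` naming (for example `20220524103000123.deltacommit.requested`). The script name `summarize_timeline.py` and the table path in the usage line below are hypothetical.

```python
import re
import sys
from collections import Counter
from typing import Iterable, Tuple

# Timeline files are named "<instant time>.<action>[.<state>]", where the
# instant time is all digits, e.g. "20220524103000123.deltacommit.requested".
_INSTANT_FILE = re.compile(r"^(\d+)\.(.+)$")
_STATES = ("requested", "inflight")


def classify(name: str) -> Tuple[str, str]:
    """Return (action, state) for one file name in the .hoodie directory."""
    match = _INSTANT_FILE.match(name)
    if match is None:
        # hoodie.properties, archived, metadata, .aux, .temp and similar
        return ("other", "")
    parts = match.group(2).split(".")
    if parts == ["inflight"]:
        # An inflight COMMIT instant is written as "<instant time>.inflight"
        return ("commit", "inflight")
    if parts[-1] in _STATES:
        return (".".join(parts[:-1]), parts[-1])
    return (".".join(parts), "completed")


def summarize(names: Iterable[str]) -> Counter:
    """Count timeline files by (action, state)."""
    return Counter(classify(n) for n in names)


if __name__ == "__main__":
    # Reads one path per line from stdin and keeps only the base name.
    listing = [line.strip().rsplit("/", 1)[-1] for line in sys.stdin if line.strip()]
    for (action, state), count in summarize(listing).most_common():
        print(f"{count:6d}  {action:<15} {state}")
```

For example: `hdfs dfs -ls -C /path/to/table/.hoodie | python3 summarize_timeline.py`. A large count of completed `deltacommit` or `clean` instants would suggest the active timeline is not being trimmed by archiving, while many `requested` or `inflight` entries would point to unfinished actions instead.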