qianchutao opened a new issue, #5690:

URL: https://github.com/apache/hudi/issues/5690

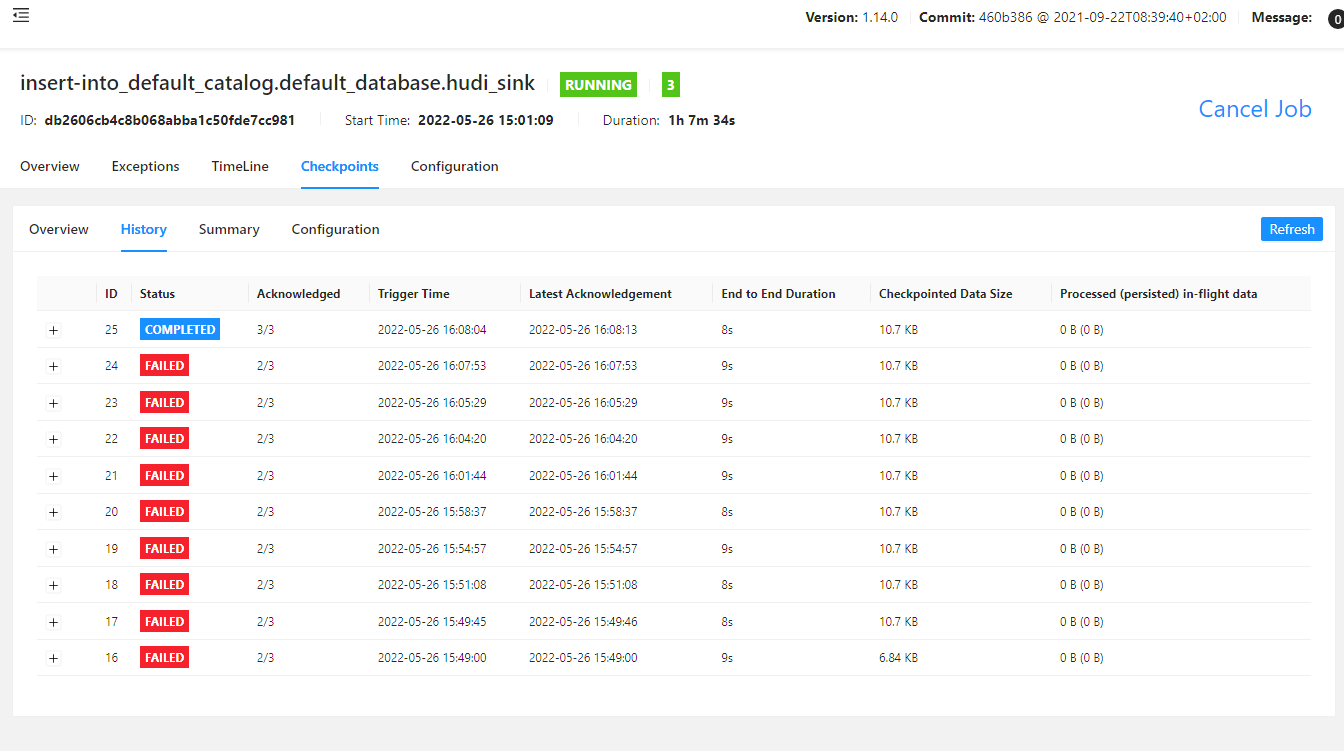

When I used Flink to synchronize data into a Hudi table in COW mode, the Flink job kept failing and restarting, and checkpoints kept failing. The Parquet files had been written to the S3 path, but the table metadata was not generated. My error message is as follows:

Most checkpoints fail. Only after many job restarts is the table metadata synchronized to Glue.

**Environment Description**

* Hudi version : 0.11.0

* Hive version : 3.1.2

* Hadoop version : 3.2.1

* Storage (HDFS/S3/GCS..) : S3

* Running on Docker? (yes/no) : no

**Stacktrace**


2022-05-26 07:02:04,853 INFO org.apache.hudi.common.table.timeline.HoodieActiveTimeline [] - Loaded instants upto : Option{val=[==>20220526070204519__commit__INFLIGHT]}

2022-05-26 07:02:04,854 INFO org.apache.hudi.sink.StreamWriteOperatorCoordinator [] - Executor executes action [initialize instant ] success!

2022-05-26 07:05:05,157 INFO org.apache.flink.runtime.checkpoint.CheckpointCoordinator [] - Triggering checkpoint 1 (type=CHECKPOINT) @ 1653548704936 for job db2606cb4c8b068abba1c50fde7cc981.

2022-05-26 07:05:05,161 INFO org.apache.hudi.sink.StreamWriteOperatorCoordinator [] - Executor executes action [taking checkpoint 1] success!

2022-05-26 07:05:17,905 ERROR org.apache.hudi.sink.StreamWriteOperatorCoordinator [] - Executor executes action [handle write metadata event for instant 20220526070204519] error

java.lang.IllegalStateException: Receive an unexpected event for instant 20220526070207156 from task 0

at org.apache.hudi.common.util.ValidationUtils.checkState(ValidationUtils.java:67) ~[data-warehouse-jar-with-dependencies.jar:?]

at org.apache.hudi.sink.StreamWriteOperatorCoordinator.handleWriteMetaEvent(StreamWriteOperatorCoordinator.java:426) ~[data-warehouse-jar-with-dependencies.jar:?]

at org.apache.hudi.sink.StreamWriteOperatorCoordinator.lambda$handleEventFromOperator$4(StreamWriteOperatorCoordinator.java:287) ~[data-warehouse-jar-with-dependencies.jar:?]

at org.apache.hudi.sink.utils.NonThrownExecutor.lambda$execute$0(NonThrownExecutor.java:93) ~[data-warehouse-jar-with-dependencies.jar:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) [?:1.8.0_332]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) [?:1.8.0_332]

at java.lang.Thread.run(Thread.java:750) [?:1.8.0_332]

2022-05-26 07:05:17,918 INFO org.apache.flink.runtime.jobmaster.JobMaster [] - Trying to recover from a global failure.

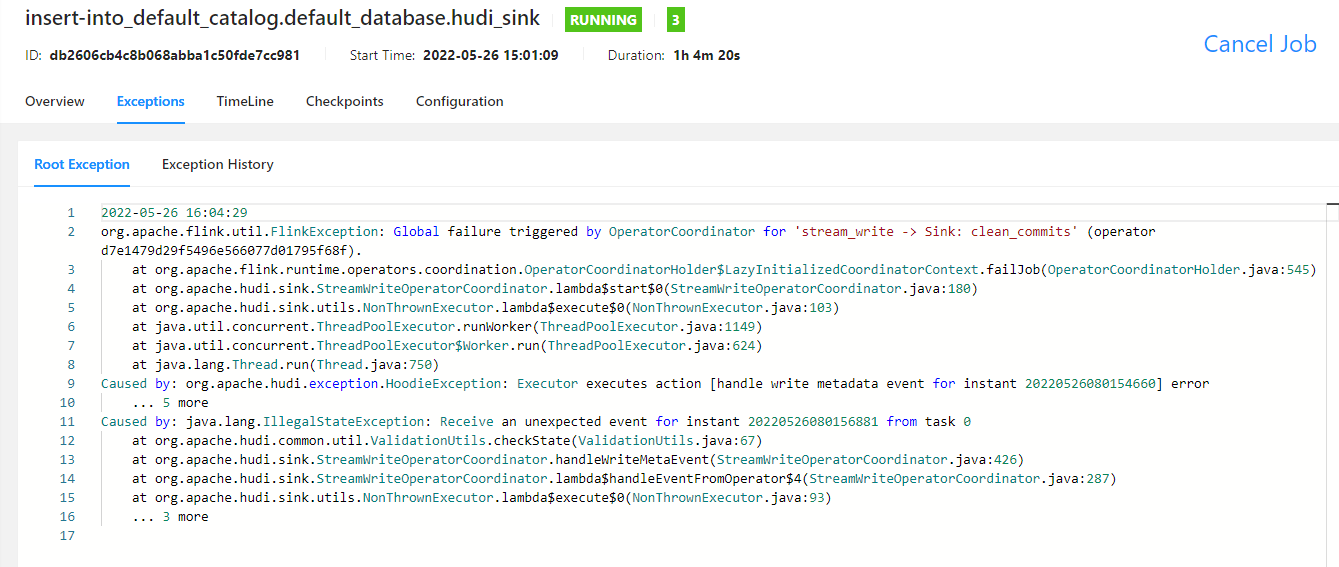

org.apache.flink.util.FlinkException: Global failure triggered by OperatorCoordinator for 'stream_write -> Sink: clean_commits' (operator d7e1479d29f5496e566077d01795f68f).

at org.apache.flink.runtime.operators.coordination.OperatorCoordinatorHolder$LazyInitializedCoordinatorContext.failJob(OperatorCoordinatorHolder.java:545) ~[data-warehouse-jar-with-dependencies.jar:?]

at org.apache.hudi.sink.StreamWriteOperatorCoordinator.lambda$start$0(StreamWriteOperatorCoordinator.java:180) ~[data-warehouse-jar-with-dependencies.jar:?]

at org.apache.hudi.sink.utils.NonThrownExecutor.lambda$execute$0(NonThrownExecutor.java:103) ~[data-warehouse-jar-with-dependencies.jar:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) ~[?:1.8.0_332]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) ~[?:1.8.0_332]

at java.lang.Thread.run(Thread.java:750) ~[?:1.8.0_332]

Caused by: org.apache.hudi.exception.HoodieException: Executor executes action [handle write metadata event for instant 20220526070204519] error

... 5 more

Caused by: java.lang.IllegalStateException: Receive an unexpected event for instant 20220526070207156 from task 0

at org.apache.hudi.common.util.ValidationUtils.checkState(ValidationUtils.java:67) ~[data-warehouse-jar-with-dependencies.jar:?]

at org.apache.hudi.sink.StreamWriteOperatorCoordinator.handleWriteMetaEvent(StreamWriteOperatorCoordinator.java:426) ~[data-warehouse-jar-with-dependencies.jar:?]

at org.apache.hudi.sink.StreamWriteOperatorCoordinator.lambda$handleEventFromOperator$4(StreamWriteOperatorCoordinator.java:287) ~[data-warehouse-jar-with-dependencies.jar:?]

at org.apache.hudi.sink.utils.NonThrownExecutor.lambda$execute$0(NonThrownExecutor.java:93) ~[data-warehouse-jar-with-dependencies.jar:?]

... 3 more

2022-05-26 07:05:17,920 INFO org.apache.flink.runtime.executiongraph.ExecutionGraph [] - Job insert-into_default_catalog.default_database.hudi_sink (db2606cb4c8b068abba1c50fde7cc981) switched from state RUNNING to RESTARTING.

2022-05-26 07:05:17,925 WARN org.apache.hudi.sink.StreamWriteOperatorCoordinator [] - Reset the event for task [0]

2022-05-26 07:05:17,926 INFO org.apache.flink.runtime.executiongraph.ExecutionGraph [] - stream_write -> Sink: clean_commits (1/1) (5b601ab60f621f586bd0f09c2cbe21b7) switched from RUNNING to CANCELING.

2022-05-26 07:05:17,928 INFO org.apache.flink.runtime.executiongraph.ExecutionGraph [] - bucket_assigner (1/1) (e02f84c19cf38cebfd4c771efeb057c3) switched from RUNNING to CANCELING.
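
The two instant times in the exception can be compared directly. The coordinator was handling instant 20220526070204519, while task 0 sent an event for 20220526070207156, which is about 2.6 seconds newer. Below is a minimal sketch of that comparison. It assumes the coordinator rejects any event whose instant is newer than the one it is tracking. That rule is inferred from the error message, not copied from Hudi's source, and `InstantCheckSketch` and `isExpectedEvent` are hypothetical names.

```java
public class InstantCheckSketch {

    /**
     * Hypothetical helper (not a Hudi API): returns true when the event's instant
     * is not newer than the instant the coordinator is currently tracking.
     */
    public static boolean isExpectedEvent(String coordinatorInstant, String eventInstant) {
        // The instants in the log are fixed-width digit strings (yyyyMMddHHmmssSSS),
        // so lexicographic order matches chronological order.
        return coordinatorInstant.compareTo(eventInstant) >= 0;
    }

    public static void main(String[] args) {
        // From "handle write metadata event for instant 20220526070204519"
        String coordinatorInstant = "20220526070204519";
        // From "Receive an unexpected event for instant 20220526070207156 from task 0"
        String eventInstant = "20220526070207156";

        // Prints "false": the event carries a newer instant than the coordinator expects.
        System.out.println(isExpectedEvent(coordinatorInstant, eventInstant));
    }
}
```

If this assumption holds, the write task was working with an instant that the coordinator did not consider current, so a useful next step is to check what created instant 20220526070207156.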
