alexeykudinkin opened a new pull request, #5733: URL: https://github.com/apache/hudi/pull/5733

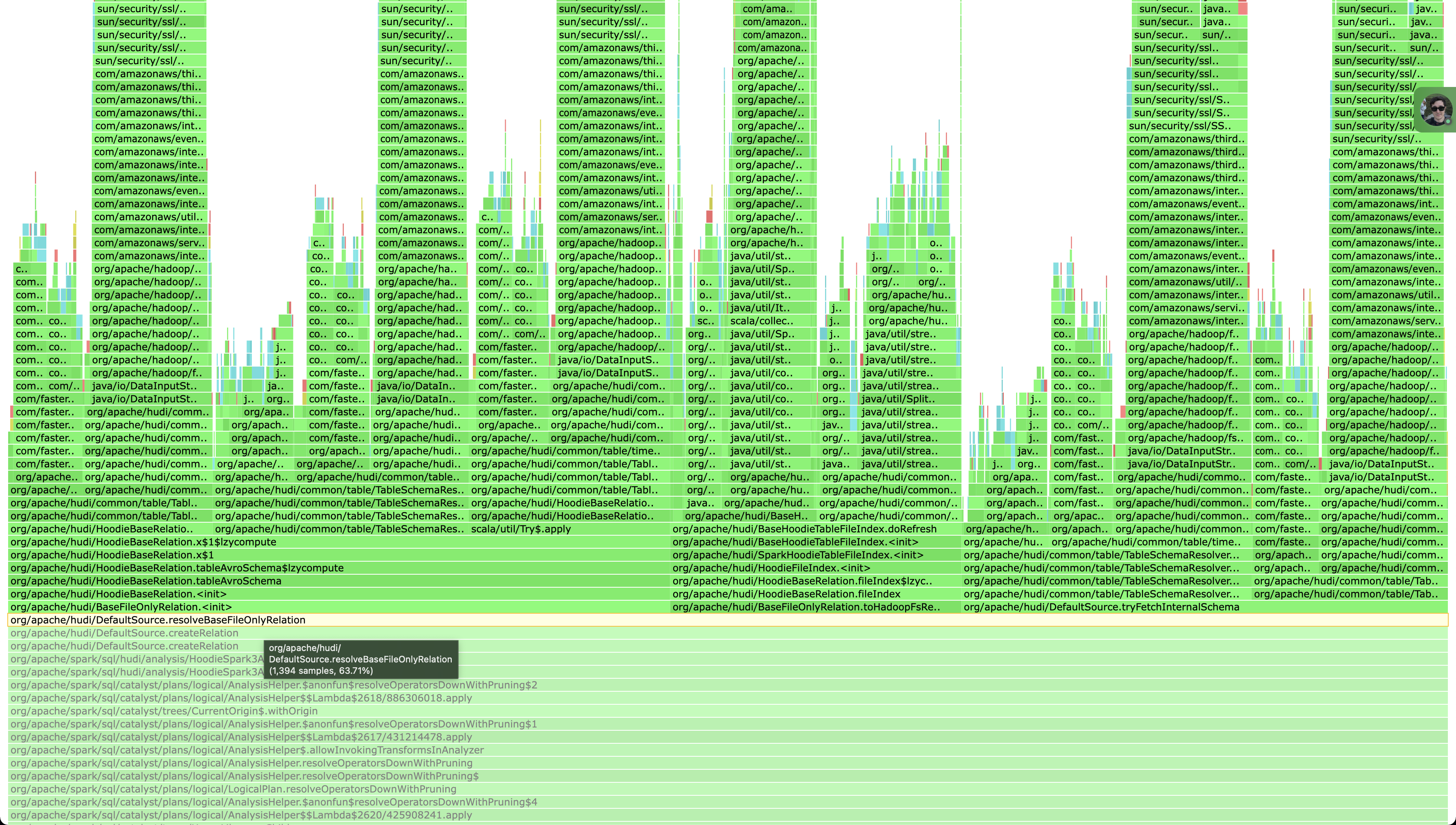

## What is the purpose of the pull request

As outlined in HUDI-4176, we hit a roadblock while testing Hudi on a large dataset (~1 TB) with fairly fat commits, where Hudi's commit metadata can reach hundreds of MB.

As the screenshot shows, in such cases the current implementation of `TableSchemaResolver` performs many repeated, throw-away computations while reading `HoodieCommitMetadata` (during init, when fetching the table's schema, when fetching the table's internal schema, etc.).

Given the size of some of our commit metadata instances, Spark's parsing and resolving phase (when `spark.sql(...)` is involved, but before the returned `Dataset` is dereferenced) starts to dominate the execution time of some of our queries.

## Brief change log

- Rebased onto new APIs to avoid excessive Hadoop `Path` allocations
- Eliminated `hasOperationField` completely to avoid repetitive computations
- Cleaned up duplication in `HoodieActiveTimeline`
- Added caching for common instances of `HoodieCommitMetadata`
- Made `tableStructSchema` lazy

## Verify this pull request

This pull request is already covered by existing tests, such as *(please describe tests)*.

## Committer checklist

- [ ] Has a corresponding JIRA in PR title & commit
- [ ] Commit message is descriptive of the change
- [ ] CI is green
- [ ] Necessary doc changes done or have another open PR
- [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.
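
To illustrate the two techniques named in the change log above (caching parsed `HoodieCommitMetadata` and making `tableStructSchema` lazy), here is a minimal, self-contained Java sketch. It is not the code from this PR and does not use Hudi's classes. `ParsedMetadata`, `MetadataCache`, and `Lazy` are hypothetical names introduced only for illustration, and the "parse" step is a stand-in for deserializing a large commit-metadata payload.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;
import java.util.function.Supplier;

public class CommitMetadataCacheSketch {

    // Hypothetical stand-in for a parsed commit-metadata object (not Hudi's class).
    static final class ParsedMetadata {
        final String instantTime;
        final String schema;

        ParsedMetadata(String instantTime, String schema) {
            this.instantTime = instantTime;
            this.schema = schema;
        }
    }

    // Memoizes the (assumed expensive) parse per instant so repeated lookups reuse one result.
    static final class MetadataCache {
        private final Map<String, ParsedMetadata> cache = new ConcurrentHashMap<>();
        private final Function<String, ParsedMetadata> parser;

        MetadataCache(Function<String, ParsedMetadata> parser) {
            this.parser = parser;
        }

        ParsedMetadata get(String instantTime) {
            // computeIfAbsent invokes the parser only when the key is not cached yet.
            return cache.computeIfAbsent(instantTime, parser);
        }
    }

    // Defers a computation until first use, then remembers the value.
    static final class Lazy<T> implements Supplier<T> {
        private Supplier<T> initializer;
        private T value;
        private boolean initialized;

        Lazy(Supplier<T> initializer) {
            this.initializer = initializer;
        }

        @Override
        public synchronized T get() {
            if (!initialized) {
                value = initializer.get();
                initialized = true;
                initializer = null; // release the closure once it has run
            }
            return value;
        }
    }

    public static void main(String[] args) {
        AtomicInteger parseCount = new AtomicInteger();
        MetadataCache cache = new MetadataCache(instant -> {
            parseCount.incrementAndGet(); // stands in for deserializing a large payload
            return new ParsedMetadata(instant, "schema@" + instant);
        });

        // The schema is not resolved until something actually asks for it.
        Lazy<String> tableSchema = new Lazy<>(() -> cache.get("20220601").schema);
        System.out.println("parses before first use: " + parseCount.get());

        tableSchema.get();
        tableSchema.get();
        cache.get("20220601");
        System.out.println("parses after three lookups: " + parseCount.get());
    }
}
```

In this sketch the parser runs zero times before the lazy value is first requested and exactly once afterwards, no matter how many callers ask for the same instant. That is the kind of saving the change log aims for, under the assumption that parsing dominates the cost.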