yangzhiyue opened a new issue, #5857: URL: https://github.com/apache/hudi/issues/5857

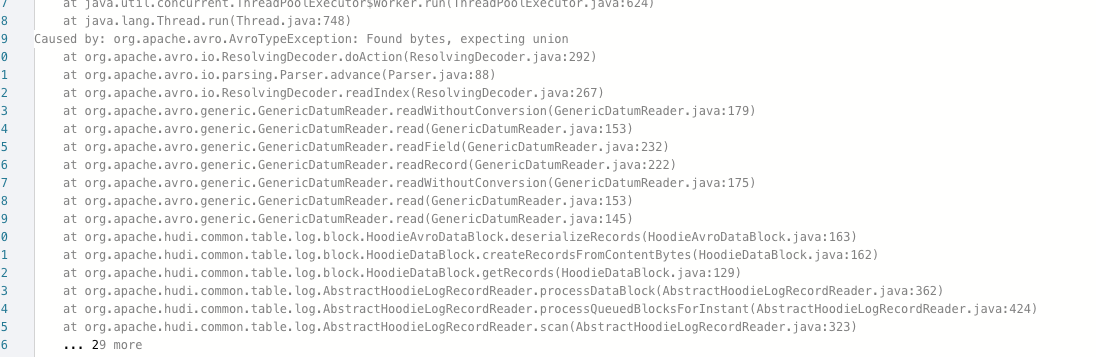

**_Tips before filing an issue_**

- Have you gone through our [FAQs](https://hudi.apache.org/learn/faq/)? Yes.
- Join the mailing list to engage in conversations and get faster support at [email protected]. Yes.
- If you have triaged this as a bug, then file an [issue](https://issues.apache.org/jira/projects/HUDI/issues) directly.

**Describe the problem you faced**

We recently put Hudi into production and ran into problems with one large job. It ingests hundreds of millions of binlog records every day, both inserts and updates, keyed by a composite (joint) primary key that we defined. These binlog records update many historical partitions as well as the latest partition. We use DynamoDB as a distributed lock. Flink performs the incremental updates, and Spark jobs write earlier historical Hive partition data into the same Hudi table to backfill it.

The versions and configuration are:

- Flink 1.13.1
- Hudi 0.10.1
- Spark 3.0.1
- `table.type` = MOR
- `'hoodie.datasource.write.keygenerator.class' = 'org.apache.hudi.keygen.SimpleAvroKeyGenerator'`
- `'hoodie.index.type' = 'SIMPLE'`

Problems encountered:

1. When updating through `insert into`, a single primary key ends up with two records, i.e. the data is duplicated.
2. When updating through `insert into`, queries sometimes hit the problem shown in the image at the top of this issue. It looks as though the data has been corrupted on write.

**To Reproduce**

Steps to reproduce the behavior: see the description above.

**Expected behavior**

A clear and concise description of what you expected to happen.
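The setup described above can be made concrete as Spark datasource writer options, together with a small check for the duplicate-key symptom in problem 1. This is a minimal sketch, not the reporter's actual job: the table type, key generator and index type come from the issue, while the field names, the DynamoDB lock table, partition key and region, and the use of `DynamoDBBasedLockProvider` with optimistic concurrency control are assumptions. The Flink writer would need equivalent settings under its own option names.

```python
from collections import Counter
from typing import Dict, Iterable, Mapping, Tuple


def hudi_write_options(
    table_name: str,
    record_key_field: str,
    partition_field: str,
    precombine_field: str,
    lock_table: str,
    lock_partition_key: str,
    region: str,
) -> Dict[str, str]:
    """Build Spark datasource options approximating the setup in the issue.

    Table type, key generator and index type are taken from the issue;
    every argument value and the lock settings are assumptions.
    """
    return {
        "hoodie.table.name": table_name,
        # Settings reported in the issue.
        "hoodie.datasource.write.table.type": "MERGE_ON_READ",
        "hoodie.datasource.write.keygenerator.class":
            "org.apache.hudi.keygen.SimpleAvroKeyGenerator",
        "hoodie.index.type": "SIMPLE",
        # Hypothetical column names; substitute the table's real columns.
        "hoodie.datasource.write.recordkey.field": record_key_field,
        "hoodie.datasource.write.partitionpath.field": partition_field,
        "hoodie.datasource.write.precombine.field": precombine_field,
        # Assumed multi-writer setup: optimistic concurrency control with a
        # DynamoDB-based lock shared by the Flink and Spark writers.
        "hoodie.write.concurrency.mode": "optimistic_concurrency_control",
        "hoodie.cleaner.policy.failed.writes": "LAZY",
        "hoodie.write.lock.provider":
            "org.apache.hudi.aws.transaction.lock.DynamoDBBasedLockProvider",
        "hoodie.write.lock.dynamodb.table": lock_table,
        "hoodie.write.lock.dynamodb.partition_key": lock_partition_key,
        "hoodie.write.lock.dynamodb.region": region,
    }


def duplicate_keys(rows: Iterable[Mapping[str, str]]) -> Dict[Tuple[str, str], int]:
    """Return (partition path, record key) pairs seen more than once.

    Uses Hudi's _hoodie_partition_path and _hoodie_record_key meta columns,
    so duplicates are counted per partition, matching a non-global index.
    """
    counts = Counter(
        (row["_hoodie_partition_path"], row["_hoodie_record_key"]) for row in rows
    )
    return {key: n for key, n in counts.items() if n > 1}
```

With PySpark, the options could be passed as `df.write.format("hudi").options(**opts).mode("append").save(path)`. On a table of this size the duplicate check is better done in Spark itself, for example by grouping the loaded table on `_hoodie_partition_path` and `_hoodie_record_key` and filtering for `count > 1`, than by collecting rows to the driver.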
**Environment Description**

* Hudi version: 0.10.1
* Spark version: 3.0.1
* Hive version:
* Hadoop version: 3.1.2
* Storage (HDFS/S3/GCS..): S3
* Flink: 1.13.1
* Running on Docker? (yes/no): no

**Additional context**

Add any other context about the problem here.

**Stacktrace**

```Add the stacktrace of the error.```

--
This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: [email protected] For queries about this service, please contact Infrastructure at: [email protected]