ccchenhe commented on issue #6034:

URL: https://github.com/apache/hudi/issues/6034#issuecomment-1174748167

> > You can set up partitions. Can you show some characteristics of the duplicated data? That would help to dig into the reason.

> > ok

>

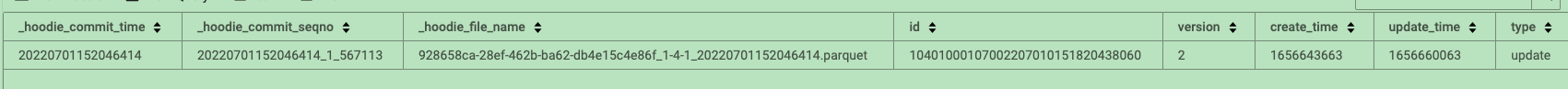

> Now the same id has 2 records: one is an insert, the other is an update.

>

> ```json
> // insert
> {"database":"database_00000001","table":"table_00000001","type":"insert","ts":1656643663,"maxwell_ts":1656643663889000,"xid":8498,"xoffset":1,"primary_key":[10401000107002207010151820438060],"primary_key_columns":["id"],"data":{"id":"10401000107002207010151820438060","version":1,"create_time":1656643663,"update_time":1656643663},"old":{}}
> // update
> {"database":"database_00000001","table":"table_00000001","type":"update","ts":1656660063,"maxwell_ts":1656660063062000,"xid":7210,"xoffset":1,"primary_key":[10401000107002207010151820438060],"primary_key_columns":["id"],"data":{"id":"10401000107002207010151820438060","version":2,"create_time":1656643663,"update_time":1656660063},"old":{"version":1,"update_time":1656643663}}
> ```

>

> An application using Flink bloom state consumed these 2 records from Kafka, and we got:

>

> An application using Flink bucket consumed these 2 records from Kafka, and we got:
>
> <img alt="image" width="1792" src="https://user-images.githubusercontent.com/20533543/177280462-66a9e285-73ae-484c-ac91-c80acc61a3ac.png">

I'm sure that all of the records were saved to Kafka and consumed, because each binlog is also double-written to HDFS through another channel.
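
For reference, the two binlog events quoted above carry the same `id`, so a keyed upsert would be expected to leave a single row (the `version` 2 one). The following is only a minimal Python sketch of that expected outcome, not Hudi's index or write path: the helper `upsert_latest` is hypothetical, and using `update_time` as the ordering field is an assumption, since the comment does not say which field the table uses for ordering. The events are trimmed to the fields the sketch reads.

```python
import json

# Trimmed copies of the two Maxwell events from the comment
# (only the fields this sketch reads are kept).
events = [
    '{"type":"insert","ts":1656643663,"primary_key_columns":["id"],'
    '"data":{"id":"10401000107002207010151820438060","version":1,'
    '"create_time":1656643663,"update_time":1656643663}}',
    '{"type":"update","ts":1656660063,"primary_key_columns":["id"],'
    '"data":{"id":"10401000107002207010151820438060","version":2,'
    '"create_time":1656643663,"update_time":1656660063}}',
]


def upsert_latest(raw_events, ordering_field="update_time"):
    """Hypothetical helper: keep one row per primary key.

    When two events share a key, the row with the larger (or equal)
    ordering_field value wins. The ordering field is an assumption.
    """
    table = {}
    for raw in raw_events:
        event = json.loads(raw)
        row = event["data"]
        # Build the key from the columns Maxwell lists as the primary key.
        key = tuple(row[col] for col in event["primary_key_columns"])
        current = table.get(key)
        if current is None or row[ordering_field] >= current[ordering_field]:
            table[key] = row
    return table


result = upsert_latest(events)
for key, row in result.items():
    print(key, row)
```

If a query on the real table returns two rows for this `id`, the two events were not treated as the same key somewhere in the write path, which is the behavior being reported here.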
