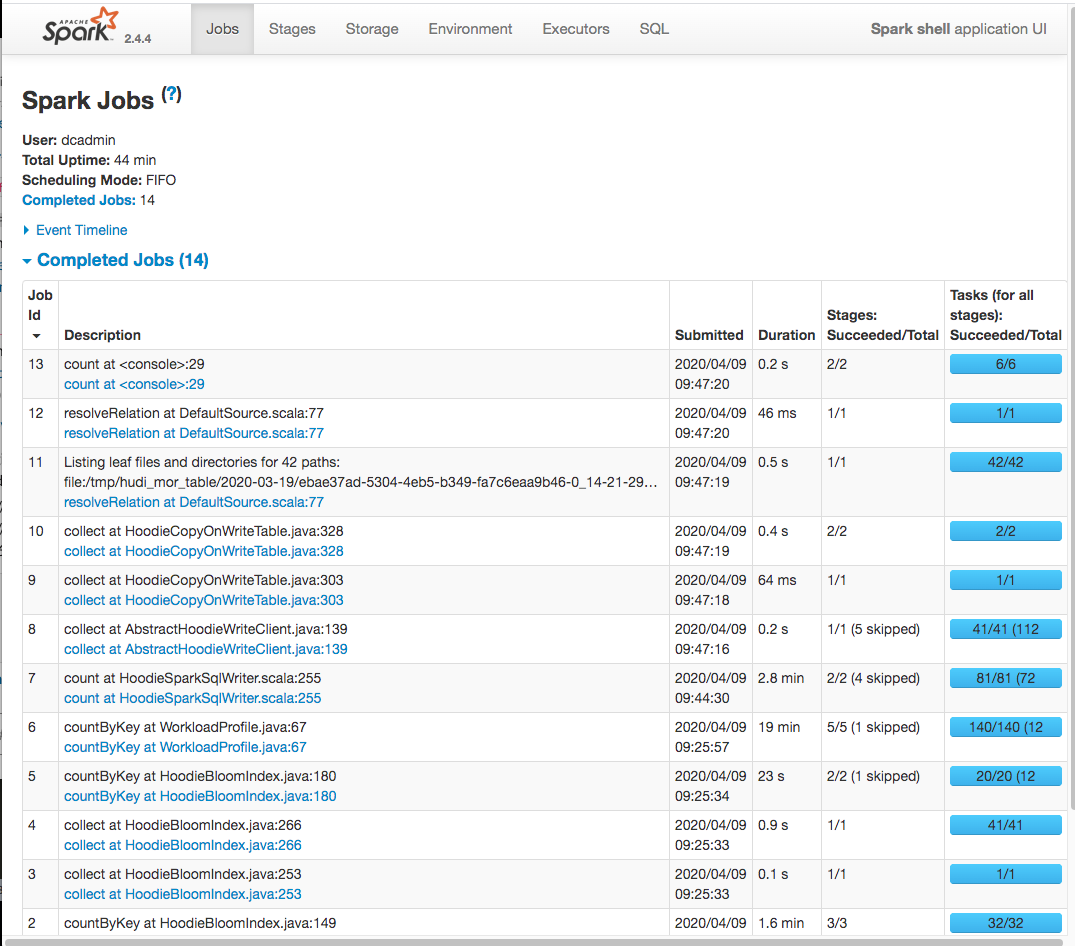

lamber-ken commented on issue #1491: [SUPPORT] OutOfMemoryError during upsert 53M records URL: https://github.com/apache/incubator-hudi/issues/1491#issuecomment-611288468 Hi @tverdokhlebd, the whole upsert cost about 30min ``` dcadmin-imac:hudi-debug dcadmin$ export SPARK_HOME=/work/BigData/install/spark/spark-2.4.4-bin-hadoop2.7 dcadmin-imac:hudi-debug dcadmin$ ${SPARK_HOME}/bin/spark-shell \ > --driver-memory 6G \ > --packages org.apache.hudi:hudi-spark-bundle_2.11:0.5.1-incubating,org.apache.spark:spark-avro_2.11:2.4.4 \ > --conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer' Ivy Default Cache set to: /Users/dcadmin/.ivy2/cache The jars for the packages stored in: /Users/dcadmin/.ivy2/jars :: loading settings :: url = jar:file:/work/BigData/install/spark/spark-2.4.4-bin-hadoop2.7/jars/ivy-2.4.0.jar!/org/apache/ivy/core/settings/ivysettings.xml org.apache.hudi#hudi-spark-bundle_2.11 added as a dependency org.apache.spark#spark-avro_2.11 added as a dependency :: resolving dependencies :: org.apache.spark#spark-submit-parent-204b7ab2-3e3f-4853-9220-5e038fc99fc2;1.0 confs: [default] found org.apache.hudi#hudi-spark-bundle_2.11;0.5.1-incubating in central found org.apache.spark#spark-avro_2.11;2.4.4 in central found org.spark-project.spark#unused;1.0.0 in spark-list :: resolution report :: resolve 258ms :: artifacts dl 5ms :: modules in use: org.apache.hudi#hudi-spark-bundle_2.11;0.5.1-incubating from central in [default] org.apache.spark#spark-avro_2.11;2.4.4 from central in [default] org.spark-project.spark#unused;1.0.0 from spark-list in [default] --------------------------------------------------------------------- | | modules || artifacts | | conf | number| search|dwnlded|evicted|| number|dwnlded| --------------------------------------------------------------------- | default | 3 | 0 | 0 | 0 || 3 | 0 | --------------------------------------------------------------------- :: retrieving :: org.apache.spark#spark-submit-parent-204b7ab2-3e3f-4853-9220-5e038fc99fc2 confs: [default] 0 artifacts copied, 3 already retrieved (0kB/5ms) 20/04/09 09:23:38 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties Setting default log level to "WARN". To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel). Spark context Web UI available at http://10.101.52.18:4040 Spark context available as 'sc' (master = local[*], app id = local-1586395425370). Spark session available as 'spark'. Welcome to ____ __ / __/__ ___ _____/ /__ _\ \/ _ \/ _ `/ __/ '_/ /___/ .__/\_,_/_/ /_/\_\ version 2.4.4 /_/ Using Scala version 2.11.12 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_211) Type in expressions to have them evaluated. Type :help for more information. scala> scala> import org.apache.spark.sql.functions._ import org.apache.spark.sql.functions._ scala> scala> val tableName = "hudi_mor_table" tableName: String = hudi_mor_table scala> val basePath = "file:///tmp/hudi_mor_table" basePath: String = file:///tmp/hudi_mor_table scala> scala> var inputDF = spark.read.format("csv").option("header", "true").load("file:///work/hudi-debug/2.csv") inputDF: org.apache.spark.sql.DataFrame = [tds_cid: string, hit_timestamp: string ... 46 more fields] scala> scala> val hudiOptions = Map[String,String]( | "hoodie.insert.shuffle.parallelism" -> "10", | "hoodie.upsert.shuffle.parallelism" -> "10", | "hoodie.delete.shuffle.parallelism" -> "10", | "hoodie.bulkinsert.shuffle.parallelism" -> "10", | "hoodie.datasource.write.recordkey.field" -> "tds_cid", | "hoodie.datasource.write.partitionpath.field" -> "hit_date", | "hoodie.table.name" -> tableName, | "hoodie.datasource.write.precombine.field" -> "hit_timestamp", | "hoodie.datasource.write.operation" -> "upsert", | "hoodie.memory.merge.max.size" -> "2004857600000", | "hoodie.cleaner.policy" -> "KEEP_LATEST_FILE_VERSIONS", | "hoodie.cleaner.fileversions.retained" -> "1" | ) hudiOptions: scala.collection.immutable.Map[String,String] = Map(hoodie.insert.shuffle.parallelism -> 10, hoodie.datasource.write.precombine.field -> hit_timestamp, hoodie.cleaner.fileversions.retained -> 1, hoodie.delete.shuffle.parallelism -> 10, hoodie.datasource.write.operation -> upsert, hoodie.datasource.write.recordkey.field -> tds_cid, hoodie.table.name -> hudi_mor_table, hoodie.memory.merge.max.size -> 2004857600000, hoodie.cleaner.policy -> KEEP_LATEST_FILE_VERSIONS, hoodie.upsert.shuffle.parallelism -> 10, hoodie.datasource.write.partitionpath.field -> hit_date, hoodie.bulkinsert.shuffle.parallelism -> 10) scala> scala> inputDF.write.format("org.apache.hudi"). | options(hudiOptions). | mode("Append"). | save(basePath) 20/04/09 09:23:53 WARN Utils: Truncated the string representation of a plan since it was too large. This behavior can be adjusted by setting 'spark.debug.maxToStringFields' in SparkEnv.conf. [Stage 3:> (0 + 4) / 10]20/04/09 09:24:55 WARN MemoryStore: Not enough space to cache rdd_16_1 in memory! (computed 87.6 MB so far) 20/04/09 09:24:55 WARN BlockManager: Persisting block rdd_16_1 to disk instead. 20/04/09 09:24:56 WARN MemoryStore: Not enough space to cache rdd_16_2 in memory! (computed 196.9 MB so far) 20/04/09 09:24:56 WARN BlockManager: Persisting block rdd_16_2 to disk instead. 20/04/09 09:24:56 WARN MemoryStore: Not enough space to cache rdd_16_0 in memory! (computed 130.8 MB so far) 20/04/09 09:24:56 WARN BlockManager: Persisting block rdd_16_0 to disk instead. 20/04/09 09:24:56 WARN MemoryStore: Not enough space to cache rdd_16_3 in memory! (computed 196.3 MB so far) 20/04/09 09:24:56 WARN BlockManager: Persisting block rdd_16_3 to disk instead. [Stage 3:=======================> (4 + 4) / 10]20/04/09 09:25:12 WARN MemoryStore: Not enough space to cache rdd_16_6 in memory! (computed 58.5 MB so far) 20/04/09 09:25:12 WARN BlockManager: Persisting block rdd_16_6 to disk instead. 20/04/09 09:25:12 WARN MemoryStore: Not enough space to cache rdd_16_4 in memory! (computed 58.3 MB so far) 20/04/09 09:25:12 WARN BlockManager: Persisting block rdd_16_4 to disk instead. 20/04/09 09:25:12 WARN MemoryStore: Not enough space to cache rdd_16_5 in memory! (computed 87.6 MB so far) 20/04/09 09:25:12 WARN BlockManager: Persisting block rdd_16_5 to disk instead. 20/04/09 09:25:12 WARN MemoryStore: Failed to reserve initial memory threshold of 1024.0 KB for computing block rdd_16_7 in memory. 20/04/09 09:25:12 WARN MemoryStore: Not enough space to cache rdd_16_7 in memory! (computed 0.0 B so far) 20/04/09 09:25:12 WARN BlockManager: Persisting block rdd_16_7 to disk instead. [Stage 3:==============================================> (8 + 2) / 10]20/04/09 09:25:26 WARN MemoryStore: Not enough space to cache rdd_16_9 in memory! (computed 58.4 MB so far) 20/04/09 09:25:26 WARN BlockManager: Persisting block rdd_16_9 to disk instead. 20/04/09 09:25:26 WARN MemoryStore: Not enough space to cache rdd_16_8 in memory! (computed 87.6 MB so far) 20/04/09 09:25:26 WARN BlockManager: Persisting block rdd_16_8 to disk instead. [Stage 14:=======================================> (28 + 4) / 40]20/04/09 09:44:14 WARN MemoryStore: Not enough space to cache rdd_44_30 in memory! (computed 39.3 MB so far) 20/04/09 09:44:14 WARN BlockManager: Persisting block rdd_44_30 to disk instead. 20/04/09 09:44:14 WARN MemoryStore: Not enough space to cache rdd_44_29 in memory! (computed 58.5 MB so far) 20/04/09 09:44:14 WARN BlockManager: Persisting block rdd_44_29 to disk instead. 20/04/09 09:44:14 WARN MemoryStore: Not enough space to cache rdd_44_28 in memory! (computed 58.6 MB so far) 20/04/09 09:44:14 WARN BlockManager: Persisting block rdd_44_28 to disk instead. 20/04/09 09:44:14 WARN MemoryStore: Not enough space to cache rdd_44_31 in memory! (computed 17.3 MB so far) 20/04/09 09:44:14 WARN BlockManager: Persisting block rdd_44_31 to disk instead. [Stage 14:============================================> (32 + 4) / 40]20/04/09 09:44:19 WARN MemoryStore: Not enough space to cache rdd_44_33 in memory! (computed 26.0 MB so far) 20/04/09 09:44:19 WARN BlockManager: Persisting block rdd_44_33 to disk instead. 20/04/09 09:44:19 WARN MemoryStore: Not enough space to cache rdd_44_32 in memory! (computed 25.8 MB so far) 20/04/09 09:44:19 WARN BlockManager: Persisting block rdd_44_32 to disk instead. 20/04/09 09:44:19 WARN MemoryStore: Not enough space to cache rdd_44_35 in memory! (computed 5.2 MB so far) 20/04/09 09:44:19 WARN BlockManager: Persisting block rdd_44_35 to disk instead. 20/04/09 09:44:19 WARN MemoryStore: Not enough space to cache rdd_44_34 in memory! (computed 5.1 MB so far) 20/04/09 09:44:19 WARN BlockManager: Persisting block rdd_44_34 to disk instead. [Stage 14:==================================================> (36 + 4) / 40]20/04/09 09:44:26 WARN MemoryStore: Not enough space to cache rdd_44_38 in memory! (computed 1032.0 KB so far) 20/04/09 09:44:26 WARN BlockManager: Persisting block rdd_44_38 to disk instead. 20/04/09 09:44:26 WARN MemoryStore: Not enough space to cache rdd_44_36 in memory! (computed 3.4 MB so far) 20/04/09 09:44:26 WARN MemoryStore: Not enough space to cache rdd_44_37 in memory! (computed 3.4 MB so far) 20/04/09 09:44:26 WARN BlockManager: Persisting block rdd_44_37 to disk instead. 20/04/09 09:44:26 WARN BlockManager: Persisting block rdd_44_36 to disk instead. 20/04/09 09:44:26 WARN MemoryStore: Not enough space to cache rdd_44_39 in memory! (computed 7.6 MB so far) 20/04/09 09:44:26 WARN BlockManager: Persisting block rdd_44_39 to disk instead. 20/04/09 09:47:19 WARN DefaultSource: Snapshot view not supported yet via data source, for MERGE_ON_READ tables. Please query the Hive table registered using Spark SQL. scala> scala> spark.read.format("org.apache.hudi").load(basePath + "/2020-03-19/*").count(); 20/04/09 09:47:19 WARN DefaultSource: Snapshot view not supported yet via data source, for MERGE_ON_READ tables. Please query the Hive table registered using Spark SQL. res1: Long = 5045301 scala> scala> ```

---------------------------------------------------------------- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: [email protected] With regards, Apache Git Services