KnightChess opened a new issue, #6829:

URL: https://github.com/apache/hudi/issues/6829

As described in #6588.

Stacktrace:

```text
00:34 WARN: [kryo] Unable to load class org.apache.hudi.org.apache.avro.util.Utf8 with kryo's ClassLoader. Retrying with current..
22/09/05 11:17:58 ERROR AbstractHoodieLogRecordReader: Got exception when reading log file
com.esotericsoftware.kryo.KryoException: Unable to find class: org.apache.hudi.org.apache.avro.util.Utf8
Serialization trace:
orderingVal (org.apache.hudi.common.model.DeleteRecord)
	at com.esotericsoftware.kryo.util.DefaultClassResolver.readName(DefaultClassResolver.java:160)
	at com.esotericsoftware.kryo.util.DefaultClassResolver.readClass(DefaultClassResolver.java:133)
	at com.esotericsoftware.kryo.Kryo.readClass(Kryo.java:693)
	at com.esotericsoftware.kryo.serializers.ObjectField.read(ObjectField.java:118)
	at com.esotericsoftware.kryo.serializers.FieldSerializer.read(FieldSerializer.java:543)
	at com.esotericsoftware.kryo.Kryo.readObject(Kryo.java:731)
```

Steps to reproduce the behavior:

1. Use Flink to insert data.
2. Use Spark to query the data.
3. The log file contains a delete block.

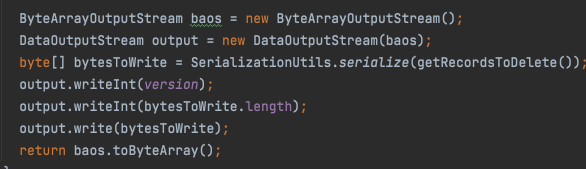

`HoodieDeleteBlock` is serialized with Kryo.

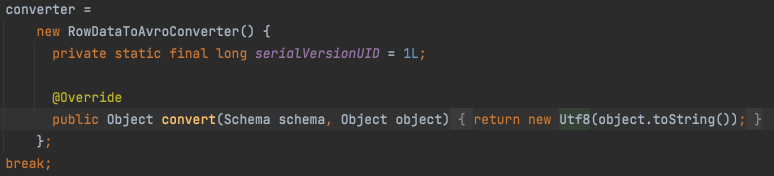

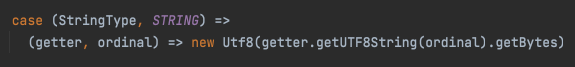
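The stack trace hints at why this matters: the `orderingVal` field of `DeleteRecord` is read through `Kryo.readClass` and `DefaultClassResolver.readName`, which suggests Kryo recorded the concrete class of that value by its fully qualified name. A Flink writer would therefore store the relocated name `org.apache.hudi.org.apache.avro.util.Utf8`. The sketch below illustrates the same idea using JDK serialization (`ObjectOutputStream`) as a dependency-free stand-in for Kryo; it is an analogy, not Hudi's serialization path, and `ClassNameInStream` is a hypothetical helper.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Hypothetical helper. Analogy only: uses JDK serialization instead of Kryo
// to show that an object stream records the writer's fully qualified class name.
final class ClassNameInStream {
    private ClassNameInStream() {}

    // Serialize obj with ObjectOutputStream and return the raw bytes.
    static byte[] serialize(Serializable obj) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(obj);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // True if the class name seen by the writer appears verbatim in the bytes.
    // ISO-8859-1 maps each byte to one char, so ASCII class names match exactly.
    static boolean embedsClassName(Serializable obj) {
        String raw = new String(serialize(obj), StandardCharsets.ISO_8859_1);
        return raw.contains(obj.getClass().getName());
    }
}
```

For a `java.util.ArrayList`, `embedsClassName` returns `true`: the name the writer used travels with the data, so a reader whose classpath lacks that exact class cannot resolve it, much as Spark cannot load the relocated `Utf8`.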

In both Flink and Spark, the `char`, `varchar`, and `string` types are represented as `org.apache.avro.util.Utf8`.


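The Flink bundle relocates Avro, but the Spark and Presto bundles do not. As a result, when a log file contains a delete block written through the Flink bundle, reading it from Spark or Presto fails because the relocated class cannot be found (`KryoException: Unable to find class`). What is the best way to make the bundles compatible?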

```xml
<!-- Flink bundle: relocate Avro classes under the bundle's shade prefix -->
<relocation>
  <pattern>org.apache.avro.</pattern>
  <shadedPattern>${flink.bundle.shade.prefix}org.apache.avro.</shadedPattern>
</relocation>
```
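One possible compatibility approach, sketched here under assumptions, is for the reader to fall back to the unshaded class name when the relocated one cannot be loaded. The class name in the stack trace suggests that `${flink.bundle.shade.prefix}` resolves to `org.apache.hudi.`. `ShadedNameFallback` and `resolve` are hypothetical helpers, not Hudi or Kryo APIs; hooking this logic into deserialization would presumably require a custom Kryo `ClassResolver`, which is not shown.

```java
// Hypothetical helper (not a Hudi or Kryo API): resolves a class name that may
// carry a shade prefix added by Maven relocation in another bundle.
final class ShadedNameFallback {
    private ShadedNameFallback() {}

    // Try the recorded name first; if that class is missing and the name carries
    // shadePrefix, retry with the prefix stripped (the original unrelocated name).
    static Class<?> resolve(String className, String shadePrefix) throws ClassNotFoundException {
        try {
            return Class.forName(className);
        } catch (ClassNotFoundException notFound) {
            if (className.startsWith(shadePrefix)) {
                return Class.forName(className.substring(shadePrefix.length()));
            }
            throw notFound;
        }
    }
}
```

For example, on a classpath that has only the unshaded Avro, `resolve("org.apache.hudi.org.apache.avro.util.Utf8", "org.apache.hudi.")` would return `org.apache.avro.util.Utf8`. The reverse case, a block written through an unshaded bundle and read by the Flink bundle, would likely need the opposite mapping.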
