lucasberlang opened a new issue, #7223: URL: https://github.com/apache/hudi/issues/7223

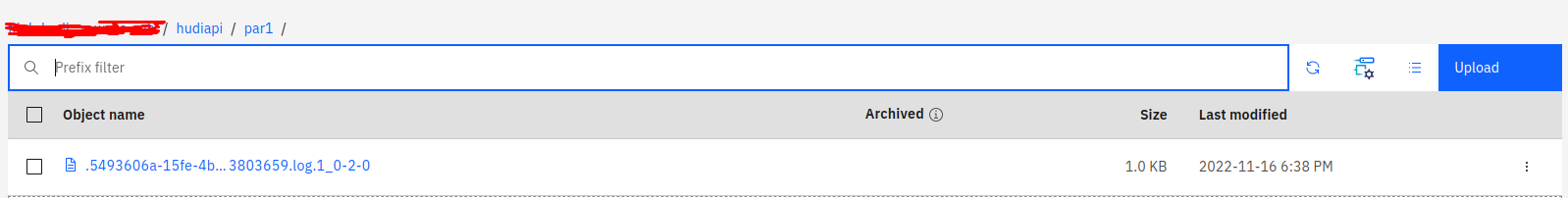

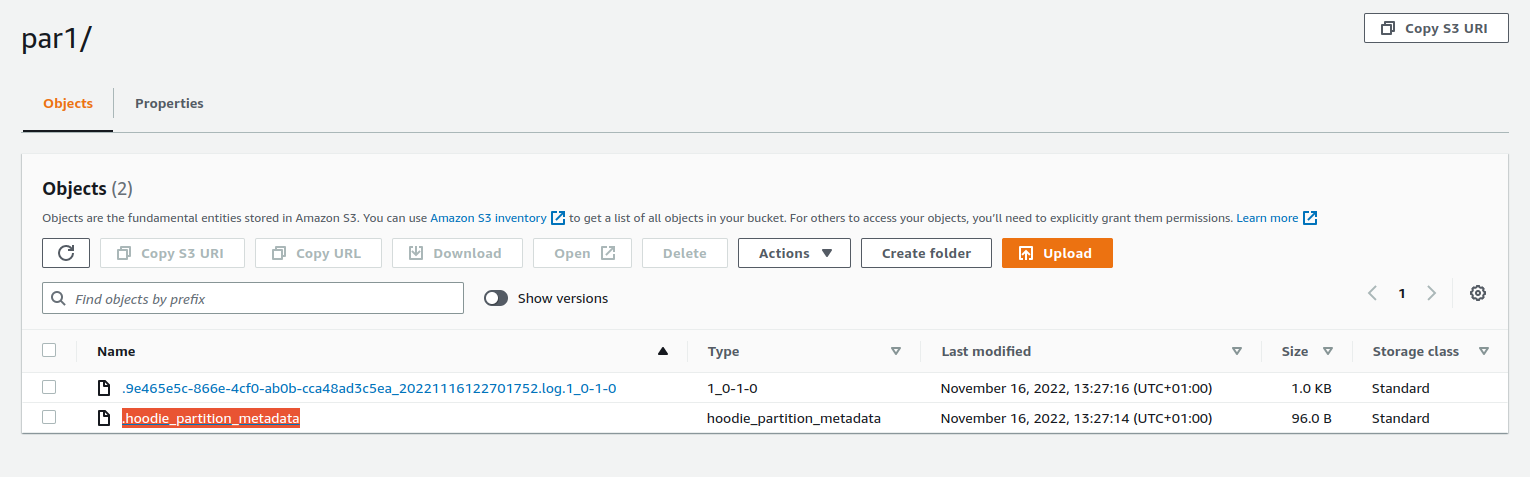

**Description**

I'm running Flink on Kubernetes and trying to write to an IBM Cloud Object Storage (COS) bucket in Hudi format, but the write fails. The partition and its data are written, but the `.hoodie_partition_metadata` file is not. As a result, queries on that table return no data.

**To Reproduce**

Create a table in Hudi format:

```sql
CREATE TABLE t1_hudi(
  uuid VARCHAR(20) PRIMARY KEY NOT ENFORCED,
  name VARCHAR(10),
  age INT,
  ts TIMESTAMP(3),
  `partition` VARCHAR(20)
)
PARTITIONED BY (`partition`)
WITH (
  'connector' = 'hudi',
  'path' = 's3a://${cos_bucket_name}/hudi',
  'table.type' = 'MERGE_ON_READ'
);
```

Insert data into the table:

```sql
INSERT INTO t1_hudi VALUES
  ('id1','Danny',23,TIMESTAMP '1970-01-01 00:00:01','par1'),
  ('id2','Stephen',33,TIMESTAMP '1970-01-01 00:00:02','par1'),
  ('id3','Julian',53,TIMESTAMP '1970-01-01 00:00:03','par2'),
  ('id4','Fabian',31,TIMESTAMP '1970-01-01 00:00:04','par2'),
  ('id5','Sophia',18,TIMESTAMP '1970-01-01 00:00:05','par3'),
  ('id6','Emma',20,TIMESTAMP '1970-01-01 00:00:06','par3'),
  ('id7','Bob',44,TIMESTAMP '1970-01-01 00:00:07','par4'),
  ('id8','Han',56,TIMESTAMP '1970-01-01 00:00:08','par4');
```

**Expected behavior**

I should be able to write to IBM Cloud Object Storage in Hudi format, with the `.hoodie_partition_metadata` file written as it is on AWS S3.

**Environment Description**

* Hudi version : 0.12.0
* Flink version : 1.15.0
* Spark version : N/A
* Hive version : N/A
* Hadoop version : N/A
* Storage (HDFS/S3/GCS..) : IBM COS
* Running on Docker? (yes/no) : yes, GKE

**Additional context**

Properties in core-site.xml:

```xml
<property>
  <name>fs.s3a.access.key</name>
  <value>xxxxxxxxx</value>
</property>
<property>
  <name>fs.s3a.secret.key</name>
  <value>xxxxxxxxx</value>
</property>
<property>
  <name>fs.s3a.awsAccessKeyId </name>
  <value>xxxxxxxxx</value>
</property>
<property>
  <name>fs.s3a.awsSecretAccessKey</name>
  <value>xxxxxxxxx</value>
</property>
<!-- -->
<property>
  <name>fs.s3a.endpoint</name>
  <value>s3.eu-de.cloud-object-storage.appdomain.cloud</value>
</property>
<property>
  <name>fs.s3a.path.style.access</name>
  <value>true</value>
</property>
```

flink-conf.yaml:

```yaml
flink-conf.yaml: |+
  jobmanager.rpc.address: flink-jobmanager-session
  taskmanager.numberOfTaskSlots: 2
  blob.server.port: 6124
  jobmanager.rpc.port: 6123
  taskmanager.rpc.port: 6122
  queryable-state.proxy.ports: 6125
  jobmanager.memory.process.size: 1600m
  taskmanager.memory.process.size: 1728m
  parallelism.default: 2
  execution.checkpointing.interval: 60s
  metrics.reporter.prom.class: org.apache.flink.metrics.prometheus.PrometheusReporter
  metrics.reporters: prom
  metrics.reporter.prom.port: 9249
```

Dockerfile to run Flink with the S3 plugin:

```Dockerfile
ARG FLINK_VERSION
ARG SCALA_VERSION
FROM flink:${FLINK_VERSION}-scala_${SCALA_VERSION}

ARG FLINK_HADOOP_VERSION
ARG GCS_CONNECTOR_VERSION

RUN test -n "$FLINK_HADOOP_VERSION"
RUN test -n "$GCS_CONNECTOR_VERSION"

ARG HUDI_HADOOP_JAR_NAME=hudi-flink1.15-bundle-0.12.0.jar
ARG HUDI_HADOOP_JAR_URI=https://repo.maven.apache.org/maven2/org/apache/hudi/hudi-flink1.15-bundle/0.12.0/hudi-flink1.15-bundle-0.12.0.jar

RUN echo "Downloading ${HUDI_HADOOP_JAR_URI}" && \
  wget -q -O /opt/flink/lib/${HUDI_HADOOP_JAR_NAME} ${HUDI_HADOOP_JAR_URI}

RUN mkdir -p /opt/flink/plugins/flink-s3-fs-hadoop/ && \
  cp /opt/flink/opt/flink-s3-fs-hadoop-1.15.0.jar /opt/flink/plugins/flink-s3-fs-hadoop/ && \
  cp /opt/flink/opt/flink-s3-fs-hadoop-1.15.0.jar /opt/flink/lib/
```

Properties:

```properties
FLINK_VERSION ?= 1.15.0
SCALA_VERSION ?= 2.12
FLINK_HADOOP_VERSION ?= 2.8.3-9.0
GCS_CONNECTOR_VERSION ?= hadoop3-2.2.6
PYTHON_VERSION ?=
```

**Stacktrace**

```
2022-11-16 17:27:55,475 WARN  org.apache.hudi.common.model.HoodiePartitionMetadata [] - Error trying to save partition metadata (this is okay, as long as atleast 1 of these succced), s3a://xxxx/hudiapi/par3
org.apache.hadoop.fs.s3a.AWSBadRequestException: copyFile(hudiapi/par3/.hoodie_partition_metadata_0, hudiapi/par3/.hoodie_partition_metadata) on hudiapi/par3/.hoodie_partition_metadata_0: com.amazonaws.services.s3.model.AmazonS3Exception: Requests specifying Server-Side Encryption with Customer-Provided Keys must provide a valid encryption algorithm. (Service: Amazon S3; Status Code: 400; Error Code: InvalidArgument; Request ID: 875c15a3-fef5-4f1c-b68a-2097e3c230f6; S3 Extended Request ID: null; Proxy: null), S3 Extended Request ID: null:InvalidArgument: Requests specifying Server-Side Encryption with Customer-Provided Keys must provide a valid encryption algorithm. (Service: Amazon S3; Status Code: 400; Error Code: InvalidArgument; Request ID: 875c15a3-fef5-4f1c-b68a-2097e3c230f6; S3 Extended Request ID: null; Proxy: null)
	at org.apache.hadoop.fs.s3a.S3AUtils.translateException(S3AUtils.java:224) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at org.apache.hadoop.fs.s3a.Invoker.once(Invoker.java:111) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at org.apache.hadoop.fs.s3a.Invoker.once(Invoker.java:125) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at org.apache.hadoop.fs.s3a.S3AFileSystem.copyFile(S3AFileSystem.java:2581) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at org.apache.hadoop.fs.s3a.S3AFileSystem.innerRename(S3AFileSystem.java:1054) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at org.apache.hadoop.fs.s3a.S3AFileSystem.rename(S3AFileSystem.java:915) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at org.apache.hudi.common.fs.HoodieWrapperFileSystem.lambda$rename$13(HoodieWrapperFileSystem.java:327) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.common.fs.HoodieWrapperFileSystem.executeFuncWithTimeMetrics(HoodieWrapperFileSystem.java:106) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.common.fs.HoodieWrapperFileSystem.rename(HoodieWrapperFileSystem.java:320) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.common.model.HoodiePartitionMetadata.trySave(HoodiePartitionMetadata.java:121) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.io.HoodieAppendHandle.init(HoodieAppendHandle.java:177) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.io.HoodieAppendHandle.write(HoodieAppendHandle.java:424) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.io.HoodieWriteHandle.write(HoodieWriteHandle.java:225) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.execution.ExplicitWriteHandler.consumeOneRecord(ExplicitWriteHandler.java:47) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.execution.ExplicitWriteHandler.consumeOneRecord(ExplicitWriteHandler.java:33) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.common.util.queue.BoundedInMemoryQueueConsumer.consume(BoundedInMemoryQueueConsumer.java:37) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at org.apache.hudi.common.util.queue.BoundedInMemoryExecutor.lambda$null$2(BoundedInMemoryExecutor.java:135) ~[hudi-flink1.15-bundle-0.12.0.jar:0.12.0]
	at java.util.concurrent.FutureTask.run(Unknown Source) [?:?]
	at java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source) [?:?]
	at java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source) [?:?]
	at java.lang.Thread.run(Unknown Source) [?:?]
Caused by: com.amazonaws.services.s3.model.AmazonS3Exception: Requests specifying Server-Side Encryption with Customer-Provided Keys must provide a valid encryption algorithm. (Service: Amazon S3; Status Code: 400; Error Code: InvalidArgument; Request ID: 875c15a3-fef5-4f1c-b68a-2097e3c230f6; S3 Extended Request ID: null; Proxy: null)
	at com.amazonaws.http.AmazonHttpClient$RequestExecutor.handleErrorResponse(AmazonHttpClient.java:1819) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient$RequestExecutor.handleServiceErrorResponse(AmazonHttpClient.java:1403) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient$RequestExecutor.executeOneRequest(AmazonHttpClient.java:1372) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient$RequestExecutor.executeHelper(AmazonHttpClient.java:1145) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient$RequestExecutor.doExecute(AmazonHttpClient.java:802) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient$RequestExecutor.executeWithTimer(AmazonHttpClient.java:770) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient$RequestExecutor.execute(AmazonHttpClient.java:744) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient$RequestExecutor.access$500(AmazonHttpClient.java:704) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient$RequestExecutionBuilderImpl.execute(AmazonHttpClient.java:686) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient.execute(AmazonHttpClient.java:550) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.http.AmazonHttpClient.execute(AmazonHttpClient.java:530) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.services.s3.AmazonS3Client.invoke(AmazonS3Client.java:5259) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.services.s3.AmazonS3Client.invoke(AmazonS3Client.java:5206) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.services.s3.AmazonS3Client.copyObject(AmazonS3Client.java:2057) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.services.s3.transfer.internal.CopyCallable.copyInOneChunk(CopyCallable.java:145) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.services.s3.transfer.internal.CopyCallable.call(CopyCallable.java:133) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.services.s3.transfer.internal.CopyMonitor.call(CopyMonitor.java:132) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
	at com.amazonaws.services.s3.transfer.internal.CopyMonitor.call(CopyMonitor.java:43) ~[flink-s3-fs-hadoop-1.15.0.jar:1.15.0]
```

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.

To unsubscribe, e-mail: [email protected]

For queries about this service, please contact Infrastructure at: [email protected]