BohanZhang0222 opened a new issue, #8254: URL: https://github.com/apache/hudi/issues/8254

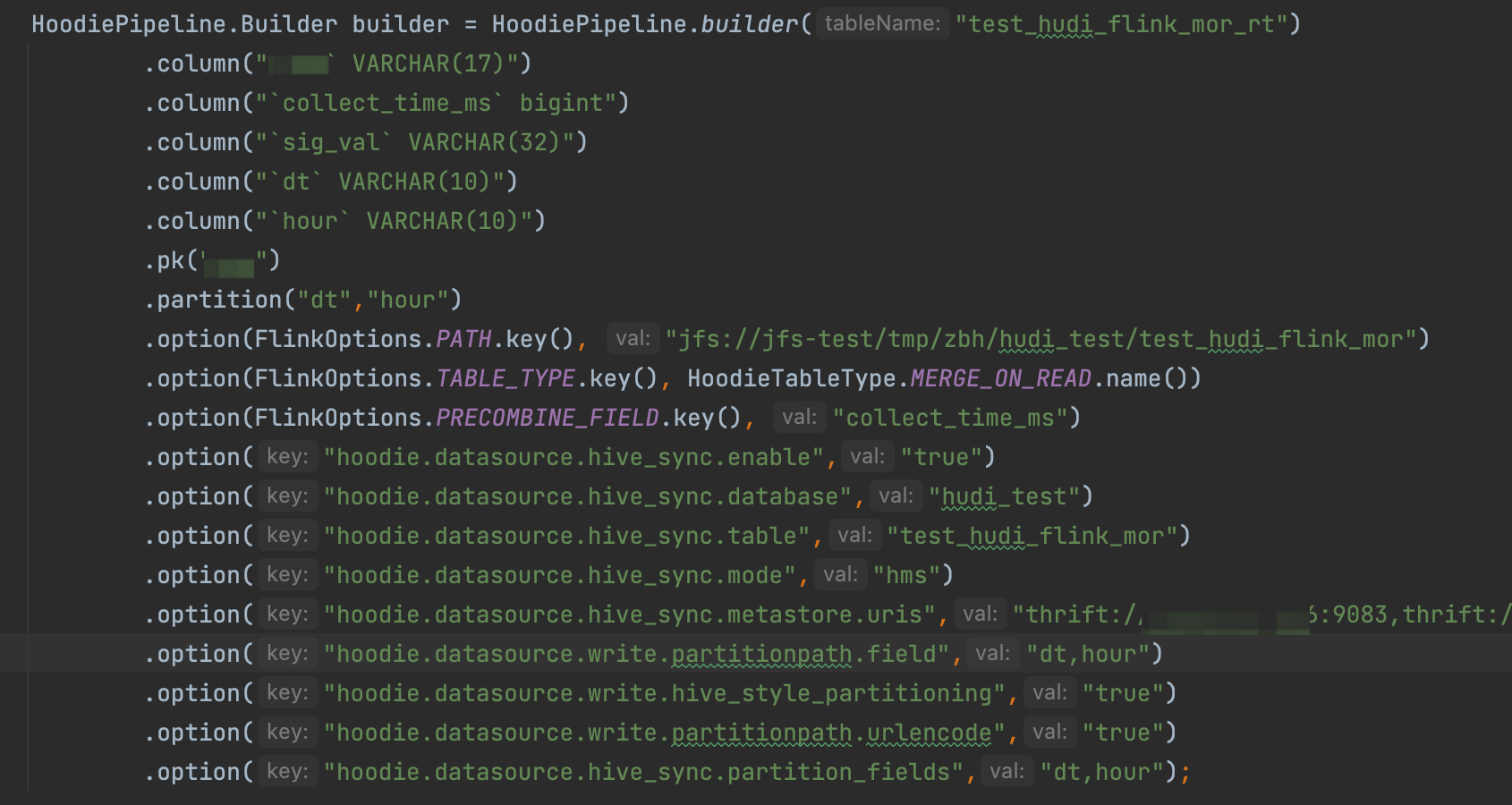

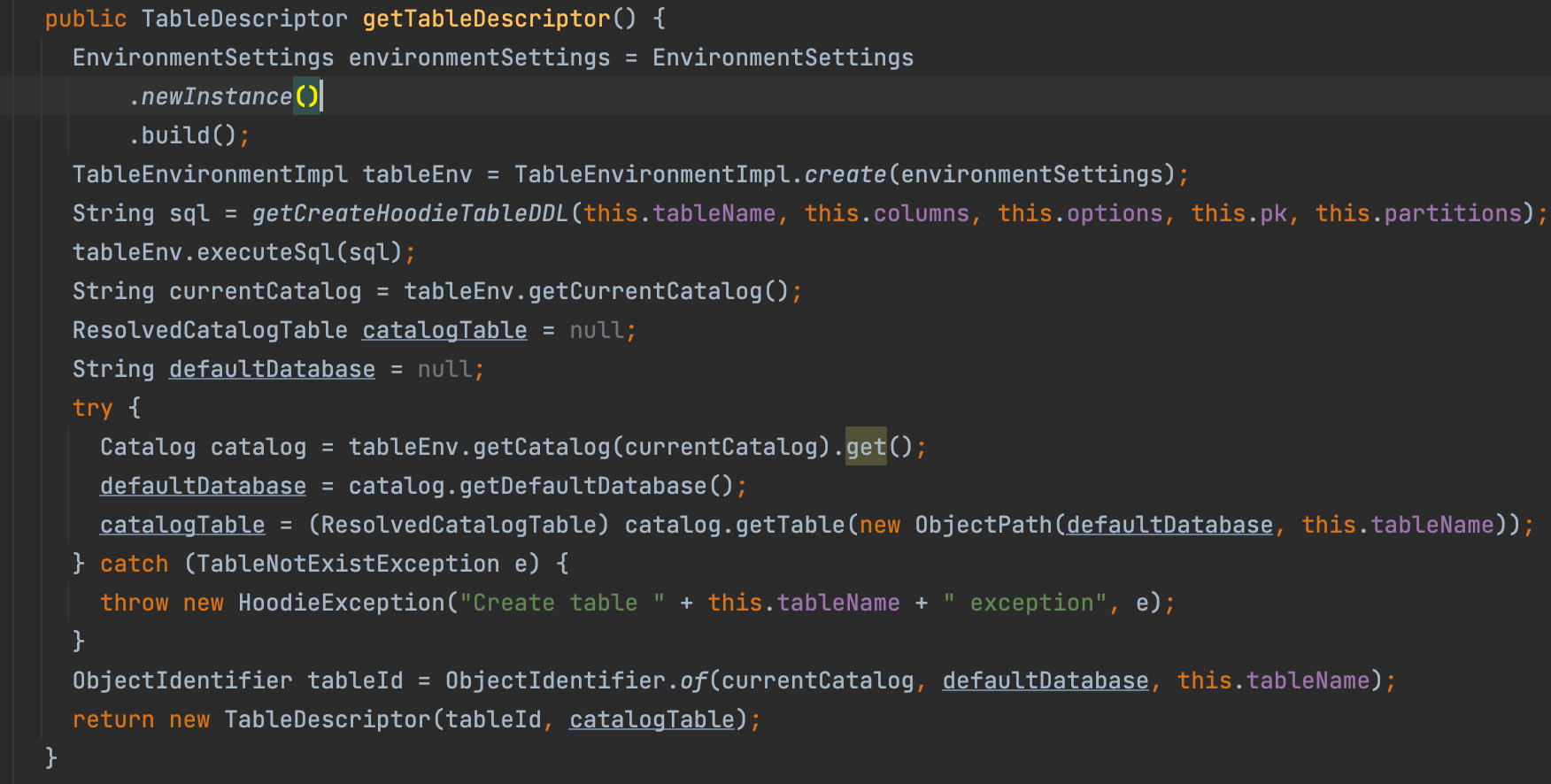

**Describe the problem you faced**

I write to a Hudi table using the Flink DataStream API, and I want its metadata to be managed by the Hive Metastore (HMS). My code is shown in the screenshots above, but I don't know how to plug in a Hive catalog.

**To Reproduce**

Steps to reproduce the behavior:

1. Write data with the Flink DataStream API.
2. Run `show tables` against HMS.

**Expected behavior**

The table is visible in HMS.

**Environment Description**

* Hudi version : 0.13.0
* Flink version : 1.14
* Hive version : 1.2.1
* Hadoop version : 2.7.6
* Storage (HDFS/S3/GCS..) : HDFS
* Running on Docker? (yes/no) : no

**Additional context**

I noticed that `org.apache.hudi.util.HoodiePipeline` initializes its own `TableEnvironment` internally but offers no way to pass parameters to it. Does this mean Hudi currently does not support writing data through the API while syncing the table metadata to HMS? Is my understanding correct?
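If Hudi's Flink writer performs Hive sync from its own table options (as it does for Flink SQL tables declared with `'connector' = 'hudi'`), registering a Hive catalog may not be necessary: the `hive_sync.*` options could instead be passed through `HoodiePipeline.Builder#options`. The sketch below only assembles such an options map. The helper class `HudiHiveSyncOptions`, the HDFS path, the metastore URI, and the database/table names are hypothetical placeholders, and the option keys are taken from the `hive_sync.*` table options documented for Hudi's Flink SQL connector, so they should be checked against the `FlinkOptions` class shipped with 0.13.0.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/**
 * Hypothetical helper (not part of Hudi) that collects Hudi Flink write
 * options for syncing a table's metadata to the Hive Metastore.
 * Assumption: HoodiePipeline honors these keys the same way the Flink SQL
 * 'hudi' connector does.
 */
final class HudiHiveSyncOptions {

  private HudiHiveSyncOptions() {}

  static Map<String, String> hiveSyncOptions(
      String basePath, String metastoreUris, String hiveDb, String hiveTable) {
    Map<String, String> options = new LinkedHashMap<>();
    // Base path of the Hudi table on HDFS.
    options.put("path", basePath);
    // Enable asynchronous metadata sync to Hive (disabled by default).
    options.put("hive_sync.enable", "true");
    // Use the metastore client ("hms" mode) rather than the JDBC mode.
    options.put("hive_sync.mode", "hms");
    // Thrift URI(s) of the Hive Metastore service.
    options.put("hive_sync.metastore.uris", metastoreUris);
    // Database and table name under which the table should appear in HMS.
    options.put("hive_sync.db", hiveDb);
    options.put("hive_sync.table", hiveTable);
    return options;
  }

  public static void main(String[] args) {
    Map<String, String> options = hiveSyncOptions(
        "hdfs:///tmp/hudi/t1", "thrift://localhost:9083", "default", "t1");
    // Print each option so it can be compared with FlinkOptions keys.
    options.forEach((k, v) -> System.out.println(k + " = " + v));
  }
}
```

The resulting map would then be handed to `HoodiePipeline.builder("t1")` via `.options(options)`, alongside the usual `.column(...)`, `.pk(...)` and `.partition(...)` schema calls, before `.sink(dataStream, false)` and `env.execute(...)`. Keeping the sync settings in the writer options, rather than in a catalog, means the same map works whether or not a `TableEnvironment` is exposed to the caller.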