guanziyue commented on code in PR #4913:

URL: https://github.com/apache/hudi/pull/4913#discussion_r1232272443

##########

hudi-client/hudi-client-common/src/main/java/org/apache/hudi/io/HoodieWriteHandle.java:

##########

@@ -273,4 +280,31 @@ protected static Option<IndexedRecord> toAvroRecord(HoodieRecord record, Schema
 return Option.empty();
 }
 }
+
+ protected class AppendLogWriteCallback implements HoodieLogFileWriteCallback {
+ // here we distinguish log files created from log files being appended. Considering following scenario:
+ // An appending task write to log file.
+ // (1) append to existing file file_instant_writetoken1.log.1
+ // (2) rollover and create file file_instant_writetoken2.log.2
+ // Then this task failed and retry by a new task.
+ // (3) append to existing file file_instant_writetoken1.log.1
+ // (4) rollover and create file file_instant_writetoken3.log.2
+ // finally file_instant_writetoken2.log.2 should not be committed to hudi, we use marker file to delete it.
+ // keep in mind that log file is not always fail-safe unless it never roll over
+

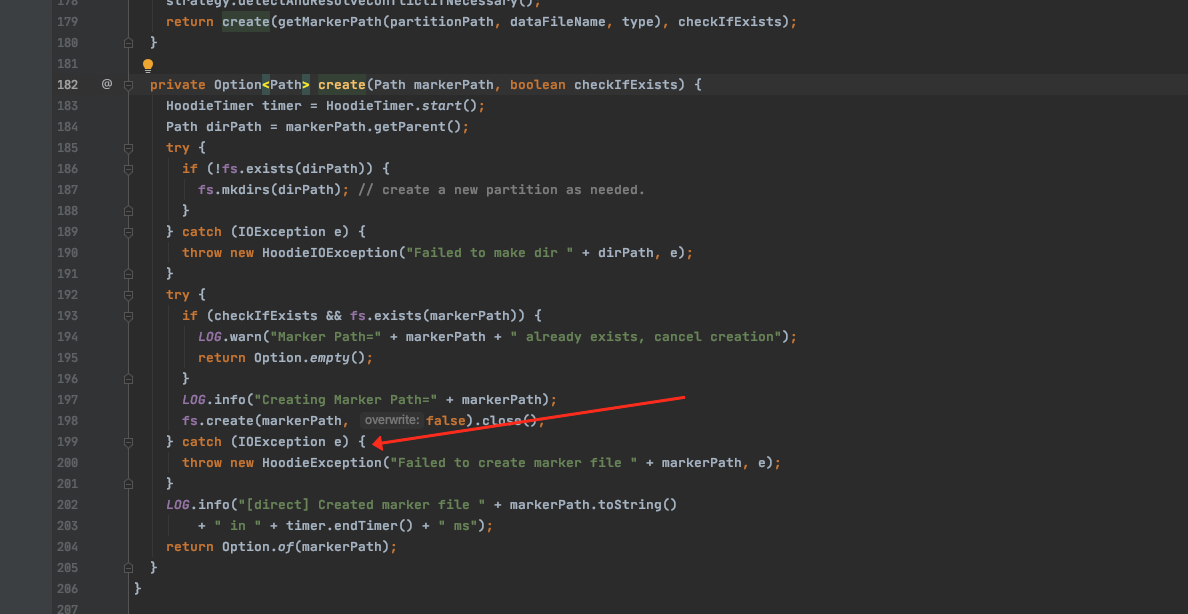

Review Comment:

> Under this case, if we use direct marker type, the new task would always get an exception when it call `preLogFileOpen` because we use `create` instead of `createIfNotExist()`?

Thanks for raising this. It would indeed be a problem in Spark when speculation is enabled, and I can put together a fix for that. But you mentioned "always": do you use Hudi on Flink? Could you kindly share the stack trace?
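For reference, the difference between the two creation modes in the quoted question can be sketched with plain `java.nio` files on a local filesystem. This is only an illustration under assumptions: the class `MarkerCreateSketch`, its methods `createStrict` and `createIfNotExists`, and the marker file name are hypothetical stand-ins, not Hudi's marker API.

```java
import java.io.IOException;
import java.nio.file.FileAlreadyExistsException;
import java.nio.file.Files;
import java.nio.file.Path;

// Hypothetical sketch: local files stand in for marker files.
public class MarkerCreateSketch {

  // Strict variant: fails when the marker is already present,
  // which is what a retried task would hit for an appended log file.
  public static void createStrict(Path marker) throws IOException {
    Files.createFile(marker); // throws FileAlreadyExistsException if it exists
  }

  // Idempotent variant: returns true if this call created the marker,
  // false if an earlier attempt had already created it.
  public static boolean createIfNotExists(Path marker) throws IOException {
    try {
      Files.createFile(marker);
      return true;
    } catch (FileAlreadyExistsException e) {
      return false;
    }
  }

  public static void main(String[] args) throws IOException {
    Path dir = Files.createTempDirectory("markers");
    // Hypothetical marker name for the log file appended in steps (1) and (3).
    Path marker = dir.resolve("file_instant_writetoken1.log.1.marker");

    createStrict(marker); // first task attempt, step (1)

    try {
      createStrict(marker); // retried attempt, step (3)
      System.out.println("strict create succeeded on retry");
    } catch (FileAlreadyExistsException e) {
      System.out.println("strict create failed on retry");
    }

    boolean created = createIfNotExists(marker);
    System.out.println("idempotent create made a new marker: " + created);
  }
}
```

With the idempotent variant, a retried or speculative attempt that appends to the same log file can proceed, while the boolean still tells the caller whether the marker was already there.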
