somebol opened a new issue #1757:

URL: https://github.com/apache/hudi/issues/1757

Hi Team,

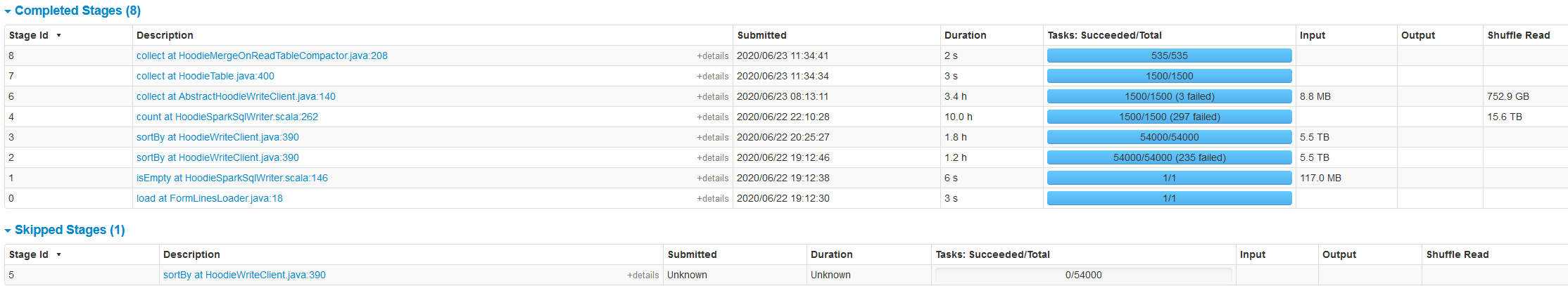

We are trying to load a very large dataset into Hudi. The bulk insert job took about 16.5 hours to complete. The job was run with vanilla settings, without any optimisations.

How can we tune the job to make it run faster?

**Dataset**

* Storage / format: Parquet files on HDFS
* Size: 5.5 TB
* Number of files: 27,000
* Number of records: ~300 billion
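As a rough sanity check, the dataset figures and the 16.5-hour runtime can be turned into per-file, per-record and throughput averages. The sketch below assumes decimal units (1 TB = 10^12 bytes, 1 MB = 10^6 bytes) and counts the whole runtime as write time. `DatasetStats` and its methods are hypothetical names used only for illustration.

```java
// Back-of-the-envelope averages from the figures reported in the issue.
// Assumption: decimal units (1 TB = 1e12 bytes, 1 MB = 1e6 bytes) and the
// full 16.5-hour runtime counts as write time.
public class DatasetStats {
    static final double DATASET_BYTES = 5.5e12; // 5.5 TB of Parquet on HDFS
    static final long FILE_COUNT = 27_000L;      // number of input files
    static final double RECORD_COUNT = 300e9;    // ~300 billion records
    static final double JOB_HOURS = 16.5;        // observed bulk insert runtime

    /** Average input file size in MB. */
    static double avgFileSizeMb(double bytes, long files) {
        return bytes / files / 1e6;
    }

    /** Average compressed Parquet bytes per record. */
    static double bytesPerRecord(double bytes, double records) {
        return bytes / records;
    }

    /** End-to-end throughput in MB per second. */
    static double throughputMbPerSec(double bytes, double hours) {
        return bytes / (hours * 3600.0) / 1e6;
    }

    /** End-to-end throughput in records per second. */
    static double recordsPerSec(double records, double hours) {
        return records / (hours * 3600.0);
    }

    public static void main(String[] args) {
        System.out.printf("avg file size    : %.1f MB%n", avgFileSizeMb(DATASET_BYTES, FILE_COUNT));
        System.out.printf("bytes per record : %.1f%n", bytesPerRecord(DATASET_BYTES, RECORD_COUNT));
        System.out.printf("throughput       : %.1f MB/s%n", throughputMbPerSec(DATASET_BYTES, JOB_HOURS));
        System.out.printf("records per sec  : %.0f%n", recordsPerSec(RECORD_COUNT, JOB_HOURS));
    }
}
```

Under these assumptions the input averages roughly 204 MB per file and about 18 bytes of compressed Parquet per record, and the job sustained roughly 93 MB/s, or about 5 million records per second, end to end.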

**hudi options**

```java
.option(RECORDKEY_FIELD_OPT_KEY(), "id")
.option(PARTITIONPATH_FIELD_OPT_KEY(), "<partition>")
.option(PRECOMBINE_FIELD_OPT_KEY(), "<ts>")
.option(HIVE_STYLE_PARTITIONING_OPT_KEY(), "true")
.option(TABLE_TYPE_OPT_KEY(), MOR_TABLE_TYPE_OPT_VAL())
.option(OPERATION_OPT_KEY(), BULK_INSERT_OPERATION_OPT_VAL())
.option(TABLE_NAME, "<name>")
```
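When comparing these settings against the Hudi configuration docs, it can help to see the raw configuration keys that the `DataSourceWriteOptions` constants resolve to. The following is a sketch, assuming the key strings used by Hudi 0.5.x; `HudiBulkInsertOptions` is a hypothetical helper, and the `<partition>`, `<ts>` and `<name>` placeholders are kept from the issue. The resulting map could be passed to Spark's `DataFrameWriter.options(java.util.Map)`.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: the write options above, spelled out as the raw keys
// that the DataSourceWriteOptions constants map to (assumed: Hudi 0.5.x).
public class HudiBulkInsertOptions {
    static Map<String, String> options(String partitionField, String precombineField, String tableName) {
        Map<String, String> opts = new LinkedHashMap<>(); // keeps insertion order for printing
        opts.put("hoodie.datasource.write.recordkey.field", "id");
        opts.put("hoodie.datasource.write.partitionpath.field", partitionField);
        opts.put("hoodie.datasource.write.precombine.field", precombineField);
        opts.put("hoodie.datasource.write.hive_style_partitioning", "true");
        opts.put("hoodie.datasource.write.table.type", "MERGE_ON_READ");
        opts.put("hoodie.datasource.write.operation", "bulk_insert");
        opts.put("hoodie.table.name", tableName);
        return opts;
    }

    public static void main(String[] args) {
        // Placeholders copied from the issue; substitute real column and table names.
        options("<partition>", "<ts>", "<name>")
            .forEach((key, value) -> System.out.println(key + " = " + value));
    }
}
```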

**spark conf**

```java
conf.set("spark.debug.maxToStringFields", "100");
conf.set("spark.sql.shuffle.partitions", "2001");
conf.set("spark.sql.warehouse.dir", "/user/hive/warehouse");
conf.set("spark.sql.autoBroadcastJoinThreshold", "31457280");
conf.set("spark.sql.hive.filesourcePartitionFileCacheSize", "2000000000");
conf.set("spark.sql.sources.partitionOverwriteMode", "dynamic");
conf.set("mapreduce.input.fileinputformat.input.dir.recursive", "true");
conf.set("spark.storage.replication.proactive", "true");
```
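Two of these settings are raw byte counts. Converting them to binary units shows that the broadcast join threshold is raised to 30 MiB (Spark's default is 10 MiB) and the partition file cache is sized at about 1.86 GiB. `SparkConfUnits` is a hypothetical helper for this conversion only.

```java
// Converts the byte-valued Spark settings above into binary units
// (1 MiB = 2^20 bytes, 1 GiB = 2^30 bytes) for readability.
public class SparkConfUnits {
    static final long MIB = 1L << 20;
    static final long GIB = 1L << 30;

    static double toMib(long bytes) { return (double) bytes / MIB; }

    static double toGib(long bytes) { return (double) bytes / GIB; }

    public static void main(String[] args) {
        // spark.sql.autoBroadcastJoinThreshold = 31457280
        System.out.printf("autoBroadcastJoinThreshold      : %.1f MiB%n", toMib(31_457_280L));
        // spark.sql.hive.filesourcePartitionFileCacheSize = 2000000000
        System.out.printf("filesourcePartitionFileCacheSize: %.2f GiB%n", toGib(2_000_000_000L));
    }
}
```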

**spark submit**

```bash
SPARK_CMD="spark2-submit \
  --files log4j.properties \
  --conf "spark.driver.extraJavaOptions=${log4j_setting}" \
  --conf "spark.executor.extraJavaOptions=${log4j_setting}" \
  --conf spark.kryoserializer.buffer.max=2040M \
  --num-executors 25 \
  --executor-cores 5 \
  --driver-memory 8G \
  --executor-memory 21G \
  --master yarn \
  --deploy-mode client
```
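The submit flags imply the executor footprint computed below. This sketch assumes one task per core (Spark's default `spark.task.cpus=1`) and ignores YARN memory overhead and the driver, since neither is specified here; `ExecutorFootprint` is a hypothetical name. The wave count applies to any stage with 2001 tasks, such as a SQL shuffle stage sized by `spark.sql.shuffle.partitions`.

```java
// Executor footprint implied by the spark-submit flags
// (--num-executors 25, --executor-cores 5, --executor-memory 21G).
// Assumes one task per core and ignores YARN memory overhead and the driver.
public class ExecutorFootprint {
    static int totalCores(int executors, int coresPerExecutor) {
        return executors * coresPerExecutor;
    }

    static int totalHeapGib(int executors, int heapGibPerExecutor) {
        return executors * heapGibPerExecutor;
    }

    /** Scheduling waves needed to run every task of one stage (ceiling division). */
    static int waves(int tasks, int cores) {
        return (tasks + cores - 1) / cores;
    }

    /** Number of tasks running in the final, possibly partial, wave. */
    static int lastWaveTasks(int tasks, int cores) {
        return tasks - (waves(tasks, cores) - 1) * cores;
    }

    public static void main(String[] args) {
        int cores = totalCores(25, 5);
        System.out.println("total executor cores : " + cores);
        System.out.println("total executor heap  : " + totalHeapGib(25, 21) + " GiB");
        System.out.println("heap per core        : " + (21.0 / 5) + " GiB");
        System.out.println("waves for 2001 tasks : " + waves(2001, cores));
        System.out.println("tasks in last wave   : " + lastWaveTasks(2001, cores));
    }
}
```

With 125 concurrent task slots and 525 GiB of executor heap (4.2 GiB per core), a 2001-task stage runs in 17 waves, the last of which keeps only one core busy.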

**Environment Description**

* Hudi version : 0.5.3
* Spark version : 2.4.0
* Cloudera (CDH) version : 6.3.3
* Hadoop version : 3.0.0
* Storage (HDFS/S3/GCS..) : HDFS
* Running on Docker? (yes/no) : No

**screenshot of spark stages**

----------------------------------------------------------------

This is an automated message from the Apache Git Service.
