nylqd commented on issue #9269: URL: https://github.com/apache/hudi/issues/9269#issuecomment-1653356214

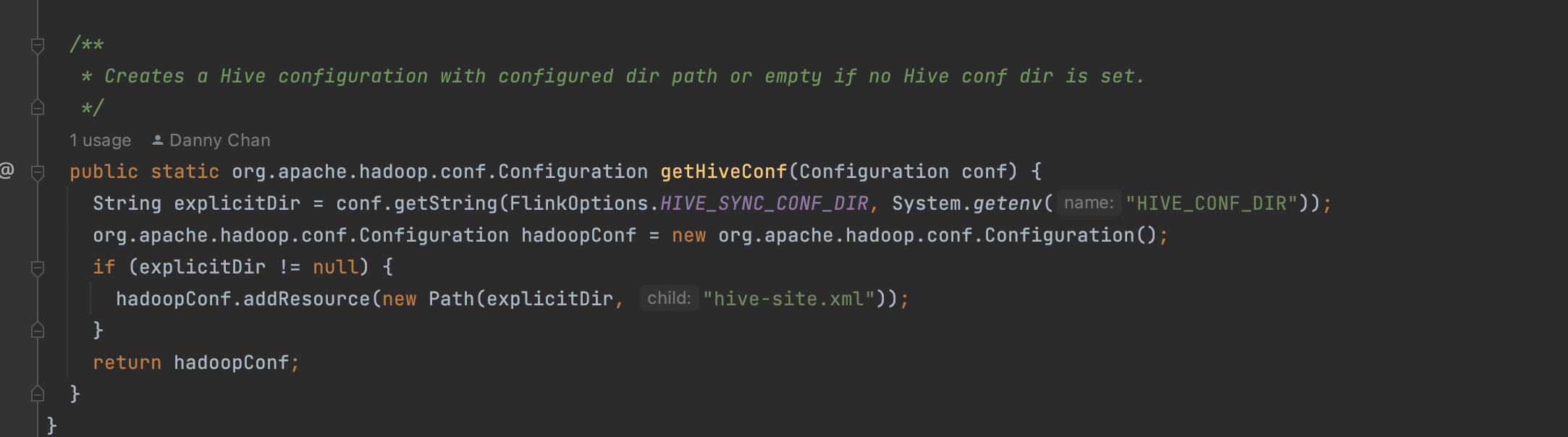

> > > hdfs path, since the code running on yarn and my hive-site.xml is in a local dir
> > >
> > > I guess you are right, the hive conf dir is only valid for the catalog itself, not the job, the catalog does not pass around all the hive related config options to the job.
> > >
> > > Maybe you can fire a fix for it, in the HoodieHiveCatalog, when generating a new catalog table, config the hive options through `hadoop.` prefix.
> > >
> > > Another way is to config the system variable: `HIVE_CONF_DIR`:
> >
> > thx for ur clarification, after set hive tblproperties from hive-site.xml with prefix `hadoop.`, we finally sync schema successfully
> >
> > next step, we gonna try to set those properties in the HoodieHiveCatalog

After some digging, we found that adding all the `hive-site.xml` key-value pairs in `HoodieHiveCatalog.instantiateHiveTable()` works.

But there are far too many properties, and we found that these are the ones that need to be added:

- hive.metastore.uris
- hive.metastore.sasl.enabled
- hive.metastore.kerberos.principal

Should we add all the properties, or just the specified ones?

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

To unsubscribe, e-mail: [email protected]

For queries about this service, please contact Infrastructure at:
[email protected]