jiegzhan opened a new issue #1980: URL: https://github.com/apache/hudi/issues/1980

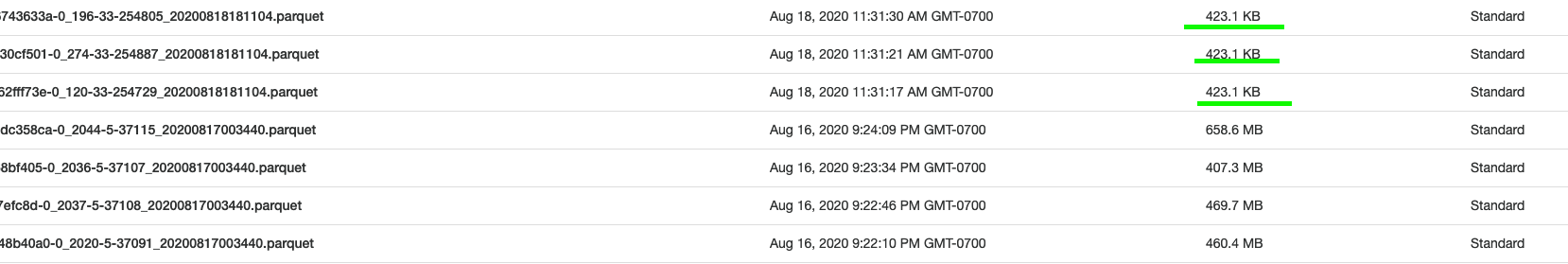

I ran a `delete` query on my Hudi table and found that it generated a lot of new small files (423 KB; see screenshot). Is there a way to control the size of these files when running a `delete` query? Basically, I am trying to avoid ending up with many small files after a delete.

This is my delete query:

```
// Imports needed to run this in spark-shell
import org.apache.spark.sql.functions.col
import org.apache.spark.sql.SaveMode._
import org.apache.hudi.DataSourceWriteOptions._
import org.apache.hudi.config.HoodieWriteConfig._
import org.apache.hudi.hive.MultiPartKeysValueExtractor

// Select the records to delete: every row whose device_id starts with "D"
val deleteExistingRecords = spark.read.format("org.apache.hudi").
  load(basePath + "/*/*").
  where(col("device_id").startsWith("D"))

// Write the selected records back with the delete operation
deleteExistingRecords.write.format("org.apache.hudi").
  option("hoodie.datasource.write.operation", "delete").
  option("hoodie.parquet.max.file.size", 2000000000).
  option("hoodie.parquet.block.size", 2000000000).
  option("hoodie.parquet.small.file.limit", 512000000).
  option(TABLE_NAME, tableName).
  option(TABLE_TYPE_OPT_KEY, "COPY_ON_WRITE").
  option(RECORDKEY_FIELD_OPT_KEY, "device_id").
  option(PRECOMBINE_FIELD_OPT_KEY, "device_id").
  option(PARTITIONPATH_FIELD_OPT_KEY, "date_key").
  option(HIVE_SYNC_ENABLED_OPT_KEY, "true").
  option(HIVE_DATABASE_OPT_KEY, "default").
  option(HIVE_TABLE_OPT_KEY, "hudi_fact_device_logs").
  option(HIVE_USER_OPT_KEY, "hadoop").
  option(HIVE_PARTITION_FIELDS_OPT_KEY, "date_key").
  option(HIVE_PARTITION_EXTRACTOR_CLASS_OPT_KEY, classOf[MultiPartKeysValueExtractor].getName).
  mode(Append).
  save(basePath)
```