WTa-hash commented on issue #2057: URL: https://github.com/apache/hudi/issues/2057#issuecomment-685015564

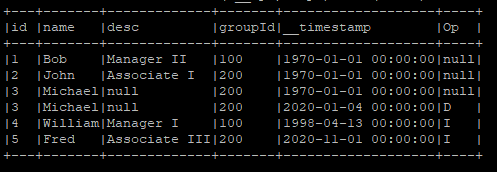

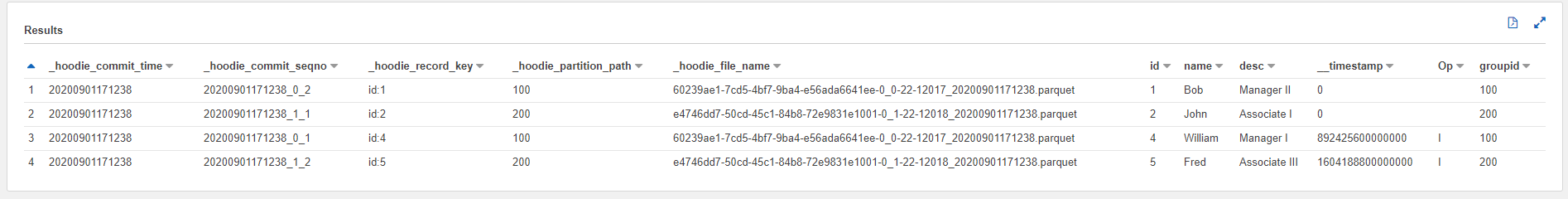

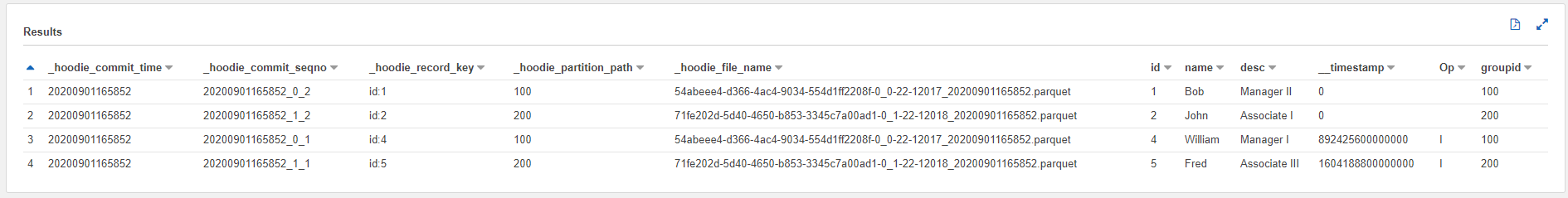

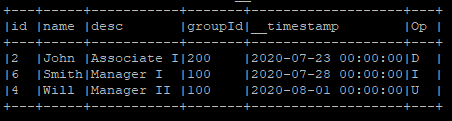

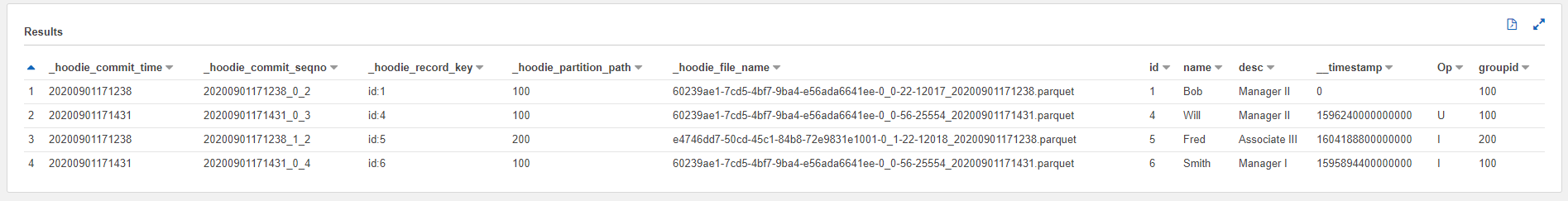

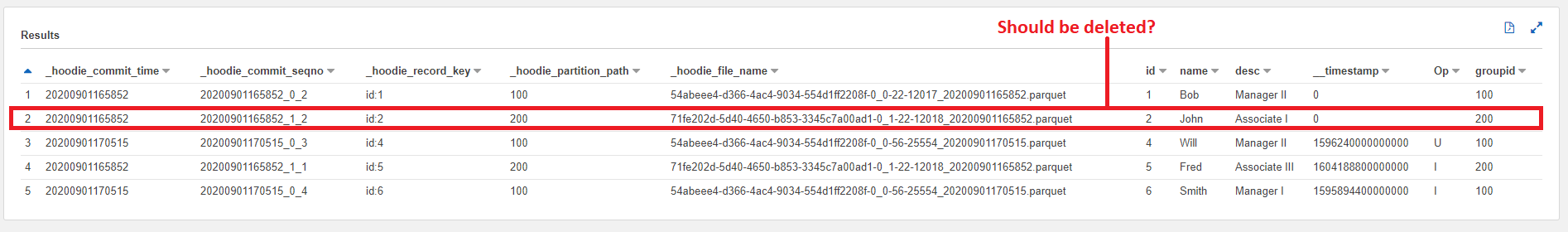

Regarding the 0.6.0 issue with the error mentioned above, `java.lang.NoSuchMethodError: org.apache.spark.sql.execution.datasources.PartitionedFile.<init>(Lorg/apache/spark/sql/catalyst/InternalRow;Ljava/lang/String;JJ[Ljava/lang/String;)V`: I can get past it if I query the table with AWS Athena and remove the Spark reads from the script. However, this brings up another issue.

Keep in mind that I am using the custom `AWSDmsAvroPayload` class referenced in https://issues.apache.org/jira/browse/HUDI-802, with Hudi 0.6.0.

Using Hudi 0.6.0, I first create a Hudi table from this dataframe (image 1).

If I create a COW table, the dataframe is processed correctly (image 2).

If I create a MOR table, the dataframe is also processed correctly, as seen in the read-optimized table (image 3).

Next, I write a new dataframe containing some data changes to the existing Hudi table (image 4).

The updates are processed correctly in the COW table (image 5).

However, in the MOR table the deletion of the record with ID=2 was not applied (image 6). No errors were raised while processing the MOR table.
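To make the expected outcome concrete, here is a minimal pure-Python model of the merge semantics a DMS-aware payload should produce. It is not Hudi code and says nothing about how COW or MOR tables store or read data. It assumes the behavior of Hudi's `AWSDmsAvroPayload`, which treats a record whose `Op` column equals `D` (case-insensitive) as a delete. The key field `id`, the ordering field `ts`, the sample rows and the `apply_dms_batch` helper are all hypothetical.

```python
from typing import Dict, List

# Hypothetical row layout: "id" is the record key, "ts" the ordering
# (precombine) field and "Op" the AWS DMS operation flag ("I", "U" or "D").
Record = Dict[str, object]


def apply_dms_batch(table: Dict[object, Record], batch: List[Record]) -> Dict[object, Record]:
    """Return the table state expected after applying one batch of DMS changes.

    Within the batch, the record with the highest "ts" wins for each key.
    If the winning record has Op == "D" the key is removed; otherwise the
    record inserts or replaces the stored row.
    """
    latest: Dict[object, Record] = {}
    for rec in batch:
        key = rec["id"]
        if key not in latest or rec["ts"] >= latest[key]["ts"]:
            latest[key] = rec

    result = dict(table)  # do not mutate the caller's table
    for key, rec in latest.items():
        if str(rec["Op"]).upper() == "D":
            result.pop(key, None)  # delete: drop the key if it exists
        else:
            result[key] = rec      # insert or update
    return result


if __name__ == "__main__":
    # Hypothetical data: initial load of two rows, then an update to ID=1
    # and a delete of ID=2 in the second batch.
    table = apply_dms_batch({}, [
        {"id": 1, "name": "a", "ts": 1, "Op": "I"},
        {"id": 2, "name": "b", "ts": 1, "Op": "I"},
    ])
    table = apply_dms_batch(table, [
        {"id": 1, "name": "a2", "ts": 2, "Op": "U"},
        {"id": 2, "name": "b", "ts": 2, "Op": "D"},
    ])
    print(sorted(table))  # [1]
```

In this model, only ID=1 remains after the second batch. That matches what the COW table shows and what the MOR table would be expected to show once the delete is applied.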