Tandoy opened a new issue #3205: URL: https://github.com/apache/hudi/issues/3205

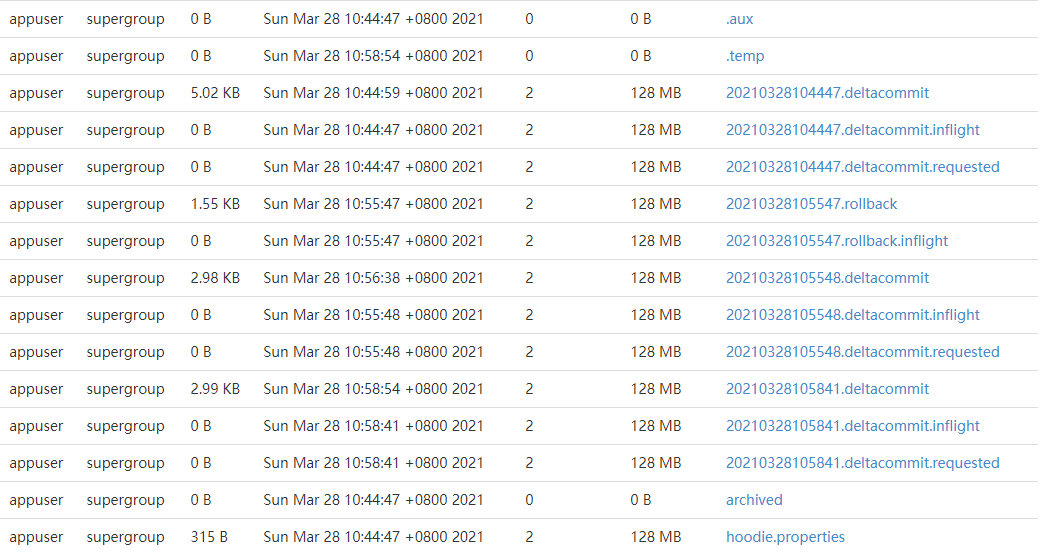

**Steps to reproduce the behavior:**

```shell
spark-submit \
  --master yarn \
  --deploy-mode cluster \
  --conf spark.task.cpus=1 \
  --conf spark.executor.cores=1 \
  --class org.apache.hudi.utilities.HoodieClusteringJob \
  `ls /home/appuser/tangzhi/hudi-spark/hudi-sparkSql/packaging/hudi-utilities-bundle/target/hudi-utilities-bundle_2.11-0.9.0-SNAPSHOT.jar` \
  --schedule \
  --base-path hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_test_kafka \
  --table-name hudi_test_kafka \
  --spark-memory 1G
```

**Expected behavior:**

HoodieClusteringJob clusters the Hudi MOR table to improve query speed.

**Environment Description:**

* Hudi version : master
* Spark version : 2.4.0.cloudera2
* Hadoop version : 2.6.0-cdh5.13.3
* Hive version : 1.1.0-cdh5.13.3
* Storage (HDFS/S3/GCS..) : HDFS
* Running on Docker? (yes/no) : no

**Stacktrace:**

No exception stack trace is printed, but clustering fails:

```
21/06/30 17:42:51 INFO javalin.Javalin: Starting Javalin ...
21/06/30 17:42:51 INFO javalin.Javalin: Listening on http://localhost:36337/
21/06/30 17:42:51 INFO javalin.Javalin: Javalin started in 204ms \o/
21/06/30 17:42:51 INFO service.TimelineService: Starting Timeline server on port :36337
21/06/30 17:42:51 INFO embedded.EmbeddedTimelineService: Started embedded timeline server at dxbigdata103:36337
21/06/30 17:42:51 INFO client.AbstractHoodieWriteClient: Scheduling table service CLUSTER
21/06/30 17:42:51 INFO client.AbstractHoodieWriteClient: Scheduling clustering at instant time :20210630174251
21/06/30 17:42:51 INFO table.HoodieTableMetaClient: Loading HoodieTableMetaClient from hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_on_flinksql2
21/06/30 17:42:51 INFO fs.FSUtils: Hadoop Configuration: fs.defaultFS: [hdfs://dxbigdata101:8020], Config:[Configuration: core-default.xml, core-site.xml, yarn-default.xml, yarn-site.xml, mapred-default.xml, mapred-site.xml, hdfs-default.xml, hdfs-site.xml, __spark_hadoop_conf__.xml], FileSystem: [DFS[DFSClient[clientName=DFSClient_NONMAPREDUCE_-45629993_1, ugi=appuser (auth:SIMPLE)]]]
21/06/30 17:42:51 INFO table.HoodieTableConfig: Loading table properties from hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_on_flinksql2/.hoodie/hoodie.properties
21/06/30 17:42:51 INFO table.HoodieTableMetaClient: Finished Loading Table of type MERGE_ON_READ(version=1, baseFileFormat=PARQUET) from hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_on_flinksql2
21/06/30 17:42:51 INFO table.HoodieTableMetaClient: Loading Active commit timeline for hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_on_flinksql2
21/06/30 17:42:51 INFO timeline.HoodieActiveTimeline: Loaded instants [[20210328104447__deltacommit__COMPLETED], [20210328105547__rollback__COMPLETED], [20210328105548__deltacommit__COMPLETED], [20210328105841__deltacommit__COMPLETED]]
21/06/30 17:42:51 INFO view.FileSystemViewManager: Creating View Manager with storage type :REMOTE_FIRST
21/06/30 17:42:51 INFO view.FileSystemViewManager: Creating remote first table view
21/06/30 17:42:51 INFO cluster.SparkClusteringPlanActionExecutor: Checking if clustering needs to be run on hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_on_flinksql2
21/06/30 17:42:51 INFO cluster.SparkClusteringPlanActionExecutor: Not scheduling clustering as only 3 commits was found since last clustering Optional.empty. Waiting for 4
21/06/30 17:42:51 ERROR utilities.HoodieClusteringJob: Clustering with basePath: hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_on_flinksql2, tableName: hudi_on_flinksql2, runSchedule: true failed
21/06/30 17:42:51 INFO server.AbstractConnector: Stopped Spark@76a15757{HTTP/1.1,[http/1.1]}{0.0.0.0:0}
21/06/30 17:42:51 INFO ui.SparkUI: Stopped Spark web UI at http://dxbigdata103:35949
21/06/30 17:42:51 INFO yarn.YarnAllocator: Driver requested a total number of 0 executor(s).
21/06/30 17:42:51 INFO cluster.YarnClusterSchedulerBackend: Shutting down all executors
21/06/30 17:42:51 INFO cluster.YarnSchedulerBackend$YarnDriverEndpoint: Asking each executor to shut down
21/06/30 17:42:51 INFO cluster.SchedulerExtensionServices: Stopping SchedulerExtensionServices (serviceOption=None, services=List(), started=false)
21/06/30 17:42:51 INFO spark.MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped!
21/06/30 17:42:51 INFO memory.MemoryStore: MemoryStore cleared
21/06/30 17:42:51 INFO storage.BlockManager: BlockManager stopped
21/06/30 17:42:51 INFO storage.BlockManagerMaster: BlockManagerMaster stopped
21/06/30 17:42:51 INFO scheduler.OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped!
21/06/30 17:42:51 INFO spark.SparkContext: Successfully stopped SparkContext
21/06/30 17:42:51 INFO yarn.ApplicationMaster: Final app status: SUCCEEDED, exitCode: 0
21/06/30 17:42:51 INFO yarn.ApplicationMaster: Unregistering ApplicationMaster with SUCCEEDED
21/06/30 17:42:51 INFO impl.AMRMClientImpl: Waiting for application to be successfully unregistered.
```

**Additional context:**

No replace commit file is generated.
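The decisive line in the log is `Not scheduling clustering as only 3 commits was found since last clustering Optional.empty. Waiting for 4`. The timeline holds three completed delta commits plus one rollback and no earlier replace commit, so no clustering plan is created and HoodieClusteringJob then logs the schedule as failed. Below is a minimal bash sketch of that comparison, not Hudi's own code. It assumes the counted instants are completed `commit`, `deltacommit` and `replacecommit` actions newer than the last completed `replacecommit` (rollbacks excluded), and that the threshold of 4 corresponds to `hoodie.clustering.inline.max.commits`. The function name `should_schedule_clustering` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of the scheduling check reported by SparkClusteringPlanActionExecutor.
# Instants use the "<timestamp>__<action>__<state>" form printed in the "Loaded instants" log line.
# Assumption: the threshold (first argument) plays the role of hoodie.clustering.inline.max.commits.
should_schedule_clustering() {
  local max_commits=$1; shift
  local last_clustering="0" instant ts rest action state count=0
  # Pass 1: timestamp of the latest completed replacecommit; "0" if none (Optional.empty in the log).
  for instant in "$@"; do
    ts=${instant%%__*}; rest=${instant#*__}; action=${rest%%__*}; state=${instant##*__}
    if [[ $action == replacecommit && $state == COMPLETED && $ts > $last_clustering ]]; then
      last_clustering=$ts
    fi
  done
  # Pass 2: count completed commit-type instants strictly after it (rollbacks are skipped).
  for instant in "$@"; do
    ts=${instant%%__*}; rest=${instant#*__}; action=${rest%%__*}; state=${instant##*__}
    case $action in
      commit|deltacommit|replacecommit)
        if [[ $state == COMPLETED && $ts > $last_clustering ]]; then
          count=$((count + 1))
        fi ;;
    esac
  done
  if (( count < max_commits )); then
    echo "skip: only $count commits since last clustering, waiting for $max_commits"
    return 1
  fi
  echo "schedule: $count commits since last clustering"
}

# The four instants from the "Loaded instants" line above, with the logged threshold of 4.
should_schedule_clustering 4 \
  20210328104447__deltacommit__COMPLETED \
  20210328105547__rollback__COMPLETED \
  20210328105548__deltacommit__COMPLETED \
  20210328105841__deltacommit__COMPLETED \
  || echo "(no clustering plan created)"
```

With these inputs the sketch prints `skip: only 3 commits since last clustering, waiting for 4`, mirroring the Hudi message; with a threshold of 3 it would print `schedule: 3 commits since last clustering` instead.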