Tandoy opened a new issue #3218: URL: https://github.com/apache/hudi/issues/3218

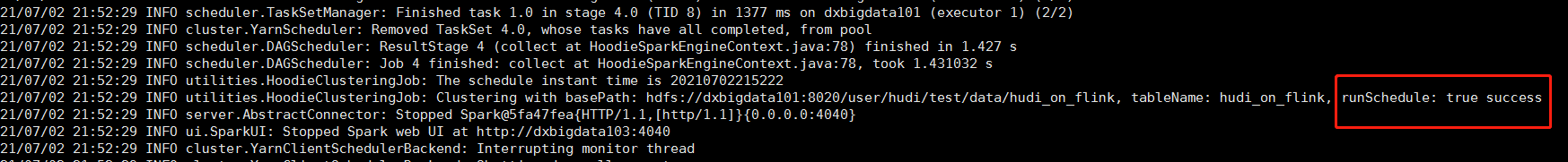

**Steps to reproduce the behavior:**

    spark2-submit \
    --master yarn \
    --deploy-mode client \
    --conf spark.task.cpus=1 \
    --conf spark.executor.cores=1 \
    --class org.apache.hudi.utilities.HoodieClusteringJob ls /home/appuser/tangzhi/hudi-spark/hudi-sparkSql/packaging/hudi-utilities-bundle/target/hudi-utilities-bundle_2.11-0.9.0-SNAPSHOT.jar \
    --schedule \
    --base-path hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_on_flink \
    --table-name hudi_on_flink \
    --spark-memory 1G

**Expected behavior:**

Use HoodieClusteringJob to cluster the Hudi COW table to improve query speed.

**Environment Description:**

* Hudi version: master
* Spark version: 2.4.0.cloudera2
* Hadoop version: 2.6.0-cdh5.13.3
* Hive version: 1.1.0-cdh5.13.3
* Storage (HDFS/S3/GCS..): HDFS
* Running on Docker? (yes/no): no

**Additional context:**

The log shows that clustering succeeded, but only the replacecommit.requested file was generated; the .commit file was not.