abdulmuqeeth commented on issue #3077: URL: https://github.com/apache/hudi/issues/3077#issuecomment-876040512

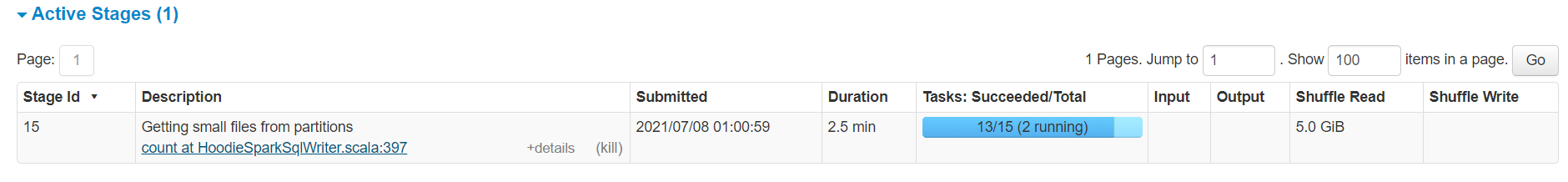

I have a similar observation. I did an initial load of 300M rows into a COW Hudi table and am now upserting batches of 12M rows, which are mostly new data but occasionally contain a couple thousand updates. The upsert sometimes takes 8 minutes and sometimes about 25 minutes. The stage responsible for the extra time is **Getting small files from partitions**. Sometimes this stage runs with 12-15 tasks and creates 12-15 output files (this run is quick, but it doesn't obey hoodie.parquet.max.file.size or hoodie.parquet.small.file.limit), and sometimes it runs with just 1 task and is very slow. I've tried it with the same volume of data and the time it takes still varies greatly. Any idea how to control its parallelism? Ideally I would want 2-3 tasks, to avoid creating a lot of small files if it cannot compact them.
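For reference, here is a minimal sketch of how the settings mentioned above could be collected for an upsert. The helper name `build_upsert_options`, the table name, and the field names are hypothetical. The byte values are the documented defaults as I understand them, and the parallelism of 3 is only illustrative. The `hoodie.upsert.shuffle.parallelism` key is a real write option, but whether it affects the **Getting small files from partitions** stage is exactly the open question here, so treat it as something to experiment with rather than a confirmed fix.

```python
def build_upsert_options(table_name, record_key, precombine_field,
                         max_file_bytes=120 * 1024 * 1024,
                         small_file_limit_bytes=100 * 1024 * 1024,
                         upsert_parallelism=3):
    """Build Hudi write options for a COW upsert (hypothetical helper).

    All values are returned as strings so the dict can be passed straight
    to Spark's DataFrameWriter.options(**opts).
    """
    # A small-file limit above the max file size would make every file "small".
    if small_file_limit_bytes > max_file_bytes:
        raise ValueError("small file limit must not exceed max file size")
    return {
        "hoodie.table.name": table_name,
        "hoodie.datasource.write.table.type": "COPY_ON_WRITE",
        "hoodie.datasource.write.operation": "upsert",
        "hoodie.datasource.write.recordkey.field": record_key,
        "hoodie.datasource.write.precombine.field": precombine_field,
        # File-sizing settings named in the comment above.
        "hoodie.parquet.max.file.size": str(max_file_bytes),
        "hoodie.parquet.small.file.limit": str(small_file_limit_bytes),
        # Assumption: may or may not influence the small-files stage.
        "hoodie.upsert.shuffle.parallelism": str(upsert_parallelism),
    }


# Example with made-up table and column names.
opts = build_upsert_options("events", "event_id", "updated_at")
```

With a Spark session and the Hudi bundle on the classpath, the dict would typically be applied as `df.write.format("hudi").options(**opts).mode("append").save(base_path)`, where `df` and `base_path` are placeholders for the batch DataFrame and the table location.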