This is an automated email from the ASF dual-hosted git repository.

rong pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/iotdb.git

The following commit(s) were added to refs/heads/master by this push:

new fcd7824 [IOTDB-2144][Metric] Collect IoTDB Runtime Metrics (#4573)

fcd7824 is described below

commit fcd782403eb0dbb56c61488051559acab48dccb1

Author: xinzhongtianxia <[email protected]>

AuthorDate: Wed Dec 22 16:03:36 2021 +0800

[IOTDB-2144][Metric] Collect IoTDB Runtime Metrics (#4573)

Co-authored-by: 还真 <[email protected]>

---

cluster/src/assembly/cluster.xml | 4 +

.../log/manage/PartitionedSnapshotLogManager.java | 15 ++

.../iotdb/cluster/server/ClusterRPCService.java | 8 +-

.../server/handlers/caller/ElectionHandler.java | 17 ++

.../cluster/utils/nodetool/ClusterMonitor.java | 83 ++++++

.../iotdb/cluster/server/member/BaseMember.java | 2 +

docs/UserGuide/System-Tools/Metric-Tool.md | 288 +++++++++++++++++++--

docs/zh/UserGuide/System-Tools/Metric-Tool.md | 280 ++++++++++++++++++--

.../dropwizard/DropwizardMetricManagerTest.java | 1 +

.../main/assembly/resources/conf/iotdb-metric.yml | 1 +

.../org/apache/iotdb/metrics/MetricService.java | 6 +-

.../apache/iotdb/metrics/config/MetricConfig.java | 3 +-

.../metrics/config/MetricConfigDescriptor.java | 12 +-

.../iotdb/metrics/utils/PredefinedMetric.java | 3 +-

.../micrometer/MicrometerMetricManager.java | 11 +

.../reporter/MicrometerPrometheusReporter.java | 27 +-

.../micrometer/MicrometerMetricManagerTest.java | 1 +

.../db/concurrent/IoTDBThreadPoolFactory.java | 10 +-

.../apache/iotdb/db/engine/cache/ChunkCache.java | 16 ++

.../db/engine/cache/TimeSeriesMetadataCache.java | 46 +++-

.../engine/compaction/CompactionTaskManager.java | 51 ++++

.../compaction/task/AbstractCompactionTask.java | 18 ++

.../apache/iotdb/db/engine/flush/FlushManager.java | 28 ++

.../iotdb/db/engine/flush/MemTableFlushTask.java | 16 ++

.../iotdb/db/engine/memtable/AbstractMemTable.java | 42 ++-

.../engine/storagegroup/StorageGroupProcessor.java | 14 +

.../engine/storagegroup/TsFileProcessorInfo.java | 23 ++

.../org/apache/iotdb/db/metadata/MManager.java | 60 +++++

.../iotdb/db/query/pool/QueryTaskPoolManager.java | 28 ++

.../java/org/apache/iotdb/db/service/IoTDB.java | 2 +-

.../org/apache/iotdb/db/service/RPCService.java | 8 +-

.../apache/iotdb/db/service/metrics/Metric.java | 25 +-

.../iotdb/db/service/metrics/MetricsService.java | 112 +++++++-

.../org/apache/iotdb/db/service/metrics/Tag.java | 15 +-

.../db/service/thrift/ProcessorWithMetrics.java | 70 +++++

.../db/service/thrift/impl/TSServiceImpl.java | 8 +-

.../java/org/apache/iotdb/db/utils/FileUtils.java | 24 ++

37 files changed, 1277 insertions(+), 101 deletions(-)

diff --git a/cluster/src/assembly/cluster.xml b/cluster/src/assembly/cluster.xml

index 66b9e38..7025a3e 100644

--- a/cluster/src/assembly/cluster.xml

+++ b/cluster/src/assembly/cluster.xml

@@ -27,5 +27,9 @@

<directory>${maven.multiModuleProjectDirectory}/server/src/assembly/resources/tools</directory>

<outputDirectory>${file.separator}tools</outputDirectory>

</fileSet>

+ <fileSet>

+

<directory>${maven.multiModuleProjectDirectory}/metrics/interface/src/main/assembly/resources/conf</directory>

+ <outputDirectory>conf</outputDirectory>

+ </fileSet>

</fileSets>

</assembly>

diff --git

a/cluster/src/main/java/org/apache/iotdb/cluster/log/manage/PartitionedSnapshotLogManager.java

b/cluster/src/main/java/org/apache/iotdb/cluster/log/manage/PartitionedSnapshotLogManager.java

index 56713d1..3edde08 100644

---

a/cluster/src/main/java/org/apache/iotdb/cluster/log/manage/PartitionedSnapshotLogManager.java

+++

b/cluster/src/main/java/org/apache/iotdb/cluster/log/manage/PartitionedSnapshotLogManager.java

@@ -31,6 +31,10 @@ import org.apache.iotdb.cluster.rpc.thrift.Node;

import org.apache.iotdb.cluster.server.member.DataGroupMember;

import org.apache.iotdb.db.metadata.path.PartialPath;

import org.apache.iotdb.db.service.IoTDB;

+import org.apache.iotdb.db.service.metrics.Metric;

+import org.apache.iotdb.db.service.metrics.MetricsService;

+import org.apache.iotdb.db.service.metrics.Tag;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

import org.apache.iotdb.tsfile.write.schema.TimeseriesSchema;

import org.slf4j.Logger;

@@ -77,6 +81,17 @@ public abstract class PartitionedSnapshotLogManager<T

extends Snapshot> extends

this.factory = factory;

this.thisNode = thisNode;

this.dataGroupMember = dataGroupMember;

+

+ if

(MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ MetricsService.getInstance()

+ .getMetricManager()

+ .getOrCreateAutoGauge(

+ Metric.CLUSTER_UNCOMMITTED_LOG.toString(),

+ getUnCommittedEntryManager().getAllEntries(),

+ List::size,

+ Tag.NAME.toString(),

+ thisNode.internalIp + "_" + dataGroupMember.getName());

+ }

}

public void takeSnapshotForSpecificSlots(List<Integer> requiredSlots,

boolean needLeader)
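
Note on the hunk above: `getOrCreateAutoGauge` is given an object and a function (`List::size`), so the gauge value can be read from the object when metrics are reported instead of being pushed on every change. The following is a minimal sketch of that pattern only. `AutoGaugeSketch` and `value()` are hypothetical names for illustration, and nothing here is taken from the IoTDB metric manager implementation.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.ToLongFunction;

// Hypothetical illustration of an "auto gauge": the value is computed on read.
final class AutoGaugeSketch<T> {
  private final T target;
  private final ToLongFunction<T> reader;

  AutoGaugeSketch(T target, ToLongFunction<T> reader) {
    this.target = target;
    this.reader = reader;
  }

  // Samples the tracked object at the moment the gauge is read.
  long value() {
    return reader.applyAsLong(target);
  }

  public static void main(String[] args) {
    List<String> uncommitted = new ArrayList<>();
    AutoGaugeSketch<List<String>> gauge = new AutoGaugeSketch<>(uncommitted, List::size);
    uncommitted.add("entry-1");
    uncommitted.add("entry-2");
    System.out.println(gauge.value()); // prints 2
  }
}
```

A gauge of this kind only stays meaningful if the registered object is the live collection rather than a copy taken at registration time.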

diff --git

a/cluster/src/main/java/org/apache/iotdb/cluster/server/ClusterRPCService.java

b/cluster/src/main/java/org/apache/iotdb/cluster/server/ClusterRPCService.java

index 22ed902..f7c5e95 100644

---

a/cluster/src/main/java/org/apache/iotdb/cluster/server/ClusterRPCService.java

+++

b/cluster/src/main/java/org/apache/iotdb/cluster/server/ClusterRPCService.java

@@ -25,9 +25,11 @@ import org.apache.iotdb.db.conf.IoTDBConfig;

import org.apache.iotdb.db.conf.IoTDBDescriptor;

import org.apache.iotdb.db.exception.runtime.RPCServiceException;

import org.apache.iotdb.db.service.ServiceType;

+import org.apache.iotdb.db.service.thrift.ProcessorWithMetrics;

import org.apache.iotdb.db.service.thrift.ThriftService;

import org.apache.iotdb.db.service.thrift.ThriftServiceThread;

import org.apache.iotdb.db.service.thrift.handler.RPCServiceThriftHandler;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

import org.apache.iotdb.service.rpc.thrift.TSIService.Processor;

public class ClusterRPCService extends ThriftService implements

ClusterRPCServiceMBean {

@@ -57,7 +59,11 @@ public class ClusterRPCService extends ThriftService

implements ClusterRPCServic

if (impl == null) {

throw new InstantiationException("ClusterTSServiceImpl is null");

}

- processor = new Processor<>(impl);

+ if

(MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ processor = new ProcessorWithMetrics(impl);

+ } else {

+ processor = new Processor<>(impl);

+ }

}

@Override

diff --git

a/cluster/src/main/java/org/apache/iotdb/cluster/server/handlers/caller/ElectionHandler.java

b/cluster/src/main/java/org/apache/iotdb/cluster/server/handlers/caller/ElectionHandler.java

index 6190d20..229673b 100644

---

a/cluster/src/main/java/org/apache/iotdb/cluster/server/handlers/caller/ElectionHandler.java

+++

b/cluster/src/main/java/org/apache/iotdb/cluster/server/handlers/caller/ElectionHandler.java

@@ -21,6 +21,10 @@ package org.apache.iotdb.cluster.server.handlers.caller;

import org.apache.iotdb.cluster.rpc.thrift.Node;

import org.apache.iotdb.cluster.server.member.RaftMember;

+import org.apache.iotdb.db.service.metrics.Metric;

+import org.apache.iotdb.db.service.metrics.MetricsService;

+import org.apache.iotdb.db.service.metrics.Tag;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

import org.apache.thrift.async.AsyncMethodCallback;

import org.slf4j.Logger;

@@ -73,6 +77,7 @@ public class ElectionHandler implements

AsyncMethodCallback<Long> {

@Override

public void onComplete(Long resp) {

long voterResp = resp;

+ String result = "fail";

synchronized (raftMember.getTerm()) {

if (terminated.get()) {

// a voter has rejected this election, which means the term or the log

id falls behind

@@ -98,6 +103,7 @@ public class ElectionHandler implements

AsyncMethodCallback<Long> {

terminated.set(true);

raftMember.getTerm().notifyAll();

raftMember.onElectionWins();

+ result = "win";

logger.info("{}: Election {} is won", memberName, currTerm);

}

// still need more votes

@@ -124,6 +130,17 @@ public class ElectionHandler implements

AsyncMethodCallback<Long> {

}

}

}

+ if

(MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ MetricsService.getInstance()

+ .getMetricManager()

+ .count(

+ 1,

+ Metric.CLUSTER_ELECT.toString(),

+ Tag.NAME.toString(),

+ raftMember.getThisNode().internalIp,

+ Tag.STATUS.toString(),

+ result);

+ }

}

@Override
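
Note on the hunk above: the election counter is keyed by a metric name plus tag key-value pairs (`name`, `status`), which is why the tag arguments must come in even numbers. A minimal sketch of a tagged counter under that assumption is given below. `TaggedCounterSketch`, `key`, `count`, and `get` are hypothetical names and the metric string `cluster_elect` is illustrative; none of this is the IoTDB `MetricManager` API.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.LongAdder;

// Hypothetical illustration of counters identified by name plus tag pairs.
final class TaggedCounterSketch {
  private final Map<String, LongAdder> counters = new ConcurrentHashMap<>();

  // Builds an identifier such as cluster_elect{name="127.0.0.1",status="win",}
  static String key(String metric, String... tags) {
    if (tags.length % 2 != 0) {
      throw new IllegalArgumentException("tags must be key-value pairs");
    }
    StringBuilder sb = new StringBuilder(metric).append('{');
    for (int i = 0; i < tags.length; i += 2) {
      sb.append(tags[i]).append("=\"").append(tags[i + 1]).append("\",");
    }
    return sb.append('}').toString();
  }

  // Adds delta to the counter for this name and tag combination.
  void count(long delta, String metric, String... tags) {
    counters.computeIfAbsent(key(metric, tags), k -> new LongAdder()).add(delta);
  }

  // Returns the current total, or 0 if the counter was never incremented.
  long get(String metric, String... tags) {
    LongAdder adder = counters.get(key(metric, tags));
    return adder == null ? 0L : adder.sum();
  }

  public static void main(String[] args) {
    TaggedCounterSketch registry = new TaggedCounterSketch();
    registry.count(1, "cluster_elect", "name", "127.0.0.1", "status", "win");
    registry.count(1, "cluster_elect", "name", "127.0.0.1", "status", "fail");
    registry.count(1, "cluster_elect", "name", "127.0.0.1", "status", "fail");
    System.out.println(registry.get("cluster_elect", "name", "127.0.0.1", "status", "fail")); // prints 2
  }
}
```

Each distinct tag combination gets its own counter, so `status="win"` and `status="fail"` are tracked separately for the same node.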

diff --git

a/cluster/src/main/java/org/apache/iotdb/cluster/utils/nodetool/ClusterMonitor.java

b/cluster/src/main/java/org/apache/iotdb/cluster/utils/nodetool/ClusterMonitor.java

index fa5c5d2..0768666 100644

---

a/cluster/src/main/java/org/apache/iotdb/cluster/utils/nodetool/ClusterMonitor.java

+++

b/cluster/src/main/java/org/apache/iotdb/cluster/utils/nodetool/ClusterMonitor.java

@@ -19,6 +19,7 @@

package org.apache.iotdb.cluster.utils.nodetool;

import org.apache.iotdb.cluster.ClusterIoTDB;

+import org.apache.iotdb.cluster.client.sync.SyncMetaClient;

import org.apache.iotdb.cluster.config.ClusterConstant;

import org.apache.iotdb.cluster.config.ClusterDescriptor;

import org.apache.iotdb.cluster.partition.PartitionGroup;

@@ -30,6 +31,7 @@ import org.apache.iotdb.cluster.server.NodeCharacter;

import org.apache.iotdb.cluster.server.member.DataGroupMember;

import org.apache.iotdb.cluster.server.member.MetaGroupMember;

import org.apache.iotdb.cluster.server.monitor.Timer;

+import org.apache.iotdb.cluster.utils.ClientUtils;

import org.apache.iotdb.cluster.utils.nodetool.function.NodeToolCmd;

import org.apache.iotdb.db.conf.IoTDBConstant;

import org.apache.iotdb.db.exception.StartupException;

@@ -38,9 +40,14 @@ import org.apache.iotdb.db.metadata.path.PartialPath;

import org.apache.iotdb.db.service.IService;

import org.apache.iotdb.db.service.JMXService;

import org.apache.iotdb.db.service.ServiceType;

+import org.apache.iotdb.db.service.metrics.Metric;

+import org.apache.iotdb.db.service.metrics.MetricsService;

+import org.apache.iotdb.db.service.metrics.Tag;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

import org.apache.iotdb.tsfile.utils.Pair;

import org.apache.commons.collections4.map.MultiKeyMap;

+import org.apache.thrift.TException;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

@@ -48,6 +55,8 @@ import java.util.ArrayList;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

+import java.util.concurrent.Executors;

+import java.util.concurrent.TimeUnit;

import static

org.apache.iotdb.cluster.utils.nodetool.function.NodeToolCmd.BUILDING_CLUSTER_INFO;

import static

org.apache.iotdb.cluster.utils.nodetool.function.NodeToolCmd.META_LEADER_UNKNOWN_INFO;

@@ -68,6 +77,9 @@ public class ClusterMonitor implements ClusterMonitorMBean,

IService {

public void start() throws StartupException {

try {

JMXService.registerMBean(INSTANCE, mbeanName);

+ if

(MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ startCollectClusterStatus();

+ }

} catch (Exception e) {

String errorMessage =

String.format("Failed to start %s because of %s",

this.getID().getName(), e.getMessage());

@@ -75,6 +87,77 @@ public class ClusterMonitor implements ClusterMonitorMBean,

IService {

}

}

+ private void startCollectClusterStatus() {

+ // monitor all nodes' live status

+ LOGGER.info("start metric node status and leader distribution");

+ Executors.newSingleThreadScheduledExecutor()

+ .scheduleAtFixedRate(

+ () -> {

+ MetaGroupMember metaGroupMember =

ClusterIoTDB.getInstance().getMetaGroupMember();

+ if (metaGroupMember != null

+ &&

metaGroupMember.getLeader().equals(metaGroupMember.getThisNode())) {

+ metricNodeStatus(metaGroupMember);

+ metricLeaderDistribution(metaGroupMember);

+ }

+ },

+ 10L,

+ 10L,

+ TimeUnit.SECONDS);

+ }

+

+ private void metricLeaderDistribution(MetaGroupMember metaGroupMember) {

+ Map<Node, Integer> leaderCountMap = new HashMap<>();

+ ClusterIoTDB.getInstance()

+ .getDataGroupEngine()

+ .getHeaderGroupMap()

+ .forEach(

+ (header, dataGroupMember) -> {

+ Node leader = dataGroupMember.getLeader();

+ int delta = 1;

+ Integer count = leaderCountMap.getOrDefault(leader, 0);

+ leaderCountMap.put(leader, count + delta);

+ });

+ List<Node> ring = getRing();

+ for (Node node : ring) {

+ Integer count = leaderCountMap.getOrDefault(node, 0);

+ MetricsService.getInstance()

+ .getMetricManager()

+ .gauge(

+ count,

+ Metric.CLUSTER_NODE_LEADER_COUNT.toString(),

+ Tag.NAME.toString(),

+ node.internalIp);

+ }

+ }

+

+ private void metricNodeStatus(MetaGroupMember metaGroupMember) {

+ List<Node> ring = getRing();

+ for (Node node : ring) {

+ boolean isAlive = false;

+ if (node.equals(metaGroupMember.getThisNode())) {

+ isAlive = true;

+ }

+ SyncMetaClient client = (SyncMetaClient)

metaGroupMember.getSyncClient(node);

+ if (client != null) {

+ try {

+ client.checkAlive();

+ isAlive = true;

+ } catch (TException e) {

+ client.getInputProtocol().getTransport().close();

+ } finally {

+ ClientUtils.putBackSyncClient(client);

+ }

+ }

+ MetricsService.getInstance()

+ .getMetricManager()

+ .gauge(

+ isAlive ? 1 : 0,

+ Metric.CLUSTER_NODE_STATUS.toString(),

+ Tag.NAME.toString(),

+ node.internalIp);

+ }

+ }

+

@Override

public List<Pair<Node, NodeCharacter>> getMetaGroup() {

MetaGroupMember metaMember =

ClusterIoTDB.getInstance().getMetaGroupMember();
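
Note on `metricLeaderDistribution` above: it counts how many data groups each node currently leads and reports a value for every node in the ring, including 0 for nodes that lead nothing. The counting step can be sketched in isolation as follows, with plain strings standing in for `Node` objects. `LeaderCountSketch` and `countLeaders` are hypothetical names for illustration.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical illustration of counting data-group leaders per ring node.
final class LeaderCountSketch {

  // Returns one entry per ring node; nodes that lead no group map to 0.
  static Map<String, Integer> countLeaders(List<String> ring, List<String> groupLeaders) {
    Map<String, Integer> counts = new LinkedHashMap<>();
    for (String node : ring) {
      counts.put(node, 0);
    }
    for (String leader : groupLeaders) {
      // Leaders outside the ring (or unknown leaders) are ignored.
      counts.computeIfPresent(leader, (node, count) -> count + 1);
    }
    return counts;
  }

  public static void main(String[] args) {
    List<String> ring = Arrays.asList("10.0.0.1", "10.0.0.2", "10.0.0.3");
    List<String> leaders = Arrays.asList("10.0.0.1", "10.0.0.3", "10.0.0.1");
    System.out.println(countLeaders(ring, leaders)); // prints {10.0.0.1=2, 10.0.0.2=0, 10.0.0.3=1}
  }
}
```

Reporting the zero entries matters for dashboards: a node that loses all leaderships shows a drop to 0 rather than a series that silently stops updating.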

diff --git

a/cluster/src/test/java/org/apache/iotdb/cluster/server/member/BaseMember.java

b/cluster/src/test/java/org/apache/iotdb/cluster/server/member/BaseMember.java

index d6ad1b7..a918a58 100644

---

a/cluster/src/test/java/org/apache/iotdb/cluster/server/member/BaseMember.java

+++

b/cluster/src/test/java/org/apache/iotdb/cluster/server/member/BaseMember.java

@@ -59,6 +59,7 @@ import org.apache.iotdb.db.service.IoTDB;

import org.apache.iotdb.db.service.RegisterManager;

import org.apache.iotdb.db.utils.EnvironmentUtils;

import org.apache.iotdb.db.utils.SchemaUtils;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

import org.apache.thrift.async.AsyncMethodCallback;

import org.junit.After;

@@ -111,6 +112,7 @@ public class BaseMember {

prevLeaderWait = RaftMember.getWaitLeaderTimeMs();

prevEnableWAL = IoTDBDescriptor.getInstance().getConfig().isEnableWal();

IoTDBDescriptor.getInstance().getConfig().setEnableWal(false);

+

MetricConfigDescriptor.getInstance().getMetricConfig().setEnableMetric(false);

RaftMember.setWaitLeaderTimeMs(10);

syncLeaderMaxWait = ClusterConstant.getSyncLeaderMaxWaitMs();

diff --git a/docs/UserGuide/System-Tools/Metric-Tool.md

b/docs/UserGuide/System-Tools/Metric-Tool.md

index 2844e96..ceba2f9 100644

--- a/docs/UserGuide/System-Tools/Metric-Tool.md

+++ b/docs/UserGuide/System-Tools/Metric-Tool.md

@@ -19,34 +19,268 @@

-->

-# Metric Tools

-IoTDB Server provides a monitoring module, which can introduce variable

monitoring where needed. Currently, it provides two monitoring methods, Jmx and

Prometheus, and can introduce 5 indicators such as counter. For more

instructions on the interface, please refer to <a href =

"https://github.com/apache/iotdb/tree/master/metrics">the metric part of the

description document</a>

+## What are metrics?

+While IoTDB is running, it continuously collects metrics that reflect the

current status of the system. These metrics provide useful information for

resolving system problems and detecting potential system risks.

-## use

+## When to use metrics?

-Step 1: Obtain IoTDB-server.

+Below are some typical application scenarios:

-Step 2: Edit the configuration file `iotdb-metric.yml`

+1. System is running slowly

-- You can choose to use the method of obtaining metrics and modify the

`metricReporterList`: optional parameters `jmx`, `prometheus`

-- You can select the type of monitoring framework and modify the

`monitortype`: optional parameters `dropwizard`, `micrometer`, and at the same

time introduce the dependency of the corresponding monitoring framework in the

IoTDB Server configuration file.

+ When the system is running slowly, we want as much detail as possible

about its running status, such as:

-The third step: bury the points in the corresponding position in the IoTDB

Server.

+ - JVM: Are there full GCs (FGC)? How long do they take? How much does memory

usage decrease after GC? Are there too many threads?

+ - System: Is the CPU usage too high? Is there a lot of disk I/O?

+ - Connections: How many connections are there at the moment?

+ - Interface: What are the TPS and latency of each interface?

+ - ThreadPool: Are there many pending tasks?

+ - Cache hit ratio

-- First obtain the corresponding monitoring manager through

`MetricsService.getInstance().getMetricManager()`, and then call the

corresponding method to obtain the corresponding indicator.

-- Afterwards, you can operate on the obtained indicators, and the monitoring

framework will automatically publish the indicators through the selected method.

-- It should be noted that all tags provided by the getOrCreate method should

be even numbers to form the corresponding key-value.

+2. No space left on device

-Step 3: Start IoTDB-server.

+ When we hit a "no space left on device" error, we want to know which

kind of data file has grown rapidly over the past hours.

-Step 4: View the specific indicator parameters, and you can view them

separately according to the selected method of obtaining the indicators, that

is, `jmx` or `prometheus`

+3. Is the system running abnormally?

-- For jmx, please check `org.apache.iotdb.metrics` after connecting

-- Perform the following configuration for prometheus (some parameters can be

adjusted by yourself to obtain monitoring data), It should be noted that if you

use docker to run iotdb-server, you need to use the `-p 9091:9091` parameter to

expose the corresponding port when using `docker run`.

+ We can use the count of error logs, the alive status of nodes in the cluster,

and similar metrics to determine whether the system is running abnormally.

+## Who will use metrics?

+

+Anyone who cares about the system's status, including but not limited to RD,

QA, SRE, and DBA, can use metrics to work more efficiently.

+

+## What metrics does IoTDB have?

+

+For now, we have provided some metrics for several core modules of IoTDB, and

more metrics will be added or updated along with the development of new

features and optimization or refactoring of architecture.

+

+### Key Concept

+

+Before going further, let's look at some key concepts about metrics.

+

+Every metric has two properties:

+

+- Metric Name

+

+ The name of the metric. For example, ```logback_events_total``` indicates

the total count of log events.

+

+- Tag

+

+ Each metric can have zero or more sub-classes (tags). In the same example,

the ```logback_events_total``` metric has a tag named ```level```, which

gives ```the total count of log events at the specific level```.

+

+### Data Format

+

+IoTDB provides metrics data both in JMX and Prometheus format. For JMX, you

can get these metrics via ```org.apache.iotdb.metrics```.

+

+Next, we will choose Prometheus format data as samples to describe each kind

of metric.

+

+### IoTDB Metrics

+

+### API

+

+| Metric | Tag | Description | Sample |

+| ------------------- | --------------------- | ---------------------------------------- | -------------------------------------------- |

+| entry_seconds_count | name="interface name" | The total request count of the interface | entry_seconds_count{name="openSession",} 1.0 |

+| entry_seconds_sum | name="interface name" | The total cost seconds of the interface | entry_seconds_sum{name="openSession",} 0.024 |

+| entry_seconds_max | name="interface name" | The max latency of the interface | entry_seconds_max{name="openSession",} 0.024 |

+| quantity_total | name="pointsIn" | The total points inserted into IoTDB | quantity_total{name="pointsIn",} 1.0 |

+

+### File

+

+| Metric | Tag | Description | Sample |

+| ---------- | -------------------- | ----------------------------------------------- | --------------------------- |

+| file_size | name="wal/seq/unseq" | The current file size of wal/seq/unseq in bytes | file_size{name="wal",} 67.0 |

+| file_count | name="wal/seq/unseq" | The current count of wal/seq/unseq files | file_count{name="seq",} 1.0 |

+

+### Flush

+

+| Metric | Tag | Description | Sample |

+| ----------------------- | ------------------------------------------- | ------------------------------------------------------------ | ------------------------------------------------------------ |

+| queue | name="flush",<br />status="running/waiting" | The current count of flush tasks in running and waiting status | queue{name="flush",status="waiting",} 0.0<br/>queue{name="flush",status="running",} 0.0 |

+| cost_task_seconds_count | name="flush" | The total count of flush tasks so far | cost_task_seconds_count{name="flush",} 1.0 |

+| cost_task_seconds_max | name="flush" | The duration in seconds of the longest flush task so far | cost_task_seconds_max{name="flush",} 0.363 |

+| cost_task_seconds_sum | name="flush" | The total time in seconds spent on all flush tasks so far | cost_task_seconds_sum{name="flush",} 0.363 |

+

+### Compaction

+

+| Metric | Tag | Description | Sample |

+| ----------------------- | ------------------------------------------------------------ | ------------------------------------------------------------ | ---------------------------------------------------- |

+| queue | name="compaction_inner/compaction_cross",<br />status="running/waiting" | The current count of compaction tasks in running and waiting status | queue{name="compaction_inner",status="waiting",} 0.0 |

+| cost_task_seconds_count | name="compaction" | The total count of compaction tasks so far | cost_task_seconds_count{name="compaction",} 1.0 |

+| cost_task_seconds_max | name="compaction" | The duration in seconds of the longest compaction task so far | cost_task_seconds_max{name="compaction",} 0.363 |

+| cost_task_seconds_sum | name="compaction" | The total time in seconds spent on all compaction tasks so far | cost_task_seconds_sum{name="compaction",} 0.363 |

+

+### Memory Usage

+

+| Metric | Tag | Description | Sample |

+| ------ | --------------------------------------- | ------------------------------------------------------------ | --------------------------------- |

+| mem | name="chunkMetaData/storageGroup/mtree" | Current memory size of chunkMetaData/storageGroup/mtree data in bytes | mem{name="chunkMetaData",} 2050.0 |

+

+### Cache Hit Ratio

+

+| Metric | Tag | Description | Sample |

+| --------- | --------------------------------------- | ------------------------------------------------------------ | --------------------------- |

+| cache_hit | name="chunk/timeSeriesMeta/bloomFilter" | Cache hit ratio of chunk/timeSeriesMeta and prevention ratio of bloom filter | cache_hit{name="chunk",} 80 |

+

+### Business Data

+

+| Metric | Tag | Description | Sample |

+| -------- | ------------------------------------- | ------------------------------------------------------------ | -------------------------------- |

+| quantity | name="timeSeries/storageGroup/device" | The current count of timeSeries/storageGroup/devices in IoTDB | quantity{name="timeSeries",} 1.0 |

+

+### Cluster

+

+| Metric | Tag | Description | Sample |

+| ------------------------- | ------------------------------- | ------------------------------------------------------------ | ------------------------------------------------------------ |

+| cluster_node_leader_count | name="{{ip}}" | The count of ```dataGroupLeader``` on each node, which reflects the distribution of leaders | cluster_node_leader_count{name="127.0.0.1",} 2.0 |

+| cluster_uncommitted_log | name="{{ip_datagroupHeader}}" | The count of ```uncommitted_log``` on each node in data groups it belongs to | cluster_uncommitted_log{name="127.0.0.1_Data-127.0.0.1-40010-raftId-0",} 0.0 |

+| cluster_node_status | name="{{ip}}" | The current node status, 1=online, 0=offline | cluster_node_status{name="127.0.0.1",} 1.0 |

+| cluster_elect_total | name="{{ip}}",status="fail/win" | The count and result (won or failed) of elections the node participated in. | cluster_elect_total{name="127.0.0.1",status="win",} 1.0 |

+

+### Log Events

+

+| Metric | Tag | Description | Sample |

+| -------------------- | -------------------------------------- | ------------------------------------------------------------ | --------------------------------------- |

+| logback_events_total | {level="trace/debug/info/warn/error",} | The count of trace/debug/info/warn/error log events till now | logback_events_total{level="warn",} 0.0 |

+

+### JVM

+

+#### Threads

+

+| Metric | Tag | Description | Sample |

+| -------------------------- | ------------------------------------------------------------ | ------------------------------------ | -------------------------------------------------- |

+| jvm_threads_live_threads | None | The current count of threads | jvm_threads_live_threads 25.0 |

+| jvm_threads_daemon_threads | None | The current count of daemon threads | jvm_threads_daemon_threads 12.0 |

+| jvm_threads_peak_threads | None | The max count of threads till now | jvm_threads_peak_threads 28.0 |

+| jvm_threads_states_threads | state="runnable/blocked/waiting/timed-waiting/new/terminated" | The count of threads in each status | jvm_threads_states_threads{state="runnable",} 10.0 |

+

+#### GC

+

+| Metric | Tag | Description | Sample |

+| ----------------------------------- | ------------------------------------------------------ | ------------------------------------------------------------ | ------------------------------------------------------------ |

+| jvm_gc_pause_seconds_count | action="end of major GC/end of minor GC",cause="xxxx" | The total count of YGC/FGC events and its cause | jvm_gc_pause_seconds_count{action="end of major GC",cause="Metadata GC Threshold",} 1.0 |

+| jvm_gc_pause_seconds_sum | action="end of major GC/end of minor GC",cause="xxxx" | The total cost seconds of YGC/FGC and its cause | jvm_gc_pause_seconds_sum{action="end of major GC",cause="Metadata GC Threshold",} 0.03 |

+| jvm_gc_pause_seconds_max | action="end of major GC",cause="Metadata GC Threshold" | The max cost seconds of YGC/FGC till now and its cause | jvm_gc_pause_seconds_max{action="end of major GC",cause="Metadata GC Threshold",} 0.0 |

+| jvm_gc_overhead_percent | None | An approximation of the percent of CPU time used by GC activities over the last lookback period or since monitoring began, whichever is shorter, in the range [0..1] | jvm_gc_overhead_percent 0.0 |

+| jvm_gc_memory_promoted_bytes_total | None | Count of positive increases in the size of the old generation memory pool before GC to after GC | jvm_gc_memory_promoted_bytes_total 8425512.0 |

+| jvm_gc_max_data_size_bytes | None | Max size of long-lived heap memory pool | jvm_gc_max_data_size_bytes 2.863661056E9 |

+| jvm_gc_live_data_size_bytes | None | Size of long-lived heap memory pool after reclamation | jvm_gc_live_data_size_bytes 8450088.0 |

+| jvm_gc_memory_allocated_bytes_total | None | Incremented for an increase in the size of the (young) heap memory pool after one GC to before the next | jvm_gc_memory_allocated_bytes_total 4.2979144E7 |

+

+#### Memory

+

+| Metric | Tag | Description | Sample |

+| ------------------------------- | ------------------------------- | ------------------------------------------------------------ | ------------------------------------------------------------ |

+| jvm_buffer_memory_used_bytes | id="direct/mapped" | An estimate of the memory that the Java virtual machine is using for this buffer pool | jvm_buffer_memory_used_bytes{id="direct",} 3.46728099E8 |

+| jvm_buffer_total_capacity_bytes | id="direct/mapped" | An estimate of the total capacity of the buffers in this pool | jvm_buffer_total_capacity_bytes{id="mapped",} 0.0 |

+| jvm_buffer_count_buffers | id="direct/mapped" | An estimate of the number of buffers in the pool | jvm_buffer_count_buffers{id="direct",} 183.0 |

+| jvm_memory_committed_bytes | {area="heap/nonheap",id="xxx",} | The amount of memory in bytes that is committed for the Java virtual machine to use | jvm_memory_committed_bytes{area="heap",id="Par Survivor Space",} 2.44252672E8<br/>jvm_memory_committed_bytes{area="nonheap",id="Metaspace",} 3.9051264E7<br/> |

+| jvm_memory_max_bytes | {area="heap/nonheap",id="xxx",} | The maximum amount of memory in bytes that can be used for memory management | jvm_memory_max_bytes{area="heap",id="Par Survivor Space",} 2.44252672E8<br/>jvm_memory_max_bytes{area="nonheap",id="Compressed Class Space",} 1.073741824E9 |

+| jvm_memory_used_bytes | {area="heap/nonheap",id="xxx",} | The amount of used memory | jvm_memory_used_bytes{area="heap",id="Par Eden Space",} 1.000128376E9<br/>jvm_memory_used_bytes{area="nonheap",id="Code Cache",} 2.9783808E7<br/> |

+

+#### Classes

+

+| Metric | Tag | Description | Sample |

+| ---------------------------------- | --------------------------------------------- | ------------------------------------------------------------ | ------------------------------------------------------------ |

+| jvm_classes_unloaded_classes_total | None | The total number of classes unloaded since the Java virtual machine has started execution | jvm_classes_unloaded_classes_total 680.0 |

+| jvm_classes_loaded_classes | None | The number of classes that are currently loaded in the Java virtual machine | jvm_classes_loaded_classes 5975.0 |

+| jvm_compilation_time_ms_total | {compiler="HotSpot 64-Bit Tiered Compilers",} | The approximate accumulated elapsed time spent in compilation | jvm_compilation_time_ms_total{compiler="HotSpot 64-Bit Tiered Compilers",} 107092.0 |

+

+If you want to add your own metrics to IoTDB, please see the [IoTDB Metric

Framework](https://github.com/apache/iotdb/tree/master/metrics) document.

+

+## How to get these metrics?

+

+Metrics collection is disabled by default; you need to enable it

in ```conf/iotdb-metric.yml```.

+

+### iotdb-metric.yml

+

+```yaml

+# The default value is false. Change it to true and start/restart your IoTDB server to get the metrics data.

+enableMetric: false

+

+# IoTDB provides metrics data both in JMX and Prometheus format.

+metricReporterList:

+ - jmx

+ - prometheus

+

+# You can choose the underlying implementation of the framework, dropwizard or micrometer; the latter is recommended.

+monitorType: micrometer

+

+# you can set the period time of push.

+# This param works only when monitorType=dropwizard

+pushPeriodInSecond: 5

+########################################################

+# #

+# if the reporter is prometheus, #

+# then the following must be set #

+# #

+########################################################

+prometheusReporterConfig:

+ prometheusExporterUrl: http://localhost

+

+ # From this port, you can get metrics data by http request

+ prometheusExporterPort: 9091

+```

+

+Then you can get metrics data as follows:

+

+1. Enable the metrics switch in ```iotdb-metric.yml```.

+2. Leave the other configuration parameters at their default values.

+3. Start/restart your IoTDB server/cluster.

+4. Open your browser or use the ```curl``` command to request

```http://server_ip:9091/metrics```. You will get metrics data like the

following:

+

+```

+# HELP file_count

+# TYPE file_count gauge

+file_count{name="wal",} 0.0

+file_count{name="unseq",} 0.0

+file_count{name="seq",} 2.0

+# HELP file_size

+# TYPE file_size gauge

+file_size{name="wal",} 0.0

+file_size{name="unseq",} 0.0

+file_size{name="seq",} 560.0

+# HELP queue

+# TYPE queue gauge

+queue{name="flush",status="waiting",} 0.0

+queue{name="flush",status="running",} 0.0

+# HELP quantity

+# TYPE quantity gauge

+quantity{name="timeSeries",} 1.0

+quantity{name="storageGroup",} 1.0

+quantity{name="device",} 1.0

+# HELP logback_events_total Number of error level events that made it to the logs

+# TYPE logback_events_total counter

+logback_events_total{level="warn",} 0.0

+logback_events_total{level="debug",} 2760.0

+logback_events_total{level="error",} 0.0

+logback_events_total{level="trace",} 0.0

+logback_events_total{level="info",} 71.0

+# HELP mem

+# TYPE mem gauge

+mem{name="storageGroup",} 0.0

+mem{name="mtree",} 1328.0

```
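
Each non-comment line in the output above has the shape `name{label="value",} value`. As a rough illustration of how a client could read such lines, the following Java sketch parses one line into its metric name, labels, and value. The class `MetricLineParser` and its nested `Sample` type are hypothetical names introduced here and are not part of IoTDB. The sketch only covers the shapes shown in this sample (no timestamps, no `+Inf` values), so treat it as a starting point rather than a complete parser.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper (not part of IoTDB): parses one line of Prometheus text output. */
public class MetricLineParser {

  /** One parsed sample: metric name, label pairs, and numeric value. */
  public static final class Sample {
    public final String name;
    public final Map<String, String> labels;
    public final double value;

    Sample(String name, Map<String, String> labels, double value) {
      this.name = name;
      this.labels = labels;
      this.value = value;
    }
  }

  // Metric name, optional {labels} section, whitespace, then the value.
  private static final Pattern SAMPLE =
      Pattern.compile("^([a-zA-Z_:][a-zA-Z0-9_:]*)(?:\\{(.*)\\})?\\s+(\\S+)$");

  // key="value" pairs; a trailing comma, as in {name="wal",}, is simply skipped.
  private static final Pattern LABEL =
      Pattern.compile("([a-zA-Z_][a-zA-Z0-9_]*)=\"((?:[^\"\\\\]|\\\\.)*)\"");

  /** Returns the parsed sample, or null for blank lines and # HELP / # TYPE comments. */
  public static Sample parse(String line) {
    String trimmed = line.trim();
    if (trimmed.isEmpty() || trimmed.startsWith("#")) {
      return null;
    }
    Matcher m = SAMPLE.matcher(trimmed);
    if (!m.matches()) {
      throw new IllegalArgumentException("Not a sample line: " + line);
    }
    Map<String, String> labels = new LinkedHashMap<>();
    if (m.group(2) != null) {
      Matcher lm = LABEL.matcher(m.group(2));
      while (lm.find()) {
        labels.put(lm.group(1), lm.group(2));
      }
    }
    return new Sample(m.group(1), labels, Double.parseDouble(m.group(3)));
  }

  public static void main(String[] args) {
    Sample s = parse("queue{name=\"flush\",status=\"waiting\",} 0.0");
    System.out.println(s.name + " " + s.labels + " " + s.value);
  }
}
```

A caller would typically split the HTTP response body on newlines, call `parse` on each line, and ignore the `null` results that correspond to `# HELP` and `# TYPE` comments.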

-job_name: push-metrics

+

+### Integrating with Prometheus and Grafana

+

+As described above, IoTDB provides metrics data in the standard Prometheus format, so it can be integrated with Prometheus and Grafana directly.

+

+The following picture describes the relationships among IoTDB, Prometheus and Grafana:

+

+

+

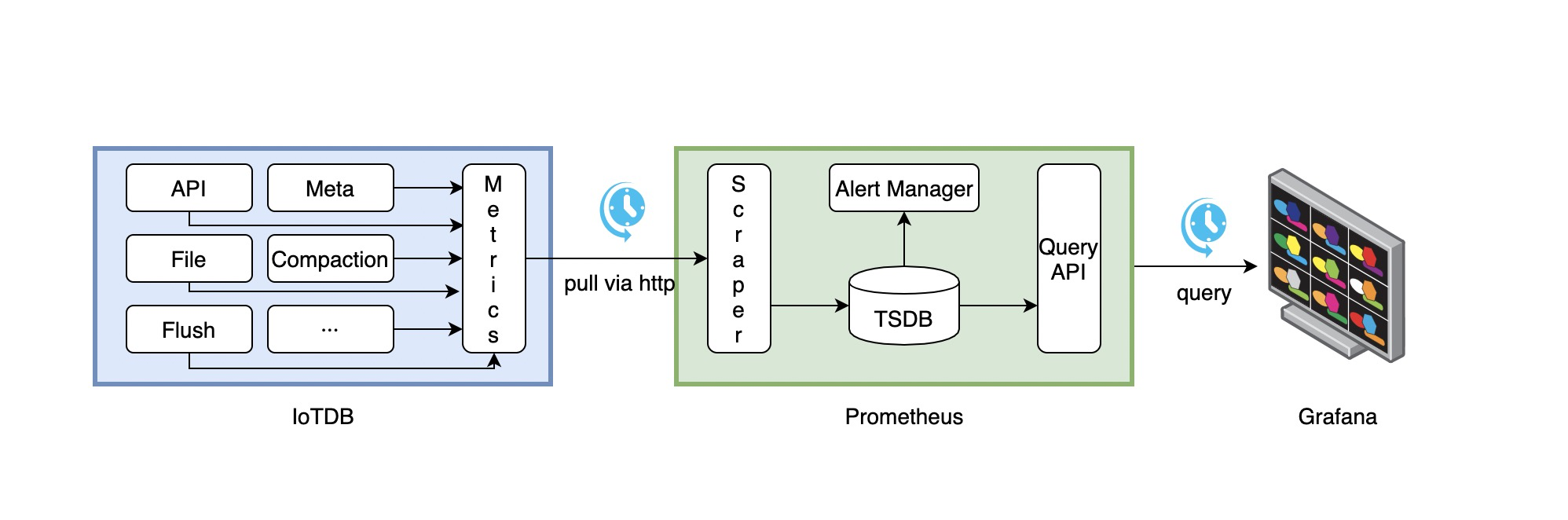

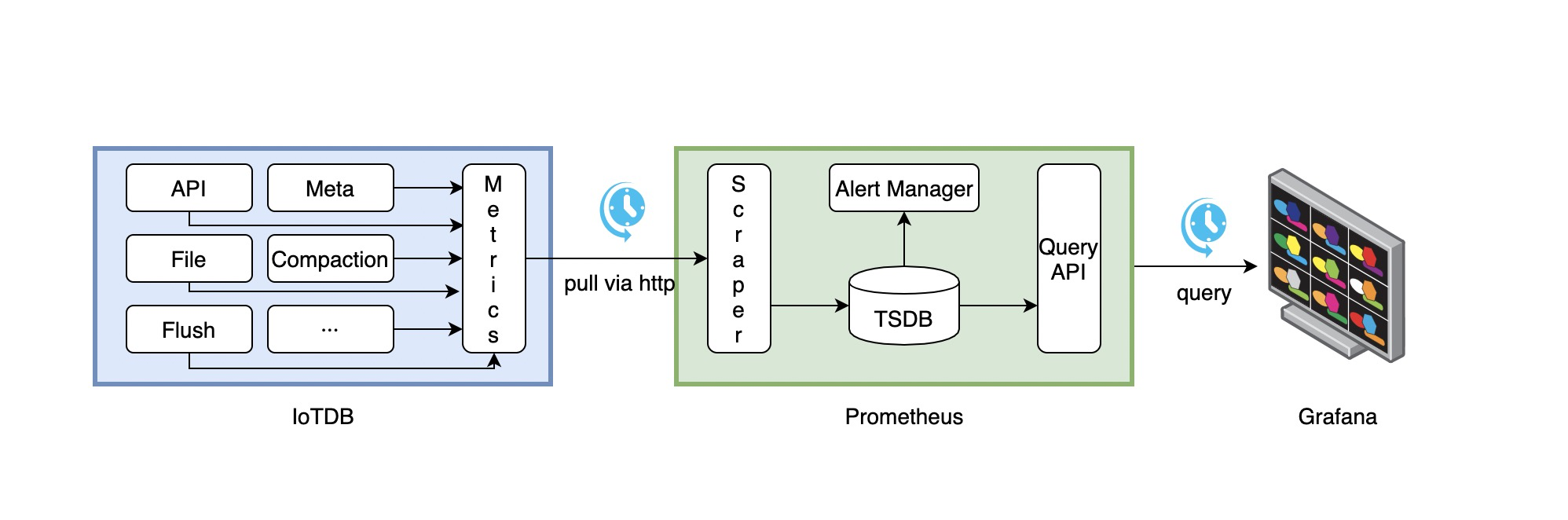

+1. While running, IoTDB continuously collects its metrics.

+2. Prometheus scrapes metrics from IoTDB at a fixed, configurable interval.

+3. Prometheus stores these metrics in its internal TSDB.

+4. Grafana queries metrics from Prometheus at a fixed, configurable interval and presents them as graphs.

+

+So some additional work is needed to configure and deploy Prometheus and Grafana.

+

+For instance, you can configure Prometheus as follows to get metrics data from IoTDB:

+

+```yaml

+job_name: pull-metrics

honor_labels: true

honor_timestamps: true

scrape_interval: 15s

@@ -55,6 +289,22 @@ metrics_path: /metrics

scheme: http

follow_redirects: true

static_configs:

--targets:

- -localhost:9091

-```

\ No newline at end of file

+- targets:

+ - localhost:9091

+```

+

+The following documents may help you get started with Prometheus and Grafana.

+

+[Prometheus getting_started](https://prometheus.io/docs/prometheus/latest/getting_started/)

+

+[Prometheus scrape metrics](https://prometheus.io/docs/prometheus/latest/configuration/configuration/#scrape_config)

+

+[Grafana getting_started](https://grafana.com/docs/grafana/latest/getting-started/getting-started/)

+

+[Grafana query metrics from Prometheus](https://prometheus.io/docs/visualization/grafana/#grafana-support-for-prometheus)

+

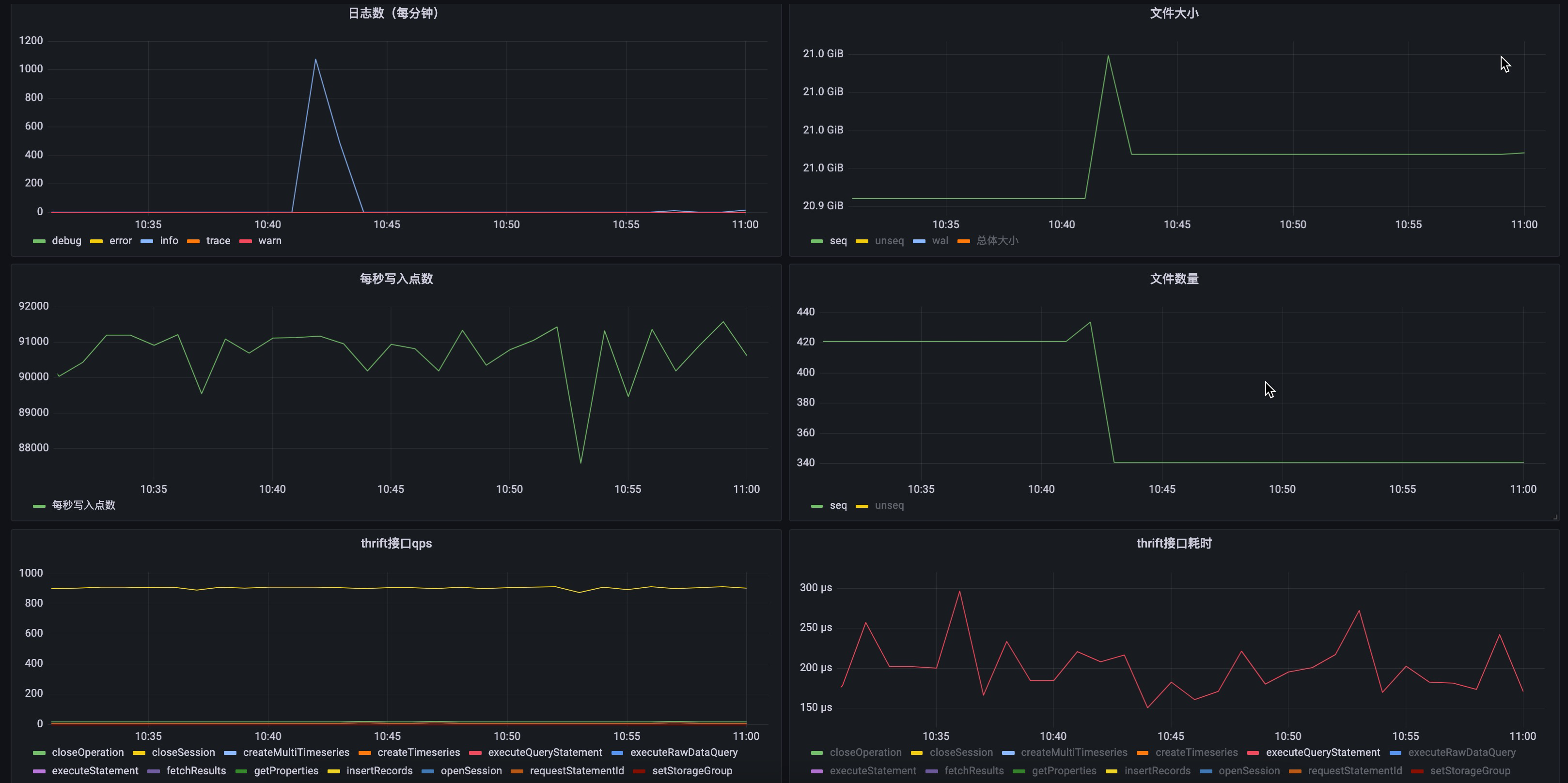

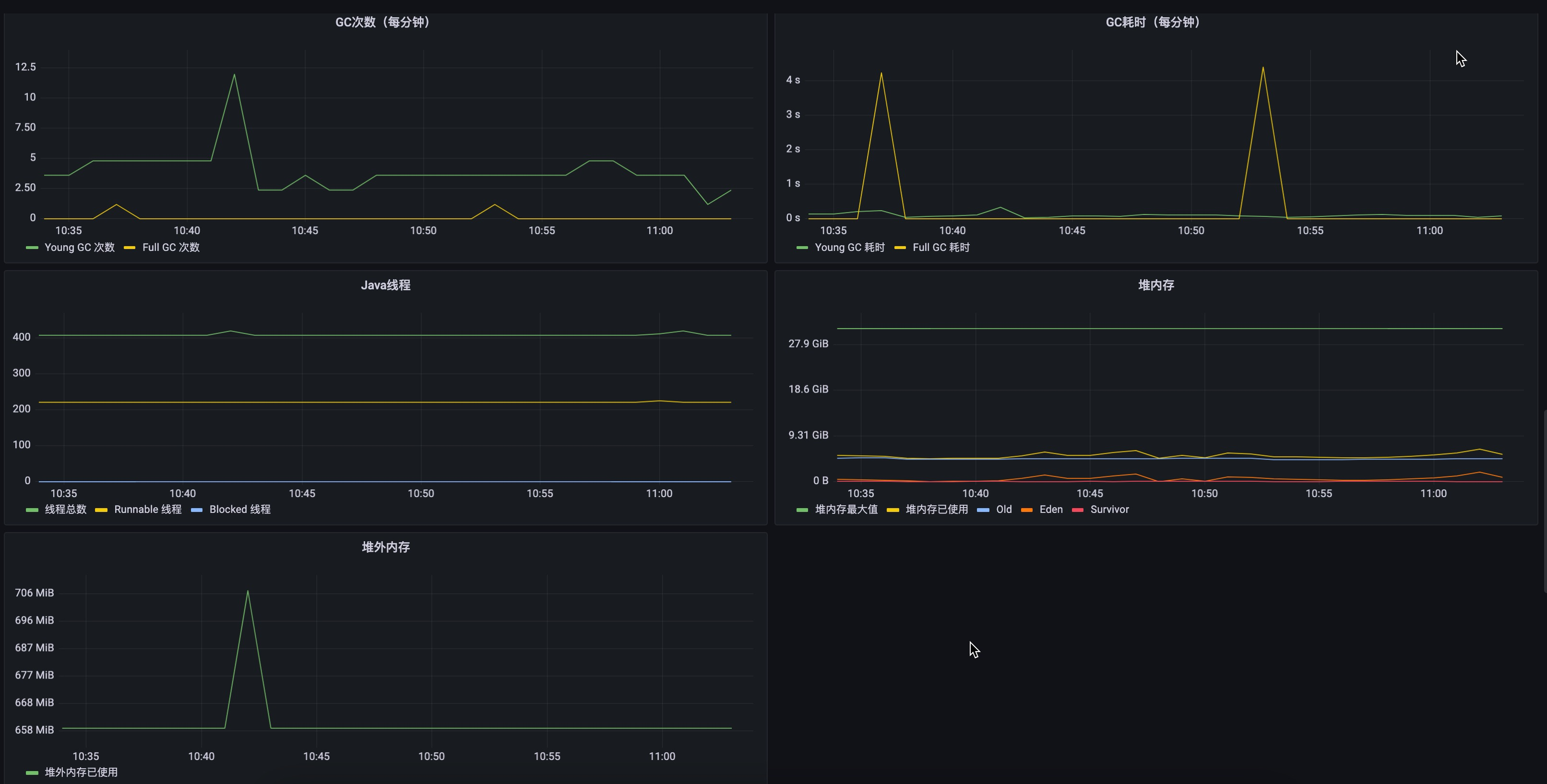

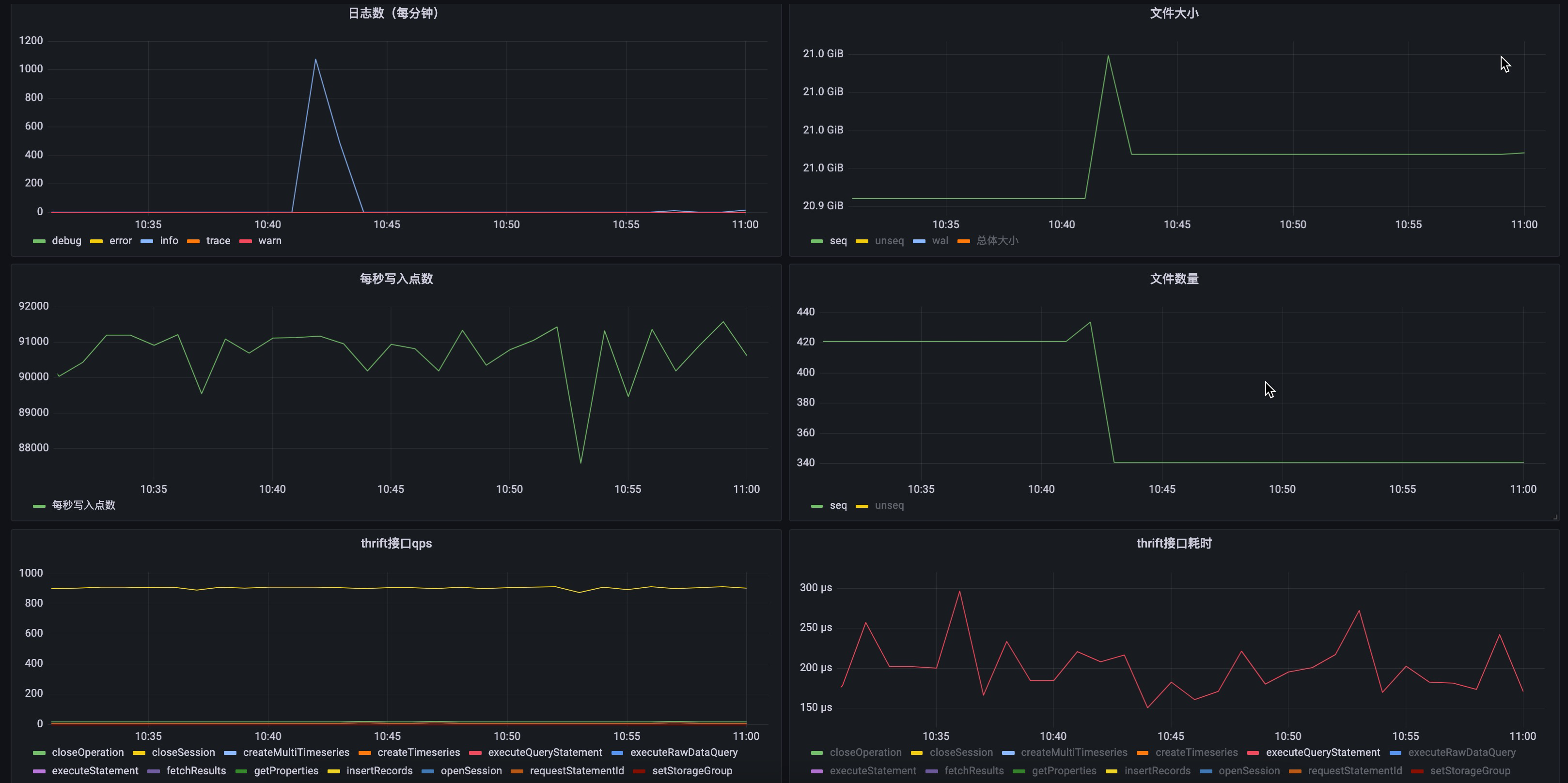

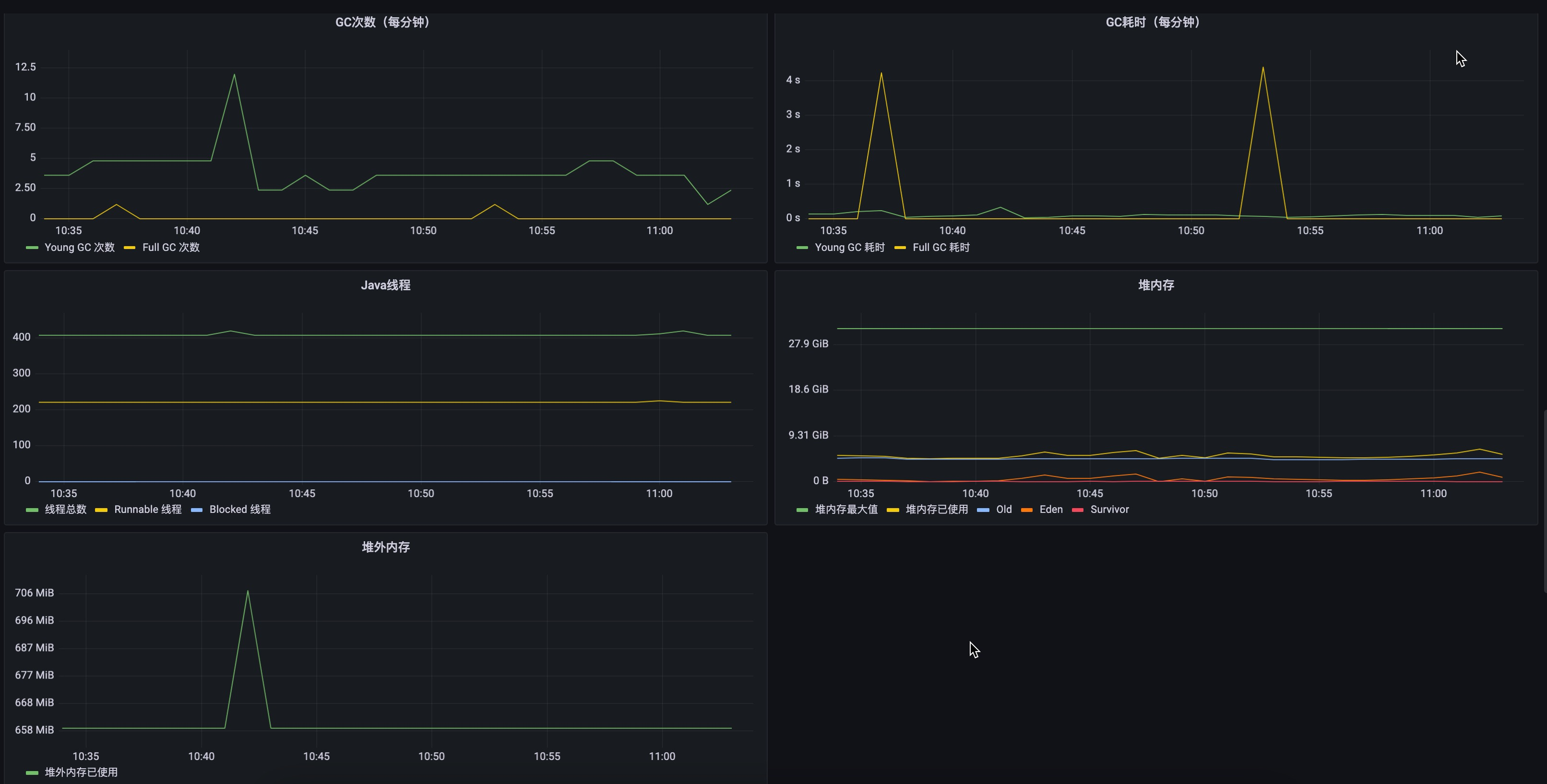

+Here are two demo pictures of IoTDB's metrics data in Grafana.

+

+

+

+

\ No newline at end of file

diff --git a/docs/zh/UserGuide/System-Tools/Metric-Tool.md

b/docs/zh/UserGuide/System-Tools/Metric-Tool.md

index a9a1051..c15defb 100644

--- a/docs/zh/UserGuide/System-Tools/Metric-Tool.md

+++ b/docs/zh/UserGuide/System-Tools/Metric-Tool.md

@@ -19,34 +19,264 @@

-->

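-# Metric Tool

-IoTDB Server provides a monitoring module that lets you instrument variables wherever needed. It currently supports two monitoring methods, JMX and Prometheus, and five kinds of metrics such as counter. For more details on the interface, see the <a href="https://github.com/apache/iotdb/tree/master/metrics">metric documentation</a>.

+## What are metrics?

+While IoTDB is running, we want to observe its state so that we can troubleshoot problems or spot potential risks early. **A set of indicators that reflect the running state of the system** is what we call metrics.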

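-## Usage

+## When are metrics used?

-Step 1: Obtain IoTDB-server.

+So when do we need metrics? Some common scenarios are listed below.

-Step 2: Edit the configuration file `iotdb-metric.yml`

+1. The system slows down

-- Choose how metrics are reported by modifying `metricReporterList`; available values are `jmx` and `prometheus`

-- Choose the monitoring framework by modifying `monitortype`; available values are `dropwizard` and `micrometer`. Also add the dependency of the chosen framework to the IoTDB Server configuration.

+ A slowdown is almost the most common and most painful problem. We then need as much information as possible to find the cause, for example:

-Step 3: Add instrumentation points at the appropriate places in IoTDB Server.

+ - JVM: Is there a full GC? How long does GC take? Is memory reclaimed after GC? Are there a large number of threads?

+ - System: Is CPU usage too high? Is disk I/O very frequent?

+ - Connections: Are there too many connections right now?

+ - Interfaces: What is the current TPS? Has the latency of any interface changed?

+ - Thread pools: Is there a backlog of tasks in the system?

+ - Cache hit rate

-- First obtain the metric manager through `MetricsService.getInstance().getMetricManager()`, then call the corresponding method to get the metric.

-- Then operate on the obtained metric; the monitoring framework publishes it automatically through the selected reporter.

-- Note that the tags passed to every getOrCreate method must be even in number, forming key-value pairs.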

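+2. The disk is almost full

-Step 3: Start IoTDB-server.

+ We then urgently want to know how the data files have grown recently, to see whether some type of file has surged.

-Step 4: View the metrics according to the chosen reporter, that is, `jmx` or `prometheus`

+3. Is the system running normally?

-- For jmx, connect and look under `org.apache.iotdb.metrics`

-- For prometheus, use the following configuration (some parameters can be adjusted) to collect the monitoring data. Note that if iotdb-server runs in Docker, you need to pass `-p 9091:9091` to `docker run` to expose the port.

+ In this case we may need indicators such as the number of error logs and the status of cluster nodes to judge whether the system is running normally.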

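+## Who needs metrics?

+

+Anyone who cares about the state of the system, including but not limited to developers, testers, operations engineers, and DBAs.

+

+## What metrics does IoTDB provide?

+

+Currently, IoTDB exposes metrics for some of its main modules. As new features are developed and the system is optimized or refactored, metrics will be added and updated accordingly.

+

+### Terminology

+

+Before going further into these metrics, let's look at a few terms:

+

+- Metric Name

+

+ The unique name of a metric. For example, logback_events_total is the total number of log events.

+

+- Tag

+

+ Each metric can have zero or more categories. For example, logback_events_total has a ```level``` category, which gives the number of logs at a specific level.

+### Data format

+

+IoTDB provides metrics in JMX and Prometheus formats. For JMX, the metrics are available under ```org.apache.iotdb.metrics```.

+

+Next, we use the Prometheus format as an example to describe each metric.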

+

+### IoTDB Metrics

+

+### Access layer

+

+| Metric | Tag | Description | Sample |
+| ------------------- | --------------------- | ---------------- | -------------------------------------------- |
+| entry_seconds_count | name="interface name" | Cumulative number of calls to the interface | entry_seconds_count{name="openSession",} 1.0 |
+| entry_seconds_sum | name="interface name" | Cumulative time spent in the interface (s) | entry_seconds_sum{name="openSession",} 0.024 |
+| entry_seconds_max | name="interface name" | Maximum time spent in the interface (s) | entry_seconds_max{name="openSession",} 0.024 |
+| quantity_total | name="pointsIn" | Cumulative number of points written to the system | quantity_total{name="pointsIn",} 1.0 |
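
The `entry_seconds_sum` and `entry_seconds_count` values in the table above can be combined into an average latency per call. The following Java sketch shows the arithmetic, both since startup and between two scrapes. `AverageLatency` and its method names are hypothetical and only illustrate the calculation; they are not part of IoTDB.

```java
/** Hypothetical helper (not part of IoTDB): derives average latency from _sum and _count. */
public class AverageLatency {

  /** Average seconds per call since startup; 0 when nothing has been recorded yet. */
  public static double sinceStart(double sumSeconds, double count) {
    return count == 0.0 ? 0.0 : sumSeconds / count;
  }

  /** Average seconds per call between two scrapes of the same interface. */
  public static double betweenScrapes(
      double sum0, double count0, double sum1, double count1) {
    double calls = count1 - count0;
    // No new calls (or a counter reset) between the scrapes: report 0.
    return calls <= 0.0 ? 0.0 : (sum1 - sum0) / calls;
  }

  public static void main(String[] args) {
    // Values taken from the sample column of the table above.
    System.out.println(sinceStart(0.024, 1.0));
  }
}
```

With the sample values from the table (`entry_seconds_sum` 0.024, `entry_seconds_count` 1.0), the average equals the single recorded call duration of 0.024 s.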

+

+### File

+

+| Metric | Tag | Description | Sample |
+| ---------- | -------------------- | ----------------------------------- | --------------------------- |
+| file_size | name="wal/seq/unseq" | Current size of wal/seq/unseq files (bytes) | file_size{name="wal",} 67.0 |
+| file_count | name="wal/seq/unseq" | Current number of wal/seq/unseq files | file_count{name="seq",} 1.0 |

+

+### Flush

+

+| Metric | Tag | Description | Sample |
+| ----------------------- | ------------------------------------------- | -------------------------------- | ------------------------------------------------------------ |
+| queue | name="flush",<br />status="running/waiting" | Current number of flush tasks | queue{name="flush",status="waiting",} 0.0<br/>queue{name="flush",status="running",} 0.0 |
+| cost_task_seconds_count | name="flush" | Cumulative number of flushes | cost_task_seconds_count{name="flush",} 1.0 |
+| cost_task_seconds_max | name="flush" | Duration of the longest flush so far (s) | cost_task_seconds_max{name="flush",} 0.363 |
+| cost_task_seconds_sum | name="flush" | Cumulative flush time (s) | cost_task_seconds_sum{name="flush",} 0.363 |

+

+### Compaction

+

+| Metric | Tag | Description | Sample |
+| ----------------------- | ------------------------------------------------------------ | ------------------------------------- | ---------------------------------------------------- |
+| queue | name="compaction_inner/compaction_cross",<br />status="running/waiting" | Current number of compaction tasks | queue{name="compaction_inner",status="waiting",} 0.0 |
+| cost_task_seconds_count | name="compaction" | Cumulative number of compactions | cost_task_seconds_count{name="compaction",} 1.0 |
+| cost_task_seconds_max | name="compaction" | Duration of the longest compaction so far (s) | cost_task_seconds_max{name="compaction",} 0.363 |
+| cost_task_seconds_sum | name="compaction" | Cumulative compaction time (s) | cost_task_seconds_sum{name="compaction",} 0.363 |

+

+### Memory usage

+

+| Metric | Tag | Description | Sample |
+| ------ | --------------------------------------- | -------------------------------------------------- | --------------------------------- |
+| mem | name="chunkMetaData/storageGroup/mtree" | Memory used by chunkMetaData/storageGroup/mtree (bytes) | mem{name="chunkMetaData",} 2050.0 |

+

+### Cache hit rate

+

+| Metric | Tag | Description | Sample |
+| --------- | --------------------------------------- | ----------------------------------------------- | --------------------------- |
+| cache_hit | name="chunk/timeSeriesMeta/bloomFilter" | Cache hit rate of chunk/timeSeriesMeta; interception rate of bloomFilter | cache_hit{name="chunk",} 80 |
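
`cache_hit` is reported as a whole-number percentage, such as 80. For the `bloomFilter` tag, the gauge added to `TimeSeriesMetadataCache` in this commit divides the number of prevented lookups by the total number of bloom filter lookups and multiplies by 100. The sketch below reproduces that arithmetic in isolation. `InterceptionRate` is a hypothetical class, not IoTDB code, and returning 0 before any lookup has happened is a choice made for this sketch only.

```java
import java.util.concurrent.atomic.AtomicLong;

/** Hypothetical sketch (not IoTDB code): bloom filter interception rate as a percentage. */
public class InterceptionRate {
  private final AtomicLong requestCount = new AtomicLong(0L);
  private final AtomicLong preventCount = new AtomicLong(0L);

  /** Records one bloom filter lookup; prevented is true when the filter ruled the key out. */
  public void record(boolean prevented) {
    requestCount.incrementAndGet();
    if (prevented) {
      preventCount.incrementAndGet();
    }
  }

  /** Whole-number percentage of lookups the filter prevented, truncated toward zero. */
  public long percent() {
    long requests = requestCount.get();
    if (requests == 0L) {
      return 0L; // assumption of this sketch: report 0 before any lookup happens
    }
    return (long) ((double) preventCount.get() / (double) requests * 100L);
  }

  public static void main(String[] args) {
    InterceptionRate rate = new InterceptionRate();
    rate.record(true);
    rate.record(true);
    rate.record(true);
    rate.record(false);
    System.out.println(rate.percent());
  }
}
```

Because the value is truncated to a `long`, fractional percentages are rounded down, so one prevented lookup out of three is reported as 33.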

+

+### Business data

+

+| Metric | Tag | Description | Sample |
+| -------- | ------------------------------------- | -------------------------------------------- | -------------------------------- |
+| quantity | name="timeSeries/storageGroup/device" | Current number of timeSeries/storageGroup/device | quantity{name="timeSeries",} 1.0 |

+

+### Cluster

+

+| Metric | Tag | Description | Sample |
+| ------------------------- | ------------------------------- | ------------------------------------------------------------ | ------------------------------------------------------------ |
+| cluster_node_leader_count | name="{{ip}}" | Number of ```dataGroupLeader``` on the node, used to check whether leaders are evenly distributed | cluster_node_leader_count{name="127.0.0.1",} 2.0 |
+| cluster_uncommitted_log | name="{{ip_datagroupHeader}}" | Number of ```uncommitted_log``` on the node | cluster_uncommitted_log{name="127.0.0.1_Data-127.0.0.1-40010-raftId-0",} 0.0 |
+| cluster_node_status | name="{{ip}}" | Node status, 1=online 2=offline | cluster_node_status{name="127.0.0.1",} 1.0 |
+| cluster_elect_total | name="{{ip}}",status="fail/win" | Number of elections the node took part in, with their results | cluster_elect_total{name="127.0.0.1",status="win",} 1.0 |

+

+### Log

+

+| Metric | Tag | Description | Sample |
+| -------------------- | -------------------------------------- | --------------------------------------- | --------------------------------------- |
+| logback_events_total | {level="trace/debug/info/warn/error",} | Cumulative number of trace/debug/info/warn/error logs | logback_events_total{level="warn",} 0.0 |

+

+### JVM

+

+#### Threads

+

+| Metric | Tag | Description | Sample |
+| -------------------------- | ------------------------------------------------------------ | ------------------------ | -------------------------------------------------- |
+| jvm_threads_live_threads | None | Current number of threads | jvm_threads_live_threads 25.0 |
+| jvm_threads_daemon_threads | None | Current number of daemon threads | jvm_threads_daemon_threads 12.0 |
+| jvm_threads_peak_threads | None | Peak number of threads | jvm_threads_peak_threads 28.0 |
+| jvm_threads_states_threads | state="runnable/blocked/waiting/timed-waiting/new/terminated" | Current number of threads in each state | jvm_threads_states_threads{state="runnable",} 10.0 |

+

+#### Garbage collection

+

+| Metric | Tag | Description | Sample |
+| ----------------------------------- | ------------------------------------------------------ | -------------------------------------------- | ------------------------------------------------------------ |
+| jvm_gc_pause_seconds_count | action="end of major GC/end of minor GC",cause="xxxx" | Number of YGC/FGC occurrences and their causes | jvm_gc_pause_seconds_count{action="end of major GC",cause="Metadata GC Threshold",} 1.0 |
+| jvm_gc_pause_seconds_sum | action="end of major GC/end of minor GC",cause="xxxx" | Cumulative time spent in YGC/FGC and their causes | jvm_gc_pause_seconds_sum{action="end of major GC",cause="Metadata GC Threshold",} 0.03 |
+| jvm_gc_pause_seconds_max | action="end of major GC",cause="Metadata GC Threshold" | Maximum time spent in a YGC/FGC and its cause | jvm_gc_pause_seconds_max{action="end of major GC",cause="Metadata GC Threshold",} 0.0 |
+| jvm_gc_overhead_percent | None | Percentage of CPU consumed by GC | jvm_gc_overhead_percent 0.0 |
+| jvm_gc_memory_promoted_bytes_total | None | Cumulative positive growth of the old-generation memory pool from before GC to after GC | jvm_gc_memory_promoted_bytes_total 8425512.0 |
+| jvm_gc_max_data_size_bytes | None | Historical maximum size of old-generation memory | jvm_gc_max_data_size_bytes 2.863661056E9 |
+| jvm_gc_live_data_size_bytes | None | Size of old-generation memory after GC | jvm_gc_live_data_size_bytes 8450088.0 |
+| jvm_gc_memory_allocated_bytes_total | None | Memory added to the young generation between the end of one GC and the start of the next | jvm_gc_memory_allocated_bytes_total 4.2979144E7 |

+

+#### Memory

+

+| Metric | Tag | Description | Sample |
+| ------------------------------- | ------------------------------- | ----------------------- | ------------------------------------------------------------ |
+| jvm_buffer_memory_used_bytes | id="direct/mapped" | Size of buffer memory in use | jvm_buffer_memory_used_bytes{id="direct",} 3.46728099E8 |
+| jvm_buffer_total_capacity_bytes | id="direct/mapped" | Maximum buffer size | jvm_buffer_total_capacity_bytes{id="mapped",} 0.0 |
+| jvm_buffer_count_buffers | id="direct/mapped" | Current number of buffers | jvm_buffer_count_buffers{id="direct",} 183.0 |
+| jvm_memory_committed_bytes | {area="heap/nonheap",id="xxx",} | Amount of memory currently committed for the JVM | jvm_memory_committed_bytes{area="heap",id="Par Survivor Space",} 2.44252672E8<br/>jvm_memory_committed_bytes{area="nonheap",id="Metaspace",} 3.9051264E7<br/> |
+| jvm_memory_max_bytes | {area="heap/nonheap",id="xxx",} | Maximum JVM memory | jvm_memory_max_bytes{area="heap",id="Par Survivor Space",} 2.44252672E8<br/>jvm_memory_max_bytes{area="nonheap",id="Compressed Class Space",} 1.073741824E9 |
+| jvm_memory_used_bytes | {area="heap/nonheap",id="xxx",} | JVM memory in use | jvm_memory_used_bytes{area="heap",id="Par Eden Space",} 1.000128376E9<br/>jvm_memory_used_bytes{area="nonheap",id="Code Cache",} 2.9783808E7<br/> |

+

+#### Classes

+

+| Metric | Tag | Description | Sample |
+| ---------------------------------- | --------------------------------------------- | ---------------------- | ------------------------------------------------------------ |
+| jvm_classes_unloaded_classes_total | None | Cumulative number of classes unloaded by the JVM | jvm_classes_unloaded_classes_total 680.0 |
+| jvm_classes_loaded_classes | None | Cumulative number of classes loaded by the JVM | jvm_classes_loaded_classes 5975.0 |
+| jvm_compilation_time_ms_total | {compiler="HotSpot 64-Bit Tiered Compilers",} | Time the JVM has spent on compilation | jvm_compilation_time_ms_total{compiler="HotSpot 64-Bit Tiered Compilers",} 107092.0 |

+

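+To add more metric instrumentation points to IoTDB yourself, see the [IoTDB Metrics Framework](https://github.com/apache/iotdb/tree/master/metrics) guide.

+

+## How to get these metrics?

+

+Metric collection is disabled by default. Enable it first in conf/iotdb-metric.yml.

+

+### Configuration file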

+

+```yaml

+# The default is false. Change it to true and start IoTDB to get the metrics data.

+enableMetric: false

+

+# Metrics data is exposed through both JMX and Prometheus.

+metricReporterList:

+ - jmx

+ - prometheus

+

+# The underlying metric library; micrometer is recommended.

+monitorType: micrometer

+

+# This parameter takes effect only when monitorType=dropwizard

+pushPeriodInSecond: 5

+

+########################################################

+# #

+# if the reporter is prometheus, #

+# then the following must be set #

+# #

+########################################################

+prometheusReporterConfig:

+ prometheusExporterUrl: http://localhost

+

+# Metrics data can be fetched over HTTP from this port

+ prometheusExporterPort: 9091

+```

+

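+Then get the metrics data as follows:

+1. Enable the metric switch in the configuration file.

+2. Leave the other parameters at their defaults.

+3. Start IoTDB.

+4. Open a browser or use ```curl``` to access ```http://server_ip:9091/metrics```, and you will see the metrics data: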

+

+```

+# HELP file_count

+# TYPE file_count gauge

+file_count{name="wal",} 0.0

+file_count{name="unseq",} 0.0

+file_count{name="seq",} 2.0

+# HELP file_size

+# TYPE file_size gauge

+file_size{name="wal",} 0.0

+file_size{name="unseq",} 0.0

+file_size{name="seq",} 560.0

+# HELP queue

+# TYPE queue gauge

+queue{name="flush",status="waiting",} 0.0

+queue{name="flush",status="running",} 0.0

+# HELP quantity

+# TYPE quantity gauge

+quantity{name="timeSeries",} 1.0

+quantity{name="storageGroup",} 1.0

+quantity{name="device",} 1.0

+# HELP logback_events_total Number of error level events that made it to the logs

+# TYPE logback_events_total counter

+logback_events_total{level="warn",} 0.0

+logback_events_total{level="debug",} 2760.0

+logback_events_total{level="error",} 0.0

+logback_events_total{level="trace",} 0.0

+logback_events_total{level="info",} 71.0

+# HELP mem

+# TYPE mem gauge

+mem{name="storageGroup",} 0.0

+mem{name="mtree",} 1328.0

```

-job_name: push-metrics

+

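+### Integrating with Prometheus and Grafana

+

+As described above, IoTDB exposes metrics data in the standard Prometheus format, so it can be integrated directly with Prometheus and Grafana.

+

+The relationship among IoTDB, Prometheus, and Grafana is shown in the figure below:

+

+

+

+1. IoTDB continuously collects metrics data while running.

+2. Prometheus pulls metrics data from IoTDB's HTTP interface at a fixed, configurable interval.

+3. Prometheus stores the pulled metrics data in its own TSDB.

+4. Grafana queries metrics data from Prometheus at a fixed, configurable interval and plots it.

+

+As the flow shows, some additional work is needed to deploy and configure Prometheus and Grafana.

+

+For example, you can configure Prometheus as follows (some parameters can be adjusted) to fetch monitoring data from IoTDB: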

+

+```yaml

+job_name: pull-metrics

honor_labels: true

honor_timestamps: true

scrape_interval: 15s

@@ -57,4 +287,20 @@ follow_redirects: true

static_configs:

- targets:

- localhost:9091

-```

\ No newline at end of file

+```

+

+For more details, see the following documents:

+

+[Prometheus installation and usage guide](https://prometheus.io/docs/prometheus/latest/getting_started/)

+

+[Prometheus configuration for scraping metrics over HTTP](https://prometheus.io/docs/prometheus/latest/configuration/configuration/#scrape_config)

+

+[Grafana installation and usage guide](https://grafana.com/docs/grafana/latest/getting-started/getting-started/)

+

+[Grafana documentation on querying and plotting data from Prometheus](https://prometheus.io/docs/visualization/grafana/#grafana-support-for-prometheus)

+

+Finally, here is how IoTDB's metrics data looks in Grafana:

+

+

+

+

\ No newline at end of file

diff --git

a/metrics/dropwizard-metrics/src/test/java/org/apache/iotdb/metrics/dropwizard/DropwizardMetricManagerTest.java

b/metrics/dropwizard-metrics/src/test/java/org/apache/iotdb/metrics/dropwizard/DropwizardMetricManagerTest.java

index 0ba3478..9620f32 100644

---

a/metrics/dropwizard-metrics/src/test/java/org/apache/iotdb/metrics/dropwizard/DropwizardMetricManagerTest.java

+++

b/metrics/dropwizard-metrics/src/test/java/org/apache/iotdb/metrics/dropwizard/DropwizardMetricManagerTest.java

@@ -43,6 +43,7 @@ public class DropwizardMetricManagerTest {

System.setProperty("line.separator", "\n");

// set up path of yml

System.setProperty("IOTDB_CONF", "src/test/resources");

+ MetricService.init();

metricManager = MetricService.getMetricManager();

}

diff --git

a/metrics/interface/src/main/assembly/resources/conf/iotdb-metric.yml

b/metrics/interface/src/main/assembly/resources/conf/iotdb-metric.yml

index 0148b2e..4de6896 100644

--- a/metrics/interface/src/main/assembly/resources/conf/iotdb-metric.yml

+++ b/metrics/interface/src/main/assembly/resources/conf/iotdb-metric.yml

@@ -23,6 +23,7 @@ enableMetric: false

# can be multiple reporter, e.g., jmx, prometheus

metricReporterList:

- jmx

+ - prometheus

# you can check choose one type of monitor frame, such as dropwizard,

micrometer

monitorType: micrometer

diff --git

a/metrics/interface/src/main/java/org/apache/iotdb/metrics/MetricService.java

b/metrics/interface/src/main/java/org/apache/iotdb/metrics/MetricService.java

index 69c6e1d..352c53a 100644

---

a/metrics/interface/src/main/java/org/apache/iotdb/metrics/MetricService.java

+++

b/metrics/interface/src/main/java/org/apache/iotdb/metrics/MetricService.java

@@ -39,10 +39,6 @@ public class MetricService {

private static final MetricConfig metricConfig =

MetricConfigDescriptor.getInstance().getMetricConfig();

- static {

- init();

- }

-

private static final MetricService INSTANCE = new MetricService();

private static MetricManager metricManager;

@@ -56,7 +52,7 @@ public class MetricService {

private MetricService() {}

/** init config, manager and reporter */

- private static void init() {

+ public static void init() {

logger.info("Init metric service");

// load manager

loadManager();

diff --git

a/metrics/interface/src/main/java/org/apache/iotdb/metrics/config/MetricConfig.java

b/metrics/interface/src/main/java/org/apache/iotdb/metrics/config/MetricConfig.java

index 9892b2e..6ac1825 100644

---

a/metrics/interface/src/main/java/org/apache/iotdb/metrics/config/MetricConfig.java

+++

b/metrics/interface/src/main/java/org/apache/iotdb/metrics/config/MetricConfig.java

@@ -36,7 +36,8 @@ public class MetricConfig {

private MonitorType monitorType = MonitorType.micrometer;

/** provide or push metric data to remote system, could be jmx, prometheus,

iotdb, etc. */

- private List<ReporterType> metricReporterList =

Arrays.asList(ReporterType.jmx);

+ private List<ReporterType> metricReporterList =

+ Arrays.asList(ReporterType.jmx, ReporterType.prometheus);

/** the config of prometheus reporter */

private PrometheusReporterConfig prometheusReporterConfig = new

PrometheusReporterConfig();

diff --git

a/metrics/interface/src/main/java/org/apache/iotdb/metrics/config/MetricConfigDescriptor.java

b/metrics/interface/src/main/java/org/apache/iotdb/metrics/config/MetricConfigDescriptor.java

index e72c75d..de73a21 100644

---

a/metrics/interface/src/main/java/org/apache/iotdb/metrics/config/MetricConfigDescriptor.java

+++

b/metrics/interface/src/main/java/org/apache/iotdb/metrics/config/MetricConfigDescriptor.java

@@ -32,7 +32,7 @@ import java.io.InputStream;

/** The utils class to load configure. Read from yaml file. */

public class MetricConfigDescriptor {

private static final Logger logger =

LoggerFactory.getLogger(MetricConfigDescriptor.class);

- private MetricConfig metricConfig = new MetricConfig();

+ private MetricConfig metricConfig;

public MetricConfig getMetricConfig() {

return metricConfig;

@@ -51,7 +51,7 @@ public class MetricConfigDescriptor {

*

* @return the file path

*/

- public String getPropsUrl() {

+ private String getPropsUrl() {

String url = System.getProperty(MetricConstant.IOTDB_CONF, null);

if (url == null) {

logger.warn(

@@ -67,19 +67,21 @@ public class MetricConfigDescriptor {

/** Load an property file and set MetricConfig variables. If not found file,

use default value. */

private void loadProps() {

-

String url = getPropsUrl();

-

Constructor constructor = new Constructor(MetricConfig.class);

Yaml yaml = new Yaml(constructor);

if (url != null) {

try (InputStream inputStream = new FileInputStream(new File(url))) {

logger.info("Start to read config file {}", url);

metricConfig = (MetricConfig) yaml.load(inputStream);

+ return;

} catch (IOException e) {

- logger.warn("Fail to find config file {}", url, e);

+ logger.warn("Fail to find config file : {}, use default", url, e);

}

+ } else {

+ logger.warn("Fail to find config file, use default");

}

+ metricConfig = new MetricConfig();

}

private static class MetricConfigDescriptorHolder {

diff --git

a/metrics/interface/src/main/java/org/apache/iotdb/metrics/utils/PredefinedMetric.java

b/metrics/interface/src/main/java/org/apache/iotdb/metrics/utils/PredefinedMetric.java

index 49494a3..f242110 100644

---

a/metrics/interface/src/main/java/org/apache/iotdb/metrics/utils/PredefinedMetric.java

+++

b/metrics/interface/src/main/java/org/apache/iotdb/metrics/utils/PredefinedMetric.java

@@ -20,5 +20,6 @@

package org.apache.iotdb.metrics.utils;

public enum PredefinedMetric {

- JVM

+ JVM,

+ LOGBACK

}

diff --git

a/metrics/micrometer-metrics/src/main/java/org/apache/iotdb/metrics/micrometer/MicrometerMetricManager.java

b/metrics/micrometer-metrics/src/main/java/org/apache/iotdb/metrics/micrometer/MicrometerMetricManager.java

index d47ce0f..7fdbf74 100644

---

a/metrics/micrometer-metrics/src/main/java/org/apache/iotdb/metrics/micrometer/MicrometerMetricManager.java

+++

b/metrics/micrometer-metrics/src/main/java/org/apache/iotdb/metrics/micrometer/MicrometerMetricManager.java

@@ -34,6 +34,7 @@ import org.apache.iotdb.metrics.utils.ReporterType;

import io.micrometer.core.instrument.*;

import io.micrometer.core.instrument.binder.jvm.*;

+import io.micrometer.core.instrument.binder.logging.LogbackMetrics;

import io.micrometer.jmx.JmxMeterRegistry;

import io.micrometer.prometheus.PrometheusConfig;

import io.micrometer.prometheus.PrometheusMeterRegistry;

@@ -396,6 +397,9 @@ public class MicrometerMetricManager implements

MetricManager {

case JVM:

enableJvmMetrics();

break;

+ case LOGBACK:

+ enableLogbackMetrics();

+ break;

default:

logger.warn("Unsupported metric type {}", metric);

}

@@ -420,6 +424,13 @@ public class MicrometerMetricManager implements

MetricManager {

jvmThreadMetrics.bindTo(meterRegistry);

}

+ private void enableLogbackMetrics() {

+ if (!isEnable) {

+ return;

+ }

+ new LogbackMetrics().bindTo(meterRegistry);

+ }

+

@Override

public void removeCounter(String metric, String... tags) {

if (!isEnable) {

diff --git

a/metrics/micrometer-metrics/src/main/java/org/apache/iotdb/metrics/micrometer/reporter/MicrometerPrometheusReporter.java

b/metrics/micrometer-metrics/src/main/java/org/apache/iotdb/metrics/micrometer/reporter/MicrometerPrometheusReporter.java

index 99f7650..2e39a8a 100644

---

a/metrics/micrometer-metrics/src/main/java/org/apache/iotdb/metrics/micrometer/reporter/MicrometerPrometheusReporter.java

+++

b/metrics/micrometer-metrics/src/main/java/org/apache/iotdb/metrics/micrometer/reporter/MicrometerPrometheusReporter.java

@@ -28,12 +28,14 @@ import org.apache.iotdb.metrics.utils.ReporterType;

import io.micrometer.core.instrument.MeterRegistry;

import io.micrometer.core.instrument.Metrics;

import io.micrometer.prometheus.PrometheusMeterRegistry;

+import io.netty.channel.ChannelOption;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import reactor.core.publisher.Mono;

import reactor.netty.DisposableServer;

import reactor.netty.http.server.HttpServer;

+import java.time.Duration;

import java.util.Set;

import java.util.stream.Collectors;

@@ -43,7 +45,7 @@ public class MicrometerPrometheusReporter implements Reporter

{

MetricConfigDescriptor.getInstance().getMetricConfig();

private MetricManager metricManager;

- private Thread runThread;

+ private DisposableServer httpServer;

@Override

public boolean start() {

@@ -57,8 +59,10 @@ public class MicrometerPrometheusReporter implements

Reporter {

}

PrometheusMeterRegistry prometheusMeterRegistry =

(PrometheusMeterRegistry) meterRegistrySet.toArray()[0];

- DisposableServer server =

+ httpServer =

HttpServer.create()

+ .idleTimeout(Duration.ofMillis(30_000L))

+ .option(ChannelOption.CONNECT_TIMEOUT_MILLIS, 2000)

.port(

Integer.parseInt(

metricConfig.getPrometheusReporterConfig().getPrometheusExporterPort()))

@@ -69,22 +73,21 @@ public class MicrometerPrometheusReporter implements

Reporter {

(request, response) ->

response.sendString(Mono.just(prometheusMeterRegistry.scrape()))))

.bindNow();

- runThread = new Thread(server::onDispose);

- runThread.start();

+ LOGGER.info(

+ "http server for metrics started, listening on {}",

+

metricConfig.getPrometheusReporterConfig().getPrometheusExporterPort());

return true;

}

@Override

public boolean stop() {

- try {

- // stop prometheus reporter

- if (runThread != null) {

- runThread.join();

+ if (httpServer != null) {

+ try {

+ httpServer.disposeNow();

+ } catch (Exception e) {

+ LOGGER.error("failed to stop server", e);

+ return false;

}

- } catch (InterruptedException e) {

- LOGGER.warn("Failed to stop micrometer prometheus reporter", e);

- Thread.currentThread().interrupt();

- return false;

}

return true;

}

diff --git

a/metrics/micrometer-metrics/src/test/java/org/apache/iotdb/metrics/micrometer/MicrometerMetricManagerTest.java

b/metrics/micrometer-metrics/src/test/java/org/apache/iotdb/metrics/micrometer/MicrometerMetricManagerTest.java

index 827f25c..a0a346c 100644

---

a/metrics/micrometer-metrics/src/test/java/org/apache/iotdb/metrics/micrometer/MicrometerMetricManagerTest.java

+++

b/metrics/micrometer-metrics/src/test/java/org/apache/iotdb/metrics/micrometer/MicrometerMetricManagerTest.java

@@ -42,6 +42,7 @@ public class MicrometerMetricManagerTest {

System.setProperty("line.separator", "\n");

// set up path of yml

System.setProperty("IOTDB_CONF", "src/test/resources");

+ MetricService.init();

metricManager = MetricService.getMetricManager();

}

diff --git

a/server/src/main/java/org/apache/iotdb/db/concurrent/IoTDBThreadPoolFactory.java

b/server/src/main/java/org/apache/iotdb/db/concurrent/IoTDBThreadPoolFactory.java

index 1749ff2..1c557bf 100644

---

a/server/src/main/java/org/apache/iotdb/db/concurrent/IoTDBThreadPoolFactory.java

+++

b/server/src/main/java/org/apache/iotdb/db/concurrent/IoTDBThreadPoolFactory.java

@@ -79,14 +79,8 @@ public class IoTDBThreadPoolFactory {

public static ExecutorService newFixedThreadPoolWithDaemonThread(int

nThreads, String poolName) {

logger.info(NEW_FIXED_THREAD_POOL_LOGGER_FORMAT, poolName, nThreads);

- return new WrappedThreadPoolExecutor(

- nThreads,

- nThreads,

- 0L,

- TimeUnit.MILLISECONDS,

- new LinkedBlockingQueue<>(),

- new IoTDBDaemonThreadFactory(poolName),

- poolName);

+ return new WrappedSingleThreadExecutorService(

+ Executors.newSingleThreadExecutor(new IoTThreadFactory(poolName)),

poolName);

}

public static ExecutorService newFixedThreadPool(

diff --git

a/server/src/main/java/org/apache/iotdb/db/engine/cache/ChunkCache.java

b/server/src/main/java/org/apache/iotdb/db/engine/cache/ChunkCache.java

index c965f12..9aa14ab 100644

--- a/server/src/main/java/org/apache/iotdb/db/engine/cache/ChunkCache.java

+++ b/server/src/main/java/org/apache/iotdb/db/engine/cache/ChunkCache.java

@@ -22,7 +22,11 @@ package org.apache.iotdb.db.engine.cache;

import org.apache.iotdb.db.conf.IoTDBConfig;

import org.apache.iotdb.db.conf.IoTDBDescriptor;

import org.apache.iotdb.db.query.control.FileReaderManager;

+import org.apache.iotdb.db.service.metrics.Metric;

+import org.apache.iotdb.db.service.metrics.MetricsService;

+import org.apache.iotdb.db.service.metrics.Tag;

import org.apache.iotdb.db.utils.TestOnly;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

import org.apache.iotdb.tsfile.file.metadata.ChunkMetadata;

import org.apache.iotdb.tsfile.read.TsFileSequenceReader;

import org.apache.iotdb.tsfile.read.common.Chunk;

@@ -80,6 +84,18 @@ public class ChunkCache {

throw e;

}

});

+

+ // add metrics

+ if

(MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ MetricsService.getInstance()

+ .getMetricManager()

+ .getOrCreateAutoGauge(

+ Metric.CACHE_HIT.toString(),

+ lruCache,

+ l -> (long) (l.stats().hitRate() * 100),

+ Tag.NAME.toString(),

+ "chunk");

+ }

}

public static ChunkCache getInstance() {

diff --git

a/server/src/main/java/org/apache/iotdb/db/engine/cache/TimeSeriesMetadataCache.java

b/server/src/main/java/org/apache/iotdb/db/engine/cache/TimeSeriesMetadataCache.java

index 7c92b82..797037f 100644

---

a/server/src/main/java/org/apache/iotdb/db/engine/cache/TimeSeriesMetadataCache.java

+++

b/server/src/main/java/org/apache/iotdb/db/engine/cache/TimeSeriesMetadataCache.java

@@ -23,7 +23,11 @@ import org.apache.iotdb.db.conf.IoTDBConfig;

import org.apache.iotdb.db.conf.IoTDBConstant;

import org.apache.iotdb.db.conf.IoTDBDescriptor;

import org.apache.iotdb.db.query.control.FileReaderManager;

+import org.apache.iotdb.db.service.metrics.Metric;

+import org.apache.iotdb.db.service.metrics.MetricsService;

+import org.apache.iotdb.db.service.metrics.Tag;

import org.apache.iotdb.db.utils.TestOnly;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

import org.apache.iotdb.tsfile.file.metadata.ChunkMetadata;

import org.apache.iotdb.tsfile.file.metadata.TimeseriesMetadata;

import org.apache.iotdb.tsfile.read.TsFileSequenceReader;

@@ -66,6 +70,9 @@ public class TimeSeriesMetadataCache {

private final AtomicLong entryAverageSize = new AtomicLong(0);

+ private final AtomicLong bloomFilterRequestCount = new AtomicLong(0L);

+ private final AtomicLong bloomFilterPreventCount = new AtomicLong(0L);

+

private final Map<String, WeakReference<String>> devices =

Collections.synchronizedMap(new WeakHashMap<>());

private static final String SEPARATOR = "$";

@@ -97,6 +104,33 @@ public class TimeSeriesMetadataCache {

+

RamUsageEstimator.shallowSizeOf(value.getChunkMetadataList())))

.recordStats()

.build();

+

+ if

(MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ // add metrics

+ MetricsService.getInstance()

+ .getMetricManager()

+ .getOrCreateAutoGauge(

+ Metric.CACHE_HIT.toString(),

+ lruCache,

+ l -> (long) (l.stats().hitRate() * 100),

+ Tag.NAME.toString(),

+ "timeSeriesMeta");

+ // add metrics

+ MetricsService.getInstance()

+ .getMetricManager()

+ .getOrCreateAutoGauge(

+ Metric.CACHE_HIT.toString(),

+ bloomFilterPreventCount,

+ prevent -> {

+ if (bloomFilterRequestCount.get() == 0L) {

+ return 1L;

+ }

+ return (long)

+ ((double) prevent.get() / (double)

bloomFilterRequestCount.get() * 100L);

+ },

+ Tag.NAME.toString(),

+ "bloomFilter");

+ }

}

public static TimeSeriesMetadataCache getInstance() {

@@ -141,11 +175,15 @@ public class TimeSeriesMetadataCache {

BloomFilter bloomFilter =

BloomFilterCache.getInstance()

.get(new BloomFilterCache.BloomFilterCacheKey(key.filePath), debug);

- if (bloomFilter != null && !bloomFilter.contains(path.getFullPath())) {

- if (debug) {

- DEBUG_LOGGER.info("TimeSeries meta data {} is filter by bloomFilter!", key);

+ if (bloomFilter != null) {

+ bloomFilterRequestCount.incrementAndGet();

+ if (!bloomFilter.contains(path.getFullPath())) {

+ bloomFilterPreventCount.incrementAndGet();

+ if (debug) {

+ DEBUG_LOGGER.info("TimeSeries meta data {} is filter by bloomFilter!", key);

+ }

+ return null;

}

- return null;

}

TsFileSequenceReader reader =

FileReaderManager.getInstance().get(key.filePath, true);

List<TimeseriesMetadata> timeSeriesMetadataList =

diff --git a/server/src/main/java/org/apache/iotdb/db/engine/compaction/CompactionTaskManager.java b/server/src/main/java/org/apache/iotdb/db/engine/compaction/CompactionTaskManager.java

index 8eac781..54fbaa6 100644

--- a/server/src/main/java/org/apache/iotdb/db/engine/compaction/CompactionTaskManager.java

+++ b/server/src/main/java/org/apache/iotdb/db/engine/compaction/CompactionTaskManager.java

@@ -23,10 +23,17 @@ import org.apache.iotdb.db.concurrent.IoTDBThreadPoolFactory;

import org.apache.iotdb.db.concurrent.ThreadName;

import org.apache.iotdb.db.concurrent.threadpool.WrappedScheduledExecutorService;

import org.apache.iotdb.db.conf.IoTDBDescriptor;

+import org.apache.iotdb.db.engine.compaction.cross.AbstractCrossSpaceCompactionTask;

+import org.apache.iotdb.db.engine.compaction.inner.AbstractInnerSpaceCompactionTask;

import org.apache.iotdb.db.engine.compaction.task.AbstractCompactionTask;

import org.apache.iotdb.db.service.IService;

import org.apache.iotdb.db.service.ServiceType;

+import org.apache.iotdb.db.service.metrics.Metric;

+import org.apache.iotdb.db.service.metrics.MetricsService;

+import org.apache.iotdb.db.service.metrics.Tag;

import org.apache.iotdb.db.utils.TestOnly;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

+import org.apache.iotdb.metrics.type.Gauge;

import com.google.common.collect.MinMaxPriorityQueue;

import org.slf4j.Logger;

@@ -192,6 +199,12 @@ public class CompactionTaskManager implements IService {

if (!candidateCompactionTaskQueue.contains(compactionTask)

&& !runningCompactionTaskList.contains(compactionTask)) {

candidateCompactionTaskQueue.add(compactionTask);

+

+ // add metrics

+ if (MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ addMetrics(compactionTask, true, false);

+ }

+

return true;

}

return false;

@@ -206,15 +219,53 @@ public class CompactionTaskManager implements IService {

< IoTDBDescriptor.getInstance().getConfig().getConcurrentCompactionThread()

&& candidateCompactionTaskQueue.size() > 0) {

AbstractCompactionTask task = candidateCompactionTaskQueue.poll();

+

+ // add metrics

+ if (MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ addMetrics(task, false, false);

+ }

+

if (task != null && task.checkValidAndSetMerging()) {

submitTask(task.getFullStorageGroupName(), task.getTimePartition(), task);

runningCompactionTaskList.add(task);

+

+ // add metrics

+ if (MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ addMetrics(task, true, true);

+ }

}

}

}

+ private void addMetrics(AbstractCompactionTask task, boolean isAdd, boolean isRunning) {

+ String taskType = "unknown";

+ if (task instanceof AbstractInnerSpaceCompactionTask) {

+ taskType = "inner";

+ } else if (task instanceof AbstractCrossSpaceCompactionTask) {

+ taskType = "cross";

+ }

+ Gauge gauge =

+ MetricsService.getInstance()

+ .getMetricManager()

+ .getOrCreateGauge(

+ Metric.QUEUE.toString(),

+ Tag.NAME.toString(),

+ "compaction_" + taskType,

+ Tag.STATUS.toString(),

+ isRunning ? "running" : "waiting");

+ if (isAdd) {

+ gauge.incr(1L);

+ } else {

+ gauge.decr(1L);

+ }

+ }

+

public synchronized void removeRunningTaskFromList(AbstractCompactionTask task) {

runningCompactionTaskList.remove(task);

+ // add metrics

+ if (MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ addMetrics(task, false, true);

+ }

}

/**

diff --git a/server/src/main/java/org/apache/iotdb/db/engine/compaction/task/AbstractCompactionTask.java b/server/src/main/java/org/apache/iotdb/db/engine/compaction/task/AbstractCompactionTask.java

index 4bf4d46..ff27c1d 100644

--- a/server/src/main/java/org/apache/iotdb/db/engine/compaction/task/AbstractCompactionTask.java

+++ b/server/src/main/java/org/apache/iotdb/db/engine/compaction/task/AbstractCompactionTask.java

@@ -23,11 +23,16 @@ import org.apache.iotdb.db.engine.compaction.CompactionScheduler;

import org.apache.iotdb.db.engine.compaction.CompactionTaskManager;

import org.apache.iotdb.db.engine.compaction.cross.inplace.InplaceCompactionRecoverTask;

import org.apache.iotdb.db.engine.compaction.inner.sizetiered.SizeTieredCompactionRecoverTask;

+import org.apache.iotdb.db.service.metrics.Metric;

+import org.apache.iotdb.db.service.metrics.MetricsService;

+import org.apache.iotdb.db.service.metrics.Tag;

+import org.apache.iotdb.metrics.config.MetricConfigDescriptor;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import java.util.concurrent.Callable;

+import java.util.concurrent.TimeUnit;

import java.util.concurrent.atomic.AtomicInteger;

/**

@@ -53,6 +58,7 @@ public abstract class AbstractCompactionTask implements Callable<Void> {

@Override

public Void call() throws Exception {

+ long startTime = System.currentTimeMillis();

currentTaskNum.incrementAndGet();

try {

doCompaction();

@@ -66,6 +72,18 @@ public abstract class AbstractCompactionTask implements Callable<Void> {

}

this.currentTaskNum.decrementAndGet();

}

+

+ if (MetricConfigDescriptor.getInstance().getMetricConfig().getEnableMetric()) {

+ MetricsService.getInstance()

+ .getMetricManager()

+ .timer(