This is an automated email from the ASF dual-hosted git repository.

xingtanzjr pushed a commit to branch rel/1.2

in repository https://gitbox.apache.org/repos/asf/iotdb.git

The following commit(s) were added to refs/heads/rel/1.2 by this push:

new f2e3e7ae082 Update configuration and remove FREQ encoding (#10169) (#10185)

f2e3e7ae082 is described below

commit f2e3e7ae0829ead25e35e63886d1ea2984c3e1ed

Author: Haonan <[email protected]>

AuthorDate: Sat Jun 17 14:35:59 2023 +0800

Update configuration and remove FREQ encoding (#10169) (#10185)

---

.../resources/conf/iotdb-confignode.properties | 34 +-

docs/UserGuide/API/InfluxDB-Protocol.md | 344 --------------------

docs/UserGuide/Data-Concept/Encoding.md | 22 +-

docs/UserGuide/Reference/Common-Config-Manual.md | 81 +----

docs/UserGuide/Reference/DataNode-Config-Manual.md | 2 +-

docs/zh/UserGuide/API/InfluxDB-Protocol.md | 347 ---------------------

docs/zh/UserGuide/Data-Concept/Encoding.md | 22 +-

.../zh/UserGuide/Reference/Common-Config-Manual.md | 83 +----

.../UserGuide/Reference/DataNode-Config-Manual.md | 2 +-

.../org/apache/iotdb/db/it/IoTDBEncodingIT.java | 59 ----

iotdb-client/client-cpp/src/main/Session.h | 1 -

.../client-py/iotdb/utils/IoTDBConstants.py | 1 -

.../iotdb/flink/tsfile/util/TSFileConfigUtil.java | 1 -

.../util/TSFileConfigUtilCompletenessTest.java | 2 -

.../resources/conf/iotdb-common.properties | 29 +-

.../iotdb/commons/conf/CommonDescriptor.java | 4 -

.../resources/conf/iotdb-datanode.properties | 36 +--

.../java/org/apache/iotdb/db/conf/IoTDBConfig.java | 29 +-

.../org/apache/iotdb/db/conf/IoTDBDescriptor.java | 24 --

.../java/org/apache/iotdb/db/service/DataNode.java | 3 -

.../iotdb/db/service/InfluxDBRPCService.java | 109 -------

.../iotdb/db/service/InfluxDBRPCServiceMBean.java | 21 --

.../org/apache/iotdb/db/utils/SchemaUtils.java | 2 -

site/src/main/.vuepress/sidebar/en.ts | 1 -

site/src/main/.vuepress/sidebar/zh.ts | 1 -

tsfile/pom.xml | 4 -

.../iotdb/tsfile/common/conf/TSFileConfig.java | 20 --

.../iotdb/tsfile/common/conf/TSFileDescriptor.java | 3 -

.../iotdb/tsfile/encoding/decoder/Decoder.java | 2 -

.../iotdb/tsfile/encoding/decoder/FreqDecoder.java | 144 ---------

.../iotdb/tsfile/encoding/encoder/FreqEncoder.java | 317 -------------------

.../tsfile/encoding/encoder/TSEncodingBuilder.java | 64 ----

.../tsfile/file/metadata/TimeseriesMetadata.java | 2 -

.../tsfile/file/metadata/enums/TSEncoding.java | 3 +-

.../apache/iotdb/tsfile/utils/BitConstructor.java | 94 ------

.../org/apache/iotdb/tsfile/utils/BitReader.java | 70 -----

.../tsfile/encoding/decoder/FreqDecoderTest.java | 161 ----------

37 files changed, 37 insertions(+), 2107 deletions(-)

diff --git a/confignode/src/assembly/resources/conf/iotdb-confignode.properties b/confignode/src/assembly/resources/conf/iotdb-confignode.properties

index 726c0264b31..53bc6cbd0a0 100644

--- a/confignode/src/assembly/resources/conf/iotdb-confignode.properties

+++ b/confignode/src/assembly/resources/conf/iotdb-confignode.properties

@@ -120,7 +120,7 @@ cn_target_config_node_list=127.0.0.1:10710

# The reporters of metric module to report metrics

# If there are more than one reporter, please separate them by commas ",".

-# Options: [JMX, PROMETHEUS, IOTDB]

+# Options: [JMX, PROMETHEUS]

# Datatype: String

# cn_metric_reporter_list=

@@ -140,34 +140,4 @@ cn_target_config_node_list=127.0.0.1:10710

# The port of prometheus reporter of metric module

# Datatype: int

-# cn_metric_prometheus_reporter_port=9091

-

-# The host of IoTDB reporter of metric module

-# Could set 127.0.0.1(for local test) or ipv4 address

-# Datatype: String

-# cn_metric_iotdb_reporter_host=127.0.0.1

-

-# The port of IoTDB reporter of metric module

-# Datatype: int

-# cn_metric_iotdb_reporter_port=6667

-

-# The username of IoTDB reporter of metric module

-# Datatype: String

-# cn_metric_iotdb_reporter_username=root

-

-# The password of IoTDB reporter of metric module

-# Datatype: String

-# cn_metric_iotdb_reporter_password=root

-

-# The max connection number of IoTDB reporter of metric module

-# Datatype: int

-# cn_metric_iotdb_reporter_max_connection_number=3

-

-# The location of IoTDB reporter of metric module

-# The metrics will write into root.__system.${location}

-# Datatype: String

-# cn_metric_iotdb_reporter_location=metric

-

-# The push period of IoTDB reporter of metric module in second

-# Datatype: int

-# cn_metric_iotdb_reporter_push_period=15

\ No newline at end of file

+# cn_metric_prometheus_reporter_port=9091

\ No newline at end of file

diff --git a/docs/UserGuide/API/InfluxDB-Protocol.md b/docs/UserGuide/API/InfluxDB-Protocol.md

deleted file mode 100644

index 1bb0a5a15e5..00000000000

--- a/docs/UserGuide/API/InfluxDB-Protocol.md

+++ /dev/null

@@ -1,344 +0,0 @@

-<!--

-

- Licensed to the Apache Software Foundation (ASF) under one

- or more contributor license agreements. See the NOTICE file

- distributed with this work for additional information

- regarding copyright ownership. The ASF licenses this file

- to you under the Apache License, Version 2.0 (the

- "License"); you may not use this file except in compliance

- with the License. You may obtain a copy of the License at

-

- http://www.apache.org/licenses/LICENSE-2.0

-

- Unless required by applicable law or agreed to in writing,

- software distributed under the License is distributed on an

- "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

- KIND, either express or implied. See the License for the

- specific language governing permissions and limitations

- under the License.

-

--->

-

-## 0. Import Dependency

-

-```xml

- <dependency>

- <groupId>org.apache.iotdb</groupId>

- <artifactId>influxdb-protocol</artifactId>

- <version>1.0.0</version>

- </dependency>

-```

-

-Here are some [examples](https://github.com/apache/iotdb/blob/master/example/influxdb-protocol-example/src/main/java/org/apache/iotdb/influxdb/InfluxDBExample.java) of connecting IoTDB using the InfluxDB-Protocol adapter.

-

-## 1. Switching Scheme

-

-If your original service code for accessing InfluxDB is as follows:

-

-```java

-InfluxDB influxDB = InfluxDBFactory.connect(openurl, username, password);

-```

-

-You only need to replace the InfluxDBFactory with **IoTDBInfluxDBFactory** to switch the business to IoTDB:

-

-```java

-InfluxDB influxDB = IoTDBInfluxDBFactory.connect(openurl, username, password);

-```

-

-## 2. Conceptual Design

-

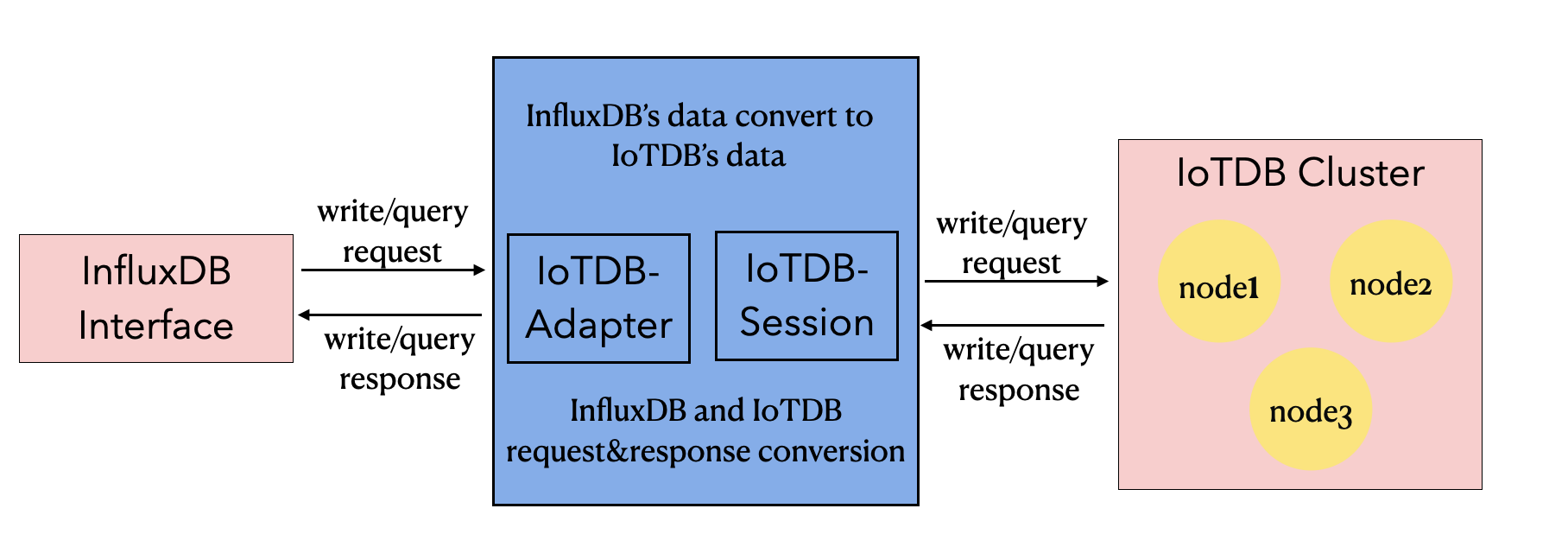

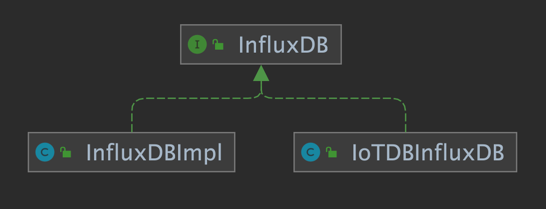

-### 2.1 InfluxDB-Protocol Adapter

-

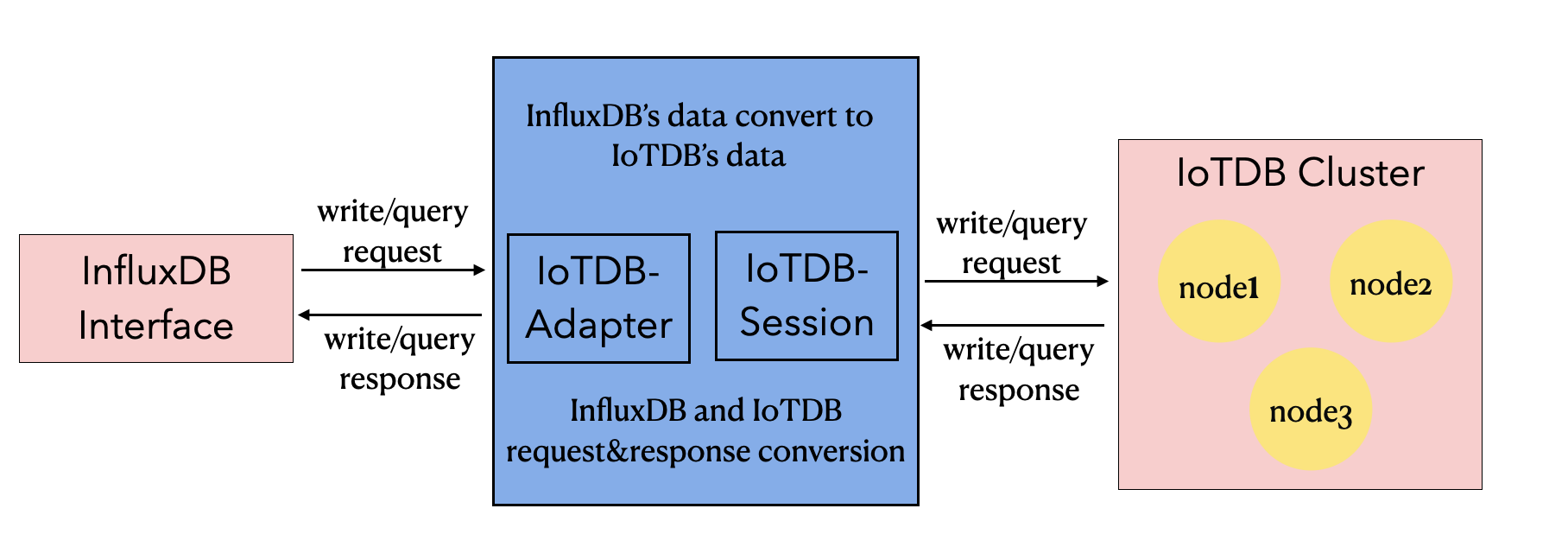

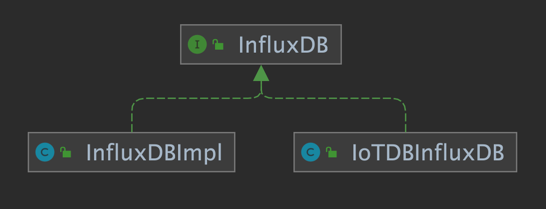

-Based on the IoTDB Java ServiceProvider interface, the adapter implements the 'interface InfluxDB' of the java interface of InfluxDB, and provides users with all the interface methods of InfluxDB. End users can use the InfluxDB protocol to initiate write and read requests to IoTDB without perception.

-

-

-

-

-

-

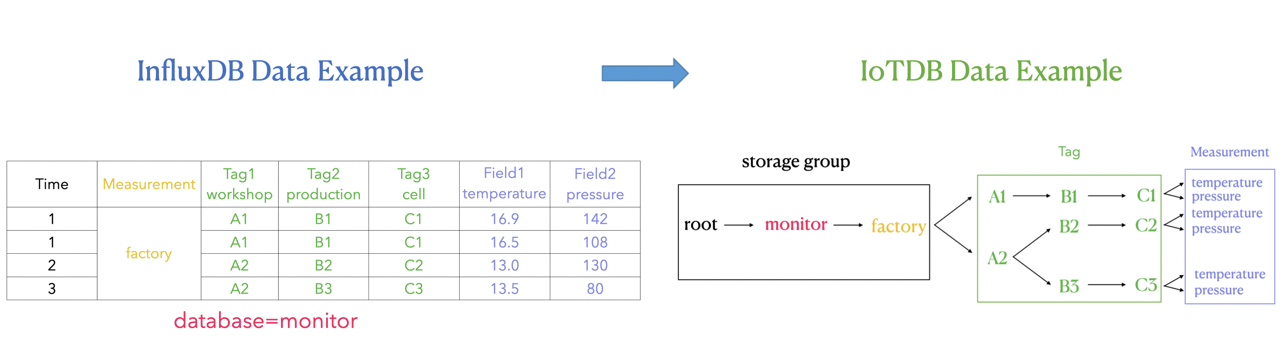

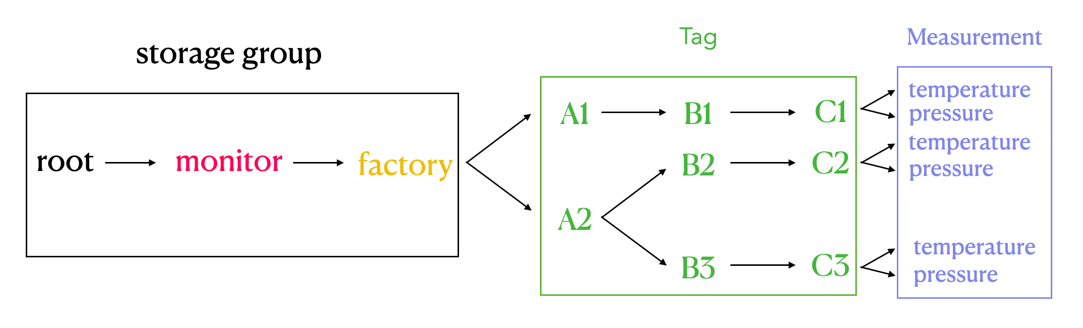

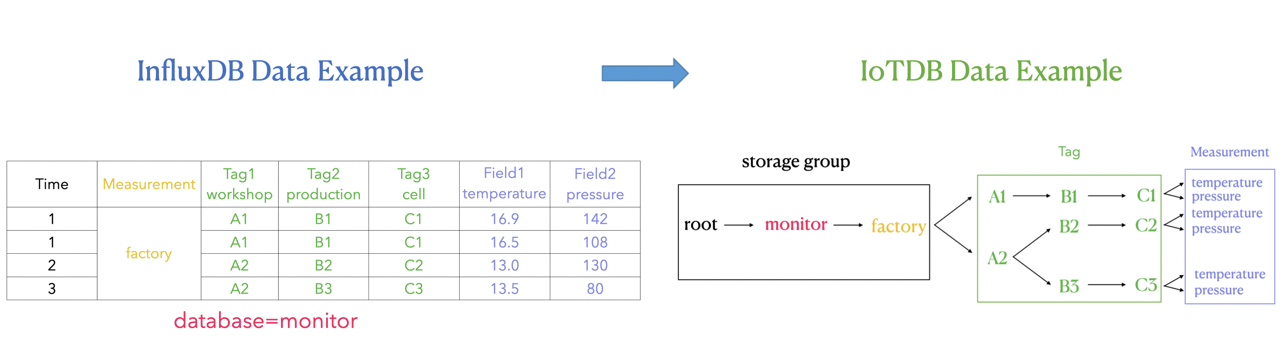

-### 2.2 Metadata Format Conversion

-The metadata of InfluxDB is tag field model, and the metadata of IoTDB is tree model. In order to make the adapter compatible with the InfluxDB protocol, the metadata model of InfluxDB needs to be transformed into the metadata model of IoTDB.

-

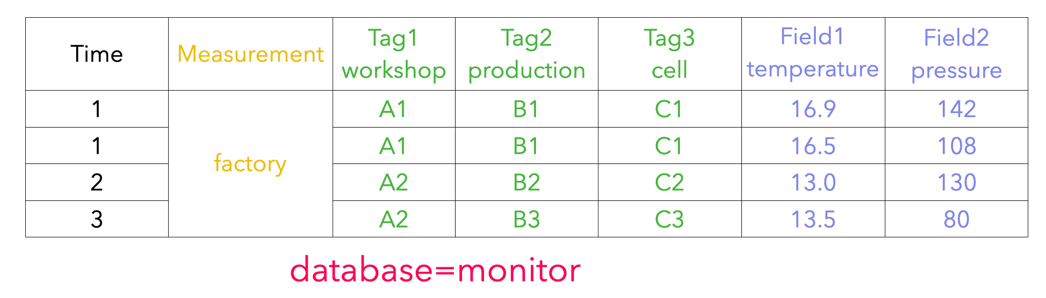

-#### 2.2.1 InfluxDB Metadata

-

-1. database: database name.

-2. measurement: measurement name.

-3. tags: various indexed attributes.

-4. fields: various record values(attributes without index).

-

-

-

-#### 2.2.2 IoTDB Metadata

-

-1. database: database name.

-2. path(time series ID): storage path.

-3. measurement: physical quantity.

-

-

-

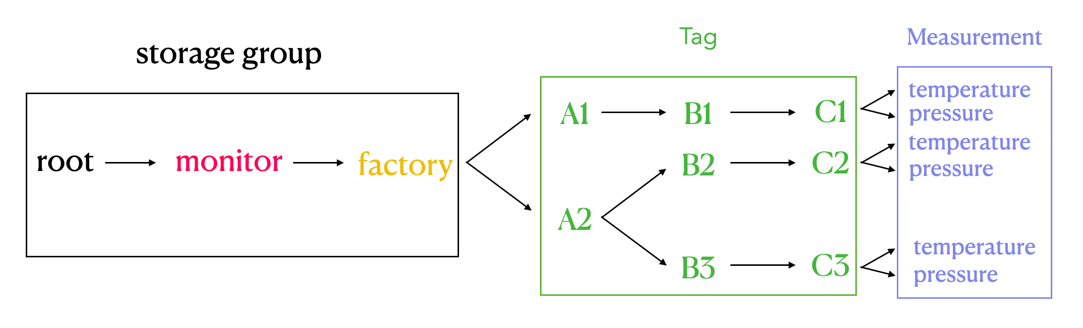

-#### 2.2.3 Mapping relationship between the two

-

-The mapping relationship between InfluxDB metadata and IoTDB metadata is as follows:

-1. The database and measurement in InfluxDB are combined as the database in IoTDB.

-2. The field key in InfluxDB is used as the measurement path in IoTDB, and the field value in InfluxDB is the measured point value recorded under the path.

-3. Tag in InfluxDB is expressed by the path between database and measurement in IoTDB. The tag key of InfluxDB is implicitly expressed by the order of the path between database and measurement, and the tag value is recorded as the name of the path in the corresponding order.

-

-The transformation relationship from InfluxDB metadata to IoTDB metadata can be represented by the following publicity:

-

-`root.{database}.{measurement}.{tag value 1}.{tag value 2}...{tag value N-1}.{tag value N}.{field key}`

-

-

-

-As shown in the figure above, it can be seen that:

-

-In IoTDB, we use the path between database and measurement to express the concept of InfluxDB tag, which is the part of the green box on the right in the figure.

-

-Each layer between database and measurement represents a tag. If the number of tag keys is n, the number of layers of the path between database and measurement is n. We sequentially number each layer between database and measurement, and each sequence number corresponds to a tag key one by one. At the same time, we use the **path name** of each layer between database and measurement to remember tag value. Tag key can find the tag value under the corresponding path level through its own s [...]

-

-#### 2.2.4 Key Problem

-

-In the SQL statement of InfluxDB, the different order of tags does not affect the actual execution .

-

-For example: `insert factory, workshop=A1, production=B1, temperature=16.9` and `insert factory, production=B1, workshop=A1, temperature=16.9` have the same meaning (and execution result) of the two InfluxDB SQL.

-

-However, in IoTDB, the above inserted data points can be stored in `root.monitor.factory.A1.B1.temperature` can also be stored in `root.monitor.factory.B1.A1.temperature`. Therefore, the order of the tags of the InfluxDB stored in the IoTDB path needs special consideration because `root.monitor.factory.A1.B1.temperature` and

-

-`root.monitor.factory.B1.A1.temperature` is two different sequences. We can think that iotdb metadata model is "sensitive" to the processing of tag order.

-

-Based on the above considerations, we also need to record the hierarchical order of each tag in the IoTDB path in the IoTDB, as to ensure that the adapter can only operate on a time series in the IoTDB as long as the SQL expresses operations on the same time series, regardless of the order in which the tags appear in the InfluxDB SQL.

-

-Another problem that needs to be considered here is how to persist the tag key and corresponding order relationship of InfluxDB into the IoTDB database to ensure that relevant information will not be lost.

-

-**Solution:**

-

-**The form of tag key correspondence in memory**

-

-Maintain the order of tags at the IoTDB path level by using the map structure of `Map<Measurement,Map<Tag key, order>>` in memory.

-

-``` java

- Map<String, Map<String, Integer>> measurementTagOrder

-```

-

-It can be seen that map is a two-tier structure.

-

-The key of the first layer is an InfluxDB measurement of string type, and the value of the first layer is a Map<string,Integer> structure.

-

-The key of the second layer is the InfluxDB tag key of string type, and the value of the second layer is the tag order of Integer type, that is, the order of tags at the IoTDB path level.

-

-When in use, you can first locate the tag through the InfluxDB measurement, then locate the tag through the InfluxDB tag key, and finally get the order of tags at the IoTDB path level.

-

-**Persistence scheme of tag key correspondence order**

-

-Database is `root.TAG_ Info`, using `database_name`,`measurement_ name`, `tag_ Name ` and ` tag_ Order ` under the database to store tag key and its corresponding order relationship by measuring points.

-

-```

-+-----------------------------+---------------------------+------------------------------+----------------------+-----------------------+
-|                         Time|root.TAG_INFO.database_name|root.TAG_INFO.measurement_name|root.TAG_INFO.tag_name|root.TAG_INFO.tag_order|
-+-----------------------------+---------------------------+------------------------------+----------------------+-----------------------+
-|2021-10-12T01:21:26.907+08:00|                    monitor|                       factory|              workshop|                      1|
-|2021-10-12T01:21:27.310+08:00|                    monitor|                       factory|            production|                      2|
-|2021-10-12T01:21:27.313+08:00|                    monitor|                       factory|                  cell|                      3|
-|2021-10-12T01:21:47.314+08:00|                   building|                           cpu|              tempture|                      1|
-+-----------------------------+---------------------------+------------------------------+----------------------+-----------------------+

-```

-

-

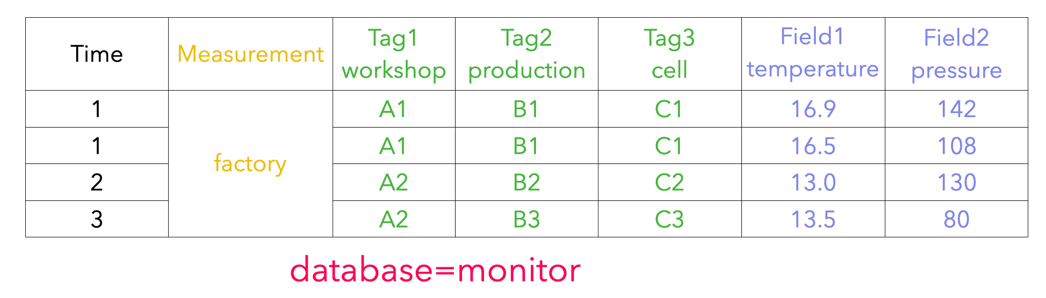

-### 2.3 Example

-

-#### 2.3.1 Insert records

-

-1. Suppose three pieces of data are inserted into the InfluxDB in the following order (database = monitor):

-

- (1)`insert student,name=A,phone=B,sex=C score=99`

-

- (2)`insert student,address=D score=98`

-

- (3)`insert student,name=A,phone=B,sex=C,address=D score=97`

-

-2. Simply explain the timing of the above InfluxDB, and database is monitor; Measurement is student; Tag is name, phone, sex and address respectively; Field is score.

-

-The actual storage of the corresponding InfluxDB is:

-

-```
-time                address name phone sex socre
----                 ------- ---- ----- --- -----
-1633971920128182000         A    B     C   99
-1633971947112684000 D                      98
-1633971963011262000 D       A    B     C   97
-```

-

-3. The process of inserting three pieces of data in sequence by IoTDB is as follows:

-

- (1) When inserting the first piece of data, we need to update the three new tag keys to the table. The table of the record tag sequence corresponding to IoTDB is:

-

- | database | measurement | tag_key | Order |

- | -------- | ----------- | ------- | ----- |

- | monitor | student | name | 0 |

- | monitor | student | phone | 1 |

- | monitor | student | sex | 2 |

-

- (2) When inserting the second piece of data, since there are already three tag keys in the table recording the tag order, it is necessary to update the record with the fourth tag key=address. The table of the record tag sequence corresponding to IoTDB is:

-

- | database | measurement | tag_key | order |

- | -------- | ----------- | ------- | ----- |

- | monitor | student | name | 0 |

- | monitor | student | phone | 1 |

- | monitor | student | sex | 2 |

- | monitor | student | address | 3 |

-

- (3) When inserting the third piece of data, the four tag keys have been recorded at this time, so there is no need to update the record. The table of the record tag sequence corresponding to IoTDB is:

-

- | database | measurement | tag_key | order |

- | -------- | ----------- | ------- | ----- |

- | monitor | student | name | 0 |

- | monitor | student | phone | 1 |

- | monitor | student | sex | 2 |

- | monitor | student | address | 3 |

-

-4. (1) The IoTDB sequence corresponding to the first inserted data is root.monitor.student.A.B.C

-

- (2) The IoTDB sequence corresponding to the second inserted data is root.monitor.student.PH.PH.PH.D (where PH is a placeholder).

-

- It should be noted that since the tag key = address of this data appears the fourth, but it does not have the corresponding first three tag values, it needs to be replaced by a PH. The purpose of this is to ensure that the tag order in each data will not be disordered, which is consistent with the order in the current order table, so that the specified tag can be filtered when querying data.

-

- (3) The IoTDB sequence corresponding to the second inserted data is root.monitor.student.A.B.C.D

-

- The actual storage of the corresponding IoTDB is:

-

-```

-+-----------------------------+--------------------------------+-------------------------------------+----------------------------------+
-|                         Time|root.monitor.student.A.B.C.score|root.monitor.student.PH.PH.PH.D.score|root.monitor.student.A.B.C.D.score|
-+-----------------------------+--------------------------------+-------------------------------------+----------------------------------+
-|2021-10-12T01:21:26.907+08:00|                              99|                                 NULL|                              NULL|
-|2021-10-12T01:21:27.310+08:00|                            NULL|                                   98|                              NULL|
-|2021-10-12T01:21:27.313+08:00|                            NULL|                                 NULL|                                97|
-+-----------------------------+--------------------------------+-------------------------------------+----------------------------------+

-```

-

-5. If the insertion order of the above three data is different, we can see that the corresponding actual path has changed, because the order of tags in the InfluxDB data has changed, and the order of the corresponding path nodes in IoTDB has also changed.

-

-However, this will not affect the correctness of the query, because once the tag order of InfluxDB is determined, the query will also filter the tag values according to the order recorded in this order table. Therefore, the correctness of the query will not be affected.

-

-#### 2.3.2 Query Data

-

-1. Query the data of phone = B in student. In database = monitor, measurement = student, the order of tag = phone is 1, and the maximum order is 3. The query corresponding to IoTDB is:

-

- ```sql

- select * from root.monitor.student.*.B

- ```

-

-2. Query the data with phone = B and score > 97 in the student. The query corresponding to IoTDB is:

-

- ```sql

- select * from root.monitor.student.*.B where score>97

- ```

-

-3. Query the data of the student with phone = B and score > 97 in the last seven days. The query corresponding to IoTDB is:

-

- ```sql

- select * from root.monitor.student.*.B where score>97 and time > now()-7d

- ```

-

-4. Query the name = a or score > 97 in the student. Since the tag is stored in the path, there is no way to complete the **or** semantic query of tag and field at the same time with one query. Therefore, multiple queries or operation union set are required. The query corresponding to IoTDB is:

-

- ```sql

- select * from root.monitor.student.A

- select * from root.monitor.student where score>97

- ```

- Finally, manually combine the results of the above two queries.

-

-5. Query the student (name = a or phone = B or sex = C) with a score > 97. Since the tag is stored in the path, there is no way to use one query to complete the **or** semantics of the tag. Therefore, multiple queries or operations are required to merge. The query corresponding to IoTDB is:

-

- ```sql

- select * from root.monitor.student.A where score>97

- select * from root.monitor.student.*.B where score>97

- select * from root.monitor.student.*.*.C where score>97

- ```

- Finally, manually combine the results of the above three queries.

-

-## 3. Support

-

-### 3.1 InfluxDB Version Support

-

-Currently, supports InfluxDB 1.x version, which does not support InfluxDB 2.x version.

-

-The Maven dependency of `influxdb-java` supports 2.21 +, and the lower version is not tested.

-

-

-### 3.2 Function Interface Support

-

-Currently, supports interface functions are as follows:

-

-```java

-public Pong ping();

-

-public String version();

-

-public void flush();

-

-public void close();

-

-public InfluxDB setDatabase(final String database);

-

-public QueryResult query(final Query query);

-

-public void write(final Point point);

-

-public void write(final String records);

-

-public void write(final List<String> records);

-

-public void write(final String database,final String retentionPolicy,final Point point);

-

-public void write(final int udpPort,final Point point);

-

-public void write(final BatchPoints batchPoints);

-

-public void write(final String database,final String retentionPolicy,

-final ConsistencyLevel consistency,final String records);

-

-public void write(final String database,final String retentionPolicy,

-final ConsistencyLevel consistency,final TimeUnit precision,final String records);

-

-public void write(final String database,final String retentionPolicy,

-final ConsistencyLevel consistency,final List<String> records);

-

-public void write(final String database,final String retentionPolicy,

-final ConsistencyLevel consistency,final TimeUnit precision,final List<String> records);

-

-public void write(final int udpPort,final String records);

-

-public void write(final int udpPort,final List<String> records);

-```

-

-### 3.3 Query Syntax Support

-

-The currently supported query SQL syntax is:

-

-```sql

-SELECT <field_key>[, <field_key>, <tag_key>]

-FROM <measurement_name>

-WHERE <conditional_expression > [( AND | OR) <conditional_expression > [...]]

-```

-

-WHERE clause supports `conditional_expressions` on `field`,`tag` and `timestamp`.

-

-#### field

-

-```sql

-field_key <operator> ['string' | boolean | float | integer]

-```

-

-#### tag

-

-```sql

-tag_key <operator> ['tag_value']

-```

-

-#### timestamp

-

-```sql

-timestamp <operator> ['time']

-```

-

-At present, the filter condition of timestamp only supports the expressions related to now(), such as now () - 7d. The specific timestamp is not supported temporarily.

diff --git a/docs/UserGuide/Data-Concept/Encoding.md b/docs/UserGuide/Data-Concept/Encoding.md

index 6f479df368c..b82aae3edb5 100644

--- a/docs/UserGuide/Data-Concept/Encoding.md

+++ b/docs/UserGuide/Data-Concept/Encoding.md

@@ -54,12 +54,6 @@ Usage restrictions: When using GORILLA to encode INT32 data, you need to ensure

DICTIONARY encoding is lossless. It is suitable for TEXT data with low cardinality (i.e. low number of distinct values). It is not recommended to use it for high-cardinality data.

-* FREQ

-

-FREQ encoding is lossy. Based on the idea of transform coding, it transforms the time sequence to the frequency domain and only reserve part of the frequency components with high energy. Thus, it greatly improves the space efficiency with little accuracy loss. It is suitable for data with high energy concentration (especially those with obvious periodicity), not suitable for data with uniformly distributed energy (such as white noise).

-

-> There are two parameters of FREQ encoding in the configuration file: `freq_snr` defines the signal-noise-ratio (SNR). There is a mathematical relationship between SNR and NRMSE as $NRMSE = 10^{-SNR/20}$. Both the compression ratio and accuracy loss decrease when it increases. `freq_block_size` defines the data size in a time-frequency transformation. It is not recommended to modify the default value. The detailed experimental results and analysis of the influences of parameters are in [...]

-

* ZIGZAG

ZIGZAG encoding maps signed integers to unsigned integers so that numbers with a small absolute value (for instance, -1) have a small variant encoded value too. It does this in a way that "zig-zags" back and forth through the positive and negative integers.

@@ -84,14 +78,14 @@ The five encodings described in the previous sections are applicable to differen

The correspondence between the data type and its supported encodings is summarized in the Table below.

-| Data Type | Supported Encoding |
-|:---------:|:-----------------------------------------------------------------:|
-| BOOLEAN | PLAIN, RLE |
-| INT32 | PLAIN, RLE, TS_2DIFF, GORILLA, FREQ, ZIGZAG, CHIMP, SPRINTZ, RLBE |
-| INT64 | PLAIN, RLE, TS_2DIFF, GORILLA, FREQ, ZIGZAG, CHIMP, SPRINTZ, RLBE |
-| FLOAT | PLAIN, RLE, TS_2DIFF, GORILLA, FREQ, CHIMP, SPRINTZ, RLBE |
-| DOUBLE | PLAIN, RLE, TS_2DIFF, GORILLA, FREQ, CHIMP, SPRINTZ, RLBE |
-| TEXT | PLAIN, DICTIONARY |
+| Data Type | Supported Encoding |
+|:---------:|:-----------------------------------------------------------:|
+| BOOLEAN | PLAIN, RLE |
+| INT32 | PLAIN, RLE, TS_2DIFF, GORILLA, ZIGZAG, CHIMP, SPRINTZ, RLBE |
+| INT64 | PLAIN, RLE, TS_2DIFF, GORILLA, ZIGZAG, CHIMP, SPRINTZ, RLBE |
+| FLOAT | PLAIN, RLE, TS_2DIFF, GORILLA, CHIMP, SPRINTZ, RLBE |
+| DOUBLE | PLAIN, RLE, TS_2DIFF, GORILLA, CHIMP, SPRINTZ, RLBE |
+| TEXT | PLAIN, DICTIONARY |

When the data type specified by the user does not correspond to the encoding method, the system will prompt an error.

diff --git a/docs/UserGuide/Reference/Common-Config-Manual.md b/docs/UserGuide/Reference/Common-Config-Manual.md

index 99752e97c02..b0bc78c76a7 100644

--- a/docs/UserGuide/Reference/Common-Config-Manual.md

+++ b/docs/UserGuide/Reference/Common-Config-Manual.md

@@ -592,12 +592,12 @@ Different configuration parameters take effect in the following three ways:

* slow\_query\_threshold

-|Name| slow\_query\_threshold |

-|:---:|:---|

+|Name| slow\_query\_threshold |

+|:---:|:----------------------------------------|

|Description| Time cost(ms) threshold for slow query. |

-|Type| Int32 |

-|Default| 5000 |

-|Effective|Trigger|

+|Type| Int32 |

+|Default| 30000 |

+|Effective| Trigger |

* query\_timeout\_threshold

@@ -799,14 +799,6 @@ Different configuration parameters take effect in the following three ways:

|Default| false |
|Effective| After restarting system |

-* upgrade\_thread\_count
-
-| Name | upgrade\_thread\_count |
-|:---------:|:--------------------------------------------------------------------------------------------------|
-|Description| When there exists old version(v2) TsFile, how many thread will be set up to perform upgrade tasks |
-| Type | Int32 |
-| Default | 1 |
-| Effective | After restarting system |

* device\_path\_cache\_size

@@ -1219,15 +1211,6 @@ Different configuration parameters take effect in the following three ways:

|Default| 128 |

|Effective|hot-load|

-* time\_encoder

-

-| Name | time\_encoder |

-| :---------: | :------------------------------------ |

-| Description | Encoding type of time column |

-| Type | Enum String: “TS_2DIFF”,“PLAIN”,“RLE” |

-| Default | TS_2DIFF |

-| Effective | hot-load |

-

* value\_encoder

| Name | value\_encoder |

@@ -1264,23 +1247,6 @@ Different configuration parameters take effect in the following three ways:

| Default | 0.05 |
| Effective | After restarting system |

-* freq\_snr

-

-| Name | freq\_snr |

-| :---------: | :---------------------------------------------- |

-| Description | Signal-noise-ratio (SNR) of lossy FREQ encoding |

-| Type | Double |

-| Default | 40.0 |

-| Effective | hot-load |

-

-* freq\_block\_size

-

-|Name| freq\_block\_size |

-|:---:|:---|

-|Description| Block size of FREQ encoding. In other words, the number of data points in a time-frequency transformation. To speed up the encoding, it is recommended to be the power of 2. |

-|Type|int32|

-|Default| 1024 |

-|Effective|hot-load|

### Authorization Configuration

@@ -1303,24 +1269,6 @@ Different configuration parameters take effect in the following three ways:

| Default | no |

| Effective | After restarting system |

-* admin\_name

-

-| Name | admin\_name |

-| :---------: | :-------------------------------------------- |

-| Description | The username of admin |

-| Type | String |

-| Default | root |

-| Effective | Only allowed to be modified in first start up |

-

-* admin\_password

-

-| Name | admin\_password |

-| :---------: | :-------------------------------------------- |

-| Description | The password of admin |

-| Type | String |

-| Default | root |

-| Effective | Only allowed to be modified in first start up |

-

* iotdb\_server\_encrypt\_decrypt\_provider

| Name | iotdb\_server\_encrypt\_decrypt\_provider |

@@ -2085,23 +2033,4 @@ Different configuration parameters take effect in the following three ways:

|Default| 5000 |

|Effective| After restarting system |

-### InfluxDB RPC Service Configuration

-

-* enable\_influxdb\_rpc\_service

-

-| Name | enable\_influxdb\_rpc\_service |

-| :---------: | :------------------------------------- |

-| Description | Whether to enable InfluxDB RPC service |

-| Type | Boolean |

-| Default | true |

-| Effective | After restarting system |

-

-* influxdb\_rpc\_port

-

-| Name | influxdb\_rpc\_port |

-| :---------: | :------------------------------------ |

-| Description | The port used by InfluxDB RPC service |

-| Type | int32 |

-| Default | 8086 |

-| Effective | After restarting system |

diff --git a/docs/UserGuide/Reference/DataNode-Config-Manual.md b/docs/UserGuide/Reference/DataNode-Config-Manual.md

index dfb1f8e69ae..a24f3b7bbc0 100644

--- a/docs/UserGuide/Reference/DataNode-Config-Manual.md

+++ b/docs/UserGuide/Reference/DataNode-Config-Manual.md

@@ -112,7 +112,7 @@ The permission definitions are in ${IOTDB\_CONF}/conf/jmx.access.

|:---:|:-----------------------------------------------|

|Description| The client rpc service listens on the address. |

|Type| String |

-|Default| 127.0.0.1 |

+|Default| 0.0.0.0 |

|Effective| After restarting system |

* dn\_rpc\_port

diff --git a/docs/zh/UserGuide/API/InfluxDB-Protocol.md b/docs/zh/UserGuide/API/InfluxDB-Protocol.md

deleted file mode 100644

index 989ddd4379d..00000000000

--- a/docs/zh/UserGuide/API/InfluxDB-Protocol.md

+++ /dev/null

@@ -1,347 +0,0 @@

-<!--

-

- Licensed to the Apache Software Foundation (ASF) under one

- or more contributor license agreements. See the NOTICE file

- distributed with this work for additional information

- regarding copyright ownership. The ASF licenses this file

- to you under the Apache License, Version 2.0 (the

- "License"); you may not use this file except in compliance

- with the License. You may obtain a copy of the License at

-

- http://www.apache.org/licenses/LICENSE-2.0

-

- Unless required by applicable law or agreed to in writing,

- software distributed under the License is distributed on an

- "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

- KIND, either express or implied. See the License for the

- specific language governing permissions and limitations

- under the License.

-

--->

-

-## 0.引入依赖

-

-```xml

- <dependency>

- <groupId>org.apache.iotdb</groupId>

- <artifactId>influxdb-protocol</artifactId>

- <version>1.0.0</version>

- </dependency>

-```

-

-这里是一些使用 InfluxDB-Protocol 适配器连接 IoTDB

的[示例](https://github.com/apache/iotdb/blob/master/example/influxdb-protocol-example/src/main/java/org/apache/iotdb/influxdb/InfluxDBExample.java)

-

-

-## 1.切换方案

-

-假如您原先接入 InfluxDB 的业务代码如下:

-

-```java

-InfluxDB influxDB = InfluxDBFactory.connect(openurl, username, password);

-```

-

-您只需要将 InfluxDBFactory 替换为 **IoTDBInfluxDBFactory** 即可实现业务向 IoTDB 的切换:

-

-```java

-InfluxDB influxDB = IoTDBInfluxDBFactory.connect(openurl, username, password);

-```

-

-## 2.方案设计

-

-### 2.1 InfluxDB-Protocol适配器

-

-该适配器以 IoTDB Java ServiceProvider 接口为底层基础,实现了 InfluxDB 的 Java 接口 `interface

InfluxDB`,对用户提供了所有 InfluxDB 的接口方法,最终用户可以无感知地使用 InfluxDB 协议向 IoTDB 发起写入和读取请求。

-

-

-

-

-

-

-### 2.2 元数据格式转换

-

-InfluxDB 的元数据是 tag-field 模型,IoTDB 的元数据是树形模型。为了使适配器能够兼容 InfluxDB 协议,需要把

InfluxDB 的元数据模型转换成 IoTDB 的元数据模型。

-

-#### 2.2.1 InfluxDB 元数据

-

-1. database: 数据库名。

-2. measurement: 测量指标名。

-3. tags : 各种有索引的属性。

-4. fields : 各种记录值(没有索引的属性)。

-

-

-

-#### 2.2.2 IoTDB 元数据

-

-1. database: 数据库。

-2. path(time series ID):存储路径。

-3. measurement: 物理量。

-

-

-

-#### 2.2.3 两者映射关系

-

-InfluxDB 元数据和 IoTDB 元数据有着如下的映射关系:

-1. InfluxDB 中的 database 和 measurement 组合起来作为 IoTDB 中的 database。

-2. InfluxDB 中的 field key 作为 IoTDB 中 measurement 路径,InfluxDB 中的 field value

即是该路径下记录的测点值。

-3. InfluxDB 中的 tag 在 IoTDB 中使用 database 和 measurement 之间的路径表达。InfluxDB 的 tag

key 由 database 和 measurement 之间路径的顺序隐式表达,tag value 记录为对应顺序的路径的名称。

-

-InfluxDB 元数据向 IoTDB 元数据的转换关系可以由下面的公示表示:

-

-`root.{database}.{measurement}.{tag value 1}.{tag value 2}...{tag value

N-1}.{tag value N}.{field key}`

-

-

-

-如上图所示,可以看出:

-

-我们在 IoTDB 中使用 database 和 measurement 之间的路径来表达 InfluxDB tag 的概念,也就是图中右侧绿色方框的部分。

-

-database 和 measurement 之间的每一层都代表一个 tag。如果 tag key 的数量为 N,那么 database 和 measurement 之间的路径的层数就是 N。我们对 database 和 measurement 之间的每一层进行顺序编号,每一个序号都和一个 tag key 一一对应。同时,我们使用 database 和 measurement 之间每一层 **路径的名字** 来记 tag value,tag key 可以通过自身的序号找到对应路径层级下的 tag value.

-

-#### 2.2.4 关键问题

-

-在 InfluxDB 的 SQL 语句中,tag 出现的顺序的不同并不会影响实际的执行结果。

-

-例如:`insert factory, workshop=A1, production=B1 temperature=16.9` 和 `insert factory, production=B1, workshop=A1 temperature=16.9` 两条 InfluxDB SQL 的含义(以及执行结果)相等。

-

-但在 IoTDB 中,上述插入的数据点可以存储在 `root.monitor.factory.A1.B1.temperature` 下,也可以存储在 `root.monitor.factory.B1.A1.temperature` 下。因此,IoTDB 路径中储存的 InfluxDB 的 tag 的顺序是需要被特别考虑的,因为 `root.monitor.factory.A1.B1.temperature` 和

-`root.monitor.factory.B1.A1.temperature` 是两条不同的序列。我们可以认为,IoTDB 元数据模型对 tag 顺序的处理是“敏感”的。

-

-基于上述的考虑,我们还需要在 IoTDB 中记录 InfluxDB 每个 tag 对应在 IoTDB 路径中的层级顺序,以确保在执行 InfluxDB SQL 时,不论 InfluxDB SQL 中 tag 出现的顺序如何,只要该 SQL 表达的是对同一个时间序列上的操作,那么适配器都可以唯一对应到 IoTDB 中的一条时间序列上进行操作。

-

-这里还需要考虑的另一个问题是:InfluxDB 的 tag key 及对应顺序关系应该如何持久化到 IoTDB 数据库中,以确保不会丢失相关信息。

-

-**解决方案:**

-

-**tag key 对应顺序关系在内存中的形式**

-

-通过利用内存中的`Map<Measurement, Map<Tag Key, Order>>` 这样一个 Map 结构,来维护 tag 在 IoTDB 路径层级上的顺序。

-

-``` java

- Map<String, Map<String, Integer>> measurementTagOrder

-```

-

-可以看出 Map 是一个两层的结构。

-

-第一层的 Key 是 String 类型的 InfluxDB measurement,第一层的 Value 是一个 <String, Integer> 结构的 Map。

-

-第二层的 Key 是 String 类型的 InfluxDB tag key,第二层的 Value 是 Integer 类型的 tag order,也就是 tag 在 IoTDB 路径层级上的顺序。

-

-使用时,就可以先通过 InfluxDB measurement 定位,再通过 InfluxDB tag key 定位,最后就可以获得 tag 在 IoTDB 路径层级上的顺序了。

-

-**tag key 对应顺序关系的持久化方案**

-

-Database 为`root.TAG_INFO`,分别用 database 下的 `database_name`, `measurement_name`, `tag_name` 和 `tag_order` 测点来存储 tag key及其对应的顺序关系。

-

-```

-+-----------------------------+---------------------------+------------------------------+----------------------+-----------------------+

-|                         Time|root.TAG_INFO.database_name|root.TAG_INFO.measurement_name|root.TAG_INFO.tag_name|root.TAG_INFO.tag_order|

-+-----------------------------+---------------------------+------------------------------+----------------------+-----------------------+

-|2021-10-12T01:21:26.907+08:00|                    monitor|                       factory|              workshop|                      1|

-|2021-10-12T01:21:27.310+08:00|                    monitor|                       factory|            production|                      2|

-|2021-10-12T01:21:27.313+08:00|                    monitor|                       factory|                  cell|                      3|

-|2021-10-12T01:21:47.314+08:00|                   building|                           cpu|              tempture|                      1|

-+-----------------------------+---------------------------+------------------------------+----------------------+-----------------------+

-```

-

-

-

-### 2.3 实例

-

-#### 2.3.1 插入数据

-

-1. 假定按照以下的顺序插入三条数据到 InfluxDB 中 (database=monitor):

-

- (1)`insert student,name=A,phone=B,sex=C score=99`

-

- (2)`insert student,address=D score=98`

-

- (3)`insert student,name=A,phone=B,sex=C,address=D score=97`

-

-2. 简单对上述 InfluxDB 的时序进行解释,database 是 monitor; measurement 是student;tag 分别是 name,phone、sex 和 address;field 是 score。

-

-对应的InfluxDB的实际存储为:

-

-```

-time                address name phone sex socre

-----                ------- ---- ----- --- -----

-1633971920128182000         A    B     C   99

-1633971947112684000 D                      98

-1633971963011262000 D       A    B     C   97

-```

-

-

-3. IoTDB顺序插入三条数据的过程如下:

-

- (1)插入第一条数据时,需要将新出现的三个 tag key 更新到 table 中,IoTDB 对应的记录 tag 顺序的 table 为:

-

- | database | measurement | tag_key | Order |

- | -------- | ----------- | ------- | ----- |

- | monitor | student | name | 0 |

- | monitor | student | phone | 1 |

- | monitor | student | sex | 2 |

-

- (2)插入第二条数据时,由于此时记录 tag 顺序的 table 中已经有了三个 tag key,因此需要将出现的第四个 tag key=address 更新记录。IoTDB 对应的记录 tag 顺序的 table 为:

-

- | database | measurement | tag_key | order |

- | -------- | ----------- | ------- | ----- |

- | monitor | student | name | 0 |

- | monitor | student | phone | 1 |

- | monitor | student | sex | 2 |

- | monitor | student | address | 3 |

-

- (3)插入第三条数据时,此时四个 tag key 都已经记录过,所以不需要更新记录,IoTDB 对应的记录 tag 顺序的 table 为:

-

- | database | measurement | tag_key | order |

- | -------- | ----------- | ------- | ----- |

- | monitor | student | name | 0 |

- | monitor | student | phone | 1 |

- | monitor | student | sex | 2 |

- | monitor | student | address | 3 |

-

-4. (1)第一条插入数据对应 IoTDB 时序为 root.monitor.student.A.B.C

-

- (2)第二条插入数据对应 IoTDB 时序为 root.monitor.student.PH.PH.PH.D (其中PH表示占位符)。

-

- 需要注意的是,由于该条数据的 tag key=address 是第四个出现的,但是自身却没有对应的前三个 tag 值,因此需要用 PH 占位符来代替。这样做的目的是保证每条数据中的 tag 顺序不会乱,是符合当前顺序表中的顺序,从而查询数据的时候可以进行指定 tag 过滤。

-

- (3)第三条插入数据对应 IoTDB 时序为 root.monitor.student.A.B.C.D

-

- 对应的 IoTDB 的实际存储为:

-

-```

-+-----------------------------+--------------------------------+-------------------------------------+----------------------------------+

-|                         Time|root.monitor.student.A.B.C.score|root.monitor.student.PH.PH.PH.D.score|root.monitor.student.A.B.C.D.score|

-+-----------------------------+--------------------------------+-------------------------------------+----------------------------------+

-|2021-10-12T01:21:26.907+08:00|                              99|                                 NULL|                              NULL|

-|2021-10-12T01:21:27.310+08:00|                            NULL|                                   98|                              NULL|

-|2021-10-12T01:21:27.313+08:00|                            NULL|                                 NULL|                                97|

-+-----------------------------+--------------------------------+-------------------------------------+----------------------------------+

-```

-

-5. 如果上面三条数据插入的顺序不一样,我们可以看到对应的实际path路径也就发生了改变,因为InfluxDB数据中的Tag出现顺序发生了变化,所对应的到IoTDB中的path节点顺序也就发生了变化。

-

- 但是这样实际并不会影响查询的正确性,因为一旦Influxdb的Tag顺序确定之后,查询也会按照这个顺序表记录的顺序进行Tag值过滤。所以并不会影响查询的正确性。

-

-#### 2.3.2 查询数据

-

-1. 查询student中phone=B的数据。在database=monitor,measurement=student中tag=phone的顺序为1,order最大值是3,对应到IoTDB的查询为:

-

- ```sql

- select * from root.monitor.student.*.B

- ```

-

-2. 查询student中phone=B且score>97的数据,对应到IoTDB的查询为:

-

- ```sql

- select * from root.monitor.student.*.B where score>97

- ```

-

-3. 查询student中phone=B且score>97且时间在最近七天内的的数据,对应到IoTDB的查询为:

-

- ```sql

- select * from root.monitor.student.*.B where score>97 and time > now()-7d

- ```

-

-

-4. 查询student中name=A或score>97,由于tag存储在路径中,因此没有办法用一次查询同时完成tag和field的**或**语义查询,因此需要多次查询进行或运算求并集,对应到IoTDB的查询为:

-

- ```sql

- select * from root.monitor.student.A

- select * from root.monitor.student where score>97

- ```

- 最后手动对上面两次查询结果求并集。

-

-5. 查询student中(name=A或phone=B或sex=C)且score>97,由于tag存储在路径中,因此没有办法用一次查询完成tag的**或**语义, 因此需要多次查询进行或运算求并集,对应到IoTDB的查询为:

-

- ```sql

- select * from root.monitor.student.A where score>97

- select * from root.monitor.student.*.B where score>97

- select * from root.monitor.student.*.*.C where score>97

- ```

- 最后手动对上面三次查询结果求并集。

-

-## 3 支持情况

-

-### 3.1 InfluxDB版本支持情况

-

-目前支持InfluxDB 1.x 版本,暂不支持InfluxDB 2.x 版本。

-

-`influxdb-java`的maven依赖支持2.21+,低版本未进行测试。

-

-### 3.2 函数接口支持情况

-

-目前支持的接口函数如下:

-

-```java

-public Pong ping();

-

-public String version();

-

-public void flush();

-

-public void close();

-

-public InfluxDB setDatabase(final String database);

-

-public QueryResult query(final Query query);

-

-public void write(final Point point);

-

-public void write(final String records);

-

-public void write(final List<String> records);

-

-public void write(final String database,final String retentionPolicy,final Point point);

-

-public void write(final int udpPort,final Point point);

-

-public void write(final BatchPoints batchPoints);

-

-public void write(final String database,final String retentionPolicy,

-final ConsistencyLevel consistency,final String records);

-

-public void write(final String database,final String retentionPolicy,

-final ConsistencyLevel consistency,final TimeUnit precision,final String records);

-

-public void write(final String database,final String retentionPolicy,

-final ConsistencyLevel consistency,final List<String> records);

-

-public void write(final String database,final String retentionPolicy,

-final ConsistencyLevel consistency,final TimeUnit precision,final List<String> records);

-

-public void write(final int udpPort,final String records);

-

-public void write(final int udpPort,final List<String> records);

-```

-

-### 3.3 查询语法支持情况

-

-目前支持的查询sql语法为

-

-```sql

-SELECT <field_key>[, <field_key>, <tag_key>]

-FROM <measurement_name>

-WHERE <conditional_expression > [( AND | OR) <conditional_expression > [...]]

-```

-

-WHERE子句在`field`,`tag`和`timestamp`上支持`conditional_expressions`.

-

-#### field

-

-```sql

-field_key <operator> ['string' | boolean | float | integer]

-```

-

-#### tag

-

-```sql

-tag_key <operator> ['tag_value']

-```

-

-#### timestamp

-

-```sql

-timestamp <operator> ['time']

-```

-

-目前timestamp的过滤条件只支持now()有关表达式,如:now()-7D,具体的时间戳暂不支持。

diff --git a/docs/zh/UserGuide/Data-Concept/Encoding.md b/docs/zh/UserGuide/Data-Concept/Encoding.md

index e3515cfd291..5da4a73fe92 100644

--- a/docs/zh/UserGuide/Data-Concept/Encoding.md

+++ b/docs/zh/UserGuide/Data-Concept/Encoding.md

@@ -53,12 +53,6 @@ GORILLA 编码是一种无损编码,它比较适合编码前后值比较接近

字典编码是一种无损编码。它适合编码基数小的数据(即数据去重后唯一值数量小)。不推荐用于基数大的数据。

-* 频域编码 (FREQ)

-

-频域编码是一种有损编码,它基于变换编码的思想,将时序数据变换为频域,仅保留部分高能量的频域分量,以少许的精度损失为代价大幅提高空间效率。该编码适合于频域能量分布较为集中的数据(特别是具有明显周期性的数据),不适合能量分布均匀的数据(如白噪声)。

-

-> 频域编码在配置文件中包括两个参数:`freq_snr`指定了编码的信噪比(与标准均方根误差的关系为$NRMSE=10^{-SNR/20}$),该参数增大会同时降低压缩比和精度损失,请根据实际应用的需要进行设置;`freq_block_size`指定了编码进行时频域变换的分组大小,推荐不对默认值进行修改。参数影响的实验结果和分析详见设计文档。

-

* ZIGZAG 编码

ZigZag编码将有符号整型映射到无符号整型,适合比较小的整数。

@@ -84,14 +78,14 @@ RLBE编码是一种无损编码,将差分编码,位填充编码,游程长

前文介绍的五种编码适用于不同的数据类型,若对应关系错误,则无法正确创建时间序列。数据类型与支持其编码的编码方式对应关系总结如下表所示。

-| 数据类型 | 支持的编码 |

-|:---------:|:-----------------------------------------------------------------:|

-| BOOLEAN | PLAIN, RLE |

-| INT32 | PLAIN, RLE, TS_2DIFF, GORILLA, FREQ, ZIGZAG, CHIMP, SPRINTZ, RLBE |

-| INT64 | PLAIN, RLE, TS_2DIFF, GORILLA, FREQ, ZIGZAG, CHIMP, SPRINTZ, RLBE |

-| FLOAT | PLAIN, RLE, TS_2DIFF, GORILLA, FREQ, CHIMP, SPRINTZ, RLBE |

-| DOUBLE | PLAIN, RLE, TS_2DIFF, GORILLA, FREQ, CHIMP, SPRINTZ, RLBE |

-| TEXT | PLAIN, DICTIONARY |

+| 数据类型 | 支持的编码 |

+|:---------:|:-----------------------------------------------------------:|

+| BOOLEAN | PLAIN, RLE |

+| INT32 | PLAIN, RLE, TS_2DIFF, GORILLA, ZIGZAG, CHIMP, SPRINTZ, RLBE |

+| INT64 | PLAIN, RLE, TS_2DIFF, GORILLA, ZIGZAG, CHIMP, SPRINTZ, RLBE |

+| FLOAT | PLAIN, RLE, TS_2DIFF, GORILLA, CHIMP, SPRINTZ, RLBE |

+| DOUBLE | PLAIN, RLE, TS_2DIFF, GORILLA, CHIMP, SPRINTZ, RLBE |

+| TEXT | PLAIN, DICTIONARY |

当用户输入的数据类型与编码方式不对应时,系统会提示错误。如下所示,二阶差分编码不支持布尔类型:

diff --git a/docs/zh/UserGuide/Reference/Common-Config-Manual.md b/docs/zh/UserGuide/Reference/Common-Config-Manual.md

index d5508b5a903..eb657775fab 100644

--- a/docs/zh/UserGuide/Reference/Common-Config-Manual.md

+++ b/docs/zh/UserGuide/Reference/Common-Config-Manual.md

@@ -641,11 +641,11 @@ IoTDB ConfigNode 和 DataNode 的公共配置参数位于 `conf` 目录下。

* slow\_query\_threshold

|名字| slow\_query\_threshold |

-|:---:|:---|

-|描述| 慢查询的时间阈值。单位:毫秒。|

-|类型| Int32 |

-|默认值| 5000 |

-|改后生效方式|热加载|

+|:---:|:-----------------------|

+|描述| 慢查询的时间阈值。单位:毫秒。 |

+|类型| Int32 |

+|默认值| 30000 |

+|改后生效方式| 热加载 |

* query\_timeout\_threshold

@@ -847,15 +847,6 @@ IoTDB ConfigNode 和 DataNode 的公共配置参数位于 `conf` 目录下。

| 默认值 | false |

| 改后生效方式 | 重启服务生效 |

-* upgrade\_thread\_count

-

-| 名字 | upgrade\_thread\_count |

-| :----------: |:--------------------------------|

-| 描述 | 当存在老版本TsFile(v2),执行文件升级任务使用的线程数 |

-| 类型 | Int32 |

-| 默认值 | 1 |

-| 改后生效方式 | 重启服务生效 |

-

* device\_path\_cache\_size

| 名字 | device\_path\_cache\_size |

@@ -1267,15 +1258,6 @@ IoTDB ConfigNode 和 DataNode 的公共配置参数位于 `conf` 目录下。

|默认值| 默认为 2 位。注意:32 位浮点数的十进制精度为 7 位,64 位浮点数的十进制精度为 15 位。如果设置超过机器精度将没有实际意义。 |

|改后生效方式|热加载|

-* time\_encoder

-

-| 名字 | time\_encoder |

-| :----------: | :------------------------------------ |

-| 描述 | 时间列编码方式 |

-| 类型 | 枚举 String: “TS_2DIFF”,“PLAIN”,“RLE” |

-| 默认值 | TS_2DIFF |

-| 改后生效方式 | 热加载 |

-

* value\_encoder

| 名字 | value\_encoder |

@@ -1312,23 +1294,6 @@ IoTDB ConfigNode 和 DataNode 的公共配置参数位于 `conf` 目录下。

|默认值| 1 |

|改后生效方式|热加载|

-* freq\_snr

-

-| 名字 | freq\_snr |

-| :----------: | :--------------------- |

-| 描述 | 有损的FREQ编码的信噪比 |

-| 类型 | Double |

-| 默认值 | 40.0 |

-| 改后生效方式 | 热加载 |

-

-* freq\_block\_size

-

-|名字| freq\_block\_size |

-|:---:|:---|

-|描述| FREQ编码的块大小,即一次时频域变换的数据点个数。为了加快编码速度,建议将其设置为2的幂次。 |

-|类型|int32|

-|默认值| 1024 |

-|改后生效方式|热加载|

#### 授权配置

@@ -1351,24 +1316,6 @@ IoTDB ConfigNode 和 DataNode 的公共配置参数位于 `conf` 目录下。

| 默认值 | 无 |

| 改后生效方式 | 重启服务生效 |

-* admin\_name

-

-| 名字 | admin\_name |

-| :----------: | :--------------------------- |

-| 描述 | 管理员用户名,默认为root |

-| 类型 | String |

-| 默认值 | root |

-| 改后生效方式 | 仅允许在第一次启动服务前修改 |

-

-* admin\_password

-

-| 名字 | admin\_password |

-| :----------: | :--------------------------- |

-| 描述 | 管理员密码,默认为root |

-| 类型 | String |

-| 默认值 | root |

-| 改后生效方式 | 仅允许在第一次启动服务前修改 |

-

* iotdb\_server\_encrypt\_decrypt\_provider

| 名字 | iotdb\_server\_encrypt\_decrypt\_provider |

@@ -2122,23 +2069,3 @@ IoTDB ConfigNode 和 DataNode 的公共配置参数位于 `conf` 目录下。

|类型| int32 |

|默认值| 5000 |

|改后生效方式| 重启生效 |

-

-#### InfluxDB 协议适配器配置

-

-* enable\_influxdb\_rpc\_service

-

-| 名字 | enable\_influxdb\_rpc\_service |

-| :----------: | :--------------------------- |

-| 描述 | 是否开启InfluxDB RPC service |

-| 类型 | Boolean |

-| 默认值 | true |

-| 改后生效方式 | 重启服务生效 |

-

-* influxdb\_rpc\_port

-

-| 名字 | influxdb\_rpc\_port |

-| :----------: | :--------------------------- |

-| 描述 | influxdb rpc service占用端口 |

-| 类型 | int32 |

-| 默认值 | 8086 |

-| 改后生效方式 | 重启服务生效 |

\ No newline at end of file

diff --git a/docs/zh/UserGuide/Reference/DataNode-Config-Manual.md b/docs/zh/UserGuide/Reference/DataNode-Config-Manual.md

index b3c9d194501..24a66877cfb 100644

--- a/docs/zh/UserGuide/Reference/DataNode-Config-Manual.md

+++ b/docs/zh/UserGuide/Reference/DataNode-Config-Manual.md

@@ -95,7 +95,7 @@ IoTDB DataNode 与 Standalone 模式共用一套配置文件,均位于 IoTDB

|:---:|:-----------------|

|描述| 客户端 RPC 服务监听地址 |

|类型| String |

-|默认值| 127.0.0.1 |

+|默认值| 0.0.0.0 |

|改后生效方式| 重启服务生效 |

* dn\_rpc\_port

diff --git a/integration-test/src/test/java/org/apache/iotdb/db/it/IoTDBEncodingIT.java b/integration-test/src/test/java/org/apache/iotdb/db/it/IoTDBEncodingIT.java

index 71e408c5312..aa7b7024677 100644

--- a/integration-test/src/test/java/org/apache/iotdb/db/it/IoTDBEncodingIT.java

+++ b/integration-test/src/test/java/org/apache/iotdb/db/it/IoTDBEncodingIT.java

@@ -36,7 +36,6 @@ import java.sql.SQLException;

import java.sql.Statement;

import static org.junit.Assert.assertEquals;

-import static org.junit.Assert.assertTrue;

import static org.junit.Assert.fail;

@RunWith(IoTDBTestRunner.class)

@@ -279,64 +278,6 @@ public class IoTDBEncodingIT {

}

}

- @Test

- public void testSetTimeEncoderRegularAndValueEncoderFREQ() {

- try (Connection connection = EnvFactory.getEnv().getConnection();

- Statement statement = connection.createStatement()) {

- statement.execute(

- "CREATE TIMESERIES root.db_0.tab0.salary WITH DATATYPE=INT64,ENCODING=FREQ");

- statement.execute("insert into root.db_0.tab0(time,salary) values(1,1100)");

- statement.execute("insert into root.db_0.tab0(time,salary) values(2,1200)");

- statement.execute("insert into root.db_0.tab0(time,salary) values(3,1300)");

- statement.execute("insert into root.db_0.tab0(time,salary) values(4,1400)");

- statement.execute("flush");

-

- int[] groundtruth = new int[] {1100, 1200, 1300, 1400};

- int[] result = new int[4];

- try (ResultSet resultSet = statement.executeQuery("select * from root.db_0.tab0")) {

- int index = 0;

- while (resultSet.next()) {

- int salary = resultSet.getInt("root.db_0.tab0.salary");

- result[index] = salary;

- index++;

- }

- assertTrue(SNR(groundtruth, result, groundtruth.length) > 40);

- }

- } catch (Exception e) {

- e.printStackTrace();

- fail();

- }

- }

-

- @Test

- public void testSetTimeEncoderRegularAndValueEncoderFREQOutofOrder() {

- try (Connection connection = EnvFactory.getEnv().getConnection();

- Statement statement = connection.createStatement()) {

- statement.execute(

- "CREATE TIMESERIES root.db_0.tab0.salary WITH DATATYPE=INT64,ENCODING=FREQ");

- statement.execute("insert into root.db_0.tab0(time,salary) values(1,1200)");

- statement.execute("insert into root.db_0.tab0(time,salary) values(2,1100)");

- statement.execute("insert into root.db_0.tab0(time,salary) values(7,1000)");

- statement.execute("insert into root.db_0.tab0(time,salary) values(4,2200)");

- statement.execute("flush");

-

- int[] groundtruth = new int[] {1200, 1100, 2200, 1000};

- int[] result = new int[4];

- try (ResultSet resultSet = statement.executeQuery("select * from root.db_0.tab0")) {

- int index = 0;

- while (resultSet.next()) {

- int salary = resultSet.getInt("root.db_0.tab0.salary");

- result[index] = salary;

- index++;

- }

- assertTrue(SNR(groundtruth, result, groundtruth.length) > 40);

- }

- } catch (Exception e) {

- e.printStackTrace();

- fail();

- }

- }

-

@Test

public void testSetTimeEncoderRegularAndValueEncoderDictionary() {

try (Connection connection = EnvFactory.getEnv().getConnection();

diff --git a/iotdb-client/client-cpp/src/main/Session.h b/iotdb-client/client-cpp/src/main/Session.h

index b2bdd85521b..3fdc259cae6 100644

--- a/iotdb-client/client-cpp/src/main/Session.h

+++ b/iotdb-client/client-cpp/src/main/Session.h

@@ -180,7 +180,6 @@ namespace TSEncoding {

REGULAR = (char) 7,

GORILLA = (char) 8,

ZIGZAG = (char) 9,

- FREQ = (char) 10,

CHIMP = (char) 11,

SPRINTZ = (char) 12,

RLBE = (char) 13

diff --git a/iotdb-client/client-py/iotdb/utils/IoTDBConstants.py b/iotdb-client/client-py/iotdb/utils/IoTDBConstants.py

index 36a8ceb66bc..fac308b7556 100644

--- a/iotdb-client/client-py/iotdb/utils/IoTDBConstants.py

+++ b/iotdb-client/client-py/iotdb/utils/IoTDBConstants.py

@@ -60,7 +60,6 @@ class TSEncoding(Enum):

REGULAR = 7

GORILLA = 8

ZIGZAG = 9

- FREQ = 10

CHIMP = 11

SPRINTZ = 12

RLBE = 13

diff --git a/iotdb-connector/flink-tsfile-connector/src/main/java/org/apache/iotdb/flink/tsfile/util/TSFileConfigUtil.java b/iotdb-connector/flink-tsfile-connector/src/main/java/org/apache/iotdb/flink/tsfile/util/TSFileConfigUtil.java

index c3be71c07bc..b128628b067 100644

--- a/iotdb-connector/flink-tsfile-connector/src/main/java/org/apache/iotdb/flink/tsfile/util/TSFileConfigUtil.java

+++ b/iotdb-connector/flink-tsfile-connector/src/main/java/org/apache/iotdb/flink/tsfile/util/TSFileConfigUtil.java

@@ -55,7 +55,6 @@ public class TSFileConfigUtil {

globalConfig.setPlaMaxError(config.getPlaMaxError());

globalConfig.setRleBitWidth(config.getRleBitWidth());

globalConfig.setSdtMaxError(config.getSdtMaxError());

- globalConfig.setTimeEncoder(config.getTimeEncoder());

globalConfig.setTimeSeriesDataType(config.getTimeSeriesDataType());

globalConfig.setTSFileStorageFs(config.getTSFileStorageFs());

globalConfig.setUseKerberos(config.isUseKerberos());

diff --git a/iotdb-connector/flink-tsfile-connector/src/test/java/org/apache/iotdb/flink/util/TSFileConfigUtilCompletenessTest.java b/iotdb-connector/flink-tsfile-connector/src/test/java/org/apache/iotdb/flink/util/TSFileConfigUtilCompletenessTest.java

index c209271f82c..5189faf6506 100644

--- a/iotdb-connector/flink-tsfile-connector/src/test/java/org/apache/iotdb/flink/util/TSFileConfigUtilCompletenessTest.java

+++ b/iotdb-connector/flink-tsfile-connector/src/test/java/org/apache/iotdb/flink/util/TSFileConfigUtilCompletenessTest.java

@@ -73,8 +73,6 @@ public class TSFileConfigUtilCompletenessTest {

"setTSFileStorageFs",

"setUseKerberos",

"setValueEncoder",

- "setFreqEncodingSNR",

- "setFreqEncodingBlockSize",

"setMaxTsBlockLineNumber",

"setMaxTsBlockSizeInBytes",

"setPatternMatchingThreshold",

diff --git a/node-commons/src/assembly/resources/conf/iotdb-common.properties b/node-commons/src/assembly/resources/conf/iotdb-common.properties

index 024d4cd3dd8..1f91d8aae69 100644

--- a/node-commons/src/assembly/resources/conf/iotdb-common.properties

+++ b/node-commons/src/assembly/resources/conf/iotdb-common.properties

@@ -414,7 +414,7 @@ cluster_name=defaultCluster

# Time cost(ms) threshold for slow query

# Datatype: long

-# slow_query_threshold=5000

+# slow_query_threshold=30000

# The max executing time of query. unit: ms

# Datatype: int

@@ -540,11 +540,6 @@ cluster_name=defaultCluster

# Datatype: boolean

# 0.13_data_insert_adapt=false

-# When there exists old version(v2) TsFile, how many thread will be set up to perform upgrade tasks, 1 by default.

-# Set to 1 when less than or equal to 0.

-# Datatype: int

-# upgrade_thread_count=1

-

# The max size of the device path cache. This cache is for avoiding initialize duplicated device id object in write process.

# Datatype: int

# device_path_cache_size=500000

@@ -799,10 +794,6 @@ cluster_name=defaultCluster

# Datatype: int

# float_precision=2

-# Encoder configuration

-# Encoder of time series, supports TS_2DIFF, PLAIN and RLE(run-length encoding), REGULAR and default value is TS_2DIFF

-# time_encoder=TS_2DIFF

-

# Encoder of value series. default value is PLAIN.

# For int, long data type, also supports TS_2DIFF and RLE(run-length encoding), GORILLA and ZIGZAG.

# value_encoder=PLAIN

@@ -839,20 +830,12 @@ cluster_name=defaultCluster

# If OpenIdAuthorizer is enabled, then openID_url must be set.

# openID_url=

-# admin username, default is root

-# Datatype: string

-# admin_name=root

-

# encryption provider class

# iotdb_server_encrypt_decrypt_provider=org.apache.iotdb.commons.security.encrypt.MessageDigestEncrypt

# encryption provided class parameter

# iotdb_server_encrypt_decrypt_provider_parameter=

-# admin password, default is root

-# Datatype: string

-# admin_password=root

-

# Cache size of user and role

# Datatype: int

# author_cache_size=1000

@@ -1169,13 +1152,3 @@ cluster_name=defaultCluster

# SSL timeout (in seconds)

# idle_timeout_in_seconds=50000

-

-####################

-### InfluxDB RPC Service Configuration

-####################

-

-# Datatype: boolean

-# enable_influxdb_rpc_service=false

-

-# Datatype: int

-# influxdb_rpc_port=8086

diff --git a/node-commons/src/main/java/org/apache/iotdb/commons/conf/CommonDescriptor.java b/node-commons/src/main/java/org/apache/iotdb/commons/conf/CommonDescriptor.java

index bd13ad3c53b..d2410d1cba3 100644

--- a/node-commons/src/main/java/org/apache/iotdb/commons/conf/CommonDescriptor.java

+++ b/node-commons/src/main/java/org/apache/iotdb/commons/conf/CommonDescriptor.java

@@ -67,10 +67,6 @@ public class CommonDescriptor {

// if using org.apache.iotdb.db.auth.authorizer.OpenIdAuthorizer, openID_url is needed.

config.setOpenIdProviderUrl(

properties.getProperty("openID_url", config.getOpenIdProviderUrl()).trim());

- config.setAdminName(properties.getProperty("admin_name", config.getAdminName()).trim());

-

- config.setAdminPassword(

- properties.getProperty("admin_password", config.getAdminPassword()).trim());

config.setEncryptDecryptProvider(

properties

.getProperty(

diff --git a/server/src/assembly/resources/conf/iotdb-datanode.properties b/server/src/assembly/resources/conf/iotdb-datanode.properties

index 12e806103d0..71904a4bb28 100644

--- a/server/src/assembly/resources/conf/iotdb-datanode.properties

+++ b/server/src/assembly/resources/conf/iotdb-datanode.properties

@@ -24,7 +24,7 @@

# Used for connection of IoTDB native clients(Session)

# Could set 127.0.0.1(for local test) or ipv4 address

# Datatype: String

-dn_rpc_address=127.0.0.1

+dn_rpc_address=0.0.0.0

# Used for connection of IoTDB native clients(Session)

# Bind with dn_rpc_address

@@ -229,7 +229,7 @@ dn_target_config_node_list=127.0.0.1:10710

# The reporters of metric module to report metrics

# If there are more than one reporter, please separate them by commas ",".

-# Options: [JMX, PROMETHEUS, IOTDB]

+# Options: [JMX, PROMETHEUS]

# Datatype: String

# dn_metric_reporter_list=

@@ -251,37 +251,7 @@ dn_target_config_node_list=127.0.0.1:10710

# Datatype: int

# dn_metric_prometheus_reporter_port=9091

-# The host of IoTDB reporter of metric module

-# Could set 127.0.0.1(for local test) or ipv4 address

-# Datatype: String

-# dn_metric_iotdb_reporter_host=127.0.0.1

-

-# The port of IoTDB reporter of metric module

-# Datatype: int

-# dn_metric_iotdb_reporter_port=6667

-

-# The username of IoTDB reporter of metric module

-# Datatype: String

-# dn_metric_iotdb_reporter_username=root

-

-# The password of IoTDB reporter of metric module

-# Datatype: String

-# dn_metric_iotdb_reporter_password=root

-

-# The max connection number of IoTDB reporter of metric module

-# Datatype: int

-# dn_metric_iotdb_reporter_max_connection_number=3

-

-# The location of IoTDB reporter of metric module

-# The metrics will write into root.__system.${location}

-# Datatype: String

-# dn_metric_iotdb_reporter_location=metric

-

-# The push period of IoTDB reporter of metric module in second

-# Datatype: int

-# dn_metric_iotdb_reporter_push_period=15

-

-# The type of internal reporter in metric module

+# The type of internal reporter in metric module, used for checking flushed point number

# Options: [MEMORY, IOTDB]

# Datatype: String

# dn_metric_internal_reporter_type=MEMORY

\ No newline at end of file

diff --git a/server/src/main/java/org/apache/iotdb/db/conf/IoTDBConfig.java b/server/src/main/java/org/apache/iotdb/db/conf/IoTDBConfig.java

index 59b56e129cc..d4c694a23b8 100644

--- a/server/src/main/java/org/apache/iotdb/db/conf/IoTDBConfig.java

+++ b/server/src/main/java/org/apache/iotdb/db/conf/IoTDBConfig.java

@@ -110,7 +110,7 @@ public class IoTDBConfig {

private int mqttMaxMessageSize = 1048576;

/** Rpc binding address. */

- private String rpcAddress = "127.0.0.1";

+ private String rpcAddress = "0.0.0.0";

/** whether to use thrift compression. */

private boolean rpcThriftCompressionEnable = false;

@@ -121,9 +121,6 @@ public class IoTDBConfig {

/** Port which the JDBC server listens to. */

private int rpcPort = 6667;

- /** Port which the influxdb protocol server listens to. */

- private int influxDBRpcPort = 8086;

-

/** Rpc Selector thread num */

private int rpcSelectorThreadCount = 1;

@@ -827,7 +824,7 @@ public class IoTDBConfig {

private int frequencyIntervalInMinute = 1;

/** time cost(ms) threshold for slow query. Unit: millisecond */

- private long slowQueryThreshold = 5000;

+ private long slowQueryThreshold = 30000;

private int patternMatchingThreshold = 1000000;

@@ -837,12 +834,6 @@ public class IoTDBConfig {

*/

private boolean enableRpcService = true;

- /**

- * whether enable the influxdb rpc service. This parameter has no a corresponding field in the

- * iotdb-common.properties

- */

- private boolean enableInfluxDBRpcService = false;

-

/** the size of ioTaskQueue */

private int ioTaskQueueSizeForFlushing = 10;

@@ -1393,14 +1384,6 @@ public class IoTDBConfig {

this.rpcPort = rpcPort;

}

- public int getInfluxDBRpcPort() {

- return influxDBRpcPort;

- }

-

- public void setInfluxDBRpcPort(int influxDBRpcPort) {

- this.influxDBRpcPort = influxDBRpcPort;

- }

-

public String getTimestampPrecision() {

return timestampPrecision;

}

@@ -2724,14 +2707,6 @@ public class IoTDBConfig {

this.enableRpcService = enableRpcService;

}

- public boolean isEnableInfluxDBRpcService() {

- return enableInfluxDBRpcService;

- }

-

- public void setEnableInfluxDBRpcService(boolean enableInfluxDBRpcService) {

- this.enableInfluxDBRpcService = enableInfluxDBRpcService;

- }

-

public int getIoTaskQueueSizeForFlushing() {

return ioTaskQueueSizeForFlushing;

}

diff --git a/server/src/main/java/org/apache/iotdb/db/conf/IoTDBDescriptor.java b/server/src/main/java/org/apache/iotdb/db/conf/IoTDBDescriptor.java

index 96ecfbe3e82..6a6e789d474 100644

--- a/server/src/main/java/org/apache/iotdb/db/conf/IoTDBDescriptor.java

+++ b/server/src/main/java/org/apache/iotdb/db/conf/IoTDBDescriptor.java

@@ -286,20 +286,6 @@ public class IoTDBDescriptor {

.getProperty(IoTDBConstant.DN_RPC_PORT, Integer.toString(conf.getRpcPort()))

.trim()));

- conf.setEnableInfluxDBRpcService(

- Boolean.parseBoolean(

- properties

- .getProperty(

- "enable_influxdb_rpc_service",

- Boolean.toString(conf.isEnableInfluxDBRpcService()))

- .trim()));

-

- conf.setInfluxDBRpcPort(

- Integer.parseInt(

- properties

- .getProperty("influxdb_rpc_port", Integer.toString(conf.getInfluxDBRpcPort()))

- .trim()));

-

conf.setEnableMLNodeService(

Boolean.parseBoolean(

properties

@@ -636,11 +622,6 @@ public class IoTDBDescriptor {

conf.setChunkBufferPoolEnable(

Boolean.parseBoolean(properties.getProperty("chunk_buffer_pool_enable")));

}

-

- conf.setUpgradeThreadCount(

- Integer.parseInt(

- properties.getProperty(

- "upgrade_thread_count", Integer.toString(conf.getUpgradeThreadCount()))));

conf.setCrossCompactionFileSelectionTimeBudget(

Long.parseLong(

properties.getProperty(

@@ -1351,11 +1332,6 @@ public class IoTDBDescriptor {

"float_precision",

Integer.toString(

TSFileDescriptor.getInstance().getConfig().getFloatPrecision()))));

- TSFileDescriptor.getInstance()

- .getConfig()

- .setTimeEncoder(

- properties.getProperty(

- "time_encoder", TSFileDescriptor.getInstance().getConfig().getTimeEncoder()));

TSFileDescriptor.getInstance()

.getConfig()

.setValueEncoder(

diff --git a/server/src/main/java/org/apache/iotdb/db/service/DataNode.java b/server/src/main/java/org/apache/iotdb/db/service/DataNode.java

index fd9311738b5..d9c2bf396db 100644

--- a/server/src/main/java/org/apache/iotdb/db/service/DataNode.java

+++ b/server/src/main/java/org/apache/iotdb/db/service/DataNode.java

@@ -876,9 +876,6 @@ public class DataNode implements DataNodeMBean {

}

private void initProtocols() throws StartupException {

- if (config.isEnableInfluxDBRpcService()) {

- registerManager.register(InfluxDBRPCService.getInstance());

- }

if (config.isEnableMQTTService()) {

registerManager.register(MQTTService.getInstance());

}

diff --git a/server/src/main/java/org/apache/iotdb/db/service/InfluxDBRPCService.java b/server/src/main/java/org/apache/iotdb/db/service/InfluxDBRPCService.java

deleted file mode 100644

index 5becc9302ac..00000000000

--- a/server/src/main/java/org/apache/iotdb/db/service/InfluxDBRPCService.java

+++ /dev/null

@@ -1,109 +0,0 @@

-/*

- * Licensed to the Apache Software Foundation (ASF) under one

- * or more contributor license agreements. See the NOTICE file

- * distributed with this work for additional information

- * regarding copyright ownership. The ASF licenses this file

- * to you under the Apache License, Version 2.0 (the

- * "License"); you may not use this file except in compliance

- * with the License. You may obtain a copy of the License at

- *

- * http://www.apache.org/licenses/LICENSE-2.0

- *

- * Unless required by applicable law or agreed to in writing,

- * software distributed under the License is distributed on an

- * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

- * KIND, either express or implied. See the License for the

- * specific language governing permissions and limitations

- * under the License.

- */

-

-package org.apache.iotdb.db.service;

-

-import org.apache.iotdb.commons.concurrent.ThreadName;

-import org.apache.iotdb.commons.exception.runtime.RPCServiceException;

-import org.apache.iotdb.commons.service.ServiceType;

-import org.apache.iotdb.commons.service.ThriftService;

-import org.apache.iotdb.commons.service.ThriftServiceThread;

-import org.apache.iotdb.db.conf.IoTDBConfig;

-import org.apache.iotdb.db.conf.IoTDBDescriptor;

-import org.apache.iotdb.db.service.thrift.handler.InfluxDBServiceThriftHandler;

-import org.apache.iotdb.db.service.thrift.impl.ClientRPCServiceImpl;

-import org.apache.iotdb.db.service.thrift.impl.IInfluxDBServiceWithHandler;

-import org.apache.iotdb.db.service.thrift.impl.NewInfluxDBServiceImpl;

-import org.apache.iotdb.protocol.influxdb.rpc.thrift.InfluxDBService.Processor;

-

-public class InfluxDBRPCService extends ThriftService implements InfluxDBRPCServiceMBean {

- private IInfluxDBServiceWithHandler impl;

-

- public static InfluxDBRPCService getInstance() {

- return InfluxDBServiceHolder.INSTANCE;

- }

-

- @Override

- public void initTProcessor()

- throws ClassNotFoundException, IllegalAccessException, InstantiationException {

- if (IoTDBDescriptor.getInstance()

- .getConfig()

- .getRpcImplClassName()

- .equals(ClientRPCServiceImpl.class.getName())) {

- impl =

- (IInfluxDBServiceWithHandler)

- Class.forName(NewInfluxDBServiceImpl.class.getName()).newInstance();

- } else {

- impl =

- (IInfluxDBServiceWithHandler)

- Class.forName(IoTDBDescriptor.getInstance().getConfig().getInfluxDBImplClassName())

- .newInstance();

- }

- initSyncedServiceImpl(null);

- processor = new Processor<>(impl);

- }

-

- @Override

- public void initThriftServiceThread() throws IllegalAccessException {

- IoTDBConfig config = IoTDBDescriptor.getInstance().getConfig();

- try {

- thriftServiceThread =

- new ThriftServiceThread(

- processor,

- getID().getName(),

- ThreadName.INFLUXDB_RPC_PROCESSOR.getName(),

- config.getRpcAddress(),

- config.getInfluxDBRpcPort(),

- config.getRpcMaxConcurrentClientNum(),

- config.getThriftServerAwaitTimeForStopService(),

- new InfluxDBServiceThriftHandler(impl),

- IoTDBDescriptor.getInstance().getConfig().isRpcThriftCompressionEnable());

- } catch (RPCServiceException e) {

- throw new IllegalAccessException(e.getMessage());

- }

- thriftServiceThread.setName(ThreadName.INFLUXDB_RPC_SERVICE.getName());

- }

-

- @Override

- public String getBindIP() {

- return IoTDBDescriptor.getInstance().getConfig().getRpcAddress();

- }

-

- @Override

- public int getBindPort() {

- return IoTDBDescriptor.getInstance().getConfig().getInfluxDBRpcPort();

- }

-

- @Override

- public int getRPCPort() {

- return getBindPort();

- }

-

- @Override

- public ServiceType getID() {

- return ServiceType.INFLUX_SERVICE;

- }

-

- private static class InfluxDBServiceHolder {

-

- private static final InfluxDBRPCService INSTANCE = new InfluxDBRPCService();

-

- private InfluxDBServiceHolder() {}

- }

-}

diff --git a/server/src/main/java/org/apache/iotdb/db/service/InfluxDBRPCServiceMBean.java b/server/src/main/java/org/apache/iotdb/db/service/InfluxDBRPCServiceMBean.java

deleted file mode 100644

index ed2c70cea63..00000000000

--- a/server/src/main/java/org/apache/iotdb/db/service/InfluxDBRPCServiceMBean.java

+++ /dev/null

@@ -1,21 +0,0 @@

-/*

- * Licensed to the Apache Software Foundation (ASF) under one

- * or more contributor license agreements. See the NOTICE file

- * distributed with this work for additional information

- * regarding copyright ownership. The ASF licenses this file

- * to you under the Apache License, Version 2.0 (the

- * "License"); you may not use this file except in compliance

- * with the License. You may obtain a copy of the License at

- *

- * http://www.apache.org/licenses/LICENSE-2.0

- *

- * Unless required by applicable law or agreed to in writing,

- * software distributed under the License is distributed on an

- * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

- * KIND, either express or implied. See the License for the

- * specific language governing permissions and limitations

- * under the License.

- */

-package org.apache.iotdb.db.service;

-

-public interface InfluxDBRPCServiceMBean extends RPCServiceMBean {}

diff --git a/server/src/main/java/org/apache/iotdb/db/utils/SchemaUtils.java b/server/src/main/java/org/apache/iotdb/db/utils/SchemaUtils.java

index 267aa0e381e..5e576fe11bd 100644

--- a/server/src/main/java/org/apache/iotdb/db/utils/SchemaUtils.java

+++ b/server/src/main/java/org/apache/iotdb/db/utils/SchemaUtils.java

@@ -55,7 +55,6 @@ public class SchemaUtils {

intSet.add(TSEncoding.TS_2DIFF);

intSet.add(TSEncoding.GORILLA);

intSet.add(TSEncoding.ZIGZAG);

- intSet.add(TSEncoding.FREQ);

intSet.add(TSEncoding.CHIMP);

intSet.add(TSEncoding.SPRINTZ);

intSet.add(TSEncoding.RLBE);

@@ -69,7 +68,6 @@ public class SchemaUtils {

floatSet.add(TSEncoding.TS_2DIFF);

floatSet.add(TSEncoding.GORILLA_V1);

floatSet.add(TSEncoding.GORILLA);

- floatSet.add(TSEncoding.FREQ);

floatSet.add(TSEncoding.CHIMP);

floatSet.add(TSEncoding.SPRINTZ);

floatSet.add(TSEncoding.RLBE);

diff --git a/site/src/main/.vuepress/sidebar/en.ts b/site/src/main/.vuepress/sidebar/en.ts

index cdb26b81e0c..daba87e1bca 100644

--- a/site/src/main/.vuepress/sidebar/en.ts

+++ b/site/src/main/.vuepress/sidebar/en.ts

@@ -100,7 +100,6 @@ export const enSidebar = sidebar({

{ text: 'REST API V1 (Not Recommend)', link: 'RestServiceV1' },

{ text: 'REST API V2', link: 'RestServiceV2' },

{ text: 'TsFile API', link: 'Programming-TsFile-API' },

- { text: 'InfluxDB Protocol', link: 'InfluxDB-Protocol' },

{ text: 'Interface Comparison', link: 'Interface-Comparison' },

],

},

diff --git a/site/src/main/.vuepress/sidebar/zh.ts b/site/src/main/.vuepress/sidebar/zh.ts

index 7dda3df39b4..50c3b00892d 100644

--- a/site/src/main/.vuepress/sidebar/zh.ts

+++ b/site/src/main/.vuepress/sidebar/zh.ts

@@ -100,7 +100,6 @@ export const zhSidebar = sidebar({

{ text: 'REST API V1 (不推荐)', link: 'RestServiceV1' },

{ text: 'REST API V2', link: 'RestServiceV2' },

{ text: 'TsFile API', link: 'Programming-TsFile-API' },

- { text: 'InfluxDB 协议适配器', link: 'InfluxDB-Protocol' },

{ text: '原生接口对比', link: 'Interface-Comparison' },

],

},

diff --git a/tsfile/pom.xml b/tsfile/pom.xml

index 85ee32982f9..0f568495af4 100644

--- a/tsfile/pom.xml

+++ b/tsfile/pom.xml

@@ -61,10 +61,6 @@

<groupId>org.lz4</groupId>

<artifactId>lz4-java</artifactId>

</dependency>

- <dependency>