This is an automated email from the ASF dual-hosted git repository.

zhasheng pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/incubator-mxnet.git

The following commit(s) were added to refs/heads/master by this push:

new a3115d5 [DOC] update contributors and readme (#19041)

a3115d5 is described below

commit a3115d552933bf50f46a06f9c56695168b4a42cd

Author: Sheng Zha <[email protected]>

AuthorDate: Sun Aug 30 13:17:21 2020 -0700

[DOC] update contributors and readme (#19041)

* update contributors and readme

* update

---

CONTRIBUTORS.md | 159 +++++++++++++++++++++++++++++++++-----------------------

README.md | 158 +++++++++++++++++++++++++++++++------------------------

2 files changed, 184 insertions(+), 133 deletions(-)

diff --git a/CONTRIBUTORS.md b/CONTRIBUTORS.md

index 4146d45..3bf9ece 100644

--- a/CONTRIBUTORS.md

+++ b/CONTRIBUTORS.md

@@ -17,76 +17,122 @@

Contributors of Apache MXNet (incubating)

=========================================

-MXNet has been developed by a community of people who are interested in

large-scale machine learning and deep learning.

-Everyone is more than welcomed to is a great way to make the project better

and more accessible to more users.

+Apache MXNet adopts the Apache way and governs by merit. We believe that it is

important to create

+an inclusive community where everyone can use, contribute to, and influence

the direction of

+the project. We actively invite contributors who have earned the merit to be

part of the

+development community. See [MXNet Community

Guide][https://mxnet.apache.org/community/community].

-Committers

-----------

-Committers are people who have made substantial contribution to the project

and being active.

-The committers are the granted write access to the project.

+PMC

+---

+The Project Management Committee(PMC) consists group of active committers that

moderate the

+discussion, manage the project release, and proposes new committer/PMC

members. Here's the list of

+PMC members in alphabetical order by first name.

+

+* [Anirudh Subramanian](https://github.com/anirudh2290)

* [Bing Xu](https://github.com/antinucleon)

- Bing is the initiator and major contributor of operators and ndarray

modules of MXNet.

-* [Tianjun Xiao](https://github.com/sneakerkg)

- - Tianqjun is the master behind the fast data loading and preprocessing.

-* [Yutian Li](https://github.com/hotpxl)

- - Yutian is the ninja behind the dependency and storage engine of MXNet.

-* [Mu Li](https://github.com/mli)

- - Mu is the contributor of the distributed key-value store in MXNet.

-* [Tianqi Chen](https://github.com/tqchen)

- - Tianqi is one of the initiator of the MXNet project.

-* [Min Lin](https://github.com/mavenlin)

- - Min is the guy behind the symbolic magics of MXNet.

-* [Naiyan Wang](https://github.com/winstywang)

- - Naiyan is the creator of static symbolic graph module of MXNet.

-* [Mingjie Wang](https://github.com/jermainewang)

- - Mingjie is the initiator, and contributes the design of the dependency

engine.

-* [Chuntao Hong](https://github.com/hjk41)

- - Chuntao is the initiator and provides the initial design of engine.

+* [Carin Meier](https://github.com/gigasquid)

+ - Carin created and is the current maintainer for the Clojure interface.

* [Chiyuan Zhang](https://github.com/pluskid)

- Chiyuan is the creator of MXNet Julia package.

+* [Chris Olivier](https://github.com/cjolivier01)

+* [Dick Carter](https://github.com/DickJC123)

* [Junyuan Xie](https://github.com/piiswrong)

* [Haibin Lin](https://github.com/eric-haibin-lin)

+* [Henri Yandell](https://github.com/hen)

+* [Hongliang Liu](https://github.com/phunterlau)

+* [Indhu Bharathi](https://github.com/indhub)

+* [Jian Zhang](https://github.com/jzhang-zju)

+* [Joe Spisak](https://github.com/jspisak)

+* [Jun Wu](https://github.com/reminisce)

+* [Leonard Lausen](https://github.com/leezu)

+* [Liang Depeng](https://github.com/Ldpe2G)

+* [Ly Nguyen](https://github.com/lxn2)

+* [Madan Jampani](https://github.com/madjam)

+* [Marco de Abreu](https://github.com/marcoabreu)

+ - Marco is the creator of the current MXNet CI.

+* [Mu Li](https://github.com/mli)

+ - Mu is the contributor of the distributed key-value store in MXNet.

+* [Nan Zhu](https://github.com/CodingCat)

+* [Naveen Swamy](https://github.com/nswamy)

* [Qiang Kou](https://github.com/thirdwing)

- KK is a R ninja, he makes mxnet available for R users.

+* [Qing Lan](https://github.com/lanking520)

+* [Sandeep Krishnamurthy](https://github.com/sandeep-krishnamurthy)

+* [Sergey Kolychev](https://github.com/sergeykolychev)

+ - Sergey is original author and current maintainer of Perl5 interface.

+* [Sheng Zha](https://github.com/szha)

+* [Shiwen Hu](https://github.com/yajiedesign)

+* [Tao Lv](https://github.com/TaoLv)

+ - Tao is a major contributor to the MXNet MKL-DNN backend and performance on

CPU.

+* [Terry Chen](https://github.com/terrychenism)

+* [Thomas Delteil](https://github.com/ThomasDelteil)

+* [Tianqi Chen](https://github.com/tqchen)

+ - Tianqi is one of the initiator of the MXNet project.

* [Tong He](https://github.com/hetong007)

- Tong is the major maintainer of MXNet R package, he designs the MXNet

interface and wrote many of the tutorials on R.

+* [Tsuyoshi Ozawa](https://github.com/oza)

+* [Xingjian Shi](https://github.com/sxjscience)

+* [Yifeng Geng](https://github.com/gengyifeng)

* [Yizhi Liu](https://github.com/yzhliu)

- Yizhi is the main creator on mxnet scala project to make deep learning

available for JVM stacks.

-* [Zixuan Huang](https://github.com/yanqingmen)

- - Zixuan is one of major maintainers of MXNet Scala package.

+* [Yu Zhang](https://github.com/yzhang87)

* [Yuan Tang](https://github.com/terrytangyuan)

- Yuan is one of major maintainers of MXNet Scala package.

-* [Chris Olivier](https://github.com/cjolivier01)

-* [Sergey Kolychev](https://github.com/sergeykolychev)

- - Sergey is original author and current maintainer of Perl5 interface.

-* [Naveen Swamy](https://github.com/nswamy)

-* [Marco de Abreu](https://github.com/marcoabreu)

- - Marco is the creator of the current MXNet CI.

-* [Carin Meier](https://github.com/gigasquid)

- - Carin created and is the current maintainer for the Clojure interface.

-* [Patric Zhao](https://github.com/pengzhao-intel)

- - Patric is a parallel computing expert and a major contributor to the MXNet

MKL-DNN backend.

-* [Tao Lv](https://github.com/TaoLv)

- - Tao is a major contributor to the MXNet MKL-DNN backend and performance on

CPU.

-* [Zach Kimberg](https://github.com/zachgk)

- - Zach is one of the major maintainers of the MXNet Scala package.

-* [Lin Yuan](https://github.com/apeforest)

- - Lin supports MXNet distributed training using Horovod and is also a major

contributor to higher order gradients.

+* [Yutian Li](https://github.com/hotpxl)

+ - Yutian is the ninja behind the dependency and storage engine of MXNet.

+* [Zhi Zhang](https://github.com/zhreshold)

+* [Zihao Zheng](https://github.com/zihaolucky)

+* [Ziheng Jiang](https://github.com/zihengjiang)

+* [Ziyue Huang](https://github.com/ZiyueHuang)

-### Become a Committer

-MXNet is a opensource project and we are actively looking for new committers

-who are willing to help maintaining and leading the project. Committers come

from contributors who:

-* Made substantial contribution to the project.

-* Willing to actively spend time on maintaining and leading the project.

+Committers

+----------

+Committers are individuals who are granted the write access to the project. A

committer is usually

+responsible for a certain area or several areas of the code where they oversee

the code review

+process. The area of contribution can take all forms, including code

contributions and code

+reviews, documents, education, and outreach. Committers are essential for a

high quality and

+healthy project. The community actively look for new committers from

contributors.

-New committers will be proposed by current committers, with support from more

than two of current committers.

+* [Aaron Markham](https://github.com/aaronmarkham)

+* [Alex Zai](https://github.com/azai91)

+* [Anirudh Acharya](https://github.com/anirudhacharya)

+* [Aston Zhang](https://github.com/astonzhang)

+* [Da Zheng](https://github.com/zheng-da)

+* [Ding Kuo](https://github.com/chinakook)

+* [Hao Jin](https://github.com/haojin2)

+* [Haozheng Fan](https://github.com/hzfan)

+* [Iblis Lin](https://github.com/iblis17)

+* [Jackie Wu](https://github.com/wkcn)

+* [Jeremie Desgagne-Bouchard](https://github.com/jeremiedb)

+* [Jiajun Wang](https://github.com/arcadiaphy)

+* [Junru Shao](https://github.com/junrushao1994)

+* [Kedar Bellare](https://github.com/kedarbellare)

+* [Kellen Sunderland](https://github.com/KellenSunderland)

+* [Kevin Qin](https://github.com/ZhennanQin)

+* [Lai Wei](https://github.com/roywei)

+* [Lin Yuan](https://github.com/apeforest)

+ - Lin supports MXNet distributed training using Horovod and is also a major

contributor to higher order gradients.

+* [Nicolas Modrzyk](https://github.com/hellonico)

+* [Patric Zhao](https://github.com/pengzhao-intel)

+* - Patric is a parallel computing expert and a major contributor to the MXNet

MKL-DNN backend.

+* [Przemysław Trędak](https://github.com/ptrendx)

+* [Rahul Huilgol](https://github.com/rahul003)

+* [Roshani Nagmote](https://github.com/roshrini)

+* [Sam Skalicky](https://github.com/samskalicky)

+* [Steffen Rochel](https://github.com/srochel)

+* [Xi Wang](https://github.com/xidulu)

+* [Yang Shi](https://github.com/ys2843)

+* [Yuxi Hu](https://github.com/yuxihu)

+* [Zach Kimberg](https://github.com/zachgk)

+ - Zach is one of the major maintainers of the MXNet Scala package.

List of Contributors

--------------------

-* [Full List of

Contributors](https://github.com/apache/incubator-mxnet/graphs/contributors)

+* [Top-100

Contributors](https://github.com/apache/incubator-mxnet/graphs/contributors)

- To contributors: please add your name to the list when you submit a patch

to the project:)

* [Feng Wang](https://github.com/happynear)

- Feng makes MXNet compatible with Windows Visual Studio.

@@ -94,7 +140,6 @@ List of Contributors

- Jack created the amalgamation script and Go bind for MXNet.

* [Li Dong](https://github.com/donglixp)

* [Piji Li](https://github.com/lipiji)

-* [Hu Shiwen](https://github.com/yajiedesign)

* [Boyuan Deng](https://github.com/bryandeng)

* [Junran He](https://github.com/junranhe)

- Junran makes device kvstore allocation strategy smarter

@@ -122,14 +167,11 @@ List of Contributors

* [Mathis](https://github.com/sveitser)

* [sennendoko](https://github.com/sennendoko)

* [srand99](https://github.com/srand99)

-* [Yizhi Liu](https://github.com/yzhliu)

* [Taiyun](https://github.com/taiyun)

* [Yanghao Li](https://github.com/lyttonhao)

-* [Nan Zhu](https://github.com/CodingCat)

* [Ye Zhou](https://github.com/zhouye)

* [Zhang Chen](https://github.com/zhangchen-qinyinghua)

* [Xianliang Wang](https://github.com/wangxianliang)

-* [Junru Shao](https://github.com/yzgysjr)

* [Xiao Liu](https://github.com/skylook)

* [Lowik CHANUSSOT](https://github.com/Nzeuwik)

* [Alexander Skidanov](https://github.com/SkidanovAlex)

@@ -141,13 +183,11 @@ List of Contributors

* [Tao Wei](https://github.com/taoari)

* [Max Kuhn](https://github.com/topepo)

* [Yuqi Li](https://github.com/ziyeqinghan)

-* [Depeng Liang](https://github.com/Ldpe2G)

* [Kiko Qiu](https://github.com/kikoqiu)

* [Yang Bo](https://github.com/Atry)

* [Jonas Amaro](https://github.com/jonasrla)

* [Yan Li](https://github.com/Godricly)

* [Yuance Li](https://github.com/liyuance)

-* [Sandeep Krishnamurthy](https://github.com/sandeep-krishnamurthy)

* [Andre Moeller](https://github.com/andremoeller)

* [Miguel Gonzalez-Fierro](https://github.com/miguelgfierro)

* [Mingjie Xing](https://github.com/EricFisher)

@@ -162,14 +202,11 @@ List of Contributors

* [Piyush Singh](https://github.com/Piyush3dB)

* [Freddy Chua](https://github.com/freddycct)

* [Jie Zhang](https://github.com/luoyetx)

-* [Leonard Lausen](https://github.com/leezu)

* [Robert Stone](https://github.com/tlby)

* [Pedro Larroy](https://github.com/larroy)

-* [Jun Wu](https://github.com/reminisce)

* [Dom Divakaruni](https://github.com/domdivakaruni)

* [David Salinas](https://github.com/geoalgo)

* [Asmus Hetzel](https://github.com/asmushetzel)

-* [Roshani Nagmote](https://github.com/Roshrini)

* [Chetan Khatri](https://github.com/chetkhatri/)

* [James Liu](https://github.com/jamesliu/)

* [Nir Ben-Zvi](https://github.com/nirbenz/)

@@ -192,19 +229,14 @@ List of Contributors

* [David Braude](https://github.com/dabraude/)

* [Nick Robinson](https://github.com/nickrobinson)

* [Kan Wu](https://github.com/wkcn)

-* [Rahul Huilgol](https://github.com/rahul003)

-* [Anirudh Subramanian](https://github.com/anirudh2290/)

* [Zheqin Wang](https://github.com/rasefon)

* [Thom Lane](https://github.com/thomelane)

* [Sina Afrooze](https://github.com/safrooze)

* [Sergey Sokolov](https://github.com/Ishitori)

-* [Thomas Delteil](https://github.com/ThomasDelteil)

* [Jesse Brizzi](https://github.com/jessebrizzi)

* [Hang Zhang](http://hangzh.com)

* [Kou Ding](https://github.com/chinakook)

* [Istvan Fehervari](https://github.com/ifeherva)

-* [Aaron Markham](https://github.com/aaronmarkham)

-* [Sam Skalicky](https://github.com/samskalicky)

* [Per Goncalves da Silva](https://github.com/perdasilva)

* [Zhijingcheng Yu](https://github.com/jasonyu1996)

* [Cheng-Che Lee](https://github.com/stu1130)

@@ -212,9 +244,7 @@ List of Contributors

* [LuckyPigeon](https://github.com/LuckyPigeon)

* [Anton Chernov](https://github.com/lebeg)

* [Denisa Roberts](https://github.com/D-Roberts)

-* [Dick Carter](https://github.com/DickJC123)

* [Rahul Padmanabhan](https://github.com/rahul3)

-* [Yuxi Hu](https://github.com/yuxihu)

* [Harsh Patel](https://github.com/harshp8l)

* [Xiao Wang](https://github.com/BeyonderXX)

* [Piyush Ghai](https://github.com/piyushghai)

@@ -253,7 +283,6 @@ List of Contributors

* [Oliver Kowalke](https://github.com/olk)

* [Connor Goggins](https://github.com/connorgoggins)

* [Wei Chu](https://github.com/waytrue17)

-* [Yang Shi](https://github.com/ys2843)

* [Joe Evans](https://github.com/josephevans)

Label Bot

@@ -263,7 +292,7 @@ Label Bot

- To use me, comment:

- @mxnet-label-bot add [specify comma separated labels here]

- @mxnet-label-bot remove [specify comma separated labels here]

- - @mxnet-label-bot update [specify comma separated labels here]

+ - @mxnet-label-bot update [specify comma separated labels here]

(i.e. @mxnet-label-bot update [Bug, Python])

- Available label names which are supported:

[Labels](https://github.com/apache/incubator-mxnet/labels)

diff --git a/README.md b/README.md

index 60bfe5a..f0a38b9 100644

--- a/README.md

+++ b/README.md

@@ -19,98 +19,120 @@

<a href="https://mxnet.apache.org/"><img

src="https://raw.githubusercontent.com/dmlc/web-data/master/mxnet/image/mxnet_logo_2.png"></a><br>

</div>

-Apache MXNet (incubating) for Deep Learning

-=====

-| Master | Docs | License |

-| :-------------:|:-------------:|:--------:|

-[](http://jenkins.mxnet-ci.amazon-ml.com/job/mxnet-validation/job/centos-cpu/job/master/)

[](http://jenkins.mxnet-ci.amazon-ml.com/job/mxnet-validation/job/centos-gpu/job/master/)

[![Clang [...]

+[](https://mxnet.apache.org)

-

+Apache MXNet (incubating) for Deep Learning

+===========================================

+[](https://github.com/apache/incubator-mxnet/releases)

[](https://github.com/apache/incubator-mxnet/stargazers)

[](https://github.com/apache/incubator-mxnet/network)

[ is a deep learning framework designed for both

*efficiency* and *flexibility*.

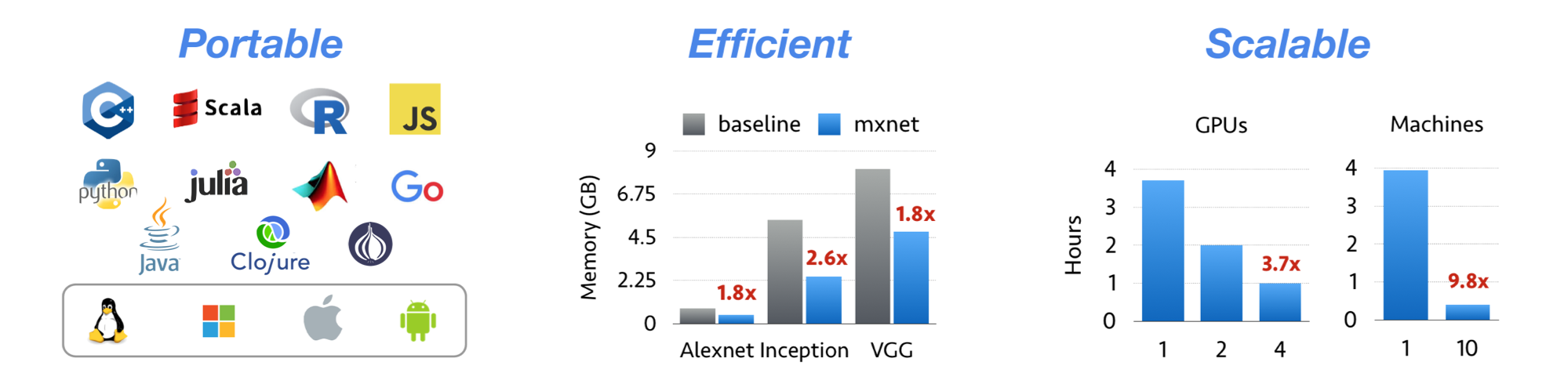

+Apache MXNet is a deep learning framework designed for both *efficiency* and

*flexibility*.

It allows you to ***mix*** [symbolic and imperative

programming](https://mxnet.apache.org/api/architecture/program_model)

to ***maximize*** efficiency and productivity.

At its core, MXNet contains a dynamic dependency scheduler that automatically

parallelizes both symbolic and imperative operations on the fly.

A graph optimization layer on top of that makes symbolic execution fast and

memory efficient.

-MXNet is portable and lightweight, scaling effectively to multiple GPUs and

multiple machines.

+MXNet is portable and lightweight, scalable to many GPUs and machines.

-MXNet is more than a deep learning project. It is a collection of

-[blue prints and

guidelines](https://mxnet.apache.org/api/architecture/overview) for building

-deep learning systems, and interesting insights of DL systems for hackers.

+MXNet is more than a deep learning project. It is a

[community](https://mxnet.apache.org/community)

+on a mission of democratizing AI. It is a collection of [blue prints and

guidelines](https://mxnet.apache.org/api/architecture/overview)

+for building deep learning systems, and interesting insights of DL systems for

hackers.

-Ask Questions

--------------

-* Please use [discuss.d2l.ai](https://discuss.d2l.ai/c/d2l-en/mxnet/) or [old

version:discuss.mxnet.io](https://discuss.mxnet.io/) for asking questions.

-* Please use [mxnet/issues](https://github.com/apache/incubator-mxnet/issues)

for reporting bugs.

-* [Frequent Asked Questions](https://mxnet.apache.org/faq/faq.html)

+Licensed under an

[Apache-2.0](https://github.com/apache/incubator-mxnet/blob/master/LICENSE)

license.

-How to Contribute

------------------

-* [Contribute to MXNet](https://mxnet.apache.org/community/contribute.html)

+| Branch | Build Status |

+|:-------:|:-------------:|

+| [master](https://github.com/apache/incubator-mxnet/tree/master) | [](http://jenkins.mxnet-ci.amazon-ml.com/job/mxnet-validation/job/centos-cpu/job/master/)

[](http://jenkins.mxnet-ci.ama

[...]

+| [v1.x](https://github.com/apache/incubator-mxnet/tree/v1.x) | [](http://jenkins.mxnet-ci.amazon-ml.com/job/mxnet-validation/job/centos-cpu/job/v1.x/)

[](http://jenkins.mxnet-ci.amazon-ml.com

[...]

+

+Features

+--------

+* NumPy-like programming interface, and is integrated with the new,

easy-to-use Gluon 2.0 interface. NumPy users can easily adopt MXNet and start

in deep learning.

+* Automatic hybridization provides imperative programming with the performance

of traditional symbolic programming.

+* Lightweight, memory-efficient, and portable to smart devices through native

cross-compilation support on ARM, and through ecosystem projects such as

[TVM](https://tvm.ai),

[TensorRT](https://docs.nvidia.com/deeplearning/tensorrt/developer-guide/index.html),

[OpenVINO](https://software.intel.com/content/www/us/en/develop/tools/openvino-toolkit.html).

+* Scales up to multi GPUs and distributed setting with auto parallelism

through [ps-lite](https://github.com/dmlc/ps-lite),

[Horovod](https://github.com/horovod/horovod), and

[BytePS](https://github.com/bytedance/byteps).

+* Extensible backend that supports full customization, allowing integration

with custom accelerator libraries and in-house hardware without the need to

maintain a fork.

+* Support for [Python](https://mxnet.apache.org/api/python),

[Java](https://mxnet.apache.org/api/java),

[C++](https://mxnet.apache.org/api/cpp), [R](https://mxnet.apache.org/api/r),

[Scala](https://mxnet.apache.org/api/scala),

[Clojure](https://mxnet.apache.org/api/clojure),

[Go](https://github.com/jdeng/gomxnet/),

[Javascript](https://github.com/dmlc/mxnet.js/),

[Perl](https://mxnet.apache.org/api/perl), and

[Julia](https://mxnet.apache.org/api/julia)

+* Cloud-friendly and directly compatible with AWS and Azure.

+

+Contents

+--------

+* [Installation](https://mxnet.apache.org/get_started)

+* [Tutorials](https://mxnet.apache.org/api/python/docs/tutorials/)

+* [Ecosystem](https://mxnet.apache.org/ecosystem)

+* [API Documentation](https://mxnet.apache.org/api)

+* [Examples](https://github.com/apache/incubator-mxnet-examples)

+* [Social Media](https://mxnet.apache.org/community#social-media)

+* [Contribute to MXNet](https://mxnet.apache.org/community/)

What's New

----------

-* [Version 1.6.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.6.0) - MXNet

1.6.0 Release.

-* [Version 1.5.1

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.5.1) - MXNet

1.5.1 Patch Release.

-* [Version 1.5.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.5.0) - MXNet

1.5.0 Release.

-* [Version 1.4.1

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.4.1) - MXNet

1.4.1 Patch Release.

-* [Version 1.4.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.4.0) - MXNet

1.4.0 Release.

-* [Version 1.3.1

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.3.1) - MXNet

1.3.1 Patch Release.

-* [Version 1.3.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.3.0) - MXNet

1.3.0 Release.

-* [Version 1.2.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.2.0) - MXNet

1.2.0 Release.

-* [Version 1.1.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.1.0) - MXNet

1.1.0 Release.

-* [Version 1.0.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.0.0) - MXNet

1.0.0 Release.

-* [Version 0.12.1

Release](https://github.com/apache/incubator-mxnet/releases/tag/0.12.1) - MXNet

0.12.1 Patch Release.

-* [Version 0.12.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/0.12.0) - MXNet

0.12.0 Release.

-* [Version 0.11.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/0.11.0) - MXNet

0.11.0 Release.

+* [1.7.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.7.0) - MXNet

1.7.0 Release.

+* [1.6.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.6.0) - MXNet

1.6.0 Release.

+* [1.5.1

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.5.1) - MXNet

1.5.1 Patch Release.

+* [1.5.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.5.0) - MXNet

1.5.0 Release.

+* [1.4.1

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.4.1) - MXNet

1.4.1 Patch Release.

+* [1.4.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.4.0) - MXNet

1.4.0 Release.

+* [1.3.1

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.3.1) - MXNet

1.3.1 Patch Release.

+* [1.3.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.3.0) - MXNet

1.3.0 Release.

+* [1.2.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.2.0) - MXNet

1.2.0 Release.

+* [1.1.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.1.0) - MXNet

1.1.0 Release.

+* [1.0.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/1.0.0) - MXNet

1.0.0 Release.

+* [0.12.1

Release](https://github.com/apache/incubator-mxnet/releases/tag/0.12.1) - MXNet

0.12.1 Patch Release.

+* [0.12.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/0.12.0) - MXNet

0.12.0 Release.

+* [0.11.0

Release](https://github.com/apache/incubator-mxnet/releases/tag/0.11.0) - MXNet

0.11.0 Release.

* [Apache Incubator](http://incubator.apache.org/projects/mxnet.html) - We are

now an Apache Incubator project.

-* [Version 0.10.0 Release](https://github.com/dmlc/mxnet/releases/tag/v0.10.0)

- MXNet 0.10.0 Release.

-* [Version 0.9.3 Release](./docs/architecture/release_note_0_9.md) - First 0.9

official release.

-* [Version 0.9.1 Release (NNVM

refactor)](./docs/architecture/release_note_0_9.md) - NNVM branch is merged

into master now. An official release will be made soon.

-* [Version 0.8.0 Release](https://github.com/dmlc/mxnet/releases/tag/v0.8.0)

-* [Updated Image Classification with new Pre-trained

Models](./example/image-classification)

-* [Notebooks How to Use MXNet](https://github.com/d2l-ai/d2l-en)

+* [0.10.0 Release](https://github.com/dmlc/mxnet/releases/tag/v0.10.0) - MXNet

0.10.0 Release.

+* [0.9.3 Release](./docs/architecture/release_note_0_9.md) - First 0.9

official release.

+* [0.9.1 Release (NNVM refactor)](./docs/architecture/release_note_0_9.md) -

NNVM branch is merged into master now. An official release will be made soon.

+* [0.8.0 Release](https://github.com/dmlc/mxnet/releases/tag/v0.8.0)

+

+### Ecosystem News

+

* [MKLDNN for Faster CPU

Performance](docs/python_docs/python/tutorials/performance/backend/mkldnn/mkldnn_readme.md)

* [MXNet Memory Monger, Training Deeper Nets with Sublinear Memory

Cost](https://github.com/dmlc/mxnet-memonger)

* [Tutorial for NVidia GTC 2016](https://github.com/dmlc/mxnet-gtc-tutorial)

* [MXNet.js: Javascript Package for Deep Learning in Browser (without

server)](https://github.com/dmlc/mxnet.js/)

* [Guide to Creating New Operators

(Layers)](https://mxnet.apache.org/api/faq/new_op)

* [Go binding for inference](https://github.com/songtianyi/go-mxnet-predictor)

-* [Large Scale Image

Classification](https://github.com/apache/incubator-mxnet/tree/master/example/image-classification)

-Contents

---------

-* [Book](https://d2l.ai)

-* [Website](https://mxnet.apache.org)

-* [Documentation](https://mxnet.apache.org/api)

-* [Blog](https://mxnet.apache.org/blog)

-* [Code

Examples](https://github.com/apache/incubator-mxnet/tree/master/example)

-* [Installation](https://mxnet.apache.org/get_started)

-* [Features](https://mxnet.apache.org/features)

-* [Ecosystem](https://mxnet.apache.org/ecosystem)

+Stay Connected

+--------------

-Features

---------

-* Design notes providing useful insights that can re-used by other DL projects

-* Flexible configuration for arbitrary computation graph

-* Mix and match imperative and symbolic programming to maximize flexibility

and efficiency

-* Lightweight, memory efficient and portable to smart devices

-* Scales up to multi GPUs and distributed setting with auto parallelism

-* Support for [Python](https://mxnet.apache.org/api/python),

[Scala](https://mxnet.apache.org/api/scala),

[C++](https://mxnet.apache.org/api/cpp),

[Java](https://mxnet.apache.org/api/java),

[Clojure](https://mxnet.apache.org/api/clojure),

[R](https://mxnet.apache.org/api/r), [Go](https://github.com/jdeng/gomxnet/),

[Javascript](https://github.com/dmlc/mxnet.js/),

[Perl](https://mxnet.apache.org/api/perl),

[Matlab](https://github.com/apache/incubator-mxnet/tree/master/matlab), and

[Julia] [...]

-* Cloud-friendly and directly compatible with AWS S3, AWS Deep Learning AMI,

AWS SageMaker, HDFS, and Azure

-

-License

--------

-Licensed under an

[Apache-2.0](https://github.com/apache/incubator-mxnet/blob/master/LICENSE)

license.

+| Channel | Purpose |

+|---|---|

+| [Follow MXNet Development on

Github](https://github.com/apache/incubator-mxnet/issues) | See what's going on

in the MXNet project. |

+| [MXNet Confluence Wiki for

Developers](https://cwiki.apache.org/confluence/display/MXNET/Apache+MXNet+Home)

<i class="fas fa-external-link-alt"> | MXNet developer wiki for information

related to project development, maintained by contributors and developers. To

request write access, send an email to [send request to the dev

list](mailto:[email protected]?subject=Requesting%20CWiki%20write%20access)

<i class="far fa-envelope"></i>. |

+| [[email protected] mailing

list](https://lists.apache.org/[email protected]) | The "dev

list". Discussions about the development of MXNet. To subscribe, send an email

to [[email protected]](mailto:[email protected]) <i

class="far fa-envelope"></i>. |

+| [discuss.mxnet.io](https://discuss.mxnet.io) <i class="fas

fa-external-link-alt"></i> | Asking & answering MXNet usage questions. |

+| [Apache Slack #mxnet Channel](https://the-asf.slack.com/archives/C7FN4FCP9)

<i class="fas fa-external-link-alt"> | Connect with MXNet and other Apache

developers. To join the MXNet slack channel [send request to the dev

list](mailto:[email protected]?subject=Requesting%20slack%20access) <i

class="far fa-envelope"></i>. |

+| [Follow MXNet on Social Media](#social-media) | Get updates about new

features and events. |

-Reference Paper

----------------

-Tianqi Chen, Mu Li, Yutian Li, Min Lin, Naiyan Wang, Minjie Wang, Tianjun Xiao,

-Bing Xu, Chiyuan Zhang, and Zheng Zhang.

-[MXNet: A Flexible and Efficient Machine Learning Library for Heterogeneous

Distributed

Systems](https://github.com/dmlc/web-data/raw/master/mxnet/paper/mxnet-learningsys.pdf).

-In Neural Information Processing Systems, Workshop on Machine Learning

Systems, 2015

+### Social Media

+

+Keep connected with the latest MXNet news and updates.

+

+<p>

+<a href="https://twitter.com/apachemxnet"><img

src="https://raw.githubusercontent.com/dmlc/web-data/master/mxnet/social/twitter.svg?sanitize=true"

height="30px"/> Apache MXNet on Twitter</a>

+</p>

+<p>

+<a href="https://medium.com/apache-mxnet"><img

src="https://raw.githubusercontent.com/dmlc/web-data/master/mxnet/social/medium_black.svg?sanitize=true"

height="30px"/> Contributor and user blogs about MXNet</a>

+</p>

+<p>

+<a href="https://reddit.com/r/mxnet"><img

src="https://raw.githubusercontent.com/dmlc/web-data/master/mxnet/social/reddit_blue.svg?sanitize=true"

height="30px" alt="reddit"/> Discuss MXNet on r/mxnet</a>

+</p>

+<p>

+<a href="https://www.youtube.com/apachemxnet"><img

src="https://raw.githubusercontent.com/dmlc/web-data/master/mxnet/social/youtube_red.svg?sanitize=true"

height="30px"/> Apache MXNet YouTube channel</a>

+</p>

+<p>

+<a href="https://www.linkedin.com/company/apache-mxnet"><img

src="https://raw.githubusercontent.com/dmlc/web-data/master/mxnet/social/linkedin.svg?sanitize=true"

height="30px"/> Apache MXNet on LinkedIn</a>

+</p>

+

History

-------

MXNet emerged from a collaboration by the authors of

[cxxnet](https://github.com/dmlc/cxxnet),

[minerva](https://github.com/dmlc/minerva), and

[purine2](https://github.com/purine/purine2). The project reflects what we have

learned from the past projects. MXNet combines aspects of each of these

projects to achieve flexibility, speed, and memory efficiency.

+

+Tianqi Chen, Mu Li, Yutian Li, Min Lin, Naiyan Wang, Minjie Wang, Tianjun Xiao,

+Bing Xu, Chiyuan Zhang, and Zheng Zhang.

+[MXNet: A Flexible and Efficient Machine Learning Library for Heterogeneous

Distributed

Systems](https://github.com/dmlc/web-data/raw/master/mxnet/paper/mxnet-learningsys.pdf).

+In Neural Information Processing Systems, Workshop on Machine Learning

Systems, 2015