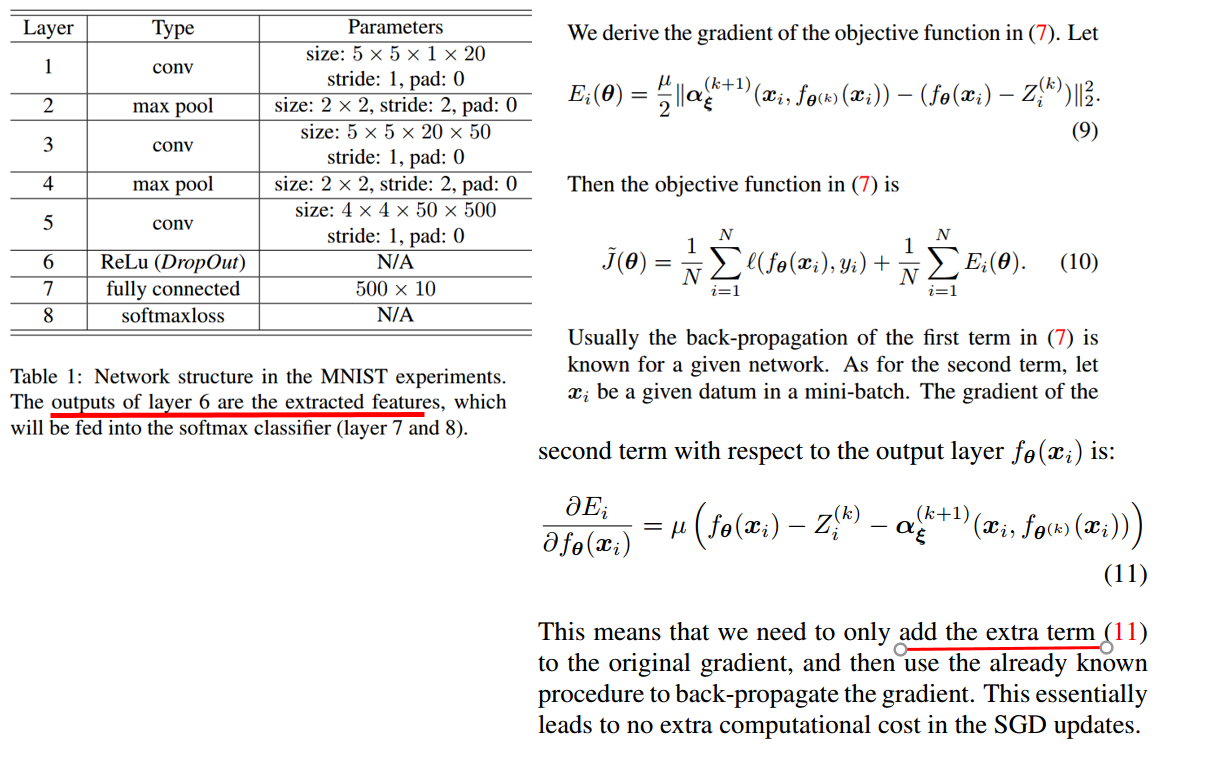

wang-qun-sen opened a new issue #11941: How to get the gradient of the loss function with respect to the ReLU layer's output feature?
URL: https://github.com/apache/incubator-mxnet/issues/11941

While reading the paper "LDMNet: Low Dimensional Manifold Regularized Neural Networks" (https://arxiv.org/abs/1711.06246?context=cs), I came across a different kind of regularization term, one computed from the output feature of an intermediate ReLU layer. I know it is not like weight decay, and that I have to modify the backward pass to include its gradient, but how do I do this?
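A minimal NumPy sketch of the general idea follows. It is not LDMNet's actual regularizer and not an MXNet API. If the total loss is L = data_loss + R(h), where h is the ReLU output, then the gradient arriving at h during backprop is the data-loss gradient plus dR/dh, and that sum is then masked by the ReLU derivative before flowing further back. The two-layer network, the function name `forward_backward`, and the penalty R(h) = 0.5 * lam * ||h||^2 are hypothetical stand-ins chosen only for illustration.

```python
import numpy as np


def forward_backward(x, y, W1, W2, lam):
    """Tiny 2-layer net: x -> W1 -> ReLU (h) -> W2 -> squared-error loss.

    lam weights a HYPOTHETICAL feature penalty 0.5 * lam * ||h||^2 on the
    ReLU output h (a stand-in, not the LDMNet regularizer).
    Returns (loss, dL/dh, dL/dW1, dL/dW2).
    """
    # Forward pass
    z = x @ W1                      # pre-activation
    h = np.maximum(z, 0.0)          # ReLU output feature
    out = h @ W2
    diff = out - y
    data_loss = 0.5 * np.sum(diff ** 2)
    reg_loss = 0.5 * lam * np.sum(h ** 2)
    loss = data_loss + reg_loss

    # Backward pass
    d_out = diff                    # dL/d(out) for the squared-error term
    dW2 = h.T @ d_out
    d_h = d_out @ W2.T              # gradient of the data loss w.r.t. h
    d_h = d_h + lam * h             # add dR/dh: the "modified backward" step
    d_z = d_h * (z > 0)             # backprop through the ReLU mask
    dW1 = x.T @ d_z
    return loss, d_h, dW1, dW2
```

In a framework with automatic differentiation (such as MXNet's `autograd`), writing R(h) into the loss with differentiable operations should yield the same extra `lam * h` term automatically, so a hand-written backward is typically needed only when R cannot be expressed that way.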

----------------------------------------------------------------
This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at: [email protected]

With regards,
Apache Git Services