ThomasDelteil commented on a change in pull request #13094: WIP: Simplifications and some fun stuff for the MNIST Gluon tutorial
URL: https://github.com/apache/incubator-mxnet/pull/13094#discussion_r237286426

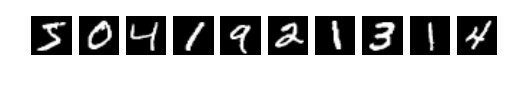

##########
File path: docs/tutorials/gluon/mnist.md
##########
@@ -1,333 +1,434 @@
-# Handwritten Digit Recognition
+# Hand-written Digit Recognition
-In this tutorial, we'll give you a step by step walk-through of how to build a hand-written digit classifier using the [MNIST](https://en.wikipedia.org/wiki/MNIST_database) dataset.
+In this tutorial, we'll give you a step-by-step walkthrough of building a hand-written digit classifier using the [MNIST](https://en.wikipedia.org/wiki/MNIST_database) dataset.
-MNIST is a widely used dataset for the hand-written digit classification task. It consists of 70,000 labeled 28x28 pixel grayscale images of hand-written digits. The dataset is split into 60,000 training images and 10,000 test images. There are 10 classes (one for each of the 10 digits). The task at hand is to train a model using the 60,000 training images and subsequently test its classification accuracy on the 10,000 test images.
+MNIST is a widely used dataset for the hand-written digit classification task. It consists of 70,000 labeled grayscale images of hand-written digits, each 28x28 pixels in size. The dataset is split into 60,000 training images and 10,000 test images. There are 10 classes (one for each of the 10 digits). The task at hand is to train a model that can correctly classify the images into the digits they represent. The 60,000 training images are used to fit the model, and its performance in terms of classification accuracy is subsequently validated on the 10,000 test images.
 **Figure 1:** Sample images from the MNIST dataset.
-This tutorial uses MXNet's new high-level interface, gluon package to implement MLP using
-imperative fashion.
-
-This is based on the Mnist tutorial with symbolic approach. You can find it [here](http://mxnet.io/tutorials/python/mnist.html).
+This tutorial uses MXNet's high-level *Gluon* interface to implement neural networks in an imperative fashion.
+It is based on [the corresponding tutorial written with the symbolic approach](https://mxnet.incubator.apache.org/tutorials/python/mnist.html).
 ## Prerequisites
-To complete this tutorial, we need:
-- MXNet. See the instructions for your operating system in [Setup and Installation](http://mxnet.io/install/index.html).
+To complete this tutorial, you need:
-- [Python Requests](http://docs.python-requests.org/en/master/) and [Jupyter Notebook](http://jupyter.org/index.html).
+- MXNet. See the instructions for your operating system in [Setup and Installation](https://mxnet.incubator.apache.org/install/index.html).
+- The Python [`requests`](http://docs.python-requests.org/en/master/) library.
+- (Optional) The [Jupyter Notebook](https://jupyter.org/index.html) software for interactively running the provided `.ipynb` file.
 ```
 $ pip install requests jupyter
 ```
 ## Loading Data
-Before we define the model, let's first fetch the [MNIST](http://yann.lecun.com/exdb/mnist/) dataset.
+The following code downloads the MNIST dataset to the default location (`.mxnet/datasets/mnist/` in your home directory) and creates `Dataset` objects `train_data` and `val_data` for training and validation, respectively.
+These objects can later be used to get one image or a batch of images at a time, together with their corresponding labels.
-The following source code downloads and loads the images and the corresponding labels into memory.
+We also immediately apply the `transform_first()` method and supply a function that moves the channel axis of the images to the beginning (`(28, 28, 1) -> (1, 28, 28)`), casts them to `float32` and rescales them from `[0, 255]` to `[0, 1]`.
+The name `transform_first` reflects the fact that these datasets contain images and labels, and that the transform should only be applied to the first of each `(image, label)` pair.
 ```python
 import mxnet as mx
-# Fixing the random seed
+# Select a fixed random seed for reproducibility
 mx.random.seed(42)
-mnist = mx.test_utils.get_mnist()
+def data_xform(data):
+    """Move channel axis to the beginning, cast to float32, and normalize to [0, 1]."""
+    return nd.moveaxis(data, 2, 0).astype('float32') / 255
+
+train_data = mx.gluon.data.vision.MNIST(train=True).transform_first(data_xform)
+val_data = mx.gluon.data.vision.MNIST(train=False).transform_first(data_xform)
 ```
-After running the above source code, the entire MNIST dataset should be fully loaded into memory. Note that for large datasets it is not feasible to pre-load the entire dataset first like we did here. What is needed is a mechanism by which we can quickly and efficiently stream data directly from the source. MXNet Data iterators come to the rescue here by providing exactly that. Data iterator is the mechanism by which we feed input data into an MXNet training algorithm and they are very simple to initialize and use and are optimized for speed. During training, we typically process training samples in small batches and over the entire training lifetime will end up processing each training example multiple times. In this tutorial, we'll configure the data iterator to feed examples in batches of 100. Keep in mind that each example is a 28x28 grayscale image and the corresponding label.
+Since the MNIST dataset is relatively small, the `MNIST` class loads it into memory all at once, but for larger datasets like ImageNet, this would no longer be possible.
+The Gluon `Dataset` class from which `MNIST` derives supports both cases.
+In general, `Dataset` and `DataLoader` (which we will encounter next) are the machinery in MXNet that provides a stream of input data to be consumed by a training algorithm, typically in batches of multiple data entities at once for better efficiency.
+In this tutorial, we will configure the data loader to feed examples in batches of 100.
+
+An image batch is commonly represented as a 4-D array with shape `(batch_size, num_channels, height, width)`.
+This convention is denoted by "BCHW", and it is the default in MXNet.

Review comment:
Can you replace "BCHW" with `NCHW`? Thanks!
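
For reference, the layout under discussion can be illustrated with a minimal sketch. It uses NumPy as a stand-in for MXNet's `nd` API (an assumption, made only to keep the snippet self-contained), and the random `images_hwc` array is hypothetical data standing in for MNIST samples. In `NCHW`, N is the batch size, C the number of channels, and H and W the image height and width.

```python
import numpy as np


def data_xform(data):
    """Move the channel axis to the front, cast to float32, rescale [0, 255] -> [0, 1]."""
    return np.moveaxis(data, 2, 0).astype('float32') / 255


# Hypothetical stand-in for MNIST samples: 100 random single-channel
# 28x28 uint8 images, each stored in HWC order as (28, 28, 1).
rng = np.random.default_rng(42)
images_hwc = rng.integers(0, 256, size=(100, 28, 28, 1), dtype=np.uint8)

# Transform every image from HWC to CHW, then stack along a new leading
# axis N to obtain an NCHW batch.
batch = np.stack([data_xform(img) for img in images_hwc])

print(batch.shape)  # (N, C, H, W)
print(batch.dtype)
```

With a batch size of 100, the leading axis N has length 100, which is why the layout is usually written `NCHW` rather than "BCHW".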