tobezhou33 opened a new issue #1593: URL: https://github.com/apache/incubator-seatunnel/issues/1593

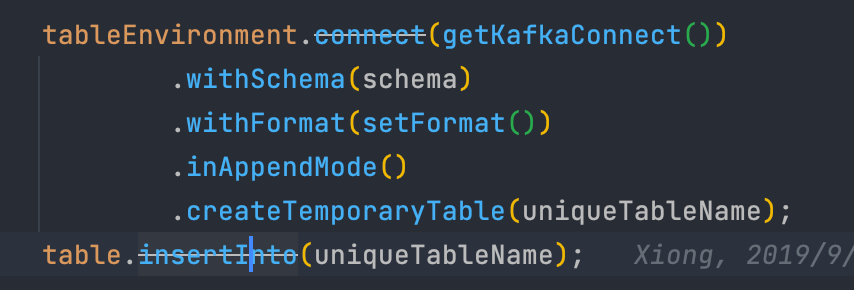

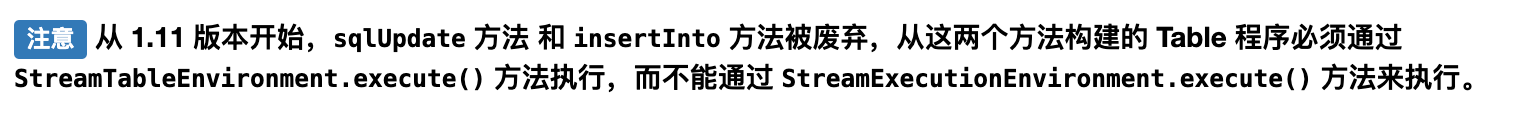

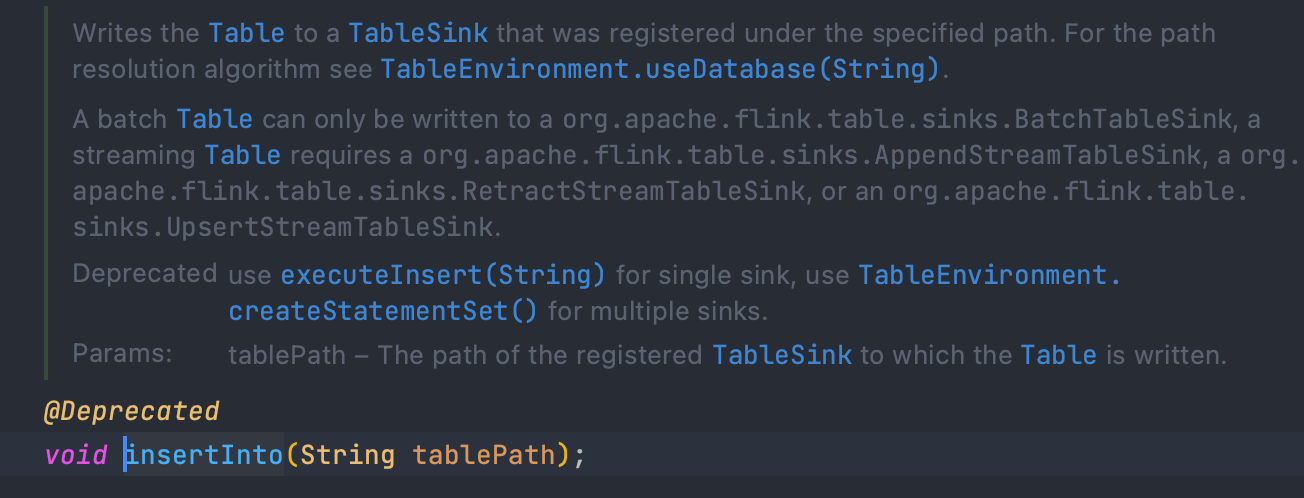

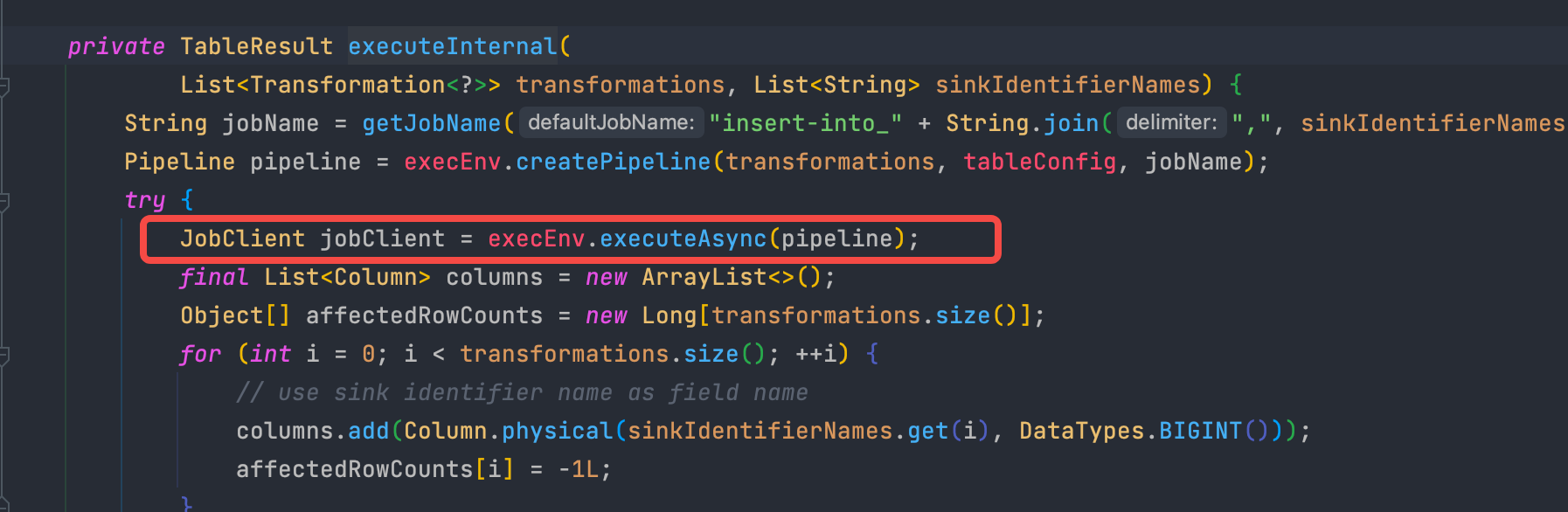

### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/incubator-seatunnel/issues?q=is%3Aissue+label%3A%22bug%22) and found no similar issues.

### What happened

When I tested a Kafka-to-Kafka task, I found no output.

I found that since Flink 1.11, calling `table.insertInto(uniqueTableName);` and then `flinkEnvironment.getStreamExecutionEnvironment().execute(flinkEnvironment.getJobName());` does not work; the job must be submitted with `StreamTableEnvironment.execute()` instead.

I see that `executeInsert(String)` can be used for a single sink, so I tried that as well, but it submits two jobs, because every `executeXXX` method on `Table` and `TableEnvironment` ends up calling `executeAsync` for a job named `insert-into_XXXXX`.

So, for the Kafka table sink, could we use the DataStream API to add a Kafka sink, or use `StreamTableEnvironment.execute()` in `FlinkStreamExecution.java` to submit the job?

### SeaTunnel Version

latest

### SeaTunnel Config

```conf
env {
  # You can set flink configuration here
  execution.parallelism = 1
  execution.planner = blink
  #execution.checkpoint.interval = 10000
  #execution.checkpoint.data-uri = "hdfs://localhost:9000/checkpoint"
}

source {
  # This is a example source plugin **only for test and demonstrate the feature source plugin**
  KafkaTableStream {
    result_table_name = test
    topics = test
    consumer.group.id = "seatunnel#2"
    consumer.bootstrap.servers = "127.0.0.1:9092"
    schema = "{\"name\":111,\"age\":\"aaaa\"}"
    format.type = json
    format.field-delimiter = ","
    format.allow-comments = "true"
    format.ignore-parse-errors = "true"
  }
}

transform {
}

sink {
  KafkaTable {
    producer.bootstrap.servers = "127.0.0.1:9092"
    topics = sink
  }
}
```

### Running Command

```shell
ide
```

### Error Exception

```log
.
```

### Flink or Spark Version

1.13

### Java or Scala Version

8

### Screenshots

_No response_

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.

To unsubscribe, e-mail: [email protected]

For queries about this service, please contact Infrastructure at: [email protected]