Hisoka-X opened a new issue, #2426: URL: https://github.com/apache/incubator-seatunnel/issues/2426

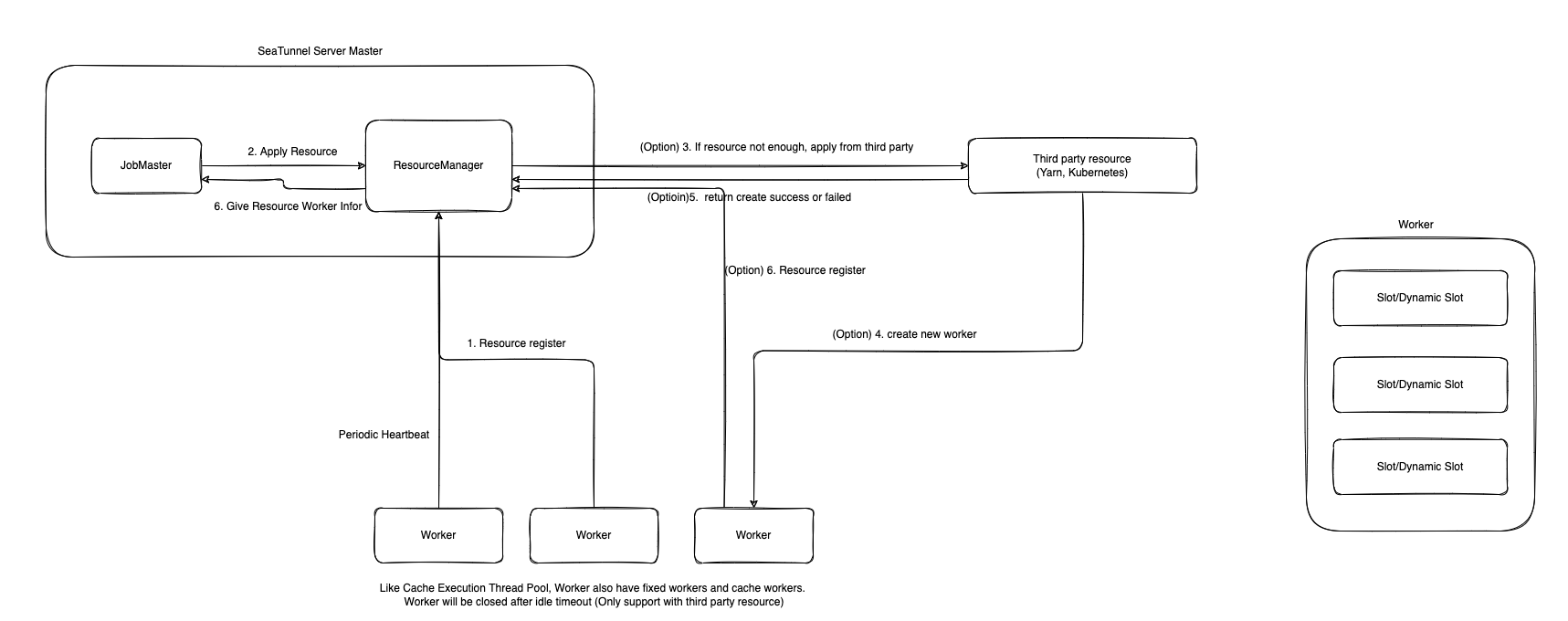

### Search before asking

- [X] I had searched in the [feature](https://github.com/apache/incubator-seatunnel/issues?q=is%3Aissue+label%3A%22Feature%22) and found no similar feature requirement.

### Description

### Purpose Of Design

1. Provide the resource addresses required to execute a Job.
2. Dynamically scale nodes in third-party resource management services.
3. Allow each Worker to run tasks from multiple different Jobs, making full use of its resources.
4. Recover Workers promptly when an abnormality occurs.

### Resource Definition

#### Unit resources

````java
// A Worker's resources are divided into Slots
public class Worker {
    private Slot[] slots;
}
````

````java
// Each Slot holds a share of its Worker's resources
public class Slot {
    private Resource resource;
}
````

#### Unit resource definition

The amount of resources each slot should have:

````java
public class Resource {
    private Memory memorySize;
    // only takes effect on Kubernetes
    private CPU cpu;
}
````

SeaTunnel supports allocating Worker memory in every deployment. When running on Kubernetes, it can also allocate CPU to each Worker.

### Implementation process

#### Standalone

1. The Master and Workers start. Each Worker registers its information with the ResourceManager, which monitors its status through periodic heartbeats.
2. The JobMaster requests resources from the ResourceManager.
3. If the resources are sufficient, the corresponding Worker information is provided to the JobMaster. If not, the JobMaster is told that there are not enough resources.
4. After the JobMaster receives the response, it decides whether to start scheduling the tasks or throw an exception.

#### Third Party Resource Manager

1. The Master and Workers start. Each Worker registers its information with the ResourceManager, which monitors its status through periodic heartbeats.
2. The JobMaster requests resources from the ResourceManager.
3. If the resources are sufficient, the corresponding Worker information is provided to the JobMaster. If not, resources are requested from the third-party resource management system and new Workers are started.
4. The new Workers register with the ResourceManager.
5. After the JobMaster receives the response, it decides whether to start scheduling the tasks or throw an exception.
6. After the JobMaster finishes executing, the resources are returned to the ResourceManager.
7. Based on its policy, the ResourceManager decides whether to release a Worker back to the third-party resource management system or keep it for the next task.

#### The specific process is as follows:

### Worker's slot allocation

Each Worker needs to divide its resources into Slots in which Jobs can run. There are two strategies for splitting a Worker into Slots:

1. When the Worker starts, its resources are divided evenly into Slots according to the configuration.
2. Dynamic allocation: when a task requests resources, a Slot is created on demand. Its size is determined by the resources the task requires. This maximizes resource utilization and improves the success rate of scheduling tasks that need large amounts of resources.

### Usage Scenario

_No response_

### Related issues

_No response_

### Are you willing to submit a PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)
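The resource model and the two slot-allocation strategies described in this proposal can be sketched in Java. This is a minimal, hypothetical sketch and not SeaTunnel's implementation. The following names are illustrative assumptions, not APIs from the proposal:

- `SlotAllocationSketch`, `covers`, `splitEvenly`, `takeFreeSlot`, `carveSlot` and `requestSlot`.
- The `memoryMb`/`cpuMillis` fields, which stand in for the proposal's `Memory` and `CPU` types as plain numbers.

Heartbeats, slot release and third-party scaling are left out.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;

public class SlotAllocationSketch {
    // Hypothetical stand-in for the proposal's Resource: Memory/CPU simplified to numbers.
    static final class Resource {
        final long memoryMb; // memory is allocated in every deployment
        final int cpuMillis; // per the proposal, CPU would only be enforced on Kubernetes
        Resource(long memoryMb, int cpuMillis) {
            this.memoryMb = memoryMb;
            this.cpuMillis = cpuMillis;
        }
        boolean covers(Resource req) {
            return memoryMb >= req.memoryMb && cpuMillis >= req.cpuMillis;
        }
        Resource minus(Resource req) {
            return new Resource(memoryMb - req.memoryMb, cpuMillis - req.cpuMillis);
        }
    }

    // A Slot is a slice of one Worker's resources, held by at most one Job at a time.
    static final class Slot {
        final Resource resource;
        String jobId; // null while the Slot is free
        Slot(Resource resource) { this.resource = resource; }
    }

    static final class Worker {
        final String id;
        final List<Slot> slots = new ArrayList<>();
        Resource unassigned; // resources not yet carved into any Slot
        Worker(String id, Resource total) { this.id = id; this.unassigned = total; }

        // Strategy 1: split the Worker evenly into n Slots at startup (n from configuration).
        void splitEvenly(int n) {
            Resource share = new Resource(unassigned.memoryMb / n, unassigned.cpuMillis / n);
            for (int i = 0; i < n; i++) {
                slots.add(new Slot(share));
                unassigned = unassigned.minus(share);
            }
        }

        // With pre-split Slots, a request can only use a free Slot that is already large enough.
        Optional<Slot> takeFreeSlot(Resource req, String jobId) {
            for (Slot s : slots) {
                if (s.jobId == null && s.resource.covers(req)) {
                    s.jobId = jobId;
                    return Optional.of(s);
                }
            }
            return Optional.empty();
        }

        // Strategy 2: create a Slot sized exactly to the request from the unassigned resources.
        Optional<Slot> carveSlot(Resource req, String jobId) {
            if (!unassigned.covers(req)) return Optional.empty();
            unassigned = unassigned.minus(req);
            Slot s = new Slot(req);
            s.jobId = jobId;
            slots.add(s);
            return Optional.of(s);
        }
    }

    // Minimal ResourceManager: Workers register, and a JobMaster asks it for a Slot.
    static final class ResourceManager {
        final List<Worker> workers = new ArrayList<>();
        final boolean dynamic;
        ResourceManager(boolean dynamic) { this.dynamic = dynamic; }
        void register(Worker w) { workers.add(w); }

        // An empty result means "not enough resources": the JobMaster may throw, or a
        // third-party resource manager could be asked to start another Worker.
        Optional<Slot> requestSlot(Resource req, String jobId) {
            for (Worker w : workers) {
                Optional<Slot> slot = dynamic ? w.carveSlot(req, jobId) : w.takeFreeSlot(req, jobId);
                if (slot.isPresent()) return slot;
            }
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        Resource large = new Resource(3072, 2000);
        Worker w1 = new Worker("worker-1", new Resource(4096, 4000));
        w1.splitEvenly(4); // four Slots of 1024 MB / 1000 millicores each
        ResourceManager evenRm = new ResourceManager(false);
        evenRm.register(w1);
        System.out.println("even split: " + evenRm.requestSlot(large, "job-a").isPresent());

        ResourceManager dynamicRm = new ResourceManager(true);
        dynamicRm.register(new Worker("worker-2", new Resource(4096, 4000)));
        System.out.println("dynamic: " + dynamicRm.requestSlot(large, "job-a").isPresent());
    }
}
```

Running `main` prints `even split: false` and then `dynamic: true`. After an even split into four 1024 MB Slots, a 3072 MB request cannot be placed. Dynamic allocation carves a matching Slot from an identical 4096 MB Worker. This illustrates the large-task scheduling benefit the proposal attributes to the dynamic strategy.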