hailin0 opened a new issue, #2635: URL: https://github.com/apache/incubator-seatunnel/issues/2635

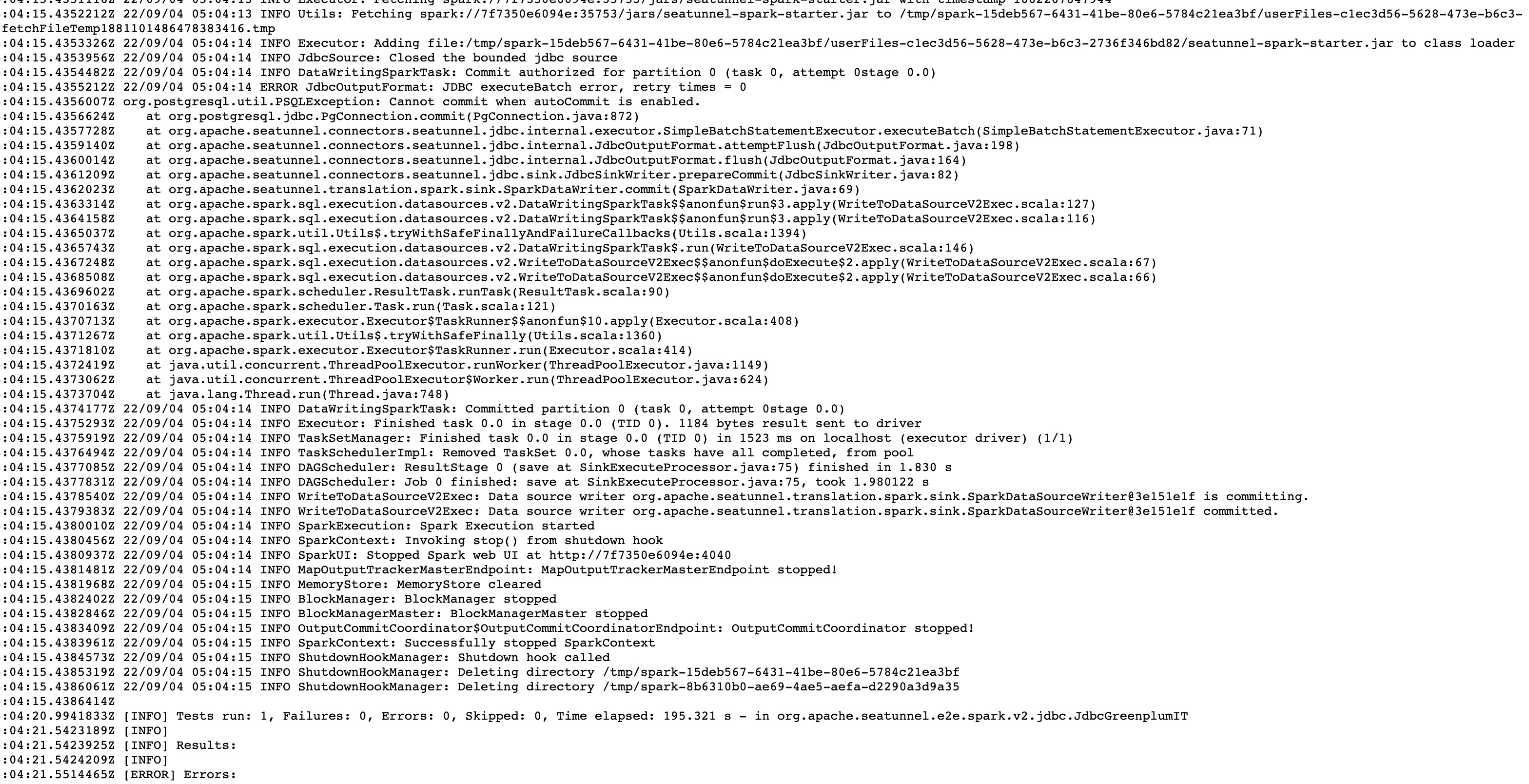

### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/incubator-seatunnel/issues?q=is%3Aissue+label%3A%22bug%22) and found no similar issues.

### What happened

connector-jdbc throws an exception: Cannot commit when autoCommit is enabled

### SeaTunnel Version

dev branch

### SeaTunnel Config

```conf
https://github.com/apache/incubator-seatunnel/blob/dev/seatunnel-e2e/seatunnel-spark-connector-v2-e2e/src/test/resources/jdbc/jdbc_greenplum_source_and_sink.conf
```

### Running Command

```shell
https://github.com/apache/incubator-seatunnel/blob/dev/seatunnel-e2e/seatunnel-spark-connector-v2-e2e/src/test/java/org/apache/seatunnel/e2e/spark/v2/jdbc/JdbcGreenplumIT.java#L87
```

### Error Exception

```log
2022-09-04T05:04:15.4355212Z 22/09/04 05:04:14 ERROR JdbcOutputFormat: JDBC executeBatch error, retry times = 0
2022-09-04T05:04:15.4356007Z org.postgresql.util.PSQLException: Cannot commit when autoCommit is enabled.
2022-09-04T05:04:15.4356624Z     at org.postgresql.jdbc.PgConnection.commit(PgConnection.java:872)
2022-09-04T05:04:15.4357728Z     at org.apache.seatunnel.connectors.seatunnel.jdbc.internal.executor.SimpleBatchStatementExecutor.executeBatch(SimpleBatchStatementExecutor.java:71)
2022-09-04T05:04:15.4359140Z     at org.apache.seatunnel.connectors.seatunnel.jdbc.internal.JdbcOutputFormat.attemptFlush(JdbcOutputFormat.java:198)
2022-09-04T05:04:15.4360014Z     at org.apache.seatunnel.connectors.seatunnel.jdbc.internal.JdbcOutputFormat.flush(JdbcOutputFormat.java:164)
2022-09-04T05:04:15.4361209Z     at org.apache.seatunnel.connectors.seatunnel.jdbc.sink.JdbcSinkWriter.prepareCommit(JdbcSinkWriter.java:82)
2022-09-04T05:04:15.4362023Z     at org.apache.seatunnel.translation.spark.sink.SparkDataWriter.commit(SparkDataWriter.java:69)
2022-09-04T05:04:15.4363314Z     at org.apache.spark.sql.execution.datasources.v2.DataWritingSparkTask$$anonfun$run$3.apply(WriteToDataSourceV2Exec.scala:127)
2022-09-04T05:04:15.4364158Z     at org.apache.spark.sql.execution.datasources.v2.DataWritingSparkTask$$anonfun$run$3.apply(WriteToDataSourceV2Exec.scala:116)
2022-09-04T05:04:15.4365037Z     at org.apache.spark.util.Utils$.tryWithSafeFinallyAndFailureCallbacks(Utils.scala:1394)
2022-09-04T05:04:15.4365743Z     at org.apache.spark.sql.execution.datasources.v2.DataWritingSparkTask$.run(WriteToDataSourceV2Exec.scala:146)
2022-09-04T05:04:15.4367248Z     at org.apache.spark.sql.execution.datasources.v2.WriteToDataSourceV2Exec$$anonfun$doExecute$2.apply(WriteToDataSourceV2Exec.scala:67)
2022-09-04T05:04:15.4368508Z     at org.apache.spark.sql.execution.datasources.v2.WriteToDataSourceV2Exec$$anonfun$doExecute$2.apply(WriteToDataSourceV2Exec.scala:66)
2022-09-04T05:04:15.4369602Z     at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:90)
2022-09-04T05:04:15.4370163Z     at org.apache.spark.scheduler.Task.run(Task.scala:121)
2022-09-04T05:04:15.4370713Z     at org.apache.spark.executor.Executor$TaskRunner$$anonfun$10.apply(Executor.scala:408)
2022-09-04T05:04:15.4371267Z     at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1360)
2022-09-04T05:04:15.4371810Z     at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:414)
2022-09-04T05:04:15.4372419Z     at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
2022-09-04T05:04:15.4373062Z     at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
2022-09-04T05:04:15.4373704Z     at java.lang.Thread.run(Thread.java:748)
```

### Flink or Spark Version

dev branch e2e-testcase

### Java or Scala Version

_No response_

### Screenshots

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.
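
For reference, the stack trace shows `PgConnection.commit` being called from `SimpleBatchStatementExecutor.executeBatch` while the connection is still in auto-commit mode. One possible way to avoid the error, sketched below, is to commit only when auto-commit is off. This is an illustration, not necessarily the fix the project adopts: the class `CommitGuardSketch` and the methods `commitIfManual` and `fakeConnection` are hypothetical names, and the proxy-based fake connection only imitates the driver behavior seen in the log so that the sketch runs without a database.

```java
import java.lang.reflect.Proxy;
import java.sql.Connection;
import java.sql.SQLException;
import java.util.concurrent.atomic.AtomicInteger;

public class CommitGuardSketch {

    /**
     * Hypothetical helper: commits only when the connection is in manual-commit mode.
     * Returns true if an explicit commit was issued.
     */
    public static boolean commitIfManual(Connection connection) throws SQLException {
        if (connection.getAutoCommit()) {
            // With auto-commit on, each statement is already committed by the driver.
            return false;
        }
        connection.commit();
        return true;
    }

    /**
     * Hypothetical stand-in for a real JDBC connection. It imitates the behavior
     * from the log: commit() fails while auto-commit is enabled.
     */
    public static Connection fakeConnection(boolean autoCommit, AtomicInteger commits) {
        return (Connection) Proxy.newProxyInstance(
                CommitGuardSketch.class.getClassLoader(),
                new Class<?>[] {Connection.class},
                (proxy, method, methodArgs) -> {
                    String name = method.getName();
                    if ("getAutoCommit".equals(name)) {
                        return autoCommit;
                    }
                    if ("commit".equals(name)) {
                        if (autoCommit) {
                            throw new SQLException("Cannot commit when autoCommit is enabled.");
                        }
                        commits.incrementAndGet();
                        return null;
                    }
                    throw new UnsupportedOperationException(name);
                });
    }

    public static void main(String[] args) throws SQLException {
        AtomicInteger commits = new AtomicInteger();
        boolean withAutoCommit = commitIfManual(fakeConnection(true, commits));
        boolean withManualCommit = commitIfManual(fakeConnection(false, commits));
        System.out.println("autoCommit=true  -> explicit commit issued: " + withAutoCommit);
        System.out.println("autoCommit=false -> explicit commit issued: " + withManualCommit);
        System.out.println("total explicit commits: " + commits.get());
    }
}
```

An alternative under the same assumptions would be to call `connection.setAutoCommit(false)` when the connection is created, so that the explicit `commit()` after each batch is always valid.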