itsallsame opened a new issue, #3059: URL: https://github.com/apache/incubator-seatunnel/issues/3059

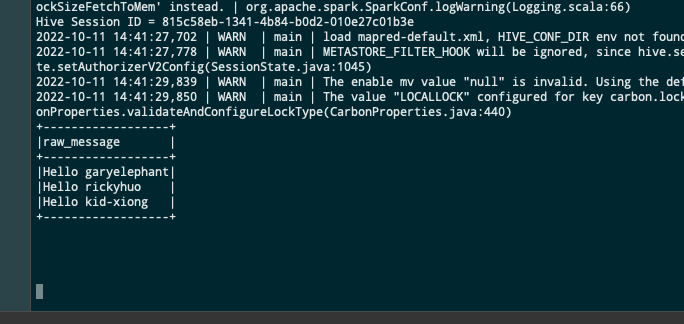

### Search before asking - [X] I had searched in the [issues](https://github.com/apache/incubator-seatunnel/issues?q=is%3Aissue+label%3A%22bug%22) and found no similar issues. ### What happened running Official examples(https://seatunnel.incubator.apache.org/docs/2.1.3/start/local). In batch mode, the seatunnel did not stop after the completion of data transmission. at production environment, has same problem. ### SeaTunnel Version 2.1.3 ### SeaTunnel Config ```conf # # Licensed to the Apache Software Foundation (ASF) under one or more # contributor license agreements. See the NOTICE file distributed with # this work for additional information regarding copyright ownership. # The ASF licenses this file to You under the Apache License, Version 2.0 # (the "License"); you may not use this file except in compliance with # the License. You may obtain a copy of the License at # # http://www.apache.org/licenses/LICENSE-2.0 # # Unless required by applicable law or agreed to in writing, software # distributed under the License is distributed on an "AS IS" BASIS, # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. # See the License for the specific language governing permissions and # limitations under the License. # ###### ###### This config file is a demonstration of batch processing in SeaTunnel config ###### env { # You can set spark configuration here # see available properties defined by spark: https://spark.apache.org/docs/latest/configuration.html#available-properties spark.app.name = "SeaTunnel" spark.executor.instances = 2 spark.executor.cores = 1 spark.executor.memory = "1g" } source { # This is a example input plugin **only for test and demonstrate the feature input plugin** Fake { result_table_name = "my_dataset" } # You can also use other input plugins, such as file # file { # result_table_name = "accesslog" # path = "hdfs://hadoop-cluster-01/nginx/accesslog" # format = "json" # } # If you would like to get more information about how to configure seatunnel and see full list of input plugins, # please go to https://seatunnel.apache.org/docs/spark/configuration/source-plugins/Fake } transform { # split data by specific delimiter # you can also use other filter plugins, such as sql # sql { # sql = "select * from accesslog where request_time > 1000" # } # If you would like to get more information about how to configure seatunnel and see full list of filter plugins, # please go to https://seatunnel.apache.org/docs/spark/configuration/transform-plugins/Sql } sink { # choose stdout output plugin to output data to console Console {} # you can also use other output plugins, such as hdfs # hdfs { # path = "hdfs://hadoop-cluster-01/nginx/accesslog_processed" # save_mode = "append" # } # If you would like to get more information about how to configure seatunnel and see full list of output plugins, # please go to https://seatunnel.apache.org/docs/spark/configuration/sink-plugins/Console } ``` ### Running Command ```shell cd apache-seatunnel-incubating-2.1.3 ./bin/start-seatunnel-spark.sh \ --master local[4] \ --deploy-mode client \ --config ./config/spark.batch.conf.template ``` ### Error Exception ```log no error, all log. ./bin/start-seatunnel-spark.sh --master local[4] --deploy-mode client --config ./config/spark.batch.conf.template log4j:WARN No appenders could be found for logger (org.apache.seatunnel.core.base.config.ConfigBuilder). log4j:WARN Please initialize the log4j system properly. log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info. Execute SeaTunnel Spark Job: ${SPARK_HOME}/bin/spark-submit --class "org.apache.seatunnel.core.spark.SeatunnelSpark" --name "SeaTunnel" --master "local[4]" --deploy-mode "client" --jars "/home/data/just/seatunnel/apache-seatunnel-incubating-2.1.3/plugins/mysql/lib/mysql-connector-java-8.0.21.jar,/home/data/just/seatunnel/apache-seatunnel-incubating-2.1.3/connectors/spark/seatunnel-connector-spark-fake-2.1.3.jar,/home/data/just/seatunnel/apache-seatunnel-incubating-2.1.3/connectors/spark/seatunnel-connector-spark-console-2.1.3.jar" --conf "spark.executor.memory=1g" --conf "spark.executor.cores=1" --conf "spark.app.name=SeaTunnel" --conf "spark.executor.instances=2" /home/data/just/seatunnel/apache-seatunnel-incubating-2.1.3/lib/seatunnel-core-spark.jar --master local[4] --deploy-mode client --config ./config/spark.batch.conf.template 2022-10-11 14:41:23,967 | WARN | main | The configuration key 'spark.reducer.maxReqSizeShuffleToMem' has been deprecated as of Spark 2.3 and may be removed in the future. Please use the new key 'spark.maxRemoteBlockSizeFetchToMem' instead. | org.apache.spark.SparkConf.logWarning(Logging.scala:66) 2022-10-11 14:41:24,897 | WARN | main | The configuration key 'spark.reducer.maxReqSizeShuffleToMem' has been deprecated as of Spark 2.3 and may be removed in the future. Please use the new key 'spark.maxRemoteBlockSizeFetchToMem' instead. | org.apache.spark.SparkConf.logWarning(Logging.scala:66) 2022-10-11 14:41:24,911 | WARN | main | The configuration key 'spark.reducer.maxReqSizeShuffleToMem' has been deprecated as of Spark 2.3 and may be removed in the future. Please use the new key 'spark.maxRemoteBlockSizeFetchToMem' instead. | org.apache.spark.SparkConf.logWarning(Logging.scala:66) 2022-10-11 14:41:24,912 | WARN | main | The configuration key 'spark.reducer.maxReqSizeShuffleToMem' has been deprecated as of Spark 2.3 and may be removed in the future. Please use the new key 'spark.maxRemoteBlockSizeFetchToMem' instead. | org.apache.spark.SparkConf.logWarning(Logging.scala:66) 2022-10-11 14:41:24,937 | WARN | main | Note that spark.local.dir will be overridden by the value set by the cluster manager (via SPARK_LOCAL_DIRS in mesos/standalone/kubernetes and LOCAL_DIRS in YARN). | org.apache.spark.SparkConf.logWarning(Logging.scala:66) 2022-10-11 14:41:24,941 | WARN | main | Detected deprecated memory fraction settings: [spark.shuffle.memoryFraction, spark.storage.memoryFraction, spark.storage.unrollFraction]. As of Spark 1.6, execution and storage memory management are unified. All memory fractions used in the old model are now deprecated and no longer read. If you wish to use the old memory management, you may explicitly enable `spark.memory.useLegacyMode` (not recommended). | org.apache.spark.SparkConf.logWarning(Logging.scala:66) 2022-10-11 14:41:25,362 | WARN | main | The configuration key 'spark.reducer.maxReqSizeShuffleToMem' has been deprecated as of Spark 2.3 and may be removed in the future. Please use the new key 'spark.maxRemoteBlockSizeFetchToMem' instead. | org.apache.spark.SparkConf.logWarning(Logging.scala:66) 2022-10-11 14:41:25,367 | WARN | main | The configuration key 'spark.reducer.maxReqSizeShuffleToMem' has been deprecated as of Spark 2.3 and may be removed in the future. Please use the new key 'spark.maxRemoteBlockSizeFetchToMem' instead. | org.apache.spark.SparkConf.logWarning(Logging.scala:66) 2022-10-11 14:41:25,368 | WARN | main | The configuration key 'spark.reducer.maxReqSizeShuffleToMem' has been deprecated as of Spark 2.3 and may be removed in the future. Please use the new key 'spark.maxRemoteBlockSizeFetchToMem' instead. | org.apache.spark.SparkConf.logWarning(Logging.scala:66) Hive Session ID = 815c58eb-1341-4b84-b0d2-010e27c01b3e 2022-10-11 14:41:27,702 | WARN | main | load mapred-default.xml, HIVE_CONF_DIR env not found! | org.apache.hadoop.hive.ql.session.SessionState.loadMapredDefaultXml(SessionState.java:1460) 2022-10-11 14:41:27,778 | WARN | main | METASTORE_FILTER_HOOK will be ignored, since hive.security.authorization.manager is set to instance of HiveAuthorizerFactory. | org.apache.hadoop.hive.ql.session.SessionState.setAuthorizerV2Config(SessionState.java:1045) 2022-10-11 14:41:29,839 | WARN | main | The enable mv value "null" is invalid. Using the default value "true" | org.apache.carbondata.core.util.CarbonProperties.validateEnableMV(CarbonProperties.java:511) 2022-10-11 14:41:29,850 | WARN | main | The value "LOCALLOCK" configured for key carbon.lock.type is invalid for current file system. Use the default value HDFSLOCK instead. | org.apache.carbondata.core.util.CarbonProperties.validateAndConfigureLockType(CarbonProperties.java:440) +------------------+ |raw_message | +------------------+ |Hello garyelephant| |Hello rickyhuo | |Hello kid-xiong | +------------------+ ``` ### Flink or Spark Version spark2.4.5 ### Java or Scala Version java1.8 ### Screenshots  ### Are you willing to submit PR? - [ ] Yes I am willing to submit a PR! ### Code of Conduct - [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct) -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@seatunnel.apache.org.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org