czwanglei opened a new issue, #3314: URL: https://github.com/apache/incubator-seatunnel/issues/3314

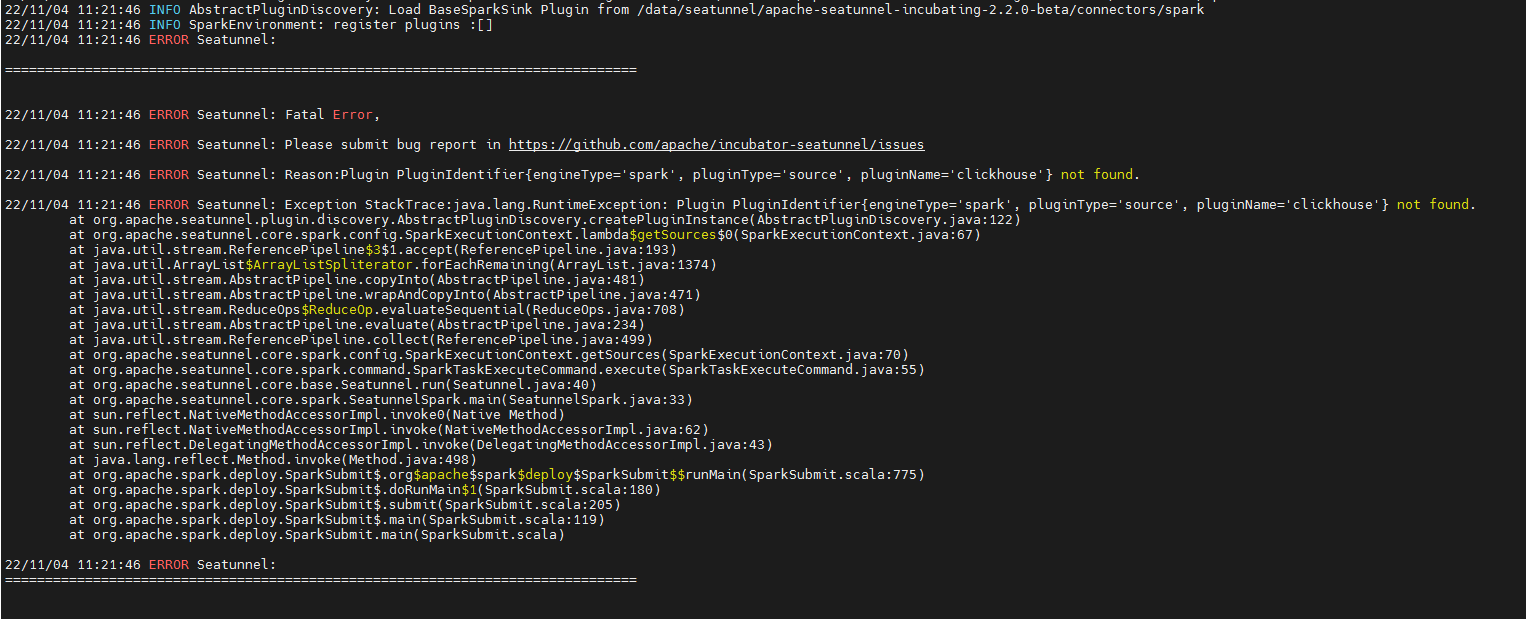

### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/incubator-seatunnel/issues?q=is%3Aissue+label%3A%22bug%22) and found no similar issues.

### What happened

Exception in thread "main" java.lang.RuntimeException: Plugin PluginIdentifier{engineType='spark', pluginType='source', pluginName='clickhouse'} not found.

### SeaTunnel Version

apache-seatunnel-incubating-2.2.0-beta

### SeaTunnel Config

```conf
#
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements.  See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License.  You may obtain a copy of the License at
#
#    http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
#

######
###### This config file is a demonstration of batch processing in SeaTunnel config
######

env {
  # You can set spark configuration here
  # see available properties defined by spark: https://spark.apache.org/docs/latest/configuration.html#available-properties
  spark.app.name = "SeaTunnel"
  spark.executor.instances = 2
  spark.executor.cores = 1
  spark.executor.memory = "1g"
}

source {
  clickhouse {
    host = "10.111.111.123:8123"
    database = "test"
    sql = " with now() as nowDay select aa.one_id,bb.tenant_code from ( select one_id,max(request_time) as lastTime,min(request_time) as firstTime from dwm_sdk_collection_info_oneid_di group by one_id ) as aa left join ( select one_id,tenant_code,count(dt)as nums from ( select one_id,tenant_code,dt from dwm_sdk_collection_info_oneid_di group by one_id,tenant_code,dt )group by one_id,tenant_code ) as bb on aa.one_id=bb.one_id where ( dateDiff('day',aa.firstTime,aa.lastTime)>60 and dateDiff('day',aa.lastTime,nowDay)>30 ) or ( dateDiff('day',aa.firstTime,aa.lastTime)>60 and bb.nums<=10 ) or ( dateDiff('day',aa.firstTime,aa.lastTime)>120 and dateDiff('day',aa.lastTime,nowDay)>=60 )"
    username = "default"
    password = "ABCD1234"
    result_table_name = "dwm_sdk_collection_info_oneid_di_tmp"
  }
}

transform {
  # split data by specific delimiter

  # you can also use other filter plugins, such as sql
  sql {
    sql = "select * from dwm_sdk_collection_info_oneid_di_tmp"
  }

  # If you would like to get more information about how to configure seatunnel and see full list of filter plugins,
  # please go to https://seatunnel.apache.org/docs/spark/configuration/transform-plugins/Sql
}

sink {
  # choose stdout output plugin to output data to console
  Console {}

  # you can also use other output plugins, such as hdfs
  # hdfs {
  #   path = "hdfs://hadoop-cluster-01/nginx/accesslog_processed"
  #   save_mode = "append"
  # }

  # If you would like to get more information about how to configure seatunnel and see full list of output plugins,
  # please go to https://seatunnel.apache.org/docs/spark/configuration/sink-plugins/Console
}
```

### Running Command

```shell
/data/seatunnel/apache-seatunnel-incubating-2.2.0-beta//bin/start-seatunnel-spark.sh --master local[2] --deploy-mode client --config /data/seatunnel/apache-seatunnel-incubating-2.2.0-beta/config/spark.batch.conf.clickhouse
```

### Error Exception

```log
Exception in thread "main" java.lang.RuntimeException: Plugin PluginIdentifier{engineType='spark', pluginType='source', pluginName='clickhouse'} not found.
    at org.apache.seatunnel.plugin.discovery.AbstractPluginDiscovery.createPluginInstance(AbstractPluginDiscovery.java:122)
    at org.apache.seatunnel.core.spark.config.SparkExecutionContext.lambda$getSources$0(SparkExecutionContext.java:67)
    at java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:193)
    at java.util.ArrayList$ArrayListSpliterator.forEachRemaining(ArrayList.java:1374)
    at java.util.stream.AbstractPipeline.copyInto(AbstractPipeline.java:481)
    at java.util.stream.AbstractPipeline.wrapAndCopyInto(AbstractPipeline.java:471)
    at java.util.stream.ReduceOps$ReduceOp.evaluateSequential(ReduceOps.java:708)
    at java.util.stream.AbstractPipeline.evaluate(AbstractPipeline.java:234)
    at java.util.stream.ReferencePipeline.collect(ReferencePipeline.java:499)
    at org.apache.seatunnel.core.spark.config.SparkExecutionContext.getSources(SparkExecutionContext.java:70)
    at org.apache.seatunnel.core.spark.command.SparkTaskExecuteCommand.execute(SparkTaskExecuteCommand.java:55)
    at org.apache.seatunnel.core.base.Seatunnel.run(Seatunnel.java:40)
    at org.apache.seatunnel.core.spark.SeatunnelSpark.main(SeatunnelSpark.java:33)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:498)
    at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:775)
    at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:180)
    at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:205)
    at org.apache.spark.deploy.Spar
```

### Flink or Spark Version

spark-2.2.3-bin-hadoop2.7

### Java or Scala Version

_No response_

### Screenshots

### Are you willing to submit PR?

- [ ] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)