bravekong opened a new issue, #3380: URL: https://github.com/apache/incubator-seatunnel/issues/3380

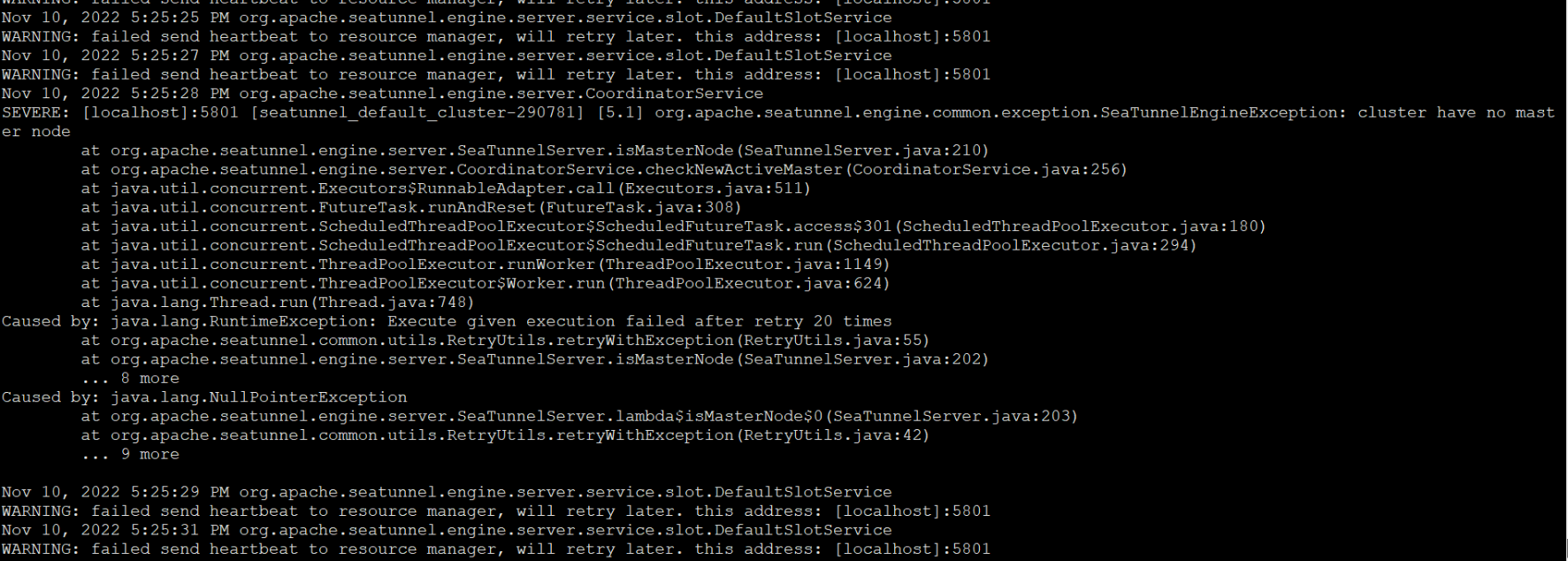

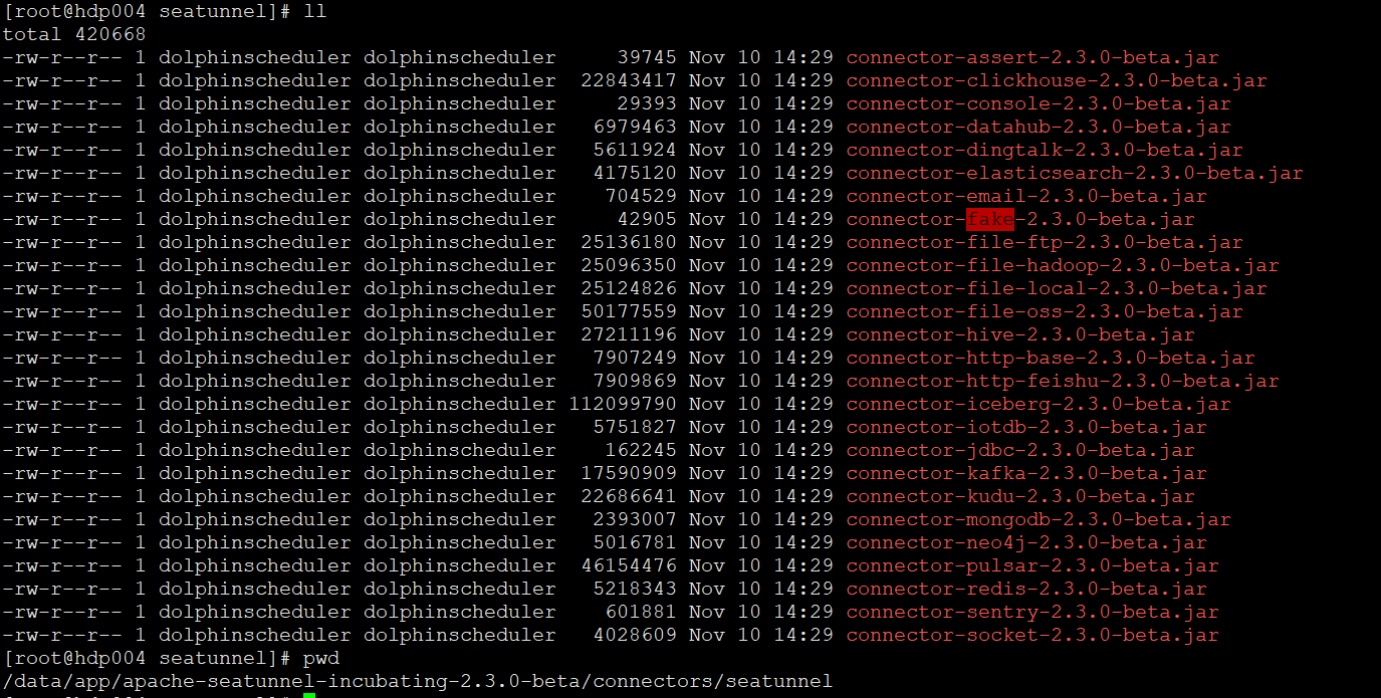

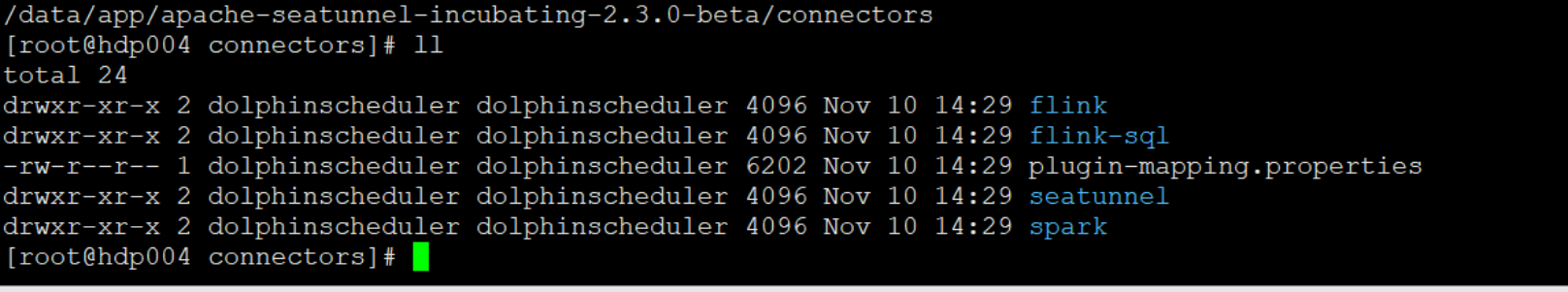

### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/incubator-seatunnel/issues?q=is%3Aissue+label%3A%22bug%22) and found no similar issues.

### What happened

When I run the SeaTunnel Engine following the instructions on the official website, I get this error: `org.apache.seatunnel.engine.common.exception.SeaTunnelEngineException: cluster have no master node`. The environment and the dependency jars are already in place.

### SeaTunnel Version

2.3.0-beta

### SeaTunnel Config

```conf
env {
  execution.parallelism = 1
  job.mode = "BATCH"
}

source {
  FakeSource {
    result_table_name = "fake"
    row.num = 16
    schema = {
      fields {
        name = "string"
        age = "int"
      }
    }
  }
}

transform {
}

sink {
  Console {}
}
```

### Running Command

```shell
cd "apache-seatunnel-incubating-${version}"
./bin/seatunnel.sh \
--config ./config/seatunnel.streaming.conf.template -e local
```

### Error Exception

```log
WARNING: failed send heartbeat to resource manager, will retry later. this address: [localhost]:5801
Nov 10, 2022 5:25:28 PM org.apache.seatunnel.engine.server.CoordinatorService
SEVERE: [localhost]:5801 [seatunnel_default_cluster-290781] [5.1]
org.apache.seatunnel.engine.common.exception.SeaTunnelEngineException: cluster have no master node
	at org.apache.seatunnel.engine.server.SeaTunnelServer.isMasterNode(SeaTunnelServer.java:210)
	at org.apache.seatunnel.engine.server.CoordinatorService.checkNewActiveMaster(CoordinatorService.java:256)
	at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
	at java.util.concurrent.FutureTask.runAndReset(FutureTask.java:308)
	at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$301(ScheduledThreadPoolExecutor.java:180)
	at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:294)
	at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
	at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
	at java.lang.Thread.run(Thread.java:748)
Caused by: java.lang.RuntimeException: Execute given execution failed after retry 20 times
	at org.apache.seatunnel.common.utils.RetryUtils.retryWithException(RetryUtils.java:55)
	at org.apache.seatunnel.engine.server.SeaTunnelServer.isMasterNode(SeaTunnelServer.java:202)
	... 8 more
Caused by: java.lang.NullPointerException
	at org.apache.seatunnel.engine.server.SeaTunnelServer.lambda$isMasterNode$0(SeaTunnelServer.java:203)
	at org.apache.seatunnel.common.utils.RetryUtils.retryWithException(RetryUtils.java:42)
	... 9 more
```

### Flink or Spark Version

_No response_

### Java or Scala Version

java 1.8

### Screenshots

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)