Repository: spark
Updated Branches:
  refs/heads/branch-2.4 90fcd12af -> a091216a6

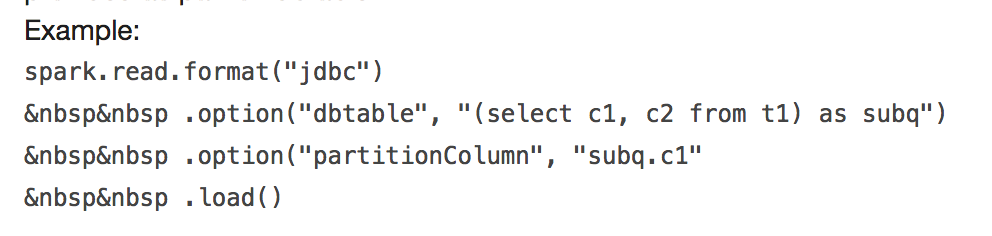

[SPARK-24423][FOLLOW-UP][SQL] Fix error example

## What changes were proposed in this pull request?

It will throw:
```
requirement failed: When reading JDBC data sources, users need to specify all or none for the following options: 'partitionColumn', 'lowerBound', 'upperBound', and 'numPartitions'
```
and
```
User-defined partition column subq.c1 not found in the JDBC relation ...
```
This PR fix this error example.

## How was this patch tested?

manual tests

Closes #23170 from wangyum/SPARK-24499.

Authored-by: Yuming Wang <[email protected]>
Signed-off-by: Sean Owen <[email protected]>
(cherry picked from commit 06a3b6aafa510ede2f1376b29a46f99447286c67)
Signed-off-by: Sean Owen <[email protected]>

Project: http://git-wip-us.apache.org/repos/asf/spark/repo
Commit: http://git-wip-us.apache.org/repos/asf/spark/commit/a091216a
Tree: http://git-wip-us.apache.org/repos/asf/spark/tree/a091216a
Diff: http://git-wip-us.apache.org/repos/asf/spark/diff/a091216a

Branch: refs/heads/branch-2.4
Commit: a091216a6d34ec998de05dca441ae5a368c13c22
Parents: 90fcd12
Author: Yuming Wang <[email protected]>
Authored: Tue Dec 4 07:57:58 2018 -0600
Committer: Sean Owen <[email protected]>
Committed: Tue Dec 4 07:58:12 2018 -0600

----------------------------------------------------------------------
 docs/sql-data-sources-jdbc.md                            |  6 +++---
 .../sql/execution/datasources/jdbc/JDBCOptions.scala     | 10 +++++++---
 .../test/scala/org/apache/spark/sql/jdbc/JDBCSuite.scala | 10 +++++++---
 3 files changed, 17 insertions(+), 9 deletions(-)
----------------------------------------------------------------------

http://git-wip-us.apache.org/repos/asf/spark/blob/a091216a/docs/sql-data-sources-jdbc.md
----------------------------------------------------------------------
diff --git a/docs/sql-data-sources-jdbc.md b/docs/sql-data-sources-jdbc.md
index 057e821..0f2bc49 100644
--- a/docs/sql-data-sources-jdbc.md
+++ b/docs/sql-data-sources-jdbc.md
@@ -64,9 +64,9 @@ the following case-insensitive options:
            Example:<br>
            <code>
               spark.read.format("jdbc")<br>
-                  .option("dbtable", "(select c1, c2 from t1) as subq")<br>
-                  .option("partitionColumn", "subq.c1"<br>
-                  .load()
+                .option("url", jdbcUrl)<br>
+                .option("query", "select c1, c2 from t1")<br>
+                .load()
            </code></li>
         </ol>
      </td>

http://git-wip-us.apache.org/repos/asf/spark/blob/a091216a/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/jdbc/JDBCOptions.scala
----------------------------------------------------------------------
diff --git a/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/jdbc/JDBCOptions.scala b/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/jdbc/JDBCOptions.scala
index 7dfbb9d..b4469cb 100644
--- a/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/jdbc/JDBCOptions.scala
+++ b/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/jdbc/JDBCOptions.scala
@@ -137,9 +137,13 @@ class JDBCOptions(
          |the partition columns using the supplied subquery alias to resolve any ambiguity.
          |Example :
          |spark.read.format("jdbc")
-         |        .option("dbtable", "(select c1, c2 from t1) as subq")
-         |        .option("partitionColumn", "subq.c1"
-         |        .load()
+         |  .option("url", jdbcUrl)
+         |  .option("dbtable", "(select c1, c2 from t1) as subq")
+         |  .option("partitionColumn", "c1")
+         |  .option("lowerBound", "1")
+         |  .option("upperBound", "100")
+         |  .option("numPartitions", "3")
+         |  .load()
      """.stripMargin
   )

http://git-wip-us.apache.org/repos/asf/spark/blob/a091216a/sql/core/src/test/scala/org/apache/spark/sql/jdbc/JDBCSuite.scala
----------------------------------------------------------------------
diff --git a/sql/core/src/test/scala/org/apache/spark/sql/jdbc/JDBCSuite.scala b/sql/core/src/test/scala/org/apache/spark/sql/jdbc/JDBCSuite.scala
index 7fa0e7f..71e8376 100644
--- a/sql/core/src/test/scala/org/apache/spark/sql/jdbc/JDBCSuite.scala
+++ b/sql/core/src/test/scala/org/apache/spark/sql/jdbc/JDBCSuite.scala
@@ -1348,9 +1348,13 @@ class JDBCSuite extends QueryTest
          |the partition columns using the supplied subquery alias to resolve any ambiguity.
          |Example :
          |spark.read.format("jdbc")
-         |        .option("dbtable", "(select c1, c2 from t1) as subq")
-         |        .option("partitionColumn", "subq.c1"
-         |        .load()
+         |  .option("url", jdbcUrl)
+         |  .option("dbtable", "(select c1, c2 from t1) as subq")
+         |  .option("partitionColumn", "c1")
+         |  .option("lowerBound", "1")
+         |  .option("upperBound", "100")
+         |  .option("numPartitions", "3")
+         |  .load()
      """.stripMargin
     val e5 = intercept[RuntimeException] {
       sql(
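
The first error quoted in the commit message states an all-or-none rule for four read options. The sketch below is a literal reading of that message in plain Scala, without Spark, to show why the old example failed and why the corrected one passes. The object `PartitionOptionCheck` and the method `allOrNone` are hypothetical names made up for illustration; they are not part of Spark, and Spark's actual validation may differ in details (for instance, Spark treats option names case-insensitively, which this sketch does not).

```scala
// Hypothetical helper, NOT Spark's implementation: a literal reading of the
// "all or none" rule from the error message in the commit description.
object PartitionOptionCheck {
  // Option names copied from the error message.
  val partitionOptions: Seq[String] =
    Seq("partitionColumn", "lowerBound", "upperBound", "numPartitions")

  /** True when all four partition options are present, or none of them is. */
  def allOrNone(options: Map[String, String]): Boolean = {
    val present = partitionOptions.count(options.contains)
    present == 0 || present == partitionOptions.size
  }

  def main(args: Array[String]): Unit = {
    // Options from the old example: only partitionColumn is set.
    val oldExample = Map(
      "dbtable" -> "(select c1, c2 from t1) as subq",
      "partitionColumn" -> "subq.c1")

    // Options from the corrected example in JDBCOptions.scala.
    val newExample = Map(
      "dbtable" -> "(select c1, c2 from t1) as subq",
      "partitionColumn" -> "c1",
      "lowerBound" -> "1",
      "upperBound" -> "100",
      "numPartitions" -> "3")

    println(s"old example passes: ${allOrNone(oldExample)}")
    println(s"new example passes: ${allOrNone(newExample)}")
  }
}
```

Under this reading the old option set is rejected because it supplies one of the four options, while the corrected option set supplies all four. The sketch does not cover the second error (the `subq.c1` column lookup), which depends on the schema of the JDBC relation.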