This is an automated email from the ASF dual-hosted git repository.

gengliang pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new 198b90c [SPARK-37714][SQL] ANSI mode: allow casting between numeric

type and timestamp type

198b90c is described below

commit 198b90c3bf5c3cbc90ba1bb4460e92c72695b50c

Author: Gengliang Wang <[email protected]>

AuthorDate: Thu Dec 23 20:13:10 2021 +0800

[SPARK-37714][SQL] ANSI mode: allow casting between numeric type and

timestamp type

### What changes were proposed in this pull request?

* Allow casting between numeric type and timestamp type under ANSI mode

* Remove the user-facing configuration

`spark.sql.ansi.allowCastBetweenDatetimeAndNumeric`

### Why are the changes needed?

Same reason as mentioned in https://github.com/apache/spark/pull/34459. It

is for better adoption of ANSI SQL mode since users are relying on it:

- As we did some data science, we found that many Spark SQL users are

actually using `Cast(Timestamp as Numeric)` and `Cast(Numeric as Timestamp)`.

- The Spark SQL connector for Tableau is using this feature for DateTime

math. e.g.

`CAST(FROM_UNIXTIME(CAST(CAST(%1 AS BIGINT) + (%2 * 86400) AS BIGINT)) AS

TIMESTAMP)`

### Does this PR introduce _any_ user-facing change?

Yes, casting between numeric type and timestamp type is allowed by default

under ANSI SQL mode

### How was this patch tested?

Unit tests.

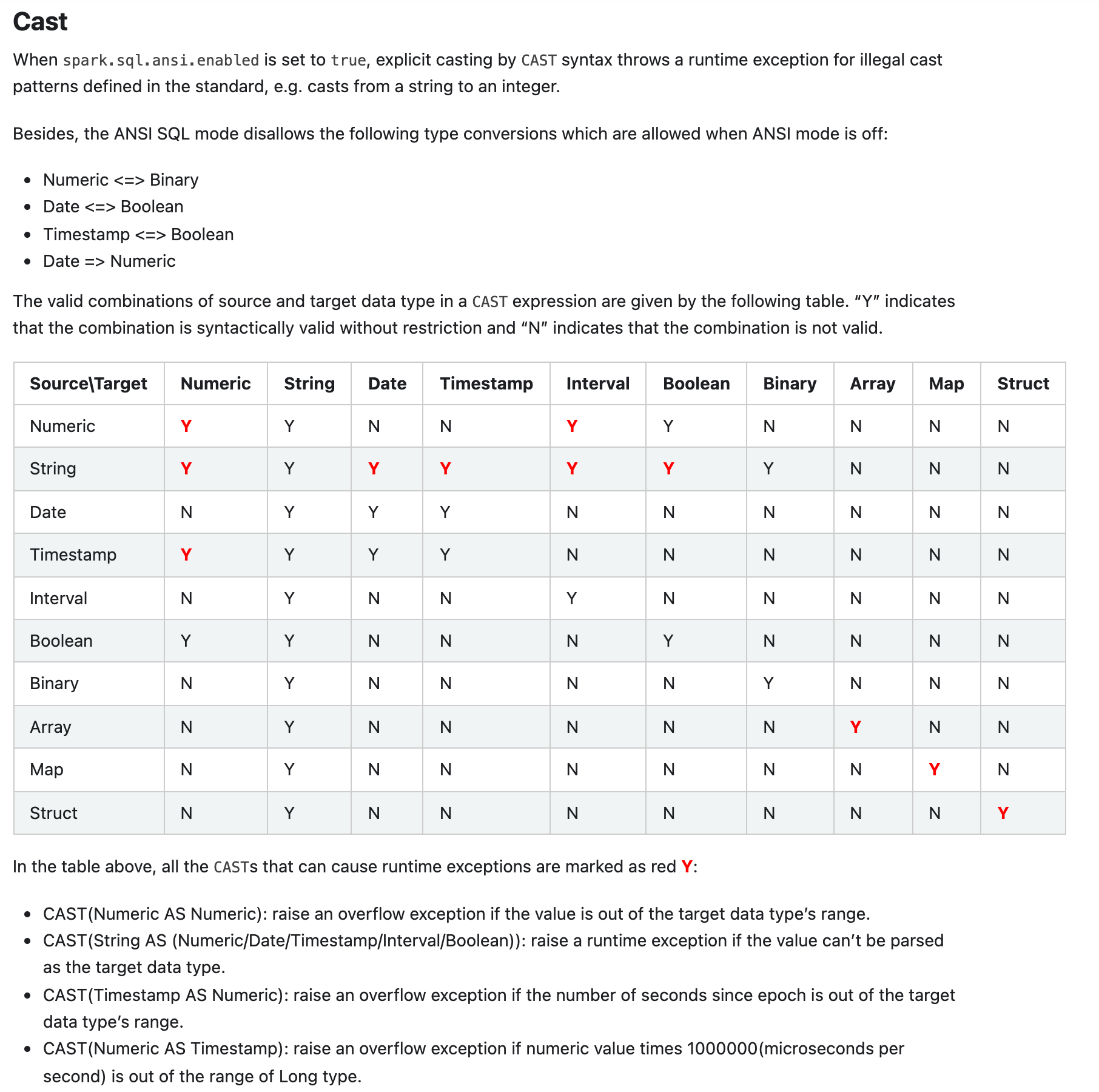

Here is the screenshot of the document change:

Closes #34985 from gengliangwang/changeSubConf.

Authored-by: Gengliang Wang <[email protected]>

Signed-off-by: Gengliang Wang <[email protected]>

---

docs/sql-ref-ansi-compliance.md | 18 ++--

.../spark/sql/catalyst/expressions/Cast.scala | 5 +-

.../org/apache/spark/sql/internal/SQLConf.scala | 12 ---

.../catalyst/expressions/AnsiCastSuiteBase.scala | 120 +++++----------------

.../spark/sql/catalyst/expressions/CastSuite.scala | 32 +-----

.../sql/catalyst/expressions/CastSuiteBase.scala | 31 ++++++

6 files changed, 67 insertions(+), 151 deletions(-)

diff --git a/docs/sql-ref-ansi-compliance.md b/docs/sql-ref-ansi-compliance.md

index d8d5a24..03b8db1 100644

--- a/docs/sql-ref-ansi-compliance.md

+++ b/docs/sql-ref-ansi-compliance.md

@@ -70,23 +70,21 @@ SELECT abs(-2147483648);

When `spark.sql.ansi.enabled` is set to `true`, explicit casting by `CAST`

syntax throws a runtime exception for illegal cast patterns defined in the

standard, e.g. casts from a string to an integer.

-The `CAST` clause of Spark ANSI mode follows the syntax rules of section 6.13

"cast specification" in [ISO/IEC 9075-2:2011 Information technology — Database

languages - SQL — Part 2: Foundation

(SQL/Foundation)](https://www.iso.org/standard/53682.html), except it specially

allows the following

- straightforward type conversions which are disallowed as per the ANSI

standard:

-* NumericType <=> BooleanType

-* StringType <=> BinaryType

-* ArrayType => String

-* MapType => String

-* StructType => String

+Besides, the ANSI SQL mode disallows the following type conversions which are

allowed when ANSI mode is off:

+* Numeric <=> Binary

+* Date <=> Boolean

+* Timestamp <=> Boolean

+* Date => Numeric

The valid combinations of source and target data type in a `CAST` expression

are given by the following table.

“Y” indicates that the combination is syntactically valid without restriction

and “N” indicates that the combination is not valid.

| Source\Target | Numeric | String | Date | Timestamp | Interval | Boolean |

Binary | Array | Map | Struct |

|-----------|---------|--------|------|-----------|----------|---------|--------|-------|-----|--------|

-| Numeric | <span style="color:red">**Y**</span> | Y | N | N

| N | Y | N | N | N | N |

+| Numeric | <span style="color:red">**Y**</span> | Y | N | N

| <span style="color:red">**Y**</span> | Y | N | N | N | N

|

| String | <span style="color:red">**Y**</span> | Y | <span

style="color:red">**Y**</span> | <span style="color:red">**Y**</span> | <span

style="color:red">**Y**</span> | <span style="color:red">**Y**</span> | Y | N

| N | N |

| Date | N | Y | Y | Y | N | N | N

| N | N | N |

-| Timestamp | N | Y | Y | Y | N | N | N

| N | N | N |

+| Timestamp | <span style="color:red">**Y**</span> | Y | Y | Y

| N | N | N | N | N | N |

| Interval | N | Y | N | N | Y | N | N

| N | N | N |

| Boolean | Y | Y | N | N | N | Y | N

| N | N | N |

| Binary | N | Y | N | N | N | N | Y

| N | N | N |

@@ -97,6 +95,8 @@ The `CAST` clause of Spark ANSI mode follows the syntax rules

of section 6.13 "c

In the table above, all the `CAST`s that can cause runtime exceptions are

marked as red <span style="color:red">**Y**</span>:

* CAST(Numeric AS Numeric): raise an overflow exception if the value is out of

the target data type's range.

* CAST(String AS (Numeric/Date/Timestamp/Interval/Boolean)): raise a runtime

exception if the value can't be parsed as the target data type.

+* CAST(Timestamp AS Numeric): raise an overflow exception if the number of

seconds since epoch is out of the target data type's range.

+* CAST(Numeric AS Timestamp): raise an overflow exception if numeric value

times 1000000(microseconds per second) is out of the range of Long type.

* CAST(Array AS Array): raise an exception if there is any on the conversion

of the elements.

* CAST(Map AS Map): raise an exception if there is any on the conversion of

the keys and the values.

* CAST(Struct AS Struct): raise an exception if there is any on the conversion

of the struct fields.

diff --git

a/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/Cast.scala

b/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/Cast.scala

index 0cf7ffd..8fe7eda 100644

---

a/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/Cast.scala

+++

b/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/Cast.scala

@@ -2202,7 +2202,7 @@ object AnsiCast {

case (StringType, TimestampType) => true

case (DateType, TimestampType) => true

case (TimestampNTZType, TimestampType) => true

- case (_: NumericType, TimestampType) =>

SQLConf.get.allowCastBetweenDatetimeAndNumericInAnsi

+ case (_: NumericType, TimestampType) => true

case (StringType, TimestampNTZType) => true

case (DateType, TimestampNTZType) => true

@@ -2222,8 +2222,7 @@ object AnsiCast {

case (_: NumericType, _: NumericType) => true

case (StringType, _: NumericType) => true

case (BooleanType, _: NumericType) => true

- case (TimestampType, _: NumericType) =>

SQLConf.get.allowCastBetweenDatetimeAndNumericInAnsi

- case (DateType, _: NumericType) =>

SQLConf.get.allowCastBetweenDatetimeAndNumericInAnsi

+ case (TimestampType, _: NumericType) => true

case (ArrayType(fromType, fn), ArrayType(toType, tn)) =>

canCast(fromType, toType) &&

diff --git

a/sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala

b/sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala

index 868340b..0859f53 100644

--- a/sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala

+++ b/sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala

@@ -2665,15 +2665,6 @@ object SQLConf {

.booleanConf

.createWithDefault(true)

- val ALLOW_CAST_BETWEEN_DATETIME_AND_NUMERIC_IN_ANSI =

- buildConf("spark.sql.ansi.allowCastBetweenDatetimeAndNumeric")

- .doc("When true, the data type conversions between datetime types and

numeric types are " +

- "allowed in ANSI SQL mode. This configuration is only effective when "

+

- s"'${ANSI_ENABLED.key}' is true.")

- .version("3.3.0")

- .booleanConf

- .createWithDefault(false)

-

val SORT_BEFORE_REPARTITION =

buildConf("spark.sql.execution.sortBeforeRepartition")

.internal()

@@ -4139,9 +4130,6 @@ class SQLConf extends Serializable with Logging {

def enforceReservedKeywords: Boolean = ansiEnabled &&

getConf(ENFORCE_RESERVED_KEYWORDS)

- def allowCastBetweenDatetimeAndNumericInAnsi: Boolean =

- getConf(ALLOW_CAST_BETWEEN_DATETIME_AND_NUMERIC_IN_ANSI)

-

def timestampType: AtomicType = getConf(TIMESTAMP_TYPE) match {

case "TIMESTAMP_LTZ" =>

// For historical reason, the TimestampType maps to TIMESTAMP WITH LOCAL

TIME ZONE

diff --git

a/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/AnsiCastSuiteBase.scala

b/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/AnsiCastSuiteBase.scala

index 75dbe18..6338be1 100644

---

a/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/AnsiCastSuiteBase.scala

+++

b/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/AnsiCastSuiteBase.scala

@@ -17,15 +17,14 @@

package org.apache.spark.sql.catalyst.expressions

-import java.sql.{Date, Timestamp}

+import java.sql.Timestamp

import java.time.DateTimeException

import org.apache.spark.SparkArithmeticException

import org.apache.spark.sql.catalyst.InternalRow

import org.apache.spark.sql.catalyst.util.DateTimeConstants.MILLIS_PER_SECOND

import org.apache.spark.sql.catalyst.util.DateTimeTestUtils

-import

org.apache.spark.sql.catalyst.util.DateTimeTestUtils.{withDefaultTimeZone, UTC,

UTC_OPT}

-import org.apache.spark.sql.catalyst.util.DateTimeUtils.fromJavaTimestamp

+import

org.apache.spark.sql.catalyst.util.DateTimeTestUtils.{withDefaultTimeZone, UTC}

import org.apache.spark.sql.internal.SQLConf

import org.apache.spark.sql.types._

import org.apache.spark.unsafe.types.UTF8String

@@ -139,20 +138,6 @@ abstract class AnsiCastSuiteBase extends CastSuiteBase {

cast(Literal(134.12), DecimalType(3, 2)), "cannot be represented")

}

- test("ANSI mode: disallow type conversions between Numeric types and

Timestamp type") {

- import DataTypeTestUtils.numericTypes

- checkInvalidCastFromNumericType(TimestampType)

- var errorMsg =

- "you can use functions

TIMESTAMP_SECONDS/TIMESTAMP_MILLIS/TIMESTAMP_MICROS instead"

- verifyCastFailure(cast(Literal(0L), TimestampType), Some(errorMsg))

-

- val timestampLiteral = Literal(1L, TimestampType)

- errorMsg = "you can use functions UNIX_SECONDS/UNIX_MILLIS/UNIX_MICROS

instead."

- numericTypes.foreach { numericType =>

- verifyCastFailure(cast(timestampLiteral, numericType), Some(errorMsg))

- }

- }

-

test("ANSI mode: disallow type conversions between Numeric types and Date

type") {

import DataTypeTestUtils.numericTypes

checkInvalidCastFromNumericType(DateType)

@@ -276,95 +261,38 @@ abstract class AnsiCastSuiteBase extends CastSuiteBase {

}

test("cast from timestamp II") {

- withSQLConf(SQLConf.ALLOW_CAST_BETWEEN_DATETIME_AND_NUMERIC_IN_ANSI.key ->

"true") {

- checkCastToTimestampError(Literal(Double.NaN), TimestampType)

- checkCastToTimestampError(Literal(1.0 / 0.0), TimestampType)

- checkCastToTimestampError(Literal(Float.NaN), TimestampType)

- checkCastToTimestampError(Literal(1.0f / 0.0f), TimestampType)

- Seq(Long.MinValue.toDouble, Long.MaxValue.toDouble,

Long.MinValue.toFloat,

- Long.MaxValue.toFloat).foreach { v =>

- checkExceptionInExpression[SparkArithmeticException](

- cast(Literal(v), TimestampType), "overflow")

- }

+ checkCastToTimestampError(Literal(Double.NaN), TimestampType)

+ checkCastToTimestampError(Literal(1.0 / 0.0), TimestampType)

+ checkCastToTimestampError(Literal(Float.NaN), TimestampType)

+ checkCastToTimestampError(Literal(1.0f / 0.0f), TimestampType)

+ Seq(Long.MinValue.toDouble, Long.MaxValue.toDouble, Long.MinValue.toFloat,

+ Long.MaxValue.toFloat).foreach { v =>

+ checkExceptionInExpression[SparkArithmeticException](

+ cast(Literal(v), TimestampType), "overflow")

}

}

test("cast a timestamp before the epoch 1970-01-01 00:00:00Z II") {

- withSQLConf(SQLConf.ALLOW_CAST_BETWEEN_DATETIME_AND_NUMERIC_IN_ANSI.key ->

"true") {

- withDefaultTimeZone(UTC) {

- val negativeTs = Timestamp.valueOf("1900-05-05 18:34:56.1")

- assert(negativeTs.getTime < 0)

- Seq(ByteType, ShortType, IntegerType).foreach { dt =>

- checkExceptionInExpression[SparkArithmeticException](

- cast(negativeTs, dt), s"to ${dt.catalogString} causes overflow")

- }

+ withDefaultTimeZone(UTC) {

+ val negativeTs = Timestamp.valueOf("1900-05-05 18:34:56.1")

+ assert(negativeTs.getTime < 0)

+ Seq(ByteType, ShortType, IntegerType).foreach { dt =>

+ checkExceptionInExpression[SparkArithmeticException](

+ cast(negativeTs, dt), s"to ${dt.catalogString} causes overflow")

}

}

}

- test("cast from timestamp") {

- withSQLConf(SQLConf.ALLOW_CAST_BETWEEN_DATETIME_AND_NUMERIC_IN_ANSI.key ->

"true") {

- val millis = 15 * 1000 + 3

- val seconds = millis * 1000 + 3

- val ts = new Timestamp(millis)

- val tss = new Timestamp(seconds)

- checkEvaluation(cast(ts, ShortType), 15.toShort)

- checkEvaluation(cast(ts, IntegerType), 15)

- checkEvaluation(cast(ts, LongType), 15.toLong)

- checkEvaluation(cast(ts, FloatType), 15.003f)

- checkEvaluation(cast(ts, DoubleType), 15.003)

-

- checkEvaluation(cast(cast(tss, ShortType), TimestampType),

- fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

- checkEvaluation(cast(cast(tss, IntegerType), TimestampType),

- fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

- checkEvaluation(cast(cast(tss, LongType), TimestampType),

- fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

- checkEvaluation(

- cast(cast(millis.toFloat / MILLIS_PER_SECOND, TimestampType),

FloatType),

- millis.toFloat / MILLIS_PER_SECOND)

- checkEvaluation(

- cast(cast(millis.toDouble / MILLIS_PER_SECOND, TimestampType),

DoubleType),

- millis.toDouble / MILLIS_PER_SECOND)

- checkEvaluation(

- cast(cast(Decimal(1), TimestampType), DecimalType.SYSTEM_DEFAULT),

- Decimal(1))

-

- // A test for higher precision than millis

- checkEvaluation(cast(cast(0.000001, TimestampType), DoubleType),

0.000001)

- }

- }

-

test("cast a timestamp before the epoch 1970-01-01 00:00:00Z") {

- withSQLConf(SQLConf.ALLOW_CAST_BETWEEN_DATETIME_AND_NUMERIC_IN_ANSI.key ->

"true") {

- withDefaultTimeZone(UTC) {

- val negativeTs = Timestamp.valueOf("1900-05-05 18:34:56.1")

- assert(negativeTs.getTime < 0)

- Seq(ByteType, ShortType, IntegerType).foreach { dt =>

- checkExceptionInExpression[SparkArithmeticException](

- cast(negativeTs, dt), s"to ${dt.catalogString} causes overflow")

- }

- val expectedSecs = Math.floorDiv(negativeTs.getTime, MILLIS_PER_SECOND)

- checkEvaluation(cast(negativeTs, LongType), expectedSecs)

+ withDefaultTimeZone(UTC) {

+ val negativeTs = Timestamp.valueOf("1900-05-05 18:34:56.1")

+ assert(negativeTs.getTime < 0)

+ Seq(ByteType, ShortType, IntegerType).foreach { dt =>

+ checkExceptionInExpression[SparkArithmeticException](

+ cast(negativeTs, dt), s"to ${dt.catalogString} causes overflow")

}

- }

- }

-

- test("cast from date") {

- withSQLConf(SQLConf.ALLOW_CAST_BETWEEN_DATETIME_AND_NUMERIC_IN_ANSI.key ->

"true") {

- val d = Date.valueOf("1970-01-01")

- checkEvaluation(cast(d, ShortType), null)

- checkEvaluation(cast(d, IntegerType), null)

- checkEvaluation(cast(d, LongType), null)

- checkEvaluation(cast(d, FloatType), null)

- checkEvaluation(cast(d, DoubleType), null)

- checkEvaluation(cast(d, DecimalType.SYSTEM_DEFAULT), null)

- checkEvaluation(cast(d, DecimalType(10, 2)), null)

- checkEvaluation(cast(d, StringType), "1970-01-01")

-

- checkEvaluation(

- cast(cast(d, TimestampType, UTC_OPT), StringType, UTC_OPT),

- "1970-01-01 00:00:00")

+ val expectedSecs = Math.floorDiv(negativeTs.getTime, MILLIS_PER_SECOND)

+ checkEvaluation(cast(negativeTs, LongType), expectedSecs)

}

}

diff --git

a/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/CastSuite.scala

b/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/CastSuite.scala

index 9121f2d..ba36fa0 100644

---

a/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/CastSuite.scala

+++

b/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/CastSuite.scala

@@ -27,7 +27,6 @@ import

org.apache.spark.sql.catalyst.analysis.TypeCoercionSuite

import org.apache.spark.sql.catalyst.expressions.aggregate.{CollectList,

CollectSet}

import org.apache.spark.sql.catalyst.util.DateTimeConstants._

import org.apache.spark.sql.catalyst.util.DateTimeTestUtils._

-import org.apache.spark.sql.catalyst.util.DateTimeUtils._

import org.apache.spark.sql.internal.SQLConf

import org.apache.spark.sql.types._

import org.apache.spark.sql.types.DayTimeIntervalType.{DAY, HOUR, MINUTE,

SECOND}

@@ -455,36 +454,7 @@ class CastSuite extends CastSuiteBase {

"1970-01-01 00:00:00")

}

- test("cast from timestamp") {

- val millis = 15 * 1000 + 3

- val seconds = millis * 1000 + 3

- val ts = new Timestamp(millis)

- val tss = new Timestamp(seconds)

- checkEvaluation(cast(ts, ShortType), 15.toShort)

- checkEvaluation(cast(ts, IntegerType), 15)

- checkEvaluation(cast(ts, LongType), 15.toLong)

- checkEvaluation(cast(ts, FloatType), 15.003f)

- checkEvaluation(cast(ts, DoubleType), 15.003)

-

- checkEvaluation(cast(cast(tss, ShortType), TimestampType),

- fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

- checkEvaluation(cast(cast(tss, IntegerType), TimestampType),

- fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

- checkEvaluation(cast(cast(tss, LongType), TimestampType),

- fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

- checkEvaluation(

- cast(cast(millis.toFloat / MILLIS_PER_SECOND, TimestampType), FloatType),

- millis.toFloat / MILLIS_PER_SECOND)

- checkEvaluation(

- cast(cast(millis.toDouble / MILLIS_PER_SECOND, TimestampType),

DoubleType),

- millis.toDouble / MILLIS_PER_SECOND)

- checkEvaluation(

- cast(cast(Decimal(1), TimestampType), DecimalType.SYSTEM_DEFAULT),

- Decimal(1))

-

- // A test for higher precision than millis

- checkEvaluation(cast(cast(0.000001, TimestampType), DoubleType), 0.000001)

-

+ test("cast from timestamp II") {

checkEvaluation(cast(Double.NaN, TimestampType), null)

checkEvaluation(cast(1.0 / 0.0, TimestampType), null)

checkEvaluation(cast(Float.NaN, TimestampType), null)

diff --git

a/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/CastSuiteBase.scala

b/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/CastSuiteBase.scala

index f01fea8..54497f1 100644

---

a/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/CastSuiteBase.scala

+++

b/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/CastSuiteBase.scala

@@ -295,6 +295,37 @@ abstract class CastSuiteBase extends SparkFunSuite with

ExpressionEvalHelper {

assert(cast("abcdef", FloatType).nullable)

}

+ test("cast from timestamp") {

+ val millis = 15 * 1000 + 3

+ val seconds = millis * 1000 + 3

+ val ts = new Timestamp(millis)

+ val tss = new Timestamp(seconds)

+ checkEvaluation(cast(ts, ShortType), 15.toShort)

+ checkEvaluation(cast(ts, IntegerType), 15)

+ checkEvaluation(cast(ts, LongType), 15.toLong)

+ checkEvaluation(cast(ts, FloatType), 15.003f)

+ checkEvaluation(cast(ts, DoubleType), 15.003)

+

+ checkEvaluation(cast(cast(tss, ShortType), TimestampType),

+ fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

+ checkEvaluation(cast(cast(tss, IntegerType), TimestampType),

+ fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

+ checkEvaluation(cast(cast(tss, LongType), TimestampType),

+ fromJavaTimestamp(ts) * MILLIS_PER_SECOND)

+ checkEvaluation(

+ cast(cast(millis.toFloat / MILLIS_PER_SECOND, TimestampType), FloatType),

+ millis.toFloat / MILLIS_PER_SECOND)

+ checkEvaluation(

+ cast(cast(millis.toDouble / MILLIS_PER_SECOND, TimestampType),

DoubleType),

+ millis.toDouble / MILLIS_PER_SECOND)

+ checkEvaluation(

+ cast(cast(Decimal(1), TimestampType), DecimalType.SYSTEM_DEFAULT),

+ Decimal(1))

+

+ // A test for higher precision than millis

+ checkEvaluation(cast(cast(0.000001, TimestampType), DoubleType), 0.000001)

+ }

+

test("data type casting") {

val sd = "1970-01-01"

val d = Date.valueOf(sd)

---------------------------------------------------------------------

To unsubscribe, e-mail: [email protected]

For additional commands, e-mail: [email protected]