This is an automated email from the ASF dual-hosted git repository.

gurwls223 pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new 5925e1d186f [SPARK-39522][INFRA] Uses Docker image cache over a custom image in pyspark job

5925e1d186f is described below

commit 5925e1d186f6bf7649eb851408f8d0bc12169d63

Author: Yikun Jiang <[email protected]>

AuthorDate: Fri Jul 8 16:48:40 2022 +0900

[SPARK-39522][INFRA] Uses Docker image cache over a custom image in pyspark job

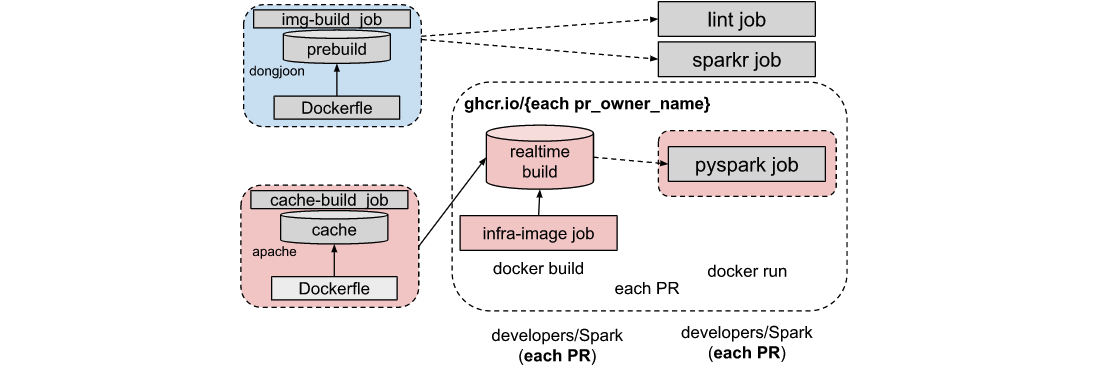

### What changes were proposed in this pull request?

Change the pyspark container from the original static image to an image built just in time from the cache.

See also:

https://docs.google.com/document/d/1_uiId-U1DODYyYZejAZeyz2OAjxcnA-xfwjynDF6vd0

This patch makes two changes:

1. Add an `infra-image` job to build the CI image from the [cache images](https://github.com/apache/spark/pkgs/container/spark%2Fapache-spark-github-action-image-cache).

2. Use the CI image as the pyspark job container image. For now, only the master branch enables the new workflow.
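
As a rough illustration of the first change, the image URL that the `infra-image` job generates can be sketched in plain shell. The function name `image_url` and the sample arguments are hypothetical; the arguments stand in for the `github.repository_owner`, `inputs.branch`, and `github.run_id` context values used in the workflow.

```shell
# Hypothetical helper mirroring the "Generate image name and url" step.
# Plain arguments stand in for the GitHub Actions context values.
image_url() {
  owner="$1"    # stands in for github.repository_owner
  branch="$2"   # stands in for inputs.branch
  run_id="$3"   # stands in for github.run_id
  # Docker repository names must be lowercase, so normalize the owner.
  repo_owner=$(printf '%s' "$owner" | tr '[:upper:]' '[:lower:]')
  printf 'ghcr.io/%s/apache-spark-ci-image:%s-%s\n' "$repo_owner" "$branch" "$run_id"
}

# Sample values only; prints ghcr.io/exampleowner/apache-spark-ci-image:master-12345
image_url "ExampleOwner" "master" "12345"
```

Because the tag includes the run ID, each workflow run pushes its own image rather than overwriting a shared tag.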

### Why are the changes needed?

This helps speed up the Docker infra image build in each PR.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

CI passed

Closes #37005 from Yikun/SPARK-39522-pyspark.

Authored-by: Yikun Jiang <[email protected]>

Signed-off-by: Hyukjin Kwon <[email protected]>

---

.github/workflows/build_and_test.yml | 66 ++++++++++++++++++++++++++++++++++--

1 file changed, 63 insertions(+), 3 deletions(-)

diff --git a/.github/workflows/build_and_test.yml b/.github/workflows/build_and_test.yml

index bc5eef68afe..9ad43acdc0c 100644

--- a/.github/workflows/build_and_test.yml

+++ b/.github/workflows/build_and_test.yml

@@ -251,13 +251,73 @@ jobs:

name: unit-tests-log-${{ matrix.modules }}-${{ matrix.comment }}-${{ matrix.java }}-${{ matrix.hadoop }}-${{ matrix.hive }}

path: "**/target/unit-tests.log"

- pyspark:

+ infra-image:

needs: precondition

- if: fromJson(needs.precondition.outputs.required).pyspark == 'true'

+ # Currently, only enable docker build from cache for `master` branch jobs

+ if: fromJson(needs.precondition.outputs.required).pyspark == 'true' && ${{ inputs.branch }} == 'master'

+ runs-on: ubuntu-latest

+ outputs:

+ image_url: ${{ steps.infra-image-outputs.outputs.image_url }}

+ steps:

+ - name: Generate image name and url

+ id: infra-image-outputs

+ run: |

+ # Convert to lowercase to meet docker repo name requirement

+ REPO_OWNER=$(echo "${{ github.repository_owner }}" | tr '[:upper:]' '[:lower:]')

+ IMG_NAME="apache-spark-ci-image:${{ inputs.branch }}-${{ github.run_id }}"

+ IMG_URL="ghcr.io/$REPO_OWNER/$IMG_NAME"

+ echo ::set-output name=image_url::$IMG_URL

+ - name: Login to GitHub Container Registry

+ uses: docker/login-action@v2

+ with:

+ registry: ghcr.io

+ username: ${{ github.actor }}

+ password: ${{ secrets.GITHUB_TOKEN }}

+ - name: Checkout Spark repository

+ uses: actions/checkout@v2

+ # In order to fetch changed files

+ with:

+ fetch-depth: 0

+ repository: apache/spark

+ ref: ${{ inputs.branch }}

+ - name: Sync the current branch with the latest in Apache Spark

+ if: github.repository != 'apache/spark'

+ run: |

+ echo "APACHE_SPARK_REF=$(git rev-parse HEAD)" >> $GITHUB_ENV

+ git fetch https://github.com/$GITHUB_REPOSITORY.git ${GITHUB_REF#refs/heads/}

+ git -c user.name='Apache Spark Test Account' -c user.email='[email protected]' merge --no-commit --progress --squash FETCH_HEAD

+ git -c user.name='Apache Spark Test Account' -c user.email='[email protected]' commit -m "Merged commit" --allow-empty

+ -

+ name: Set up QEMU

+ uses: docker/setup-qemu-action@v1

+ -

+ name: Set up Docker Buildx

+ uses: docker/setup-buildx-action@v1

+ -

+ name: Build and push

+ id: docker_build

+ uses: docker/build-push-action@v2

+ with:

+ context: ./dev/infra/

+ push: true

+ tags: |

+ ${{ steps.infra-image-outputs.outputs.image_url }}

+ # Use the infra image cache to speed up

+ cache-from: type=registry,ref=ghcr.io/apache/spark/apache-spark-github-action-image-cache:${{ inputs.branch }}

+

+ pyspark:

+ needs: [precondition, infra-image]

+ # always run if pyspark == 'true', even infra-image is skip (such as non-master job)

+ if: always() && fromJson(needs.precondition.outputs.required).pyspark == 'true'

name: "Build modules: ${{ matrix.modules }}"

runs-on: ubuntu-20.04

container:

- image: dongjoon/apache-spark-github-action-image:20220207

+ # Currently, only enable docker build from cache for `master` branch jobs

+ image: >-

+ ${{

+ (inputs.branch == 'master' && needs.infra-image.outputs.image_url)

+ || 'dongjoon/apache-spark-github-action-image:20220207'

+ }}

strategy:

fail-fast: false

matrix:
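
The `image` expression added to the `pyspark` job picks the freshly built image only when the branch is `master` and the `infra-image` job produced a URL; in every other case it falls back to the static image. A minimal shell sketch of that selection logic follows. The function name `select_image` and the sample URL are hypothetical, and the sketch only approximates how the `&&` / `||` expression evaluates.

```shell
# Hypothetical helper approximating the container image expression:
#   (inputs.branch == 'master' && needs.infra-image.outputs.image_url) || '<static image>'
select_image() {
  branch="$1"      # stands in for inputs.branch
  built_url="$2"   # stands in for needs.infra-image.outputs.image_url (may be empty)
  fallback='dongjoon/apache-spark-github-action-image:20220207'
  # Use the built image only on master and only if a URL was actually produced.
  if [ "$branch" = "master" ] && [ -n "$built_url" ]; then
    printf '%s\n' "$built_url"
  else
    printf '%s\n' "$fallback"
  fi
}

# Sample values only.
select_image "master" "ghcr.io/exampleowner/apache-spark-ci-image:master-12345"
select_image "branch-3.3" ""
```

An empty output from a skipped `infra-image` job is treated as false, which is why non-master runs end up on the static image.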
