This is an automated email from the ASF dual-hosted git repository.

yikun pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark-docker.git

The following commit(s) were added to refs/heads/master by this push:

new e61aba1 [SPARK-40516] Add Apache Spark 3.3.0 Dockerfile

e61aba1 is described below

commit e61aba1ed4ca8e747f38cae5f6bd72a3a50f57cd

Author: Yikun Jiang <[email protected]>

AuthorDate: Tue Oct 11 10:45:57 2022 +0800

[SPARK-40516] Add Apache Spark 3.3.0 Dockerfile

### What changes were proposed in this pull request?

This patch adds the Apache Spark 3.3.0 Dockerfiles:

- 3.3.0-scala2.12-java11-python3-ubuntu: pyspark + scala

- 3.3.0-scala2.12-java11-ubuntu: scala

- 3.3.0-scala2.12-java11-r-ubuntu: sparkr + scala

- 3.3.0-scala2.12-java11-python3-r-ubuntu: All in one image
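
The four image names above follow one naming scheme, which the `Generate tags` step in `.github/workflows/main.yml` also uses. Here is a minimal shell sketch of that composition, assuming the version values this commit passes in (3.3.0, 2.12, 11). The loop and the lowercase variable names are illustrative only and are not part of the patch:

```shell
# Version values taken from the workflow inputs in this commit.
spark_version=3.3.0
scala_version=2.12
java_version=11

# One iteration per image variant listed in the workflow matrix.
for image_suffix in python3-ubuntu ubuntu r-ubuntu python3-r-ubuntu; do
  TAG="scala${scala_version}-java${java_version}-${image_suffix}"
  IMAGE_PATH="${spark_version}/${TAG}"          # directory that holds the Dockerfile
  UNIQUE_IMAGE_TAG="${spark_version}-${TAG}"    # tag that is unique across versions
  echo "${UNIQUE_IMAGE_TAG} -> ${IMAGE_PATH}/Dockerfile"
done
```

Each printed line pairs an image tag with the directory that holds its Dockerfile.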

### Why are the changes needed?

This is needed for the Docker Official Image.

See also:

https://docs.google.com/document/d/1nN-pKuvt-amUcrkTvYAQ-bJBgtsWb9nAkNoVNRM2S2o

### Does this PR introduce _any_ user-facing change?

No

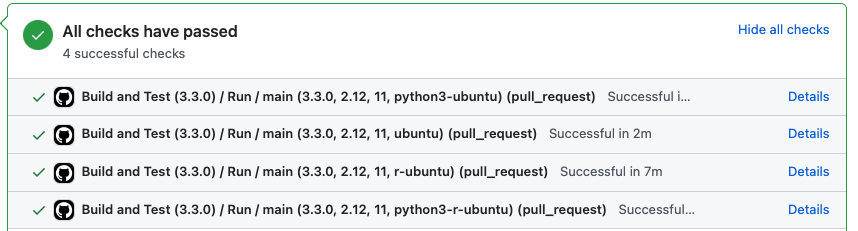

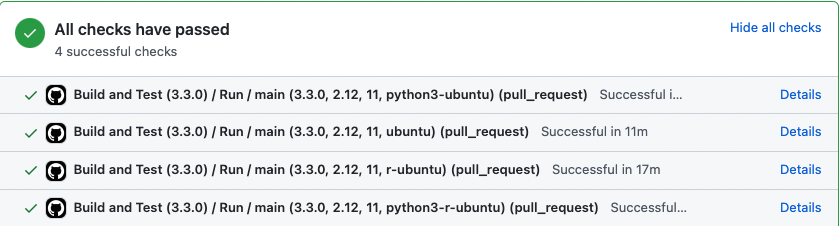

### How was this patch tested?

**The action won't be triggered until the workflow is merged to the default branch**, so I can only test it in my local repo:

- local test: https://github.com/Yikun/spark-docker/pull/1

- Dockerfile E2E K8S Local test: https://github.com/Yikun/spark-docker-bak/pull/7

Closes #2 from Yikun/SPARK-40516.

Authored-by: Yikun Jiang <[email protected]>

Signed-off-by: Yikun Jiang <[email protected]>

---

.github/workflows/build_3.3.0.yaml | 38 ++++++++

.github/workflows/main.yml | 105 ++++++++++++++++++++

3.3.0/scala2.12-java11-python3-r-ubuntu/Dockerfile | 84 ++++++++++++++++

.../entrypoint.sh | 107 +++++++++++++++++++++

3.3.0/scala2.12-java11-python3-ubuntu/Dockerfile | 81 ++++++++++++++++

.../scala2.12-java11-python3-ubuntu/entrypoint.sh | 107 +++++++++++++++++++++

3.3.0/scala2.12-java11-r-ubuntu/Dockerfile | 79 +++++++++++++++

3.3.0/scala2.12-java11-r-ubuntu/entrypoint.sh | 107 +++++++++++++++++++++

3.3.0/scala2.12-java11-ubuntu/Dockerfile | 76 +++++++++++++++

3.3.0/scala2.12-java11-ubuntu/entrypoint.sh | 107 +++++++++++++++++++++

10 files changed, 891 insertions(+)

diff --git a/.github/workflows/build_3.3.0.yaml b/.github/workflows/build_3.3.0.yaml

new file mode 100644

index 0000000..63b1ab3

--- /dev/null

+++ b/.github/workflows/build_3.3.0.yaml

@@ -0,0 +1,38 @@

+#

+# Licensed to the Apache Software Foundation (ASF) under one

+# or more contributor license agreements. See the NOTICE file

+# distributed with this work for additional information

+# regarding copyright ownership. The ASF licenses this file

+# to you under the Apache License, Version 2.0 (the

+# "License"); you may not use this file except in compliance

+# with the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing,

+# software distributed under the License is distributed on an

+# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+# KIND, either express or implied. See the License for the

+# specific language governing permissions and limitations

+# under the License.

+#

+

+name: "Build and Test (3.3.0)"

+

+on:

+ pull_request:

+ branches:

+ - 'master'

+ paths:

+ - '3.3.0/'

+ - '.github/workflows/main.yml'

+

+jobs:

+ run-build:

+ name: Run

+ secrets: inherit

+ uses: ./.github/workflows/main.yml

+ with:

+ spark: 3.3.0

+ scala: 2.12

+ java: 11

diff --git a/.github/workflows/main.yml b/.github/workflows/main.yml

new file mode 100644

index 0000000..90bd706

--- /dev/null

+++ b/.github/workflows/main.yml

@@ -0,0 +1,105 @@

+#

+# Licensed to the Apache Software Foundation (ASF) under one

+# or more contributor license agreements. See the NOTICE file

+# distributed with this work for additional information

+# regarding copyright ownership. The ASF licenses this file

+# to you under the Apache License, Version 2.0 (the

+# "License"); you may not use this file except in compliance

+# with the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing,

+# software distributed under the License is distributed on an

+# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+# KIND, either express or implied. See the License for the

+# specific language governing permissions and limitations

+# under the License.

+#

+

+name: Main (Build/Test/Publish)

+

+on:

+ workflow_call:

+ inputs:

+ spark:

+ description: The Spark version of Spark image.

+ required: true

+ type: string

+ default: 3.3.0

+ scala:

+ description: The Scala version of Spark image.

+ required: true

+ type: string

+ default: 2.12

+ java:

+ description: The Java version of Spark image.

+ required: true

+ type: string

+ default: 11

+

+jobs:

+ main:

+ runs-on: ubuntu-latest

+ strategy:

+ matrix:

+ spark_version:

+ - ${{ inputs.spark }}

+ scala_version:

+ - ${{ inputs.scala }}

+ java_version:

+ - ${{ inputs.java }}

+ image_suffix: [python3-ubuntu, ubuntu, r-ubuntu, python3-r-ubuntu]

+ steps:

+ - name: Checkout Spark repository

+ uses: actions/checkout@v2

+

+ - name: Set up QEMU

+ uses: docker/setup-qemu-action@v1

+

+ - name: Set up Docker Buildx

+ uses: docker/setup-buildx-action@v1

+

+ - name: Login to GHCR

+ uses: docker/login-action@v2

+ with:

+ registry: ghcr.io

+ username: ${{ github.actor }}

+ password: ${{ secrets.GITHUB_TOKEN }}

+

+ - name: Generate tags

+ run: |

+ TAG=scala${{ matrix.scala_version }}-java${{ matrix.java_version }}-${{ matrix.image_suffix }}

+

+ REPO_OWNER=$(echo "${{ github.repository_owner }}" | tr '[:upper:]' '[:lower:]')

+ TEST_REPO=ghcr.io/$REPO_OWNER/spark-docker

+ IMAGE_NAME=spark

+ IMAGE_PATH=${{ matrix.spark_version }}/$TAG

+ UNIQUE_IMAGE_TAG=${{ matrix.spark_version }}-$TAG

+

+ # Unique image tag in each version: scala2.12-java11-python3-ubuntu

+ echo "UNIQUE_IMAGE_TAG=${UNIQUE_IMAGE_TAG}" >> $GITHUB_ENV

+ # Test repo: ghcr.io/apache/spark-docker

+ echo "TEST_REPO=${TEST_REPO}" >> $GITHUB_ENV

+ # Image name: spark

+ echo "IMAGE_NAME=${IMAGE_NAME}" >> $GITHUB_ENV

+ # Image dockerfile path: 3.3.0/scala2.12-java11-python3-ubuntu

+ echo "IMAGE_PATH=${IMAGE_PATH}" >> $GITHUB_ENV

+

+ - name: Print Image tags

+ run: |

+ echo "UNIQUE_IMAGE_TAG: "${UNIQUE_IMAGE_TAG}

+ echo "TEST_REPO: "${TEST_REPO}

+ echo "IMAGE_NAME: "${IMAGE_NAME}

+ echo "IMAGE_PATH: "${IMAGE_PATH}

+

+ - name: Build and push test image

+ uses: docker/build-push-action@v2

+ with:

+ context: ${{ env.IMAGE_PATH }}

+ push: true

+ tags: ${{ env.TEST_REPO }}:${{ env.UNIQUE_IMAGE_TAG }}

+ platforms: linux/amd64,linux/arm64

+

+ - name: Image digest

+ run: echo ${{ steps.docker_build.outputs.digest }}

diff --git a/3.3.0/scala2.12-java11-python3-r-ubuntu/Dockerfile b/3.3.0/scala2.12-java11-python3-r-ubuntu/Dockerfile

new file mode 100644

index 0000000..c95dd39

--- /dev/null

+++ b/3.3.0/scala2.12-java11-python3-r-ubuntu/Dockerfile

@@ -0,0 +1,84 @@

+#

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+FROM eclipse-temurin:11-jre-focal

+

+ARG spark_uid=185

+

+RUN set -ex && \

+ apt-get update && \

+ ln -s /lib /lib64 && \

+ apt install -y gnupg2 wget bash tini libc6 libpam-modules krb5-user libnss3 procps net-tools && \

+ apt install -y python3 python3-pip && \

+ pip3 install --upgrade pip setuptools && \

+ apt install -y r-base r-base-dev && \

+ mkdir -p /opt/spark && \

+ mkdir /opt/spark/python && \

+ mkdir -p /opt/spark/examples && \

+ mkdir -p /opt/spark/work-dir && \

+ touch /opt/spark/RELEASE && \

+ rm /bin/sh && \

+ ln -sv /bin/bash /bin/sh && \

+ echo "auth required pam_wheel.so use_uid" >> /etc/pam.d/su && \

+ chgrp root /etc/passwd && chmod ug+rw /etc/passwd && \

+ rm -rf /var/cache/apt/*

+

+# Install Apache Spark

+# https://downloads.apache.org/spark/KEYS

+ENV SPARK_TGZ_URL=https://dlcdn.apache.org/spark/spark-3.3.0/spark-3.3.0-bin-hadoop3.tgz \

+ SPARK_TGZ_ASC_URL=https://downloads.apache.org/spark/spark-3.3.0/spark-3.3.0-bin-hadoop3.tgz.asc \

+ GPG_KEY=E298A3A825C0D65DFD57CBB651716619E084DAB9

+

+RUN set -ex; \

+ export SPARK_TMP="$(mktemp -d)"; \

+ cd $SPARK_TMP; \

+ wget -nv -O spark.tgz "$SPARK_TGZ_URL"; \

+ wget -nv -O spark.tgz.asc "$SPARK_TGZ_ASC_URL"; \

+ export GNUPGHOME="$(mktemp -d)"; \

+ gpg --keyserver hkps://keyserver.pgp.com --recv-key "$GPG_KEY" || \

+ gpg --keyserver hkps://keyserver.ubuntu.com --recv-keys "$GPG_KEY" || \

+ gpg --batch --verify spark.tgz.asc spark.tgz; \

+ gpgconf --kill all; \

+ rm -rf "$GNUPGHOME" spark.tgz.asc; \

+ \

+ tar -xf spark.tgz --strip-components=1; \

+ mv jars /opt/spark/; \

+ mv bin /opt/spark/; \

+ mv sbin /opt/spark/; \

+ mv kubernetes/dockerfiles/spark/decom.sh /opt/; \

+ mv examples /opt/spark/; \

+ mv kubernetes/tests /opt/spark/; \

+ mv data /opt/spark/; \

+ mv python/pyspark /opt/spark/python/pyspark/; \

+ mv python/lib /opt/spark/python/lib/; \

+ mv R /opt/spark/; \

+ cd ..; \

+ rm -rf "$SPARK_TMP";

+

+COPY entrypoint.sh /opt/

+

+ENV SPARK_HOME /opt/spark

+ENV R_HOME /usr/lib/R

+

+WORKDIR /opt/spark/work-dir

+RUN chmod g+w /opt/spark/work-dir

+RUN chmod a+x /opt/decom.sh

+RUN chmod a+x /opt/entrypoint.sh

+

+ENTRYPOINT [ "/opt/entrypoint.sh" ]

+

+# Specify the User that the actual main process will run as

+USER ${spark_uid}

diff --git a/3.3.0/scala2.12-java11-python3-r-ubuntu/entrypoint.sh b/3.3.0/scala2.12-java11-python3-r-ubuntu/entrypoint.sh

new file mode 100644

index 0000000..cfd7a69

--- /dev/null

+++ b/3.3.0/scala2.12-java11-python3-r-ubuntu/entrypoint.sh

@@ -0,0 +1,107 @@

+#!/bin/bash

+#

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+# Check whether there is a passwd entry for the container UID

+myuid=$(id -u)

+mygid=$(id -g)

+# turn off -e for getent because it will return error code in anonymous uid case

+set +e

+uidentry=$(getent passwd $myuid)

+set -e

+

+# If there is no passwd entry for the container UID, attempt to create one

+if [ -z "$uidentry" ] ; then

+ if [ -w /etc/passwd ] ; then

+ echo "$myuid:x:$myuid:$mygid:${SPARK_USER_NAME:-anonymous uid}:$SPARK_HOME:/bin/false" >> /etc/passwd

+ else

+ echo "Container ENTRYPOINT failed to add passwd entry for anonymous UID"

+ fi

+fi

+

+if [ -z "$JAVA_HOME" ]; then

+ JAVA_HOME=$(java -XshowSettings:properties -version 2>&1 > /dev/null | grep 'java.home' | awk '{print $3}')

+fi

+

+SPARK_CLASSPATH="$SPARK_CLASSPATH:${SPARK_HOME}/jars/*"

+env | grep SPARK_JAVA_OPT_ | sort -t_ -k4 -n | sed 's/[^=]*=\(.*\)/\1/g' > /tmp/java_opts.txt

+readarray -t SPARK_EXECUTOR_JAVA_OPTS < /tmp/java_opts.txt

+

+if [ -n "$SPARK_EXTRA_CLASSPATH" ]; then

+ SPARK_CLASSPATH="$SPARK_CLASSPATH:$SPARK_EXTRA_CLASSPATH"

+fi

+

+if ! [ -z ${PYSPARK_PYTHON+x} ]; then

+ export PYSPARK_PYTHON

+fi

+if ! [ -z ${PYSPARK_DRIVER_PYTHON+x} ]; then

+ export PYSPARK_DRIVER_PYTHON

+fi

+

+# If HADOOP_HOME is set and SPARK_DIST_CLASSPATH is not set, set it here so Hadoop jars are available to the executor.

+# It does not set SPARK_DIST_CLASSPATH if already set, to avoid overriding customizations of this value from elsewhere e.g. Docker/K8s.

+if [ -n "${HADOOP_HOME}" ] && [ -z "${SPARK_DIST_CLASSPATH}" ]; then

+ export SPARK_DIST_CLASSPATH="$($HADOOP_HOME/bin/hadoop classpath)"

+fi

+

+if ! [ -z ${HADOOP_CONF_DIR+x} ]; then

+ SPARK_CLASSPATH="$HADOOP_CONF_DIR:$SPARK_CLASSPATH";

+fi

+

+if ! [ -z ${SPARK_CONF_DIR+x} ]; then

+ SPARK_CLASSPATH="$SPARK_CONF_DIR:$SPARK_CLASSPATH";

+elif ! [ -z ${SPARK_HOME+x} ]; then

+ SPARK_CLASSPATH="$SPARK_HOME/conf:$SPARK_CLASSPATH";

+fi

+

+case "$1" in

+ driver)

+ shift 1

+ CMD=(

+ "$SPARK_HOME/bin/spark-submit"

+ --conf "spark.driver.bindAddress=$SPARK_DRIVER_BIND_ADDRESS"

+ --deploy-mode client

+ "$@"

+ )

+ ;;

+ executor)

+ shift 1

+ CMD=(

+ ${JAVA_HOME}/bin/java

+ "${SPARK_EXECUTOR_JAVA_OPTS[@]}"

+ -Xms$SPARK_EXECUTOR_MEMORY

+ -Xmx$SPARK_EXECUTOR_MEMORY

+ -cp "$SPARK_CLASSPATH:$SPARK_DIST_CLASSPATH"

+ org.apache.spark.scheduler.cluster.k8s.KubernetesExecutorBackend

+ --driver-url $SPARK_DRIVER_URL

+ --executor-id $SPARK_EXECUTOR_ID

+ --cores $SPARK_EXECUTOR_CORES

+ --app-id $SPARK_APPLICATION_ID

+ --hostname $SPARK_EXECUTOR_POD_IP

+ --resourceProfileId $SPARK_RESOURCE_PROFILE_ID

+ --podName $SPARK_EXECUTOR_POD_NAME

+ )

+ ;;

+

+ *)

+ # Non-spark-on-k8s command provided, proceeding in pass-through mode...

+ CMD=("$@")

+ ;;

+esac

+

+# Execute the container CMD under tini for better hygiene

+exec /usr/bin/tini -s -- "${CMD[@]}"

diff --git a/3.3.0/scala2.12-java11-python3-ubuntu/Dockerfile b/3.3.0/scala2.12-java11-python3-ubuntu/Dockerfile

new file mode 100644

index 0000000..e3d9829

--- /dev/null

+++ b/3.3.0/scala2.12-java11-python3-ubuntu/Dockerfile

@@ -0,0 +1,81 @@

+#

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+FROM eclipse-temurin:11-jre-focal

+

+ARG spark_uid=185

+

+RUN set -ex && \

+ apt-get update && \

+ ln -s /lib /lib64 && \

+ apt install -y gnupg2 wget bash tini libc6 libpam-modules krb5-user libnss3 procps net-tools && \

+ apt install -y python3 python3-pip && \

+ pip3 install --upgrade pip setuptools && \

+ mkdir -p /opt/spark && \

+ mkdir /opt/spark/python && \

+ mkdir -p /opt/spark/examples && \

+ mkdir -p /opt/spark/work-dir && \

+ touch /opt/spark/RELEASE && \

+ rm /bin/sh && \

+ ln -sv /bin/bash /bin/sh && \

+ echo "auth required pam_wheel.so use_uid" >> /etc/pam.d/su && \

+ chgrp root /etc/passwd && chmod ug+rw /etc/passwd && \

+ rm -rf /var/cache/apt/*

+

+# Install Apache Spark

+# https://downloads.apache.org/spark/KEYS

+ENV SPARK_TGZ_URL=https://dlcdn.apache.org/spark/spark-3.3.0/spark-3.3.0-bin-hadoop3.tgz \

+ SPARK_TGZ_ASC_URL=https://downloads.apache.org/spark/spark-3.3.0/spark-3.3.0-bin-hadoop3.tgz.asc \

+ GPG_KEY=E298A3A825C0D65DFD57CBB651716619E084DAB9

+

+RUN set -ex; \

+ export SPARK_TMP="$(mktemp -d)"; \

+ cd $SPARK_TMP; \

+ wget -nv -O spark.tgz "$SPARK_TGZ_URL"; \

+ wget -nv -O spark.tgz.asc "$SPARK_TGZ_ASC_URL"; \

+ export GNUPGHOME="$(mktemp -d)"; \

+ gpg --keyserver hkps://keyserver.pgp.com --recv-key "$GPG_KEY" || \

+ gpg --keyserver hkps://keyserver.ubuntu.com --recv-keys "$GPG_KEY" || \

+ gpg --batch --verify spark.tgz.asc spark.tgz; \

+ gpgconf --kill all; \

+ rm -rf "$GNUPGHOME" spark.tgz.asc; \

+ \

+ tar -xf spark.tgz --strip-components=1; \

+ mv jars /opt/spark/; \

+ mv bin /opt/spark/; \

+ mv sbin /opt/spark/; \

+ mv kubernetes/dockerfiles/spark/decom.sh /opt/; \

+ mv examples /opt/spark/; \

+ mv kubernetes/tests /opt/spark/; \

+ mv data /opt/spark/; \

+ mv python/pyspark /opt/spark/python/pyspark/; \

+ mv python/lib /opt/spark/python/lib/; \

+ cd ..; \

+ rm -rf "$SPARK_TMP";

+

+COPY entrypoint.sh /opt/

+

+ENV SPARK_HOME /opt/spark

+

+WORKDIR /opt/spark/work-dir

+RUN chmod g+w /opt/spark/work-dir

+RUN chmod a+x /opt/decom.sh

+RUN chmod a+x /opt/entrypoint.sh

+

+ENTRYPOINT [ "/opt/entrypoint.sh" ]

+

+# Specify the User that the actual main process will run as

+USER ${spark_uid}

diff --git a/3.3.0/scala2.12-java11-python3-ubuntu/entrypoint.sh b/3.3.0/scala2.12-java11-python3-ubuntu/entrypoint.sh

new file mode 100644

index 0000000..cfd7a69

--- /dev/null

+++ b/3.3.0/scala2.12-java11-python3-ubuntu/entrypoint.sh

@@ -0,0 +1,107 @@

+#!/bin/bash

+#

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+# Check whether there is a passwd entry for the container UID

+myuid=$(id -u)

+mygid=$(id -g)

+# turn off -e for getent because it will return error code in anonymous uid case

+set +e

+uidentry=$(getent passwd $myuid)

+set -e

+

+# If there is no passwd entry for the container UID, attempt to create one

+if [ -z "$uidentry" ] ; then

+ if [ -w /etc/passwd ] ; then

+ echo "$myuid:x:$myuid:$mygid:${SPARK_USER_NAME:-anonymous uid}:$SPARK_HOME:/bin/false" >> /etc/passwd

+ else

+ echo "Container ENTRYPOINT failed to add passwd entry for anonymous UID"

+ fi

+fi

+

+if [ -z "$JAVA_HOME" ]; then

+ JAVA_HOME=$(java -XshowSettings:properties -version 2>&1 > /dev/null | grep 'java.home' | awk '{print $3}')

+fi

+

+SPARK_CLASSPATH="$SPARK_CLASSPATH:${SPARK_HOME}/jars/*"

+env | grep SPARK_JAVA_OPT_ | sort -t_ -k4 -n | sed 's/[^=]*=\(.*\)/\1/g' > /tmp/java_opts.txt

+readarray -t SPARK_EXECUTOR_JAVA_OPTS < /tmp/java_opts.txt

+

+if [ -n "$SPARK_EXTRA_CLASSPATH" ]; then

+ SPARK_CLASSPATH="$SPARK_CLASSPATH:$SPARK_EXTRA_CLASSPATH"

+fi

+

+if ! [ -z ${PYSPARK_PYTHON+x} ]; then

+ export PYSPARK_PYTHON

+fi

+if ! [ -z ${PYSPARK_DRIVER_PYTHON+x} ]; then

+ export PYSPARK_DRIVER_PYTHON

+fi

+

+# If HADOOP_HOME is set and SPARK_DIST_CLASSPATH is not set, set it here so Hadoop jars are available to the executor.

+# It does not set SPARK_DIST_CLASSPATH if already set, to avoid overriding customizations of this value from elsewhere e.g. Docker/K8s.

+if [ -n "${HADOOP_HOME}" ] && [ -z "${SPARK_DIST_CLASSPATH}" ]; then

+ export SPARK_DIST_CLASSPATH="$($HADOOP_HOME/bin/hadoop classpath)"

+fi

+

+if ! [ -z ${HADOOP_CONF_DIR+x} ]; then

+ SPARK_CLASSPATH="$HADOOP_CONF_DIR:$SPARK_CLASSPATH";

+fi

+

+if ! [ -z ${SPARK_CONF_DIR+x} ]; then

+ SPARK_CLASSPATH="$SPARK_CONF_DIR:$SPARK_CLASSPATH";

+elif ! [ -z ${SPARK_HOME+x} ]; then

+ SPARK_CLASSPATH="$SPARK_HOME/conf:$SPARK_CLASSPATH";

+fi

+

+case "$1" in

+ driver)

+ shift 1

+ CMD=(

+ "$SPARK_HOME/bin/spark-submit"

+ --conf "spark.driver.bindAddress=$SPARK_DRIVER_BIND_ADDRESS"

+ --deploy-mode client

+ "$@"

+ )

+ ;;

+ executor)

+ shift 1

+ CMD=(

+ ${JAVA_HOME}/bin/java

+ "${SPARK_EXECUTOR_JAVA_OPTS[@]}"

+ -Xms$SPARK_EXECUTOR_MEMORY

+ -Xmx$SPARK_EXECUTOR_MEMORY

+ -cp "$SPARK_CLASSPATH:$SPARK_DIST_CLASSPATH"

+ org.apache.spark.scheduler.cluster.k8s.KubernetesExecutorBackend

+ --driver-url $SPARK_DRIVER_URL

+ --executor-id $SPARK_EXECUTOR_ID

+ --cores $SPARK_EXECUTOR_CORES

+ --app-id $SPARK_APPLICATION_ID

+ --hostname $SPARK_EXECUTOR_POD_IP

+ --resourceProfileId $SPARK_RESOURCE_PROFILE_ID

+ --podName $SPARK_EXECUTOR_POD_NAME

+ )

+ ;;

+

+ *)

+ # Non-spark-on-k8s command provided, proceeding in pass-through mode...

+ CMD=("$@")

+ ;;

+esac

+

+# Execute the container CMD under tini for better hygiene

+exec /usr/bin/tini -s -- "${CMD[@]}"

diff --git a/3.3.0/scala2.12-java11-r-ubuntu/Dockerfile b/3.3.0/scala2.12-java11-r-ubuntu/Dockerfile

new file mode 100644

index 0000000..9745f54

--- /dev/null

+++ b/3.3.0/scala2.12-java11-r-ubuntu/Dockerfile

@@ -0,0 +1,79 @@

+#

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+FROM eclipse-temurin:11-jre-focal

+

+ARG spark_uid=185

+

+RUN set -ex && \

+ apt-get update && \

+ ln -s /lib /lib64 && \

+ apt install -y gnupg2 wget bash tini libc6 libpam-modules krb5-user libnss3 procps net-tools && \

+ apt install -y r-base r-base-dev && \

+ mkdir -p /opt/spark && \

+ mkdir -p /opt/spark/examples && \

+ mkdir -p /opt/spark/work-dir && \

+ touch /opt/spark/RELEASE && \

+ rm /bin/sh && \

+ ln -sv /bin/bash /bin/sh && \

+ echo "auth required pam_wheel.so use_uid" >> /etc/pam.d/su && \

+ chgrp root /etc/passwd && chmod ug+rw /etc/passwd && \

+ rm -rf /var/cache/apt/*

+

+# Install Apache Spark

+# https://downloads.apache.org/spark/KEYS

+ENV SPARK_TGZ_URL=https://dlcdn.apache.org/spark/spark-3.3.0/spark-3.3.0-bin-hadoop3.tgz \

+ SPARK_TGZ_ASC_URL=https://downloads.apache.org/spark/spark-3.3.0/spark-3.3.0-bin-hadoop3.tgz.asc \

+ GPG_KEY=E298A3A825C0D65DFD57CBB651716619E084DAB9

+

+RUN set -ex; \

+ export SPARK_TMP="$(mktemp -d)"; \

+ cd $SPARK_TMP; \

+ wget -nv -O spark.tgz "$SPARK_TGZ_URL"; \

+ wget -nv -O spark.tgz.asc "$SPARK_TGZ_ASC_URL"; \

+ export GNUPGHOME="$(mktemp -d)"; \

+ gpg --keyserver hkps://keyserver.pgp.com --recv-key "$GPG_KEY" || \

+ gpg --keyserver hkps://keyserver.ubuntu.com --recv-keys "$GPG_KEY" || \

+ gpg --batch --verify spark.tgz.asc spark.tgz; \

+ gpgconf --kill all; \

+ rm -rf "$GNUPGHOME" spark.tgz.asc; \

+ \

+ tar -xf spark.tgz --strip-components=1; \

+ mv jars /opt/spark/; \

+ mv bin /opt/spark/; \

+ mv sbin /opt/spark/; \

+ mv kubernetes/dockerfiles/spark/decom.sh /opt/; \

+ mv examples /opt/spark/; \

+ mv kubernetes/tests /opt/spark/; \

+ mv data /opt/spark/; \

+ mv R /opt/spark/; \

+ cd ..; \

+ rm -rf "$SPARK_TMP";

+

+COPY entrypoint.sh /opt/

+

+ENV SPARK_HOME /opt/spark

+ENV R_HOME /usr/lib/R

+

+WORKDIR /opt/spark/work-dir

+RUN chmod g+w /opt/spark/work-dir

+RUN chmod a+x /opt/decom.sh

+RUN chmod a+x /opt/entrypoint.sh

+

+ENTRYPOINT [ "/opt/entrypoint.sh" ]

+

+# Specify the User that the actual main process will run as

+USER ${spark_uid}

diff --git a/3.3.0/scala2.12-java11-r-ubuntu/entrypoint.sh b/3.3.0/scala2.12-java11-r-ubuntu/entrypoint.sh

new file mode 100644

index 0000000..cfd7a69

--- /dev/null

+++ b/3.3.0/scala2.12-java11-r-ubuntu/entrypoint.sh

@@ -0,0 +1,107 @@

+#!/bin/bash

+#

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+# Check whether there is a passwd entry for the container UID

+myuid=$(id -u)

+mygid=$(id -g)

+# turn off -e for getent because it will return error code in anonymous uid case

+set +e

+uidentry=$(getent passwd $myuid)

+set -e

+

+# If there is no passwd entry for the container UID, attempt to create one

+if [ -z "$uidentry" ] ; then

+ if [ -w /etc/passwd ] ; then

+ echo "$myuid:x:$myuid:$mygid:${SPARK_USER_NAME:-anonymous uid}:$SPARK_HOME:/bin/false" >> /etc/passwd

+ else

+ echo "Container ENTRYPOINT failed to add passwd entry for anonymous UID"

+ fi

+fi

+

+if [ -z "$JAVA_HOME" ]; then

+ JAVA_HOME=$(java -XshowSettings:properties -version 2>&1 > /dev/null | grep 'java.home' | awk '{print $3}')

+fi

+

+SPARK_CLASSPATH="$SPARK_CLASSPATH:${SPARK_HOME}/jars/*"

+env | grep SPARK_JAVA_OPT_ | sort -t_ -k4 -n | sed 's/[^=]*=\(.*\)/\1/g' > /tmp/java_opts.txt

+readarray -t SPARK_EXECUTOR_JAVA_OPTS < /tmp/java_opts.txt

+

+if [ -n "$SPARK_EXTRA_CLASSPATH" ]; then

+ SPARK_CLASSPATH="$SPARK_CLASSPATH:$SPARK_EXTRA_CLASSPATH"

+fi

+

+if ! [ -z ${PYSPARK_PYTHON+x} ]; then

+ export PYSPARK_PYTHON

+fi

+if ! [ -z ${PYSPARK_DRIVER_PYTHON+x} ]; then

+ export PYSPARK_DRIVER_PYTHON

+fi

+

+# If HADOOP_HOME is set and SPARK_DIST_CLASSPATH is not set, set it here so Hadoop jars are available to the executor.

+# It does not set SPARK_DIST_CLASSPATH if already set, to avoid overriding customizations of this value from elsewhere e.g. Docker/K8s.

+if [ -n "${HADOOP_HOME}" ] && [ -z "${SPARK_DIST_CLASSPATH}" ]; then

+ export SPARK_DIST_CLASSPATH="$($HADOOP_HOME/bin/hadoop classpath)"

+fi

+

+if ! [ -z ${HADOOP_CONF_DIR+x} ]; then

+ SPARK_CLASSPATH="$HADOOP_CONF_DIR:$SPARK_CLASSPATH";

+fi

+

+if ! [ -z ${SPARK_CONF_DIR+x} ]; then

+ SPARK_CLASSPATH="$SPARK_CONF_DIR:$SPARK_CLASSPATH";

+elif ! [ -z ${SPARK_HOME+x} ]; then

+ SPARK_CLASSPATH="$SPARK_HOME/conf:$SPARK_CLASSPATH";

+fi

+

+case "$1" in

+ driver)

+ shift 1

+ CMD=(

+ "$SPARK_HOME/bin/spark-submit"

+ --conf "spark.driver.bindAddress=$SPARK_DRIVER_BIND_ADDRESS"

+ --deploy-mode client

+ "$@"

+ )

+ ;;

+ executor)

+ shift 1

+ CMD=(

+ ${JAVA_HOME}/bin/java

+ "${SPARK_EXECUTOR_JAVA_OPTS[@]}"

+ -Xms$SPARK_EXECUTOR_MEMORY

+ -Xmx$SPARK_EXECUTOR_MEMORY

+ -cp "$SPARK_CLASSPATH:$SPARK_DIST_CLASSPATH"

+ org.apache.spark.scheduler.cluster.k8s.KubernetesExecutorBackend

+ --driver-url $SPARK_DRIVER_URL

+ --executor-id $SPARK_EXECUTOR_ID

+ --cores $SPARK_EXECUTOR_CORES

+ --app-id $SPARK_APPLICATION_ID

+ --hostname $SPARK_EXECUTOR_POD_IP

+ --resourceProfileId $SPARK_RESOURCE_PROFILE_ID

+ --podName $SPARK_EXECUTOR_POD_NAME

+ )

+ ;;

+

+ *)

+ # Non-spark-on-k8s command provided, proceeding in pass-through mode...

+ CMD=("$@")

+ ;;

+esac

+

+# Execute the container CMD under tini for better hygiene

+exec /usr/bin/tini -s -- "${CMD[@]}"

diff --git a/3.3.0/scala2.12-java11-ubuntu/Dockerfile b/3.3.0/scala2.12-java11-ubuntu/Dockerfile

new file mode 100644

index 0000000..ecbcc32

--- /dev/null

+++ b/3.3.0/scala2.12-java11-ubuntu/Dockerfile

@@ -0,0 +1,76 @@

+#

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+FROM eclipse-temurin:11-jre-focal

+

+ARG spark_uid=185

+

+RUN set -ex && \

+ apt-get update && \

+ ln -s /lib /lib64 && \

+ apt install -y gnupg2 wget bash tini libc6 libpam-modules krb5-user libnss3 procps net-tools && \

+ mkdir -p /opt/spark && \

+ mkdir -p /opt/spark/examples && \

+ mkdir -p /opt/spark/work-dir && \

+ touch /opt/spark/RELEASE && \

+ rm /bin/sh && \

+ ln -sv /bin/bash /bin/sh && \

+ echo "auth required pam_wheel.so use_uid" >> /etc/pam.d/su && \

+ chgrp root /etc/passwd && chmod ug+rw /etc/passwd && \

+ rm -rf /var/cache/apt/*

+

+# Install Apache Spark

+# https://downloads.apache.org/spark/KEYS

+ENV SPARK_TGZ_URL=https://dlcdn.apache.org/spark/spark-3.3.0/spark-3.3.0-bin-hadoop3.tgz \

+ SPARK_TGZ_ASC_URL=https://downloads.apache.org/spark/spark-3.3.0/spark-3.3.0-bin-hadoop3.tgz.asc \

+ GPG_KEY=E298A3A825C0D65DFD57CBB651716619E084DAB9

+

+RUN set -ex; \

+ export SPARK_TMP="$(mktemp -d)"; \

+ cd $SPARK_TMP; \

+ wget -nv -O spark.tgz "$SPARK_TGZ_URL"; \

+ wget -nv -O spark.tgz.asc "$SPARK_TGZ_ASC_URL"; \

+ export GNUPGHOME="$(mktemp -d)"; \

+ gpg --keyserver hkps://keyserver.pgp.com --recv-key "$GPG_KEY" || \

+ gpg --keyserver hkps://keyserver.ubuntu.com --recv-keys "$GPG_KEY" || \

+ gpg --batch --verify spark.tgz.asc spark.tgz; \

+ gpgconf --kill all; \

+ rm -rf "$GNUPGHOME" spark.tgz.asc; \

+ \

+ tar -xf spark.tgz --strip-components=1; \

+ mv jars /opt/spark/; \

+ mv bin /opt/spark/; \

+ mv sbin /opt/spark/; \

+ mv kubernetes/dockerfiles/spark/decom.sh /opt/; \

+ mv examples /opt/spark/; \

+ mv kubernetes/tests /opt/spark/; \

+ mv data /opt/spark/; \

+ cd ..; \

+ rm -rf "$SPARK_TMP";

+

+COPY entrypoint.sh /opt/

+

+ENV SPARK_HOME /opt/spark

+

+WORKDIR /opt/spark/work-dir

+RUN chmod g+w /opt/spark/work-dir

+RUN chmod a+x /opt/decom.sh

+RUN chmod a+x /opt/entrypoint.sh

+

+ENTRYPOINT [ "/opt/entrypoint.sh" ]

+

+# Specify the User that the actual main process will run as

+USER ${spark_uid}

diff --git a/3.3.0/scala2.12-java11-ubuntu/entrypoint.sh b/3.3.0/scala2.12-java11-ubuntu/entrypoint.sh

new file mode 100644

index 0000000..cfd7a69

--- /dev/null

+++ b/3.3.0/scala2.12-java11-ubuntu/entrypoint.sh

@@ -0,0 +1,107 @@

+#!/bin/bash

+#

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+# Check whether there is a passwd entry for the container UID

+myuid=$(id -u)

+mygid=$(id -g)

+# turn off -e for getent because it will return error code in anonymous uid case

+set +e

+uidentry=$(getent passwd $myuid)

+set -e

+

+# If there is no passwd entry for the container UID, attempt to create one

+if [ -z "$uidentry" ] ; then

+ if [ -w /etc/passwd ] ; then

+ echo "$myuid:x:$myuid:$mygid:${SPARK_USER_NAME:-anonymous uid}:$SPARK_HOME:/bin/false" >> /etc/passwd

+ else

+ echo "Container ENTRYPOINT failed to add passwd entry for anonymous UID"

+ fi

+fi

+

+if [ -z "$JAVA_HOME" ]; then

+ JAVA_HOME=$(java -XshowSettings:properties -version 2>&1 > /dev/null | grep 'java.home' | awk '{print $3}')

+fi

+

+SPARK_CLASSPATH="$SPARK_CLASSPATH:${SPARK_HOME}/jars/*"

+env | grep SPARK_JAVA_OPT_ | sort -t_ -k4 -n | sed 's/[^=]*=\(.*\)/\1/g' > /tmp/java_opts.txt

+readarray -t SPARK_EXECUTOR_JAVA_OPTS < /tmp/java_opts.txt

+

+if [ -n "$SPARK_EXTRA_CLASSPATH" ]; then

+ SPARK_CLASSPATH="$SPARK_CLASSPATH:$SPARK_EXTRA_CLASSPATH"

+fi

+

+if ! [ -z ${PYSPARK_PYTHON+x} ]; then

+ export PYSPARK_PYTHON

+fi

+if ! [ -z ${PYSPARK_DRIVER_PYTHON+x} ]; then

+ export PYSPARK_DRIVER_PYTHON

+fi

+

+# If HADOOP_HOME is set and SPARK_DIST_CLASSPATH is not set, set it here so Hadoop jars are available to the executor.

+# It does not set SPARK_DIST_CLASSPATH if already set, to avoid overriding customizations of this value from elsewhere e.g. Docker/K8s.

+if [ -n "${HADOOP_HOME}" ] && [ -z "${SPARK_DIST_CLASSPATH}" ]; then

+ export SPARK_DIST_CLASSPATH="$($HADOOP_HOME/bin/hadoop classpath)"

+fi

+

+if ! [ -z ${HADOOP_CONF_DIR+x} ]; then

+ SPARK_CLASSPATH="$HADOOP_CONF_DIR:$SPARK_CLASSPATH";

+fi

+

+if ! [ -z ${SPARK_CONF_DIR+x} ]; then

+ SPARK_CLASSPATH="$SPARK_CONF_DIR:$SPARK_CLASSPATH";

+elif ! [ -z ${SPARK_HOME+x} ]; then

+ SPARK_CLASSPATH="$SPARK_HOME/conf:$SPARK_CLASSPATH";

+fi

+

+case "$1" in

+ driver)

+ shift 1

+ CMD=(

+ "$SPARK_HOME/bin/spark-submit"

+ --conf "spark.driver.bindAddress=$SPARK_DRIVER_BIND_ADDRESS"

+ --deploy-mode client

+ "$@"

+ )

+ ;;

+ executor)

+ shift 1

+ CMD=(

+ ${JAVA_HOME}/bin/java

+ "${SPARK_EXECUTOR_JAVA_OPTS[@]}"

+ -Xms$SPARK_EXECUTOR_MEMORY

+ -Xmx$SPARK_EXECUTOR_MEMORY

+ -cp "$SPARK_CLASSPATH:$SPARK_DIST_CLASSPATH"

+ org.apache.spark.scheduler.cluster.k8s.KubernetesExecutorBackend

+ --driver-url $SPARK_DRIVER_URL

+ --executor-id $SPARK_EXECUTOR_ID

+ --cores $SPARK_EXECUTOR_CORES

+ --app-id $SPARK_APPLICATION_ID

+ --hostname $SPARK_EXECUTOR_POD_IP

+ --resourceProfileId $SPARK_RESOURCE_PROFILE_ID

+ --podName $SPARK_EXECUTOR_POD_NAME

+ )

+ ;;

+

+ *)

+ # Non-spark-on-k8s command provided, proceeding in pass-through mode...

+ CMD=("$@")

+ ;;

+esac

+

+# Execute the container CMD under tini for better hygiene

+exec /usr/bin/tini -s -- "${CMD[@]}"
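
The `SPARK_JAVA_OPT_*` pipeline in the `entrypoint.sh` files above collects the option values and orders them by their numeric suffix before they reach the executor JVM. The sketch below exercises the same pipeline, assuming three hypothetical option values. It captures the result in a variable rather than writing `/tmp/java_opts.txt`, which is a simplification for illustration and not what the script does:

```shell
# Hypothetical option values, chosen only for illustration.
export SPARK_JAVA_OPT_10="-Dthird=c"
export SPARK_JAVA_OPT_0="-Dfirst=a"
export SPARK_JAVA_OPT_2="-Dsecond=b"

# Same pipeline as entrypoint.sh, captured in a variable:
#   sort -t_ -k4 -n  orders entries by the number after the third underscore,
#   sed              keeps only the text after the first "=".
sorted_opts=$(env | grep SPARK_JAVA_OPT_ | sort -t_ -k4 -n | sed 's/[^=]*=\(.*\)/\1/g')

printf '%s\n' "$sorted_opts"
```

Because the sort is numeric, suffix 10 lands after suffix 2 instead of between 1 and 2 as a plain lexical sort would place it.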
