This is an automated email from the ASF dual-hosted git repository.

jackietien pushed a commit to branch iotdb
in repository https://gitbox.apache.org/repos/asf/tsfile.git

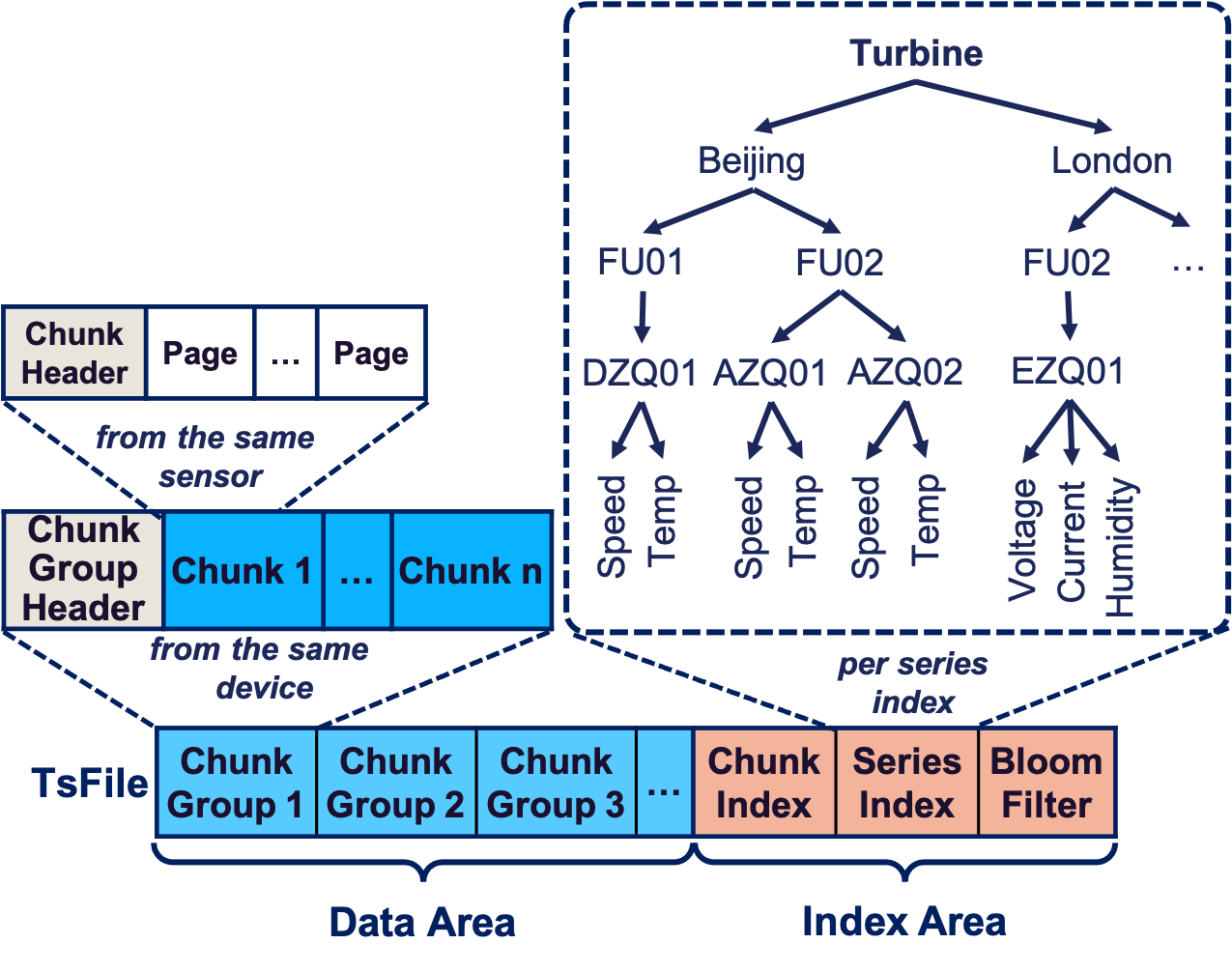

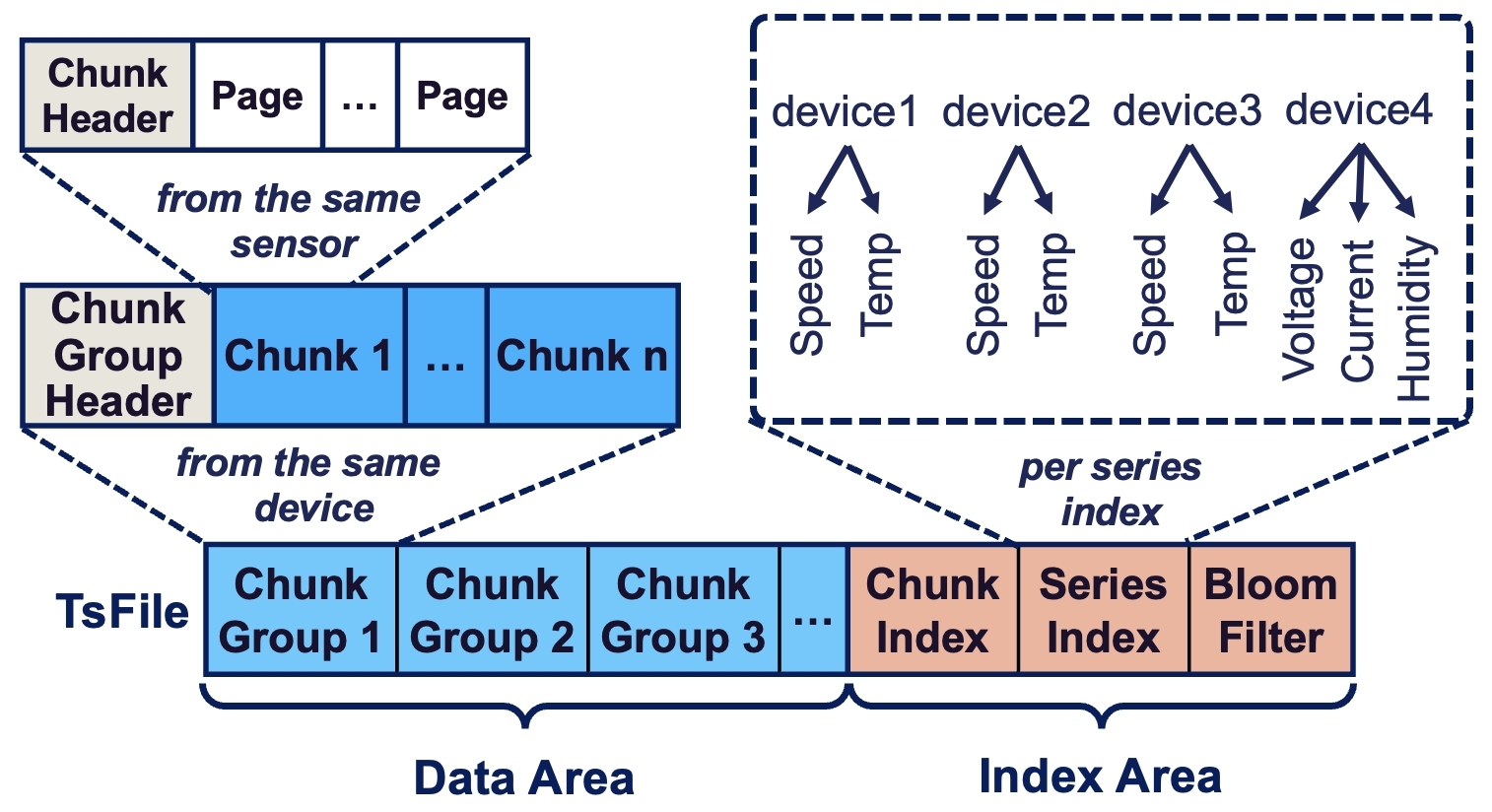

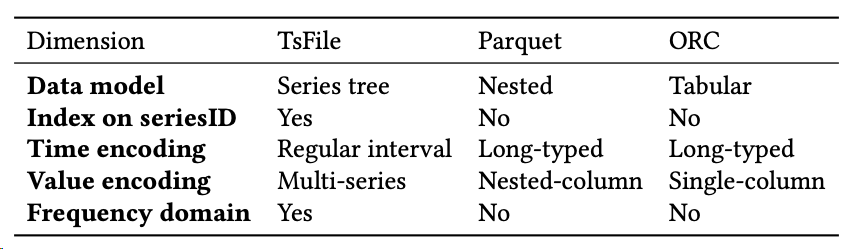

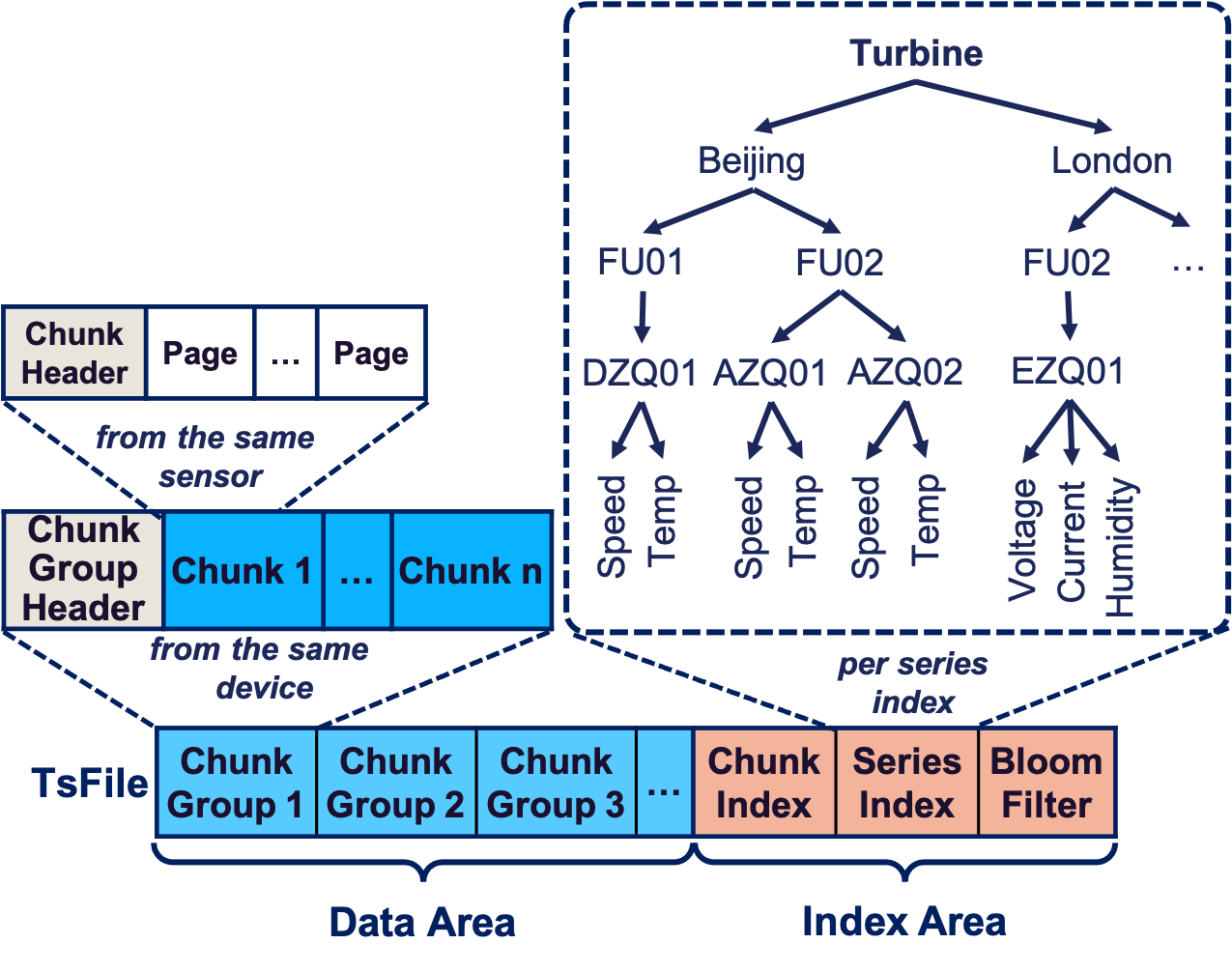

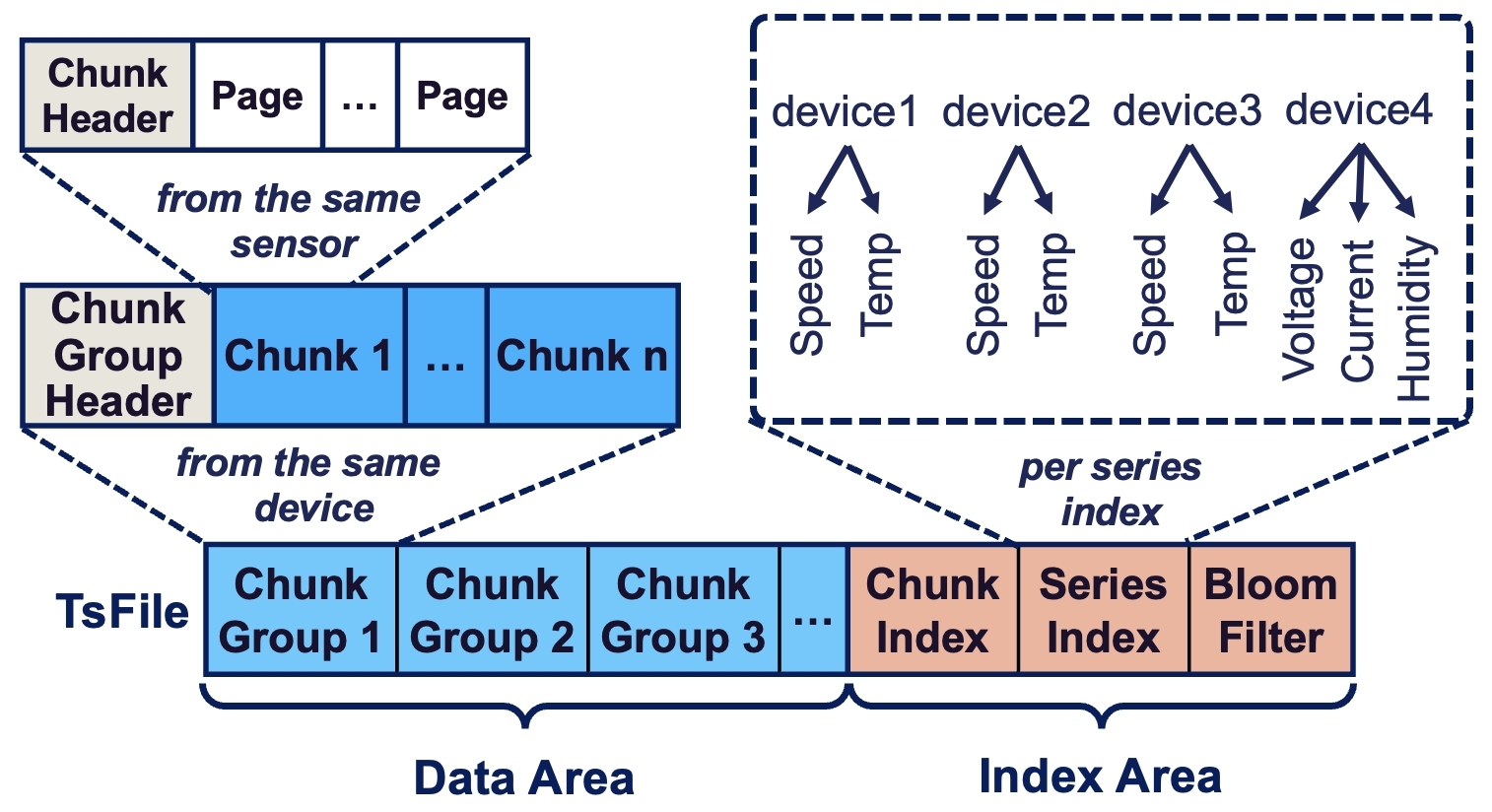

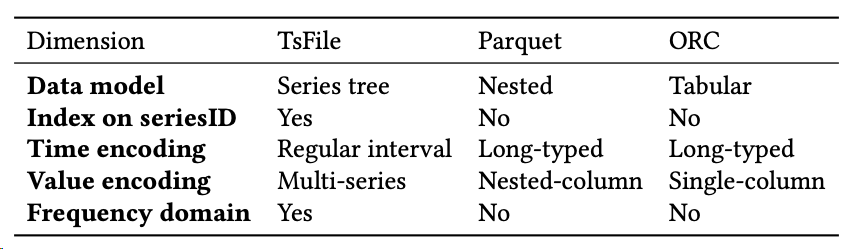

commit d48b3ff4b330411238110f2c08d032f1d85bd458
Author: CritasWang <[email protected]>
AuthorDate: Tue May 28 19:14:53 2024 +0800

    change Comparison to table (#90)
---
 README-zh.md | 12 ++++++++++--
 README.md    | 12 ++++++++++--
 2 files changed, 20 insertions(+), 4 deletions(-)

diff --git a/README-zh.md b/README-zh.md
index 11ed40f8..0e082981 100644
--- a/README-zh.md
+++ b/README-zh.md
@@ -87,7 +87,7 @@ TsFile 数据模型(Schema)定义了所有设备物理量的集合,如下

 - Index:TsFile 末尾的元数据文件包含序列内部时间维度的索引和序列间的索引信息。

-
+

 ### 编码和压缩

@@ -95,8 +95,16 @@ TsFile 通过采用二阶差分编码、游程编码(RLE)、位压缩和 Sna

 其独特之处在于编码算法专为时序数据特性设计,聚焦在时间属性和数据之间的相关性。

-()
+TsFile、CSV 和 Parquet 三种文件格式的比较

+| 维度 | TsFile | CSV | Parquet |
+|---------|--------|-----|---------|
+| 数据模型 | 物联网时序 | 无 | 嵌套 |
+| 写入模式 | 批, 行 | 行 | 行 |
+| 压缩 | 有 | 无 | 有 |
+| 读取模式 | 查询, 扫描 | 扫描 | 查询 |
+| 序列索引 | 有 | 无 | 无 |
+| 时间索引 | 有 | 无 | 无 |

 基于对时序数据应用需求的深刻理解,TsFile 有助于实现时序数据高压缩比和实时访问速度,并为企业进一步构建高效、可扩展、灵活的数据分析平台提供底层文件技术支撑。

diff --git a/README.md b/README.md
index 7b0c8b05..8d673dfb 100644
--- a/README.md
+++ b/README.md
@@ -84,7 +84,7 @@ TsFile adopts a columnar storage design, similar to other file formats, primaril

 - Index: The file metadata at the end of TsFile contains a chunk-level index and file-level statistics for efficient data access.

-
+

 ## Encoding and Compression

@@ -94,8 +94,16 @@ Its uniqueness lies in the encoding algorithm designed specifically for time ser

 The table below compares 3 file formats in different dimensions.

-()
+TsFile, CSV and Parquet in Comparison

+| Dimension       | TsFile       | CSV   | Parquet |
+|-----------------|--------------|-------|---------|
+| Data Model      | IoT          | Plain | Nested  |
+| Write Mode      | Tablet, Line | Line  | Line    |
+| Compression     | Yes          | No    | Yes     |
+| Read Mode       | Query, Scan  | Scan  | Query   |
+| Index on Series | Yes          | No    | No      |
+| Index on Time   | Yes          | No    | No      |

 Its development facilitates efficient data encoding, compression, and access, reflecting a deep understanding of industry needs, pioneering a path toward efficient, scalable, and flexible data analytics platforms.