jikechao opened a new issue, #14795: URL: https://github.com/apache/tvm/issues/14795

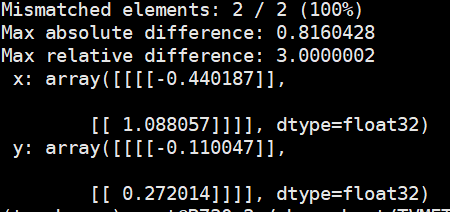

### Expected behavior

If `divisor_override` is already supported, this is a bug and should be fixed. If it is not yet supported, the frontend should reject it directly and throw an exception saying so. Wrong inference results are confusing and dangerous!

### Actual behavior

TVM gave different inference results from PyTorch.

### Environment

PyTorch 1.71

TVM 0.13.0-dev

### Steps to reproduce

```
import torch
from tvm import relay
import tvm
import numpy as np

# tvm produced a wrong inference result when using divisor_override
m = torch.nn.AvgPool2d((2, 2), divisor_override=1,)
input_data = [torch.randn([1, 2, 2, 2], dtype=torch.float32)]

# Reference result from PyTorch
torch_outputs = m(*[input.clone() for input in input_data])

# Trace the module and import it into Relay
trace = torch.jit.trace(m, input_data)
input_shapes = [('input0', torch.Size([1, 2, 2, 2]))]
mod, params = relay.frontend.from_pytorch(trace, input_shapes)

# Build and run with the graph executor on CPU
with tvm.transform.PassContext(opt_level=3):
    exe = relay.create_executor('graph', mod=mod, params=params, device=tvm.device('llvm', 0), target='llvm').evaluate()
input_tvm = dict(zip(['input0'], [inp.clone().cpu().numpy() for inp in input_data]))
tvm_outputs = exe(**input_tvm).asnumpy()

# Fails: the TVM output does not match the PyTorch output
np.testing.assert_allclose(torch_outputs, tvm_outputs, rtol=1e-3, atol=1e-3)
```

### Triage

* frontend:pytorch
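
For reference, PyTorch documents `divisor_override` as replacing the pooling-region size as the divisor of the window sum. The following is a minimal pure-Python sketch of that semantics, not code from PyTorch or TVM: the helper `avg_pool2d_ref` is hypothetical and assumes a single channel, stride equal to the kernel size, and no padding. It illustrates how large the discrepancy could be if a converter dropped the attribute: for a 2x2 kernel with `divisor_override=1`, the expected output is the plain window sum, four times the ordinary average.

```python
def avg_pool2d_ref(x, kernel, divisor_override=None):
    """Reference 2-D average pooling over one channel given as a list of rows.

    Hypothetical helper for illustration only; assumes stride == kernel
    size and no padding.
    """
    kh, kw = kernel
    # With divisor_override set, the window sum is divided by that value
    # instead of the number of elements in the window.
    divisor = divisor_override if divisor_override is not None else kh * kw
    out = []
    for i in range(0, len(x) - kh + 1, kh):
        row = []
        for j in range(0, len(x[0]) - kw + 1, kw):
            window_sum = sum(
                x[i + di][j + dj] for di in range(kh) for dj in range(kw)
            )
            row.append(window_sum / divisor)
        out.append(row)
    return out


channel = [[1.0, 2.0], [3.0, 4.0]]
plain = avg_pool2d_ref(channel, (2, 2))                            # divides by 4
overridden = avg_pool2d_ref(channel, (2, 2), divisor_override=1)   # divides by 1
print(plain, overridden)
```

If the TVM output in the reproduction above turns out to be exactly one quarter of the PyTorch output, that would suggest the attribute is being ignored rather than mishandled in some other way.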