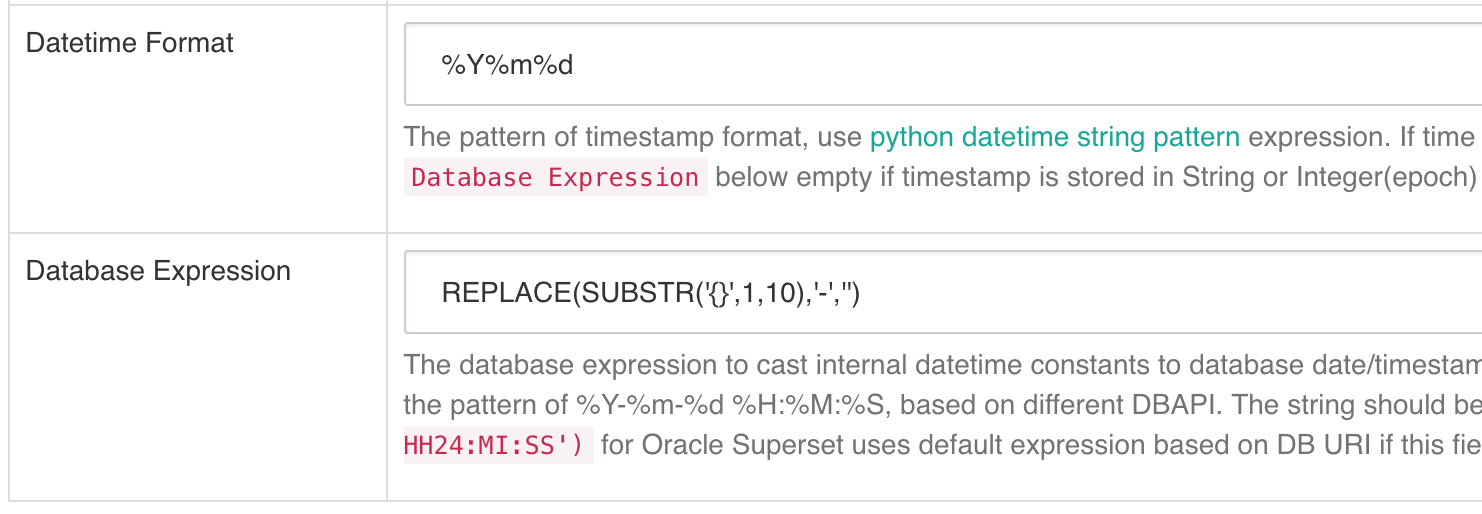

kkalyan opened a new issue #4021: Question: How to configure Temporal fields on Presto

URL: https://github.com/apache/incubator-superset/issues/4021

Make sure these boxes are checked before submitting your issue - thank you!

- [x] I have checked the superset logs for python stacktraces and included it here as text if any
- [x] I have reproduced the issue with at least the latest released version of superset
- [x] I have checked the issue tracker for the same issue and I haven't found one similar

### Superset version

`{ GIT_SHA: "e98a1c35378b97702b92396e12845bee1d4d5568", version: "0.21.0dev" }` on Mac

I'm using Superset to query a Hive database via the Presto connector. The Hive table has partitions in this format: `20171201` (`YYYYMMDD`). SQL Lab works fine. What should the **Datetime Format** and **Database Expression** be set to so that the table can be used in the Explore screen?

I've been using … Is there a better way?

When 'Include time' is used, the query fails with the error `Should not have nextUri if failed` because it uses `date_trunc`. The generated query is:

```sql
SELECT "cluster_type" AS "cluster_type",
       date_trunc('day', CAST(dt AS TIMESTAMP)) AS "__timestamp",
       COUNT(*) AS "count"
FROM "xxx"."xxx"
WHERE "dt" >= REPLACE(SUBSTR('2017-12-04 00:00:00',1,10),'-','')
  AND "dt" <= REPLACE(SUBSTR('2017-12-06 11:33:53',1,10),'-','')
GROUP BY "cluster_type", date_trunc('day', CAST(dt AS TIMESTAMP))
ORDER BY "count" DESC
LIMIT 50000
```

Stack trace:

```
2017-12-06 11:44:39,049:DEBUG:root:[stats_logger] (incr) explore_json
2017-12-06 11:44:39,125:DEBUG:root:[stats_logger] (incr) loaded_from_source
2017-12-06 11:44:39,131:INFO:root:Database.get_sqla_engine(). Masked URL: presto://xxxx/hivedb
2017-12-06 11:44:39,135:INFO:root:SELECT "cluster_type" AS "cluster_type", date_trunc('day', CAST(dt AS TIMESTAMP)) AS "__timestamp", COUNT(*) AS "count" FROM "xxx"."xxx" WHERE "dt" >= REPLACE(SUBSTR('2017-12-04 00:00:00',1,10),'-','') AND "dt" <= REPLACE(SUBSTR('2017-12-06 11:44:39',1,10),'-','') GROUP BY "cluster_type", date_trunc('day', CAST(dt AS TIMESTAMP)) ORDER BY "count" DESC LIMIT 50000
2017-12-06 11:44:39,154:INFO:root:Database.get_sqla_engine(). Masked URL: presto://xxxx/hivedb/xxx
2017-12-06 11:44:39,155:INFO:pyhive.presto:SHOW COLUMNS FROM "SELECT ""cluster_type"" AS ""cluster_type"", date_trunc('day', CAST(dt AS TIMESTAMP)) AS ""__timestamp"", COUNT(*) AS ""count"" FROM ""xxx"".""xxx"" WHERE ""dt"" >= REPLACE(SUBSTR('2017-12-04 00:00:00',1,10),'-','') AND ""dt"" <= REPLACE(SUBSTR('2017-12-06 11:44:39',1,10),'-','') GROUP BY ""cluster_type"", date_trunc('day', CAST(dt AS TIMESTAMP)) ORDER BY ""count"" DESC LIMIT 50000"
2017-12-06 11:44:39,157:DEBUG:urllib3.connectionpool:Starting new HTTP connection (1): xxx
2017-12-06 11:44:39,238:DEBUG:urllib3.connectionpool:http://xxx:4080 "POST /v1/statement HTTP/1.1" 200 504
2017-12-06 11:44:39,241:DEBUG:urllib3.connectionpool:Starting new HTTP connection (1): xxx
2017-12-06 11:44:39,403:DEBUG:urllib3.connectionpool:http://xxx:4080 "GET /v1/statement/20171206_194439_00242_tdqs3/1 HTTP/1.1" 200 917
2017-12-06 11:44:39,408:INFO:pyhive.presto:SELECT "cluster_type" AS "cluster_type", date_trunc('day', CAST(dt AS TIMESTAMP)) AS "__timestamp", COUNT(*) AS "count" FROM "xxxx"."xxxx" WHERE "dt" >= REPLACE(SUBSTR('2017-12-04 00:00:00',1,10),'-','') AND "dt" <= REPLACE(SUBSTR('2017-12-06 11:44:39',1,10),'-','') GROUP BY "cluster_type", date_trunc('day', CAST(dt AS TIMESTAMP)) ORDER BY "count" DESC LIMIT 50000
2017-12-06 11:44:39,410:DEBUG:urllib3.connectionpool:Starting new HTTP connection (1): xxx
2017-12-06 11:44:39,490:DEBUG:urllib3.connectionpool:http://xxx:4080 "POST /v1/statement HTTP/1.1" 200 504
2017-12-06 11:44:39,494:DEBUG:urllib3.connectionpool:Starting new HTTP connection (1): xxx
2017-12-06 11:44:40,015:DEBUG:urllib3.connectionpool:http://xxx:4080 "GET /v1/statement/20171206_194439_00243_tdqs3/1 HTTP/1.1" 200 1423
2017-12-06 11:44:40,016:ERROR:root:Should not have nextUri if failed
Traceback (most recent call last):
  File "/Users/kkalyan/try/incubator-superset/superset/connectors/sqla/models.py", line 568, in query
    df = self.database.get_df(sql, self.schema)
  File "/Users/kkalyan/try/incubator-superset/superset/models/core.py", line 673, in get_df
    df = pd.read_sql(sql, eng)
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/pandas/io/sql.py", line 416, in read_sql
    chunksize=chunksize)
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/pandas/io/sql.py", line 1087, in read_query
    result = self.execute(*args)
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/pandas/io/sql.py", line 978, in execute
    return self.connectable.execute(*args, **kwargs)
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 2064, in execute
    return connection.execute(statement, *multiparams, **params)
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 939, in execute
    return self._execute_text(object, multiparams, params)
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1097, in _execute_text
    statement, parameters
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1202, in _execute_context
    result = context._setup_crud_result_proxy()
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/default.py", line 901, in _setup_crud_result_proxy
    result = self.get_result_proxy()
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/default.py", line 877, in get_result_proxy
    return result.ResultProxy(self)
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/result.py", line 653, in __init__
    self._init_metadata()
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/result.py", line 672, in _init_metadata
    cursor_description = self._cursor_description()
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/sqlalchemy/engine/result.py", line 793, in _cursor_description
    return self._saved_cursor.description
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/pyhive/presto.py", line 161, in description
    lambda: self._columns is None and
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/pyhive/common.py", line 45, in _fetch_while
    self._fetch_more()
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/pyhive/presto.py", line 243, in _fetch_more
    self._process_response(self._requests_session.get(self._nextUri, **self._requests_kwargs))
  File "/Users/kkalyan/try/incubator-superset/env/lib/python2.7/site-packages/pyhive/presto.py", line 282, in _process_response
    assert not self._nextUri, "Should not have nextUri if failed"
AssertionError: Should not have nextUri if failed
2017-12-06 11:44:40,019:INFO:root:Caching for the next 86400 seconds
```

@mistercrunch
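To make the two date expressions in the generated query concrete, here is a small Python sketch that mirrors them outside Presto. The WHERE clause turns each bound timestamp into a `YYYYMMDD` string, which compares correctly against `dt` because fixed-width digit strings sort in date order. The time-grain expression instead casts `dt` to a timestamp. The sketch assumes, without confirmation from the log (the real Presto error is not shown), that `CAST(dt AS TIMESTAMP)` rejects a bare `YYYYMMDD` string the way the hypothetical `parse_dashed_only` helper does. If that holds, parsing with an explicit format (for example Presto's `date_parse(dt, '%Y%m%d')`) rather than a plain cast would be one direction to try. All helper names here are illustrative.

```python
from datetime import datetime


def database_expression(ts):
    """Python mirror of REPLACE(SUBSTR(ts, 1, 10), '-', '') from the WHERE clause.

    SQL SUBSTR is 1-based, so SUBSTR(ts, 1, 10) keeps the first ten
    characters ('YYYY-MM-DD'); REPLACE then drops the dashes.
    """
    return ts[:10].replace("-", "")


def parse_partition(dt):
    """Parse a YYYYMMDD partition value using an explicit format string."""
    return datetime.strptime(dt, "%Y%m%d")


def parse_dashed_only(dt):
    """Stand-in for a cast that only accepts 'YYYY-MM-DD...' input.

    This models an ASSUMED behaviour of CAST(varchar AS TIMESTAMP);
    it is not Presto itself.
    """
    return datetime.strptime(dt[:10], "%Y-%m-%d")


lower = database_expression("2017-12-04 00:00:00")
upper = database_expression("2017-12-06 11:33:53")
print(lower, upper)                  # 20171204 20171206
# Fixed-width YYYYMMDD strings sort in date order, so the string filter works.
print(lower <= "20171205" <= upper)  # True
print(parse_partition("20171201"))   # 2017-12-01 00:00:00

try:
    parse_dashed_only("20171201")
except ValueError as exc:
    print("cast-style parse failed:", exc)
```

The split matches what the query shows: the string comparison in the filter needs no date parsing at all, while anything that groups by time needs a real timestamp built from `dt`.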

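The message `Should not have nextUri if failed` comes from an assertion in PyHive's `_process_response` (last frame of the traceback), so it appears to hide whatever error Presto actually returned for the query. The log shows the client protocol involved: a `POST /v1/statement`, then `GET`s of each returned `nextUri`. Below is a simplified, hypothetical model of that loop, not PyHive's code: it performs no HTTP, and `run_statement`, `PrestoQueryError`, and the fake pages are illustrative. It raises the server's `error.message` instead of asserting, which is the detail one would want when diagnosing the failing `date_trunc` query.

```python
class PrestoQueryError(Exception):
    """Raised when a Presto-style response page carries an 'error' object."""


def run_statement(first_page, get_page):
    """Follow nextUri links and collect rows from Presto-style JSON pages.

    first_page: body returned by POST /v1/statement (a dict).
    get_page:   callable taking a nextUri and returning the next body (a dict);
                a real client would issue an HTTP GET here.
    """
    rows = []
    page = first_page
    while True:
        error = page.get("error")
        if error is not None:
            # Surface the server-side message directly rather than
            # asserting on the presence of nextUri.
            raise PrestoQueryError(error.get("message", "unknown Presto error"))
        rows.extend(page.get("data", []))
        next_uri = page.get("nextUri")
        if next_uri is None:
            return rows
        page = get_page(next_uri)


# Fake pages keyed by nextUri, standing in for HTTP responses.
pages = {
    "/v1/statement/q1/1": {"nextUri": "/v1/statement/q1/2", "data": [["a", 3]]},
    "/v1/statement/q1/2": {"data": [["b", 5]]},
}
print(run_statement({"nextUri": "/v1/statement/q1/1"}, pages.__getitem__))
# [['a', 3], ['b', 5]]

failing = {
    "/v1/statement/q2/1": {
        "nextUri": "/v1/statement/q2/2",
        "error": {"message": "example error text"},
    },
}
try:
    run_statement({"nextUri": "/v1/statement/q2/1"}, failing.__getitem__)
except PrestoQueryError as exc:
    print("Presto reported:", exc)
```

Checking for `error` before looking at `nextUri` is the design choice that matters: a page can carry both, as the failing fake page does, and the error text is the useful part.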